
Parameter Estimation Using Least Squares in Nonlinear Regression

Linear Regression (Least Squares)

The method of least squares fits a straight line to a set of data points. If the regression is on [math]\displaystyle{ Y }[/math], the line is chosen so that the sum of the squares of the vertical deviations from the points to the line is minimized. If the regression is on [math]\displaystyle{ X }[/math], the line is chosen so that the sum of the squares of the horizontal deviations from the points to the line is minimized. To illustrate the method, this section presents a regression on [math]\displaystyle{ Y }[/math]. Consider the linear model [2]:

- [math]\displaystyle{ {{Y}_{i}}={{\beta }_{0}}+{{\beta }_{1}}{{X}_{i1}}+{{\beta }_{2}}{{X}_{i2}}+...+{{\beta }_{p}}{{X}_{ip}} }[/math]

or, in matrix form:

- [math]\displaystyle{ Y=X\beta }[/math]

- where:

- [math]\displaystyle{ Y=\left[ \begin{matrix} {{Y}_{1}} \\ {{Y}_{2}} \\ \vdots \\ {{Y}_{N}} \\ \end{matrix} \right] }[/math]

- [math]\displaystyle{ X=\left[ \begin{matrix} 1 & {{X}_{1,1}} & \cdots & {{X}_{1,p}} \\ 1 & {{X}_{2,1}} & \cdots & {{X}_{2,p}} \\ \vdots & \vdots & \ddots & \vdots \\ 1 & {{X}_{N,1}} & \cdots & {{X}_{N,p}} \\ \end{matrix} \right] }[/math]

- and:

- [math]\displaystyle{ \beta =\left[ \begin{matrix} {{\beta }_{0}} \\ {{\beta }_{1}} \\ \vdots \\ {{\beta }_{p}} \\ \end{matrix} \right] }[/math]

The vector [math]\displaystyle{ \beta }[/math] holds the values of the parameters. Now let [math]\displaystyle{ \widehat{\beta } }[/math] be the estimates of these parameters, or the regression coefficients. The vector of estimated regression coefficients is denoted by:

- [math]\displaystyle{ \widehat{\beta }=\left[ \begin{matrix} {{\widehat{\beta }}_{0}} \\ {{\widehat{\beta }}_{1}} \\ \vdots \\ {{\widehat{\beta }}_{p}} \\ \end{matrix} \right] }[/math]

Solving for [math]\displaystyle{ \widehat{\beta } }[/math] in the matrix model [math]\displaystyle{ Y=X\beta }[/math] requires the analyst to left-multiply both sides by the transpose of [math]\displaystyle{ X }[/math], [math]\displaystyle{ {{X}^{T}} }[/math]:

- [math]\displaystyle{ ({{X}^{T}}X)\widehat{\beta }={{X}^{T}}Y }[/math]

The matrix [math]\displaystyle{ ({{X}^{T}}X) }[/math] is square and, provided [math]\displaystyle{ X }[/math] has full column rank, invertible. Multiplying both sides by its inverse gives:

- [math]\displaystyle{ \widehat{\beta }={{({{X}^{T}}X)}^{-1}}{{X}^{T}}Y }[/math]
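The least squares solution above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation, and the function name `ols_normal_equations` is hypothetical. It builds the design matrix [math]\displaystyle{ X }[/math] with a leading column of ones and solves [math]\displaystyle{ ({{X}^{T}}X)\widehat{\beta }={{X}^{T}}Y }[/math] with `np.linalg.solve`. When [math]\displaystyle{ {{X}^{T}}X }[/math] is invertible this gives the same result as forming the inverse explicitly, and it is numerically better behaved.

```python
import numpy as np

def ols_normal_equations(X_raw, y):
    """Return beta_hat = (X^T X)^-1 X^T Y for the model Y = X beta.

    X_raw: (N,) or (N, p) regressor values, without the leading column of ones.
    y:     (N,) responses.
    """
    X_raw = np.asarray(X_raw, dtype=float)
    if X_raw.ndim == 1:
        X_raw = X_raw[:, np.newaxis]                 # single regressor -> one column
    y = np.asarray(y, dtype=float)
    X = np.column_stack([np.ones(len(y)), X_raw])    # first column multiplies beta_0
    # Solve (X^T X) beta_hat = X^T Y instead of forming the inverse explicitly
    return np.linalg.solve(X.T @ X, X.T @ y)

# Usage: points lying exactly on Y = 2 + 3 X
x = np.array([0.0, 1.0, 2.0, 3.0])
beta_hat = ols_normal_equations(x, 2.0 + 3.0 * x)
print(beta_hat)   # close to [2, 3]
```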

Nonlinear Regression

Nonlinear regression is similar to linear regression, except that a curve, rather than a straight line, is fitted to the data set. Just as in the linear case (regression on [math]\displaystyle{ Y }[/math]), the sum of the squares of the vertical deviations between the curve and the data points is minimized. In the case of the nonlinear Gompertz model [math]\displaystyle{ R=a{{b}^{{{c}^{T}}}} }[/math], let:

- [math]\displaystyle{ {{Y}_{i}}=f({{T}_{i}},\delta )=a{{b}^{{{c}^{{{T}_{i}}}}}} }[/math]

- where:

- [math]\displaystyle{ T=\left[ \begin{matrix} {{T}_{1}} \\ {{T}_{2}} \\ \vdots \\ {{T}_{N}} \\ \end{matrix} \right],\quad i=1,2,...,N }[/math]

- and:

- [math]\displaystyle{ \delta =\left[ \begin{matrix} a \\ b \\ c \\ \end{matrix} \right] }[/math]

The Gauss-Newton method can be used to solve for the parameters [math]\displaystyle{ a }[/math], [math]\displaystyle{ b }[/math] and [math]\displaystyle{ c }[/math]. It expands [math]\displaystyle{ f({{T}_{i}},\delta ) }[/math] in a first-order Taylor series, approximates the nonlinear model with the resulting linear terms, and then uses ordinary least squares to estimate the parameters. The procedure is repeated iteratively and generally converges to a solution of the nonlinear problem.

This procedure starts with initial estimates of the parameters [math]\displaystyle{ a }[/math], [math]\displaystyle{ b }[/math] and [math]\displaystyle{ c }[/math], denoted [math]\displaystyle{ g_{1}^{(0)} }[/math], [math]\displaystyle{ g_{2}^{(0)} }[/math] and [math]\displaystyle{ g_{3}^{(0)} }[/math], where the superscript [math]\displaystyle{ ^{(0)} }[/math] denotes the iteration number. The Taylor series expansion approximates the mean response, [math]\displaystyle{ f({{T}_{i}},\delta ) }[/math], around these starting values. For the [math]\displaystyle{ {{i}^{th}} }[/math] observation:

- [math]\displaystyle{ f({{T}_{i}},\delta )\simeq f({{T}_{i}},{{g}^{(0)}})+\underset{k=1}{\overset{p}{\mathop \sum }}\,{{\left[ \frac{\partial f({{T}_{i}},\delta )}{\partial {{\delta }_{k}}} \right]}_{\delta ={{g}^{(0)}}}}({{\delta }_{k}}-g_{k}^{(0)}) }[/math]

- where:

- [math]\displaystyle{ {{g}^{(0)}}=\left[ \begin{matrix} g_{1}^{(0)} \\ g_{2}^{(0)} \\ g_{3}^{(0)} \\ \end{matrix} \right] }[/math]

- Let:

- [math]\displaystyle{ \begin{align} & f_{i}^{(0)}= & f({{T}_{i}},{{g}^{(0)}}) \\ & \nu _{k}^{(0)}= & ({{\delta }_{k}}-g_{k}^{(0)}) \\ & D_{ik}^{(0)}= & {{\left[ \frac{\partial f({{T}_{i}},\delta )}{\partial {{\delta }_{k}}} \right]}_{\delta ={{g}^{(0)}}}} \end{align} }[/math]

Since [math]\displaystyle{ {{Y}_{i}}=f({{T}_{i}},\delta ) }[/math], the Taylor series expansion above becomes:

- [math]\displaystyle{ {{Y}_{i}}\simeq f_{i}^{(0)}+\underset{k=1}{\overset{p}{\mathop \sum }}\,D_{ik}^{(0)}\nu _{k}^{(0)} }[/math]

or, moving [math]\displaystyle{ f_{i}^{(0)} }[/math] to the left-hand side and writing [math]\displaystyle{ Y_{i}^{(0)}={{Y}_{i}}-f_{i}^{(0)} }[/math]:

- [math]\displaystyle{ Y_{i}^{(0)}\simeq \underset{k=1}{\overset{p}{\mathop \sum }}\,D_{ik}^{(0)}\nu _{k}^{(0)} }[/math]

In matrix form this is given by:

- [math]\displaystyle{ {{Y}^{(0)}}\simeq {{D}^{(0)}}{{\nu }^{(0)}} }[/math]

- where:

- [math]\displaystyle{ {{Y}^{(0)}}=\left[ \begin{matrix} {{Y}_{1}}-f_{1}^{(0)} \\ {{Y}_{2}}-f_{2}^{(0)} \\ \vdots \\ {{Y}_{N}}-f_{N}^{(0)} \\ \end{matrix} \right]=\left[ \begin{matrix} {{Y}_{1}}-g_{1}^{(0)}\left( g_{2}^{(0)} \right)^{\left( g_{3}^{(0)} \right)^{T_{1}}} \\ {{Y}_{2}}-g_{1}^{(0)}\left( g_{2}^{(0)} \right)^{\left( g_{3}^{(0)} \right)^{T_{2}}} \\ \vdots \\ {{Y}_{N}}-g_{1}^{(0)}\left( g_{2}^{(0)} \right)^{\left( g_{3}^{(0)} \right)^{T_{N}}} \\ \end{matrix} \right] }[/math]

- and:

- [math]\displaystyle{ {{\nu }^{(0)}}=\left[ \begin{matrix} a-g_{1}^{(0)} \\ b-g_{2}^{(0)} \\ c-g_{3}^{(0)} \\ \end{matrix} \right] }[/math]

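Note that this matrix equation has the same form as the general linear regression model [math]\displaystyle{ Y=X\beta }[/math]. By analogy with the least squares solution [math]\displaystyle{ \widehat{\beta }={{({{X}^{T}}X)}^{-1}}{{X}^{T}}Y }[/math], the estimate of the parameters [math]\displaystyle{ {{\nu }^{(0)}} }[/math] is given by: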

- [math]\displaystyle{ {{\widehat{\nu }}^{(0)}}={{\left( {{D}^{{{(0)}^{T}}}}{{D}^{(0)}} \right)}^{-1}}{{D}^{{{(0)}^{T}}}}{{Y}^{(0)}} }[/math]

The revised estimated regression coefficients in matrix form are:

- [math]\displaystyle{ {{g}^{(1)}}={{g}^{(0)}}+{{\widehat{\nu }}^{(0)}} }[/math]

The least squares criterion measure, [math]\displaystyle{ Q }[/math], should be checked to confirm that the revised regression coefficients lead to a reasonable result. According to the Least Squares Principle, the parameter estimates are the values that minimize [math]\displaystyle{ Q }[/math]. With the starting coefficients, [math]\displaystyle{ {{g}^{(0)}} }[/math], [math]\displaystyle{ Q }[/math] is:

- [math]\displaystyle{ {{Q}^{(0)}}=\underset{i=1}{\overset{N}{\mathop \sum }}\,{{\left[ {{Y}_{i}}-f\left( {{T}_{i}},{{g}^{(0)}} \right) \right]}^{2}} }[/math]

And with the coefficients at the end of the first iteration, [math]\displaystyle{ {{g}^{(1)}} }[/math] , [math]\displaystyle{ Q }[/math] is:

- [math]\displaystyle{ {{Q}^{(1)}}=\underset{i=1}{\overset{N}{\mathop \sum }}\,{{\left[ {{Y}_{i}}-f\left( {{T}_{i}},{{g}^{(1)}} \right) \right]}^{2}} }[/math]

For the Gauss-Newton method to work properly and to satisfy the Least Squares Principle, the relationship [math]\displaystyle{ {{Q}^{(k+1)}}\lt {{Q}^{(k)}} }[/math] has to hold for all [math]\displaystyle{ k }[/math]. This means that [math]\displaystyle{ {{g}^{(k+1)}} }[/math] gives a better estimate than [math]\displaystyle{ {{g}^{(k)}} }[/math]. A single iteration does not complete the solution. Instead, [math]\displaystyle{ {{g}^{(1)}} }[/math] serves as the starting values for the next iteration, which produces a new set of values, [math]\displaystyle{ {{g}^{(2)}} }[/math], and so on. The process is continued until the following relationship is satisfied:

- [math]\displaystyle{ {{Q}^{(s-1)}}-{{Q}^{(s)}}\simeq 0 }[/math]

When using the Gauss-Newton method or some other estimation procedure, it is advisable to try several sets of starting values to make sure that the solution gives relatively consistent results.
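The iteration described above can be sketched in NumPy as follows. This is a minimal sketch for illustration; the helper names `gompertz`, `gompertz_jacobian` and `gauss_newton` are hypothetical. The partial derivatives in the Jacobian are worked out from [math]\displaystyle{ f=a{{b}^{{{c}^{T}}}} \,}[/math]. The sketch makes two choices the text does not prescribe:

- It solves each linearized subproblem with `np.linalg.lstsq` instead of explicitly inverting [math]\displaystyle{ {{D}^{T}}D }[/math]. This gives the same least-squares step.
- It has no step-halving safeguard, so it does not enforce [math]\displaystyle{ {{Q}^{(k+1)}}\lt {{Q}^{(k)}} }[/math].

```python
import numpy as np

def gompertz(T, a, b, c):
    """Gompertz mean response f(T, delta) = a * b**(c**T)."""
    return a * b ** (c ** T)

def gompertz_jacobian(T, a, b, c):
    """D[i, k] = partial derivative of f(T_i, delta) w.r.t. delta_k = (a, b, c)."""
    cT = c ** T
    bcT = b ** cT
    return np.column_stack([
        bcT,                                        # df/da
        a * cT * b ** (cT - 1.0),                   # df/db
        a * bcT * np.log(b) * T * c ** (T - 1.0),   # df/dc (zero at T = 0)
    ])

def gauss_newton(T, Y, g0, tol=1e-12, max_iter=100):
    """Iterate g^(k+1) = g^(k) + nu_hat^(k) until Q^(s-1) - Q^(s) ~ 0."""
    T = np.asarray(T, dtype=float)
    Y = np.asarray(Y, dtype=float)
    g = np.asarray(g0, dtype=float)
    Q_hist = [np.sum((Y - gompertz(T, *g)) ** 2)]       # Q^(0)
    for _ in range(max_iter):
        Y_k = Y - gompertz(T, *g)                       # Y^(k)
        D_k = gompertz_jacobian(T, *g)                  # D^(k)
        # Least-squares solution of D^(k) nu = Y^(k), i.e. (D^T D)^-1 D^T Y^(k)
        nu_hat, *_ = np.linalg.lstsq(D_k, Y_k, rcond=None)
        g = g + nu_hat                                  # revised coefficients
        Q_hist.append(np.sum((Y - gompertz(T, *g)) ** 2))
        if abs(Q_hist[-2] - Q_hist[-1]) < tol:          # stopping criterion
            break
    return g, Q_hist

# Usage on noise-free synthetic data (hypothetical a = 0.9, b = 0.6, c = 0.75)
T = np.arange(6, dtype=float)
Y = gompertz(T, 0.9, 0.6, 0.75)
g_hat, Q_hist = gauss_newton(T, Y, g0=[0.88, 0.58, 0.73])
print(g_hat, len(Q_hist) - 1, "iterations")
```

Following the advice above, calling `gauss_newton` from a few different `g0` values and comparing the results is a cheap consistency check.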

Choice of Initial Values

The choice of starting values is not an easy task. A poor choice may result in a lengthy computation with many iterations. It may also lead to divergence or to convergence to a local minimum. Good initial values, on the other hand, result in fast computations with few iterations. If multiple minima exist, they also make it more likely that the solution reached is the global minimum.

Various methods have been developed for obtaining initial values for the regression parameters. The following simple procedure, described by Virene [1] for estimating the Gompertz parameters, is used here to obtain the starting values for the Gauss-Newton method or for any other method that requires initial values. Some analysts use this method to calculate the parameters directly when the data set divides into three groups of equal size. When the data set does not divide equally, the method can still provide good initial estimates.

Consider the case where [math]\displaystyle{ m }[/math] observations are available in the form shown next. Each reliability value, [math]\displaystyle{ {{R}_{i}} }[/math] , is measured at the specified times, [math]\displaystyle{ {{T}_{i}} }[/math] .

- [math]\displaystyle{ \begin{matrix} {{T}_{i}} & {{R}_{i}} \\ {{T}_{0}} & {{R}_{0}} \\ {{T}_{1}} & {{R}_{1}} \\ {{T}_{2}} & {{R}_{2}} \\ \vdots & \vdots \\ {{T}_{m-1}} & {{R}_{m-1}} \\ \end{matrix} }[/math]

- where:

- [math]\displaystyle{ m=3n }[/math], where [math]\displaystyle{ n }[/math] is the number of items in each of the three equally sized groups

- [math]\displaystyle{ {{T}_{i}}-{{T}_{i-1}}=I }[/math], a constant time interval

- [math]\displaystyle{ i=0,1,...,m-1 }[/math]

The Gompertz reliability equation is given by:

- [math]\displaystyle{ R=a{{b}^{{{c}^{T}}}} }[/math]

- Taking the natural logarithm of both sides:

- [math]\displaystyle{ \ln (R)=\ln (a)+{{c}^{T}}\ln (b) }[/math]

- Define:

- [math]\displaystyle{ S_1=\sum_{i=0}^{n-1} \ln(R_i)= n\ln(a)+\ln(b)\sum_{i=0}^{n-1} c^{T_i} }[/math]

- [math]\displaystyle{ S_2=\sum_{i=n}^{2n-1} \ln(R_i)= n\ln(a)+\ln(b)\sum_{i=n}^{2n-1} c^{T_i} }[/math]

- [math]\displaystyle{ S_3=\sum_{i=2n}^{m-1} \ln(R_i)= n\ln(a)+\ln(b)\sum_{i=2n}^{m-1} c^{T_i} }[/math]

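- Then:

- [math]\displaystyle{ \frac{S_3-S_2}{S_2-S_1}=\frac{\sum_{i=2n}^{m-1} c^{T_i}-\sum_{i=n}^{2n-1} c^{T_i}}{\sum_{i=n}^{2n-1} c^{T_i}-\sum_{i=0}^{n-1} c^{T_i}} }[/math]

Because the times are equally spaced with interval [math]\displaystyle{ I }[/math], [math]\displaystyle{ {{T}_{i+n}}={{T}_{i}}+n\cdot I }[/math], so [math]\displaystyle{ {{c}^{{{T}_{i+n}}}}={{c}^{n\cdot I}}{{c}^{{{T}_{i}}}} }[/math]. Each group sum is therefore a multiple of the first:

- [math]\displaystyle{ \sum_{i=n}^{2n-1} c^{T_i}=c^{n\cdot I}\sum_{i=0}^{n-1} c^{T_i},\qquad \sum_{i=2n}^{m-1} c^{T_i}=c^{2n\cdot I}\sum_{i=0}^{n-1} c^{T_i} }[/math]

Substituting these sums into the ratio gives:

- [math]\displaystyle{ \frac{S_3-S_2}{S_2-S_1}=\frac{c^{2n\cdot I}-c^{n\cdot I}}{c^{n\cdot I}-1}=c^{n\cdot I} }[/math]

This result does not depend on [math]\displaystyle{ {{T}_{0}} }[/math]. Without loss of generality, take [math]\displaystyle{ {{T}_{0}}=0 }[/math] (so that [math]\displaystyle{ {{T}_{i}}=i\cdot I }[/math]), which simplifies the expression for [math]\displaystyle{ b }[/math] derived below.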
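The key identity [math]\displaystyle{ ({{S}_{3}}-{{S}_{2}})/({{S}_{2}}-{{S}_{1}})={{c}^{n\cdot I}} }[/math] can be checked numerically on noise-free Gompertz data. This is a small sketch; the function `group_ratio` and the parameter values are hypothetical. It evaluates the ratio for several starting times [math]\displaystyle{ {{T}_{0}} }[/math] and shows that the ratio stays equal to [math]\displaystyle{ {{c}^{n\cdot I}} }[/math] regardless of [math]\displaystyle{ {{T}_{0}} }[/math].

```python
import numpy as np

def group_ratio(a, b, c, n, I, T0):
    """(S3 - S2) / (S2 - S1) for noise-free Gompertz data at T_i = T0 + i*I."""
    T = T0 + I * np.arange(3 * n)
    lnR = np.log(a * b ** (c ** T))
    S1, S2, S3 = lnR[:n].sum(), lnR[n:2 * n].sum(), lnR[2 * n:].sum()
    return (S3 - S2) / (S2 - S1)

# Hypothetical parameters; the ratio should equal c**(n*I) for every T0
a, b, c, n, I = 0.95, 0.5, 0.8, 3, 2.0
for T0 in (0.0, 1.5, 4.0):
    print(T0, group_ratio(a, b, c, n, I, T0), c ** (n * I))
```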

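Solving for [math]\displaystyle{ c }[/math] yields:

- [math]\displaystyle{ c={{\left( \frac{{{S}_{3}}-{{S}_{2}}}{{{S}_{2}}-{{S}_{1}}} \right)}^{\tfrac{1}{n\cdot I}}} }[/math]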

Considering the expressions for [math]\displaystyle{ {{S}_{1}} }[/math] and [math]\displaystyle{ {{S}_{2}} }[/math] above, then:

- [math]\displaystyle{ \begin{align} & {{S}_{1}}-n\cdot \ln (a)= & \ln (b)\underset{i=0}{\overset{n-1}{\mathop \sum }}\,{{c}^{{{T}_{i}}}} \\ & {{S}_{2}}-n\cdot \ln (a)= & \ln (b)\underset{i=n}{\overset{2n-1}{\mathop \sum }}\,{{c}^{{{T}_{i}}}} \end{align} }[/math]

- or:

- [math]\displaystyle{ \frac{{{S}_{1}}-n\cdot \ln (a)}{{{S}_{2}}-n\cdot \ln (a)}=\frac{1}{{{c}^{n\cdot I}}} }[/math]

Reordering the equation yields:

- [math]\displaystyle{ \begin{align} & \ln (a)= & \frac{1}{n}\left( {{S}_{1}}+\frac{{{S}_{2}}-{{S}_{1}}}{1-{{c}^{n\cdot I}}} \right) \\ & a= & {{e}^{\left[ \tfrac{1}{n}\left( {{S}_{1}}+\tfrac{{{S}_{2}}-{{S}_{1}}}{1-{{c}^{n\cdot I}}} \right) \right]}} \end{align} }[/math]

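If the reliability values are in percent, then [math]\displaystyle{ a }[/math] needs to be divided by [math]\displaystyle{ 100 }[/math] to return the estimate in decimal format. Consider the expressions for [math]\displaystyle{ {{S}_{1}} }[/math] and [math]\displaystyle{ {{S}_{2}} }[/math] again. Take [math]\displaystyle{ {{T}_{0}}=0 }[/math] (so that [math]\displaystyle{ {{T}_{i}}=i\cdot I }[/math]) and use the geometric sum [math]\displaystyle{ \sum_{i=0}^{n-1} c^{i\cdot I}=\frac{1-c^{n\cdot I}}{1-c^{I}} }[/math]. This gives:

- [math]\displaystyle{ {{S}_{2}}-{{S}_{1}}=\ln (b)\left( {{c}^{n\cdot I}}-1 \right)\sum_{i=0}^{n-1} c^{i\cdot I}=\ln (b)\frac{{{\left( 1-{{c}^{n\cdot I}} \right)}^{2}}}{{{c}^{I}}-1} }[/math]

Solving for [math]\displaystyle{ \ln (b) }[/math] yields: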

- [math]\displaystyle{ \begin{align} & \ln (b)= & \frac{({{S}_{2}}-{{S}_{1}})({{c}^{I}}-1)}{{{(1-{{c}^{n\cdot I}})}^{2}}} \\ & b= & {{e}^{\left[ \tfrac{\left( {{S}_{2}}-{{S}_{1}} \right)\left( {{c}^{I}}-1 \right)}{{{\left( 1-{{c}^{n\cdot I}} \right)}^{2}}} \right]}} \end{align} }[/math]

For the special case where [math]\displaystyle{ I=1 }[/math], the expressions for [math]\displaystyle{ c }[/math], [math]\displaystyle{ a }[/math] and [math]\displaystyle{ b }[/math] derived above reduce to:

- [math]\displaystyle{ \begin{align} & c= & {{\left( \frac{{{S}_{3}}-{{S}_{2}}}{{{S}_{2}}-{{S}_{1}}} \right)}^{\tfrac{1}{n}}} \\ & a= & {{e}^{\left[ \tfrac{1}{n}\left( {{S}_{1}}+\tfrac{{{S}_{2}}-{{S}_{1}}}{1-{{c}^{n}}} \right) \right]}} \\ & b= & {{e}^{\left[ \tfrac{({{S}_{2}}-{{S}_{1}})(c-1)}{{{\left( 1-{{c}^{n}} \right)}^{2}}} \right]}} \end{align} }[/math]

To estimate the values of the parameters [math]\displaystyle{ a,b }[/math] and [math]\displaystyle{ c }[/math] , do the following:

- 1) Arrange the currently available data in terms of [math]\displaystyle{ T }[/math] and [math]\displaystyle{ R }[/math] as in Table 7.1. The [math]\displaystyle{ T }[/math] values should be chosen at equal intervals and increasing in value by 1, such as one month, one hour, etc.

- 2) Calculate the natural logarithm of each reliability value, [math]\displaystyle{ \ln R }[/math].

- 3) Divide the column of [math]\displaystyle{ \ln R }[/math] values into exactly three groups of equal size. Each group must contain the same number, [math]\displaystyle{ n }[/math], of items, measurements or values.

- 4) Add the [math]\displaystyle{ \ln R }[/math] values in each group to obtain the sums [math]\displaystyle{ {{S}_{1}} }[/math], [math]\displaystyle{ {{S}_{2}} }[/math] and [math]\displaystyle{ {{S}_{3}} }[/math], starting with the group containing the lowest values of [math]\displaystyle{ \ln R }[/math].

- 5) Calculate [math]\displaystyle{ c }[/math]:

- [math]\displaystyle{ c={{\left( \frac{{{S}_{3}}-{{S}_{2}}}{{{S}_{2}}-{{S}_{1}}} \right)}^{\tfrac{1}{n}}} }[/math]

- 6) Calculate [math]\displaystyle{ a }[/math]:

- [math]\displaystyle{ a={{e}^{\left[ \tfrac{1}{n}\left( {{S}_{1}}+\tfrac{{{S}_{2}}-{{S}_{1}}}{1-{{c}^{n}}} \right) \right]}} }[/math]

- 7) Calculate [math]\displaystyle{ b }[/math]:

- [math]\displaystyle{ b={{e}^{\left[ \tfrac{({{S}_{2}}-{{S}_{1}})(c-1)}{{{\left( 1-{{c}^{n}} \right)}^{2}}} \right]}} }[/math]

- 8) Write the Gompertz reliability growth equation, [math]\displaystyle{ R=a{{b}^{{{c}^{T}}}} }[/math], using the estimated parameters.

- 9) Substitute the value of [math]\displaystyle{ T }[/math], the time at which the reliability goal is to be achieved, to check whether the reliability goal will indeed be attained or exceeded by [math]\displaystyle{ T }[/math].
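Steps 2 through 7 can be sketched as a single function. This is a sketch under stated assumptions, and the name `virene_estimates` is hypothetical. It assumes [math]\displaystyle{ m=3n }[/math] observations at [math]\displaystyle{ {{T}_{i}}=i\cdot I }[/math] (so [math]\displaystyle{ {{T}_{0}}=0 }[/math]), and it uses the general-interval formulas (with [math]\displaystyle{ I=1 }[/math] they reduce to Steps 5 to 7). It also requires the ratio [math]\displaystyle{ ({{S}_{3}}-{{S}_{2}})/({{S}_{2}}-{{S}_{1}}) }[/math] to be positive. Applied to the Table 7.1 data with unrounded logarithms, it gives values slightly different from the worked solution below, which uses sums rounded to three decimals.

```python
import numpy as np

def virene_estimates(R, I=1.0):
    """Starting values (a, b, c) for R = a * b**(c**T) from m = 3n points.

    R: reliabilities R_0..R_{m-1} at equally spaced times T_i = i * I (T_0 = 0).
    If R is in percent, the returned a is in percent too (divide by 100).
    """
    lnR = np.log(np.asarray(R, dtype=float))      # Step 2
    n = len(lnR) // 3                             # Step 3: three equal groups
    if 3 * n != len(lnR):
        raise ValueError("this sketch needs m = 3n observations")
    S1 = lnR[:n].sum()                            # Step 4: group sums
    S2 = lnR[n:2 * n].sum()
    S3 = lnR[2 * n:].sum()
    cnI = (S3 - S2) / (S2 - S1)                   # equals c**(n*I)
    c = cnI ** (1.0 / (n * I))                                   # Step 5
    a = np.exp((S1 + (S2 - S1) / (1.0 - cnI)) / n)               # Step 6
    b = np.exp((S2 - S1) * (c ** I - 1.0) / (1.0 - cnI) ** 2)    # Step 7
    return a, b, c

# Table 7.1: T = 0..5 months (I = 1), R in percent
a0, b0, c0 = virene_estimates([58, 66, 72.5, 78, 82, 85])
print(a0, b0, c0)   # about 94.19, 0.6155, 0.7320
```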

| Group Number | Growth Time [math]\displaystyle{ T }[/math] (months) | Reliability [math]\displaystyle{ R }[/math] (%) | [math]\displaystyle{ \ln{R} }[/math] |
|---|---|---|---|
| 1 | 0 | 58 | 4.060 |
| 1 | 1 | 66 | 4.190 |
| | | | [math]\displaystyle{ {{S}_{1}} }[/math] = 8.250 |
| 2 | 2 | 72.5 | 4.284 |
| 2 | 3 | 78 | 4.357 |
| | | | [math]\displaystyle{ {{S}_{2}} }[/math] = 8.641 |
| 3 | 4 | 82 | 4.407 |
| 3 | 5 | 85 | 4.443 |
| | | | [math]\displaystyle{ {{S}_{3}} }[/math] = 8.850 |

Confidence Bounds for the Gompertz Model

Approximate reliability confidence bounds under the Gompertz model can be obtained with nonlinear regression. Because reliability always lies between [math]\displaystyle{ 0 }[/math] and [math]\displaystyle{ 1 }[/math], the logit transformation is used so that the endpoints of the confidence interval also stay within this range:

- [math]\displaystyle{ CB=\frac{{{{\hat{R}}}_{i}}}{{{{\hat{R}}}_{i}}+(1-{{{\hat{R}}}_{i}}){{e}^{\pm {{z}_{\alpha }}{{{\hat{\sigma }}}_{R}}/\left[ {{{\hat{R}}}_{i}}(1-{{{\hat{R}}}_{i}}) \right]}}} }[/math]

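- [math]\displaystyle{ {{\hat{\sigma }}^{2}}=\frac{SSE}{N-p} }[/math]

where [math]\displaystyle{ SSE }[/math] is the sum of squared errors of the fit, [math]\displaystyle{ N }[/math] is the total number of observations and [math]\displaystyle{ p }[/math] is the number of estimated parameters (in this case 3: [math]\displaystyle{ a }[/math], [math]\displaystyle{ b }[/math] and [math]\displaystyle{ c }[/math]).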
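The two formulas above can be evaluated with a short sketch. The function names are hypothetical, and [math]\displaystyle{ {{\hat{\sigma }}_{R}} }[/math] (the standard error of [math]\displaystyle{ {{\hat{R}}_{i}} }[/math]) is taken as a given input, since this section does not show how it is derived from [math]\displaystyle{ {{\hat{\sigma }}^{2}} }[/math]. The numbers in the usage line are illustrative only; [math]\displaystyle{ z=1.645 }[/math] is the standard normal value for a one-sided 95% bound.

```python
import math

def sigma2_hat(residuals, p=3):
    """Estimated error variance SSE / (N - p); p = 3 Gompertz parameters."""
    N = len(residuals)
    if N <= p:
        raise ValueError("need more observations than parameters")
    sse = sum(r * r for r in residuals)
    return sse / (N - p)

def logit_bounds(R_hat, sigma_R, z):
    """Confidence bounds on a reliability 0 < R_hat < 1 via the logit transform."""
    w = math.exp(z * sigma_R / (R_hat * (1.0 - R_hat)))
    lower = R_hat / (R_hat + (1.0 - R_hat) * w)   # "+" sign in the exponent
    upper = R_hat / (R_hat + (1.0 - R_hat) / w)   # "-" sign in the exponent
    return lower, upper

# Hypothetical inputs: R_hat = 0.93, sigma_R = 0.02, z = 1.645
lo, up = logit_bounds(0.93, 0.02, 1.645)
print(lo, up)
```

Both bounds stay strictly inside (0, 1) for any finite inputs that do not overflow. This is the point of the logit transformation, in contrast to a symmetric interval [math]\displaystyle{ {{\hat{R}}_{i}}\pm {{z}_{\alpha }}{{\hat{\sigma }}_{R}} }[/math].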

Example 1

A device is required to have a reliability of [math]\displaystyle{ 92\% }[/math] at the end of a 12-month design and development period. Table 7.1 gives the data obtained for the first five months.

- 1) What will the reliability be at the end of this 12-month period?

- 2) What will the maximum achievable reliability be if the reliability program plan pursued during the first 5 months is continued?

- 3) How do the predicted reliability values compare with the actual values?

Solution

Having completed Steps 1 through 4 by preparing Table 7.1 and calculating the last column of the table to find [math]\displaystyle{ {{S}_{1}} }[/math] , [math]\displaystyle{ {{S}_{2}} }[/math] and [math]\displaystyle{ {{S}_{3}} }[/math] , proceed as follows:

- a) Find [math]\displaystyle{ c }[/math] using the equation in Step 5.

- [math]\displaystyle{ \begin{align} & c= & {{\left( \frac{8.850-8.641}{8.641-8.250} \right)}^{\tfrac{1}{2}}} \\ & = & 0.731 \end{align} }[/math]

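- b) Find [math]\displaystyle{ a }[/math] using the equation in Step 6 (carrying the unrounded value of [math]\displaystyle{ c }[/math]).

- [math]\displaystyle{ a={{e}^{\left[ \tfrac{1}{2}\left( 8.250+\tfrac{8.641-8.250}{1-{{c}^{2}}} \right) \right]}}\simeq 94.16 }[/math]

- This is the upper limit for the reliability (in percent) as [math]\displaystyle{ T\to \infty }[/math].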

- c) Find [math]\displaystyle{ b }[/math] using the equation in Step 7.

- [math]\displaystyle{ \begin{align} & b= & {{e}^{\left[ \tfrac{(8.641-8.250)(0.731-1)}{{{(1-{{0.731}^{2}})}^{2}}} \right]}} \\ & = & {{e}^{(-0.485)}} \\ & = & 0.615 \end{align} }[/math]

- Now, since the initial values have been determined, the Gauss-Newton method can be used. Therefore, substituting [math]\displaystyle{ {{Y}_{i}}={{R}_{i}}, }[/math] [math]\displaystyle{ g_{1}^{(0)}=94.16, }[/math] [math]\displaystyle{ g_{2}^{(0)}=0.615, }[/math] [math]\displaystyle{ g_{3}^{(0)}=0.731, }[/math] [math]\displaystyle{ {{Y}^{(0)}},{{D}^{(0)}}, }[/math] [math]\displaystyle{ {{\nu }^{(0)}} }[/math] become:

- [math]\displaystyle{ {{Y}^{(0)}}=\left[ \begin{matrix} 0.0916 \\ 0.0015 \\ -0.1190 \\ 0.1250 \\ 0.0439 \\ -0.0743 \\ \end{matrix} \right] }[/math]

- [math]\displaystyle{ {{D}^{(0)}}=\left[ \begin{matrix} 0.6150 & 94.1600 & 0.0000 \\ 0.7009 & 78.4470 & -32.0841 \\ 0.7712 & 63.0971 & -51.6122 \\ 0.8270 & 49.4623 & -60.6888 \\ 0.8704 & 38.0519 & -62.2513 \\ 0.9035 & 28.8742 & -59.0463 \\ \end{matrix} \right] }[/math]

- [math]\displaystyle{ {{\nu }^{(0)}}=\left[ \begin{matrix} a-g_{1}^{(0)} \\ b-g_{2}^{(0)} \\ c-g_{3}^{(0)} \\ \end{matrix} \right]=\left[ \begin{matrix} a-94.16 \\ b-0.615 \\ c-0.731 \\ \end{matrix} \right] }[/math]
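The quantities above, and the first Gauss-Newton update, can be reproduced with a short NumPy sketch. The helper names `f` and `D_matrix` are hypothetical, and the partial derivatives are those of [math]\displaystyle{ f=a{{b}^{{{c}^{T}}}} }[/math] with respect to [math]\displaystyle{ a }[/math], [math]\displaystyle{ b }[/math] and [math]\displaystyle{ c }[/math]. The inputs are the Table 7.1 data in percent and the starting values [math]\displaystyle{ {{g}^{(0)}} }[/math]. The sketch computes [math]\displaystyle{ {{Y}^{(0)}} }[/math] and [math]\displaystyle{ {{D}^{(0)}} }[/math], the estimate [math]\displaystyle{ {{\widehat{\nu }}^{(0)}} }[/math], the revised coefficients [math]\displaystyle{ {{g}^{(1)}} }[/math], and [math]\displaystyle{ {{Q}^{(0)}} }[/math] and [math]\displaystyle{ {{Q}^{(1)}} }[/math].

```python
import numpy as np

T = np.arange(6, dtype=float)                        # growth time, months
R = np.array([58.0, 66.0, 72.5, 78.0, 82.0, 85.0])   # Table 7.1, percent
g0 = np.array([94.16, 0.615, 0.731])                 # g^(0) = (a, b, c)

def f(T, a, b, c):
    """Gompertz mean response a * b**(c**T)."""
    return a * b ** (c ** T)

def D_matrix(T, a, b, c):
    """Partial derivatives of f with respect to a, b and c (one row per T_i)."""
    cT = c ** T
    return np.column_stack([
        b ** cT,                                        # df/da
        a * cT * b ** (cT - 1.0),                       # df/db
        a * b ** cT * np.log(b) * T * c ** (T - 1.0),   # df/dc
    ])

Y0 = R - f(T, *g0)                                   # Y^(0)
D0 = D_matrix(T, *g0)                                # D^(0)
nu_hat0 = np.linalg.solve(D0.T @ D0, D0.T @ Y0)      # (D^T D)^-1 D^T Y^(0)
g1 = g0 + nu_hat0                                    # g^(1)
Q0 = np.sum((R - f(T, *g0)) ** 2)
Q1 = np.sum((R - f(T, *g1)) ** 2)
print(np.round(Y0, 4))
print(np.round(D0, 4))
print("g1 =", g1, " Q0 =", Q0, " Q1 =", Q1)
```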

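The estimate of the parameters [math]\displaystyle{ {{\nu }^{(0)}} }[/math] is given by:

- [math]\displaystyle{ {{\widehat{\nu }}^{(0)}}={{\left( {{D}^{{{(0)}^{T}}}}{{D}^{(0)}} \right)}^{-1}}{{D}^{{{(0)}^{T}}}}{{Y}^{(0)}} }[/math]

The revised estimated regression coefficients in matrix form are:

- [math]\displaystyle{ {{g}^{(1)}}={{g}^{(0)}}+{{\widehat{\nu }}^{(0)}} }[/math]

If the Gauss-Newton method works effectively, then the relationship

- [math]\displaystyle{ {{Q}^{(k+1)}}\lt {{Q}^{(k)}} }[/math]

has to hold, meaning that [math]\displaystyle{ {{g}^{(k+1)}} }[/math] gives better estimates than [math]\displaystyle{ {{g}^{(k)}} }[/math]. With the starting coefficients, [math]\displaystyle{ {{g}^{(0)}} }[/math], [math]\displaystyle{ Q }[/math] is:

- [math]\displaystyle{ {{Q}^{(0)}}=\underset{i=1}{\overset{6}{\mathop \sum }}\,{{\left[ {{Y}_{i}}-f\left( {{T}_{i}},{{g}^{(0)}} \right) \right]}^{2}} }[/math]

And with the coefficients at the end of the first iteration, [math]\displaystyle{ {{g}^{(1)}} }[/math], [math]\displaystyle{ Q }[/math] is:

- [math]\displaystyle{ {{Q}^{(1)}}=\underset{i=1}{\overset{6}{\mathop \sum }}\,{{\left[ {{Y}_{i}}-f\left( {{T}_{i}},{{g}^{(1)}} \right) \right]}^{2}} }[/math]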

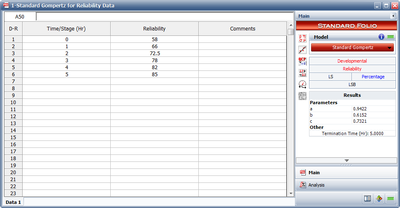

Therefore, the Gauss-Newton method is working in the right direction. The iterations are continued until the relationship [math]\displaystyle{ {{Q}^{(s-1)}}-{{Q}^{(s)}}\simeq 0 }[/math] is satisfied. Note that RGA uses a different analysis method, the Levenberg-Marquardt method. It combines the best features of the Gauss-Newton method and the method of steepest descent and occupies a middle ground between the two. The estimated parameters using RGA are shown in Figure SGomp1. They are:

- [math]\displaystyle{ \begin{align} & \widehat{a}= & 0.9422 \\ & \widehat{b}= & 0.6152 \\ & \widehat{c}= & 0.7321 \end{align} }[/math]
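For illustration, a basic Levenberg-Marquardt loop can be written in a few lines of NumPy. This is a minimal sketch of the general technique and is not RGA's implementation, whose details are not given here. The helper names are hypothetical.

The sketch solves the damped normal equations [math]\displaystyle{ ({{J}^{T}}J+\lambda \,\mathrm{diag}({{J}^{T}}J))\Delta ={{J}^{T}}r }[/math] and adjusts the damping [math]\displaystyle{ \lambda }[/math] after each trial step:

- When a step lowers [math]\displaystyle{ Q }[/math], it is accepted and [math]\displaystyle{ \lambda }[/math] is reduced, so the method behaves more like Gauss-Newton.
- When a step does not lower [math]\displaystyle{ Q }[/math], it is rejected and [math]\displaystyle{ \lambda }[/math] is increased, so the method behaves more like steepest descent.

Run on the Table 7.1 data (in percent) from the starting values found above, it is expected to land close to the RGA estimates, with [math]\displaystyle{ a }[/math] expressed in percent.

```python
import numpy as np

def f(T, p):
    a, b, c = p
    return a * b ** (c ** T)

def jac(T, p):
    a, b, c = p
    cT = c ** T
    return np.column_stack([b ** cT,                                       # df/da
                            a * cT * b ** (cT - 1.0),                      # df/db
                            a * b ** cT * np.log(b) * T * c ** (T - 1.0)]) # df/dc

def levenberg_marquardt(T, Y, p0, lam=1e-3, tol=1e-12, max_iter=200):
    """Minimize Q = sum((Y - f)^2) with a basic Levenberg-Marquardt loop."""
    p = np.asarray(p0, dtype=float)
    Q = np.sum((Y - f(T, p)) ** 2)
    for _ in range(max_iter):
        J = jac(T, p)
        A = J.T @ J
        JTr = J.T @ (Y - f(T, p))
        # Damped normal equations; damping scaled by diag(J^T J) (Marquardt)
        step = np.linalg.solve(A + lam * np.diag(np.diag(A)), JTr)
        p_new = p + step
        Q_new = np.sum((Y - f(T, p_new)) ** 2)   # NaN (rejected) if b goes negative
        if Q_new < Q:                 # accept: behave more like Gauss-Newton
            done = Q - Q_new < tol
            p, Q, lam = p_new, Q_new, lam / 10.0
            if done:
                break
        else:                         # reject: behave more like steepest descent
            lam *= 10.0
            if lam > 1e16:
                break
    return p, Q

T = np.arange(6, dtype=float)
R = np.array([58.0, 66.0, 72.5, 78.0, 82.0, 85.0])   # Table 7.1, percent
p_hat, Q_hat = levenberg_marquardt(T, R, [94.16, 0.615, 0.731])
print(p_hat, Q_hat)   # a is in percent here
```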

The Gompertz reliability growth curve is:

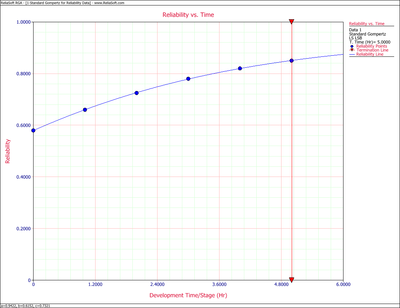

- [math]\displaystyle{ R=0.9422{{(0.6152)}^{{{0.7321}^{T}}}} }[/math]

- 1) The achievable reliability at the end of the 12-month period of design and development is:

- [math]\displaystyle{ R(12)=0.9422{{(0.6152)}^{{{0.7321}^{12}}}}\simeq 0.9314 }[/math]

The required reliability is [math]\displaystyle{ 92\% }[/math]. Consequently, from the previous result, this requirement will barely be met. Every effort should therefore be expended to implement the reliability program plan fully, and perhaps to augment it slightly, to ensure that the reliability goal is met.

- 2) The maximum achievable reliability, given by the value of [math]\displaystyle{ a }[/math] (the limit of [math]\displaystyle{ R }[/math] as [math]\displaystyle{ T\to \infty }[/math]), is [math]\displaystyle{ 0.9422 }[/math].

- 3) The predicted reliability values, calculated from the fitted Gompertz equation above, are compared with the actual data in Table 7.2. The Gompertz curve appears to provide a very good fit to the data used, since it reproduces the available data with less than [math]\displaystyle{ 1\% }[/math] error. The fitted equation is plotted in Figure oldfig32, which shows the type of reliability growth curve it represents.

| Growth Time [math]\displaystyle{ T }[/math] (months) | Gompertz Reliability (%) | Raw Data Reliability (%) |
|---|---|---|
| 0 | 57.97 | 58.00 |
| 1 | 66.02 | 66.00 |
| 2 | 72.62 | 72.50 |
| 3 | 77.87 | 78.00 |
| 4 | 81.95 | 82.00 |
| 5 | 85.07 | 85.00 |
| 6 | 87.43 | |
| 7 | 89.20 | |
| 8 | 90.52 | |
| 9 | 91.50 | |
| 10 | 92.22 | |
| 11 | 92.75 | |
| 12 | 93.14 | |
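The answers to questions 1 and 3 can be checked by evaluating the fitted curve directly. This is a small sketch using the RGA estimates quoted above; the helper name `gompertz_R` is hypothetical. It evaluates [math]\displaystyle{ R=a{{b}^{{{c}^{T}}}} }[/math] for [math]\displaystyle{ T=0,\ldots ,12 }[/math], compares [math]\displaystyle{ R(12) }[/math] with the 0.92 requirement, and computes the largest relative error against the six raw data points. Small differences in the last digit from Table 7.2 are expected, because the quoted parameters are rounded to four decimals.

```python
import numpy as np

a, b, c = 0.9422, 0.6152, 0.7321          # RGA estimates quoted above

def gompertz_R(T):
    """Fitted Gompertz reliability growth curve R = a * b**(c**T)."""
    return a * b ** (c ** T)

T = np.arange(13)                           # months 0..12
R_pred = gompertz_R(T)
raw = np.array([58.0, 66.0, 72.5, 78.0, 82.0, 85.0]) / 100.0   # raw data, T = 0..5
rel_err = np.abs(R_pred[:6] - raw) / raw

print(np.round(100 * R_pred, 2))                      # Gompertz column, in percent
print("R(12) =", round(R_pred[12], 4), "meets 0.92 goal:", R_pred[12] >= 0.92)
print("max relative error:", round(rel_err.max() * 100, 2), "%")
```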

Example 2

Calculate the parameters of the Gompertz model using the sequential data in Table 7.3.

| Run Number | Result (S = success, F = failure) | Cumulative Successes | Observed Reliability (%) |
|---|---|---|---|
| 1 | F | 0 | |
| 2 | F | 0 | |
| 3 | F | 0 | |
| 4 | S | 1 | 25.00 |
| 5 | F | 1 | 20.00 |
| 6 | F | 1 | 16.67 |
| 7 | S | 2 | 28.57 |
| 8 | S | 3 | 37.50 |
| 9 | S | 4 | 44.44 |
| 10 | S | 5 | 50.00 |
| 11 | S | 6 | 54.55 |
| 12 | S | 7 | 58.33 |
| 13 | S | 8 | 61.54 |
| 14 | S | 9 | 64.29 |
| 15 | S | 10 | 66.67 |
| 16 | S | 11 | 68.75 |
| 17 | F | 11 | 64.71 |
| 18 | S | 12 | 66.67 |
| 19 | F | 12 | 63.16 |
| 20 | S | 13 | 65.00 |
| 21 | S | 14 | 66.67 |
| 22 | S | 15 | 68.18 |
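The Observed Reliability column of Table 7.3 appears to be the cumulative number of successes divided by the run number (for example, 15/22 = 68.18% at run 22). The short sketch below reproduces the table from the run results under that reading; the function name `observed_reliability` is hypothetical.

```python
# Run results from Table 7.3 (S = success, F = failure), runs 1..22
results = "FFFSFF" + "S" * 10 + "FSFSSS"

def observed_reliability(results):
    """Return (run, result, cumulative successes, observed reliability %) rows."""
    rows = []
    successes = 0
    for run, outcome in enumerate(results, start=1):
        if outcome == "S":
            successes += 1
        rows.append((run, outcome, successes, 100.0 * successes / run))
    return rows

for run, outcome, s, rel in observed_reliability(results):
    # The table leaves the reliability blank before the first success
    print(run, outcome, s, f"{rel:.2f}" if s > 0 else "")
```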

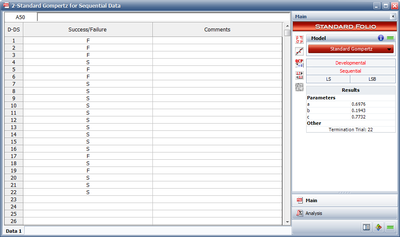

Solution

Using RGA, the parameter estimates are shown in Figure SGomp2.