Crow-AMSAA (NHPP)

Kate Racaza

Lisa Hacker

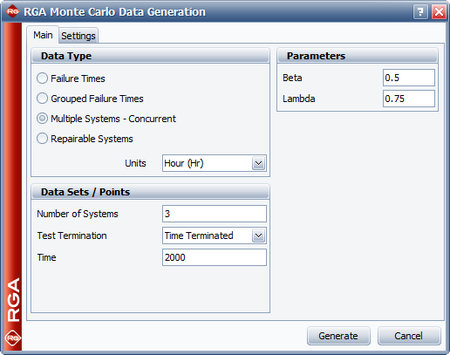

||

| (154 intermediate revisions by 7 users not shown) | |||

| Line 1: | Line 1: | ||

{{template:RGA BOOK|3.2|Crow-AMSAA}}

Dr. Larry H. Crow [[RGA_References|[17]]] noted that the [[Duane Model]] could be stochastically represented as a Weibull process, allowing statistical procedures to be used in the application of this model to reliability growth. This statistical extension became known as the Crow-AMSAA (NHPP) model. The method was first developed at the U.S. Army Materiel Systems Analysis Activity (AMSAA). It is frequently used on systems when usage is measured on a continuous scale. It can also be applied to the analysis of one-shot items when there is high reliability and a large number of trials.

Test programs are generally conducted on a phase-by-phase basis. The Crow-AMSAA model is designed for tracking reliability within a test phase, not across test phases. A development testing program may consist of several separate test phases. If corrective actions are introduced during a particular test phase, then this type of testing and the associated data are appropriate for analysis by the Crow-AMSAA model. The model analyzes the reliability growth progress within each test phase and can aid in determining the following:


*Applicable goodness-of-fit tests

==Background==

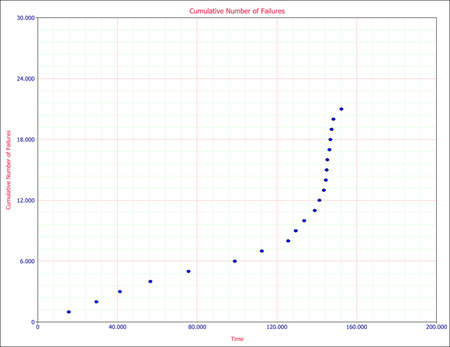

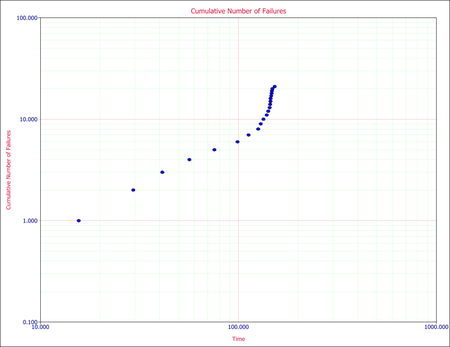

The reliability growth pattern for the Crow-AMSAA model is exactly the same as the pattern for the [[Duane Model|Duane postulate]]; that is, the cumulative number of failures is linear when plotted on ln-ln scale. Unlike the Duane postulate, the Crow-AMSAA model is statistically based. Under the Duane postulate, the cumulative failure rate is linear on ln-ln scale. In the statistical structure of the Crow-AMSAA model, however, it is the failure intensity of the underlying non-homogeneous Poisson process (NHPP) that is linear when plotted on ln-ln scale.

Let <math>N(t)\,\!</math> be the cumulative number of failures observed in cumulative test time <math>t\,\!</math>, and let <math>\rho (t)\,\!</math> be the failure intensity for the Crow-AMSAA model. Under the NHPP model, <math>\rho (t)\Delta t\,\!</math> is approximately the probability of a failure occurring over the interval <math>[t,t+\Delta t]\,\!</math> for small <math>\Delta t\,\!</math>. In addition, the expected number of failures experienced over the test interval <math>[0,T]\,\!</math> under the Crow-AMSAA model is given by:

:<math>E[N(T)]=\int_{0}^{T}\rho (t)dt\,\!</math>

The Crow-AMSAA model assumes that <math>\rho (T)\,\!</math> may be approximated by the Weibull failure rate function:

:<math>\rho (T)=\frac{\beta }{{{\eta }^{\beta }}}{{T}^{\beta -1}}\,\!</math>

Therefore, if <math>\lambda =\tfrac{1}{{{\eta }^{\beta }}},\,\!</math> the intensity function, <math>\rho (T),\,\!</math> or the instantaneous failure intensity, <math>{{\lambda }_{i}}(T)\,\!</math>, is defined as:

:<math>{{\lambda }_{i}}(T)=\lambda \beta {{T}^{\beta -1}},\text{with }T>0,\text{ }\lambda >0\text{ and }\beta >0\,\!</math>

In the special case of exponential failure times, there is no growth and the failure intensity, <math>\rho (t)\,\!</math>, is equal to <math>\lambda \,\!</math>. In this case, the expected number of failures is given by:

:<math>\begin{align}
E[N(T)]= & \int_{0}^{T}\rho (t)dt \\
= & \lambda T
\end{align}\,\!</math>

For the plot to be linear on ln-ln scale in the general reliability growth case, the expected number of failures must be:

:<math>\begin{align}
E[N(T)]= & \int_{0}^{T}\rho (t)dt \\
= & \lambda {{T}^{\beta }}
\end{align}\,\!</math>
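As a quick check under the model assumption <math>\beta >0\,\!</math>, integrating the instantaneous failure intensity <math>{{\lambda }_{i}}(t)=\lambda \beta {{t}^{\beta -1}}\,\!</math> over <math>[0,T]\,\!</math> gives this same expression, and setting <math>\beta =1\,\!</math> recovers the no-growth result <math>\lambda T\,\!</math>:

:<math>E[N(T)]=\int_{0}^{T}\lambda \beta {{t}^{\beta -1}}dt=\lambda \left[ {{t}^{\beta }} \right]_{0}^{T}=\lambda {{T}^{\beta }}\,\!</math>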

To put a statistical structure on the reliability growth process, consider again the special case of no growth. In this case the number of failures, <math>N(T),\,\!</math> experienced during testing over <math>[0,T]\,\!</math> is random. The number of failures, <math>N(T),\,\!</math> is said to follow the homogeneous (constant) Poisson process with mean <math>\lambda T\,\!</math>, and its distribution is given by:

:<math>\Pr [N(T)=n]=\frac{{{(\lambda T)}^{n}}{{e}^{-\lambda T}}}{n!};\text{ }n=0,1,2,\ldots \,\!</math>

The Crow-AMSAA model generalizes this no-growth case to allow for reliability growth due to corrective actions. This generalization keeps the Poisson distribution for the number of failures but allows the expected number of failures, <math>E[N(T)],\,\!</math> to be linear when plotted on ln-ln scale. The Crow-AMSAA model lets <math>E[N(T)]=\lambda {{T}^{\beta }}\,\!</math>. The probability that the number of failures, <math>N(T),\,\!</math> will be equal to <math>n\,\!</math> under growth is then given by the Poisson distribution:

:<math>\Pr [N(T)=n]=\frac{{{(\lambda {{T}^{\beta }})}^{n}}{{e}^{-\lambda {{T}^{\beta }}}}}{n!};\text{ }n=0,1,2,\ldots \,\!</math>

This is the general growth situation, and the number of failures, <math>N(T)\,\!</math>, follows a non-homogeneous Poisson process. The exponential, "no growth" homogeneous Poisson process is a special case of the non-homogeneous Crow-AMSAA model, corresponding to the model parameter value <math>\beta =1\,\!</math>.
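The Poisson probabilities above are straightforward to evaluate numerically. The following is a minimal Python sketch, not the RGA software's implementation; the function name `prob_n_failures` and the parameter values are hypothetical and chosen only for illustration. Passing `beta=1.0` reduces it to the homogeneous (no growth) case with mean <math>\lambda T\,\!</math>.

```python
import math


def prob_n_failures(n: int, T: float, lam: float, beta: float) -> float:
    """Pr[N(T) = n] for a Crow-AMSAA NHPP with mean E[N(T)] = lam * T**beta."""
    if n < 0:
        raise ValueError("n must be a non-negative integer")
    mean = lam * T ** beta  # expected number of failures over [0, T]
    return mean ** n * math.exp(-mean) / math.factorial(n)


# Hypothetical parameters, for illustration only.
lam, beta, T = 0.5, 0.6, 100.0
growth_probs = [prob_n_failures(n, T, lam, beta) for n in range(5)]
# beta = 1 is the no growth (homogeneous Poisson) special case with mean lam * T.
no_growth_prob = prob_n_failures(3, T, lam, 1.0)
```

The probabilities summed over all <math>n\,\!</math> equal one for any valid <math>\lambda\,\!</math> and <math>\beta\,\!</math>, which is a convenient sanity check on any implementation.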

The cumulative failure rate, <math>{{\lambda }_{c}}\,\!</math>, is:

:<math>{{\lambda }_{c}}=\lambda {{T}^{\beta -1}}\,\!</math>

The cumulative <math>MTB{{F}_{c}}\,\!</math> is:

:<math>MTB{{F}_{c}}=\frac{1}{\lambda }{{T}^{1-\beta }}\,\!</math>
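Because <math>{{\lambda }_{c}}=E[N(T)]/T\,\!</math>, both cumulative quantities follow directly from the expected number of failures. A minimal Python sketch, with hypothetical function names and parameter values:

```python
def expected_failures(T: float, lam: float, beta: float) -> float:
    """E[N(T)] = lam * T**beta."""
    return lam * T ** beta


def cumulative_failure_rate(T: float, lam: float, beta: float) -> float:
    """lambda_c = E[N(T)] / T = lam * T**(beta - 1)."""
    return lam * T ** (beta - 1.0)


def cumulative_mtbf(T: float, lam: float, beta: float) -> float:
    """MTBF_c = 1 / lambda_c = (1 / lam) * T**(1 - beta)."""
    return (1.0 / lam) * T ** (1.0 - beta)


# Hypothetical parameters (beta < 1, i.e., growth), for illustration only.
lam, beta = 0.4, 0.6
for T in (100.0, 500.0, 1000.0):
    print(T, expected_failures(T, lam, beta),
          cumulative_failure_rate(T, lam, beta), cumulative_mtbf(T, lam, beta))
```

With <math>\beta <1\,\!</math>, the printed cumulative MTBF increases with <math>T\,\!</math>.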

As mentioned above, the local pattern for reliability growth within a test phase is the same as the growth pattern observed by [[Duane Model|Duane]]. The Duane <math>MTB{{F}_{c}}\,\!</math> is equal to:

:<math>MTB{{F}_{{{c}_{DUANE}}}}=b{{T}^{\alpha }}\,\!</math>

And the Duane cumulative failure rate, <math>{{\lambda }_{c}}\,\!</math>, is:

:<math>{{\lambda }_{{{c}_{DUANE}}}}=\frac{1}{b}{{T}^{-\alpha }}\,\!</math>

Thus a relationship between Crow-AMSAA parameters and Duane parameters can be developed, such that:

:<math>\begin{align}
{{b}_{DUANE}}= & \frac{1}{{{\lambda }_{AMSAA}}} \\
{{\alpha }_{DUANE}}= & 1-{{\beta }_{AMSAA}}
\end{align}\,\!</math>
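The parameter mapping can be checked numerically: with <math>b=1/\lambda\,\!</math> and <math>\alpha =1-\beta\,\!</math>, the Duane expression <math>b{{T}^{\alpha }}\,\!</math> reproduces the Crow-AMSAA cumulative MTBF. The Python sketch below assumes the parameterizations exactly as written in this section (other slope and intercept conventions would change the mapping); the function names and values are hypothetical.

```python
from typing import Tuple


def crow_amsaa_to_duane(lam: float, beta: float) -> Tuple[float, float]:
    """Return Duane (b, alpha) from Crow-AMSAA (lam, beta): b = 1/lam, alpha = 1 - beta."""
    return 1.0 / lam, 1.0 - beta


def crow_amsaa_cumulative_mtbf(T: float, lam: float, beta: float) -> float:
    """Crow-AMSAA MTBF_c = (1 / lam) * T**(1 - beta)."""
    return (1.0 / lam) * T ** (1.0 - beta)


def duane_cumulative_mtbf(T: float, b: float, alpha: float) -> float:
    """Duane MTBF_c = b * T**alpha."""
    return b * T ** alpha


# Hypothetical Crow-AMSAA parameters, for illustration only.
lam, beta = 0.4, 0.6
b, alpha = crow_amsaa_to_duane(lam, beta)
for T in (50.0, 300.0, 2000.0):
    # Both forms of the cumulative MTBF should agree up to floating-point rounding.
    assert abs(duane_cumulative_mtbf(T, b, alpha) - crow_amsaa_cumulative_mtbf(T, lam, beta)) < 1e-9
```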

Note that these relationships are not absolute. They change according to how the parameters (slopes, intercepts, etc.) are defined when the analysis of the data is performed. For the exponential case, <math>\beta =1\,\!</math>, <math>{{\lambda }_{i}}(T)=\lambda \,\!</math>, a constant. For <math>\beta >1\,\!</math>, <math>{{\lambda }_{i}}(T)\,\!</math> is increasing, which indicates a deterioration in system reliability. For <math>\beta <1\,\!</math>, <math>{{\lambda }_{i}}(T)\,\!</math> is decreasing, which is indicative of reliability growth. Note that the model assumes a Poisson process with the Weibull intensity function, not the Weibull distribution. Therefore, statistical procedures for the Weibull distribution do not apply to this model. The parameter <math>\lambda \,\!</math> is called a scale parameter because it depends upon the unit of measurement chosen for <math>T\,\!</math>, while <math>\beta \,\!</math> is the shape parameter that characterizes the shape of the graph of the intensity function.

The total number of failures, <math>N(T)\,\!</math>, is a random variable with a Poisson distribution with mean <math>\theta (T)=\lambda {{T}^{\beta }}\,\!</math>. Therefore, the probability that exactly <math>n\,\!</math> failures occur by time <math>T\,\!</math> is:

:<math>P[N(T)=n]=\frac{{{[\theta (T)]}^{n}}{{e}^{-\theta (T)}}}{n!}\,\!</math>

The number of failures occurring in the interval from <math>{{T}_{1}}\,\!</math> to <math>{{T}_{2}}\,\!</math> is a random variable having a Poisson distribution with mean:

:<math>\theta ({{T}_{2}})-\theta ({{T}_{1}})=\lambda (T_{2}^{\beta }-T_{1}^{\beta })\,\!</math>
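The interval result can be sketched the same way. This minimal Python example (hypothetical names and parameter values) computes <math>\theta ({{T}_{2}})-\theta ({{T}_{1}})\,\!</math> and the corresponding Poisson probability. The means of adjacent intervals add up to the mean over their union, and for <math>\beta <1\,\!</math> a later interval of the same length has a smaller expected number of failures.

```python
import math


def expected_failures_in_interval(t1: float, t2: float, lam: float, beta: float) -> float:
    """theta(t2) - theta(t1) = lam * (t2**beta - t1**beta), for 0 <= t1 <= t2."""
    if not 0.0 <= t1 <= t2:
        raise ValueError("require 0 <= t1 <= t2")
    return lam * (t2 ** beta - t1 ** beta)


def prob_n_in_interval(n: int, t1: float, t2: float, lam: float, beta: float) -> float:
    """Poisson probability of exactly n failures between t1 and t2."""
    mu = expected_failures_in_interval(t1, t2, lam, beta)
    return mu ** n * math.exp(-mu) / math.factorial(n)


# Hypothetical parameters, for illustration only.
lam, beta = 0.5, 0.6
early = expected_failures_in_interval(100.0, 200.0, lam, beta)
late = expected_failures_in_interval(200.0, 300.0, lam, beta)  # same length, fewer failures when beta < 1
```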

The number of failures in any interval is statistically independent of the number of failures in any interval that does not overlap the first interval. At time <math>{{T}_{0}}\,\!</math>, the failure intensity is <math>{{\lambda }_{i}}({{T}_{0}})=\lambda \beta T_{0}^{\beta -1}\,\!</math>. If improvements are not made to the system after time <math>{{T}_{0}}\,\!</math>, it is assumed that failures would continue to occur at the constant rate <math>{{\lambda }_{i}}({{T}_{0}})=\lambda \beta T_{0}^{\beta -1}\,\!</math>. Future failures would then follow an exponential distribution with mean <math>m({{T}_{0}})=\tfrac{1}{\lambda \beta T_{0}^{\beta -1}}\,\!</math>. The instantaneous MTBF of the system at time <math>T\,\!</math> is:

:<math>m(T)=\frac{1}{\lambda \beta {{T}^{\beta -1}}}\,\!</math>

<math>m(T)\,\!</math> is also called the demonstrated (or achieved) MTBF.
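Dividing the cumulative MTBF by the instantaneous MTBF gives <math>MTB{{F}_{c}}/m(T)=\beta\,\!</math>, so during growth (<math>\beta <1\,\!</math>) the demonstrated MTBF is larger than the cumulative MTBF. A minimal Python sketch of this relationship, with hypothetical names and values:

```python
def instantaneous_failure_intensity(T: float, lam: float, beta: float) -> float:
    """lambda_i(T) = lam * beta * T**(beta - 1)."""
    return lam * beta * T ** (beta - 1.0)


def instantaneous_mtbf(T: float, lam: float, beta: float) -> float:
    """Demonstrated (achieved) MTBF m(T) = 1 / lambda_i(T)."""
    return 1.0 / instantaneous_failure_intensity(T, lam, beta)


def cumulative_mtbf(T: float, lam: float, beta: float) -> float:
    """MTBF_c = (1 / lam) * T**(1 - beta)."""
    return (1.0 / lam) * T ** (1.0 - beta)


# Hypothetical parameters, for illustration only.
lam, beta, T0 = 0.4, 0.6, 500.0
m_T0 = instantaneous_mtbf(T0, lam, beta)
ratio = cumulative_mtbf(T0, lam, beta) / m_T0  # equals beta up to rounding
```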

===Note About Applicability===

The [[Duane Model|Duane]] and Crow-AMSAA models are the most frequently used reliability growth models. Their relationship comes from the fact that both make use of the underlying observed linear relationship between the logarithm of cumulative MTBF and the logarithm of cumulative test time. However, the Duane model does not provide a capability to test whether the change in MTBF observed over time is significantly different from what might be seen due to random error between phases. The Crow-AMSAA model allows for such assessments. The Crow-AMSAA model also allows for the development of hypothesis testing procedures to determine the presence of growth in the data (where <math>\beta <1\,\!</math> indicates growth in MTBF, <math>\beta =1\,\!</math> indicates a constant MTBF and <math>\beta >1\,\!</math> indicates a decreasing MTBF). Additionally, the Crow-AMSAA model views the process of reliability growth as probabilistic, while the Duane model views the process as deterministic.

==Failure Times Data==

A description of Failure Times Data is presented on the [[RGA Data Types#Failure_Times_Data|RGA Data Types]] page.

===Parameter Estimation for Failure Times Data=== <!-- THIS SECTION HEADER IS LINKED FROM OTHER LOCATIONS IN THIS DOCUMENT AND ALSO FROM Crow Extended - Continuous Evaluation. IF YOU RENAME THE SECTION, YOU MUST UPDATE THE LINK(S). -->

The parameters for the Crow-AMSAA (NHPP) model are estimated using maximum likelihood estimation (MLE). The probability density function (''pdf'') of the <math>{{i}^{th}}\,\!</math> event given that the <math>{{(i-1)}^{th}}\,\!</math> event occurred at <math>{{T}_{i-1}}\,\!</math> is:

:<math>f({{T}_{i}}|{{T}_{i-1}})=\frac{\beta }{\eta }{{\left( \frac{{{T}_{i}}}{\eta } \right)}^{\beta -1}}\cdot {{e}^{-\tfrac{1}{{{\eta }^{\beta }}}\left( T_{i}^{\beta }-T_{i-1}^{\beta } \right)}}\,\!</math>

Letting <math>\lambda =\tfrac{1}{{{\eta }^{\beta }}}\,\!</math>, the likelihood function is:

:<math>L={{\lambda }^{n}}{{\beta }^{n}}{{e}^{-\lambda {{T}^{*\beta }}}}\prod_{i=1}^{n}T_{i}^{\beta -1}\,\!</math>

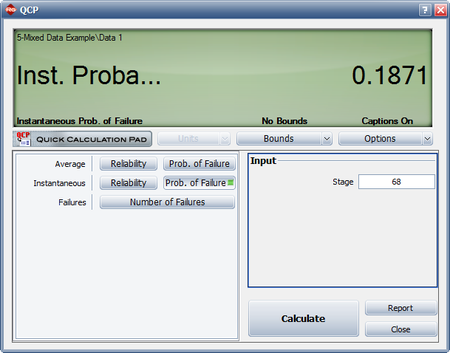

where <math>{{T}^{*}}\,\!</math> is the termination time and is given by:

:<math>{{T}^{*}}=\left\{ \begin{matrix}
{{T}_{n}}\text{ if the test is failure terminated} \\
T>{{T}_{n}}\text{ if the test is time terminated} \\
\end{matrix} \right\}\,\!</math>

Taking the natural log on both sides:

:<math>\Lambda =n\ln \lambda +n\ln \beta -\lambda {{T}^{*\beta }}+(\beta -1)\sum_{i=1}^{n}\ln {{T}_{i}}\,\!</math>

Differentiating with respect to <math>\lambda \,\!</math> yields:

:<math>\frac{\partial \Lambda }{\partial \lambda }=\frac{n}{\lambda }-{{T}^{*\beta }}\,\!</math>

Setting this equal to zero and solving for <math>\lambda \,\!</math> gives:

:<math>\hat{\lambda }=\frac{n}{{{T}^{*\beta }}}\,\!</math>

Now differentiate with respect to <math>\beta \,\!</math>:

:<math>\frac{\partial \Lambda }{\partial \beta }=\frac{n}{\beta }-\lambda {{T}^{*\beta }}\ln {{T}^{*}}+\sum_{i=1}^{n}\ln {{T}_{i}}\,\!</math>

Substituting <math>\hat{\lambda }\,\!</math> for <math>\lambda \,\!</math>, setting the result equal to zero and solving for <math>\beta \,\!</math> gives:

:<math>\hat{\beta }=\frac{n}{n\ln {{T}^{*}}-\sum_{i=1}^{n}\ln {{T}_{i}}}\,\!</math>

This equation is used for both failure terminated and time terminated test data; only the value of <math>{{T}^{*}}\,\!</math> differs.
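The closed-form estimators above can be sketched in a few lines of Python. This is a minimal illustration, not the RGA software's implementation: `crow_amsaa_mle` is a hypothetical name, `end_time=None` is taken to mean a failure terminated test (<math>{{T}^{*}}={{T}_{n}}\,\!</math>), and the sample data are the Total Test Time values from the two-system table in the Multiple Systems section, treated here as failure terminated.

```python
import math
from typing import Optional, Sequence, Tuple


def crow_amsaa_mle(times: Sequence[float], end_time: Optional[float] = None) -> Tuple[float, float]:
    """Return (beta_hat, lambda_hat) for Crow-AMSAA failure times data.

    times    -- cumulative failure times T_1..T_n, all > 0 (any order)
    end_time -- T* for a time terminated test; None means failure terminated (T* = T_n)
    """
    t = sorted(times)
    if not t or t[0] <= 0.0:
        raise ValueError("need at least one positive failure time")
    n = len(t)
    t_star = t[-1] if end_time is None else end_time
    if t_star < t[-1]:
        raise ValueError("end_time cannot precede the last failure")
    denom = n * math.log(t_star) - sum(math.log(ti) for ti in t)
    if denom <= 0.0:
        raise ValueError("beta is undefined for these data")
    beta_hat = n / denom                 # closed-form MLE of beta
    lambda_hat = n / t_star ** beta_hat  # lambda_hat = n / T*^beta_hat
    return beta_hat, lambda_hat


# Total Test Time column of the two-system table, treated as failure terminated.
total_test_time = [
    2.7, 10.3, 12.5, 30.6, 57.0, 61.3, 80.0, 109.5, 125.0, 128.6, 143.8,
    167.9, 229.2, 296.7, 320.6, 328.2, 366.2, 396.7, 421.1, 438.2, 501.2, 620.0,
]
beta_hat, lambda_hat = crow_amsaa_mle(total_test_time)
```

For these data the sketch gives <math>\hat{\beta }\approx 0.614\,\!</math> and <math>\hat{\lambda }\approx 0.424\,\!</math>. Passing an `end_time` larger than the last failure switches to the time terminated form.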

====Biasing and Unbiasing of Beta==== <!-- THIS SECTION HEADER IS LINKED FROM: Crow Extended - Continuous Evaluation. IF YOU RENAME THE SECTION, YOU MUST UPDATE THE LINK(S). -->

The equation above returns the biased estimate, <math>\hat{\beta }\,\!</math>. The unbiased estimate, <math>\bar{\beta }\,\!</math>, can be calculated by using the following relationships. For time terminated data (the test ends after a specified test time):

:<math>\bar{\beta }=\frac{N-1}{N}\hat{\beta }\,\!</math>

For failure terminated data (the test ends after a specified number of failures):

:<math>\bar{\beta }=\frac{N-2}{N}\hat{\beta }\,\!</math>

By default <math>\hat{\beta }\,\!</math> is returned. <math>\bar{\beta }\,\!</math> can be returned by selecting the '''Calculate unbiased beta''' option on the Calculations tab of the Application Setup.
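The bias corrections can be expressed as a small helper. The factors follow from the fact that <math>2N\beta /\hat{\beta }\,\!</math> has a chi-square distribution with <math>2N\,\!</math> degrees of freedom for time terminated data (conditional on <math>N\,\!</math>) and <math>2(N-1)\,\!</math> degrees of freedom for failure terminated data. The Python sketch below is illustrative only; `unbiased_beta` is a hypothetical name, and the minimum sample sizes are assumptions of this sketch that keep the correction factors positive.

```python
def unbiased_beta(beta_hat: float, n: int, time_terminated: bool) -> float:
    """Bias-corrected Crow-AMSAA beta.

    time terminated:    (N - 1) / N * beta_hat   (needs N >= 2)
    failure terminated: (N - 2) / N * beta_hat   (needs N >= 3)
    """
    if time_terminated:
        if n < 2:
            raise ValueError("need at least 2 failures for time terminated data")
        return (n - 1) / n * beta_hat
    if n < 3:
        raise ValueError("need at least 3 failures for failure terminated data")
    return (n - 2) / n * beta_hat


# Hypothetical biased estimate from 22 failures, for illustration only.
beta_bar_time = unbiased_beta(0.6142, 22, time_terminated=True)
beta_bar_failure = unbiased_beta(0.6142, 22, time_terminated=False)
```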

===Cramér-von Mises Test===

The Cramér-von Mises (CVM) goodness-of-fit test validates the hypothesis that the data follows a non-homogeneous Poisson process with a failure intensity equal to <math>u(t)=\lambda \beta {{t}^{\beta -1}}\,\!</math>. This test can be applied when the failure data is complete over the continuous interval <math>[0,{{T}_{q}}]\,\!</math> with no gaps in the data. The CVM test applies to all data types for which the failure times are known, except for Fleet data.

If the individual failure times are known, a Cramér-von Mises statistic is used to test the null hypothesis that a non-homogeneous Poisson process with the failure intensity function <math>\rho \left( t \right)=\lambda \,\beta \,{{t}^{\beta -1}}\left( \lambda >0,\beta >0,t>0 \right)\,\!</math> properly describes the reliability growth of a system. The Cramér-von Mises goodness-of-fit statistic is given by:

:<math>C_{M}^{2}=\frac{1}{12M}+\sum_{i=1}^{M}{{\left[ {{\left( \frac{{{T}_{i}}}{T} \right)}^{\bar{\beta }}}-\frac{2i-1}{2M} \right]}^{2}}\,\!</math>

where:

:<math>M=\left\{ \begin{matrix}
N\text{ if the test is time terminated} \\
N-1\text{ if the test is failure terminated} \\
\end{matrix} \right\}\,\!</math>

:<math>{\bar{\beta }}\,\!</math> is the unbiased value of Beta.

The failure times, <math>{{T}_{i}}\,\!</math>, must be ordered so that <math>{{T}_{1}}<{{T}_{2}}<\ldots <{{T}_{M}}\,\!</math>.

If the statistic <math>C_{M}^{2}\,\!</math> is less than the critical value corresponding to <math>M\,\!</math> for a chosen significance level, then you fail to reject the null hypothesis that the Crow-AMSAA model adequately fits the data.
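The statistic is simple to compute once <math>\bar{\beta }\,\!</math> is known. The Python sketch below is a minimal illustration with a hypothetical function name; it assumes that <math>T\,\!</math> is the termination time and that, for a failure terminated test, the last failure (which coincides with <math>T\,\!</math>) is the one excluded when <math>M=N-1\,\!</math>. The result is then compared against the critical value for <math>M\,\!</math> from the table of critical values.

```python
from typing import Sequence


def cvm_statistic(times: Sequence[float], end_time: float, beta_bar: float, time_terminated: bool) -> float:
    """Cramér-von Mises statistic C_M^2 for the Crow-AMSAA model.

    Assumes that for a failure terminated test the last failure equals end_time
    and is the one dropped, so that M = N - 1.
    """
    t = sorted(times)
    if not time_terminated:
        t = t[:-1]
    m = len(t)
    if m == 0:
        raise ValueError("need at least one usable failure time")
    total = 1.0 / (12.0 * m)
    for i, ti in enumerate(t, start=1):
        total += ((ti / end_time) ** beta_bar - (2 * i - 1) / (2.0 * m)) ** 2
    return total


# Hypothetical time terminated data (M = N = 5), for illustration only.
stat = cvm_statistic([12.0, 35.0, 71.0, 140.0, 210.0], end_time=250.0, beta_bar=0.7, time_terminated=True)
critical_value = 0.160  # table row M = 5, column alpha = 0.10
fail_to_reject = stat < critical_value
```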

====Critical Values====

The following table displays the critical values for the Cramér-von Mises goodness-of-fit test given the sample size, <math>M\,\!</math>, and the significance level, <math>\alpha \,\!</math>.

{|border="1" align="center" style="border-collapse: collapse;" cellpadding="5" cellspacing="5"
|-
|colspan="6" style="text-align:center"|'''Critical values for Cramér-von Mises test'''
|-
| ||colspan="5" style="text-align:center;"|<math>\alpha \,\!</math>
|-
|<math>M\,\!</math>|| 0.20|| 0.15|| 0.10|| 0.05|| 0.01
|-
|2|| 0.138|| 0.149|| 0.162|| 0.175|| 0.186
|-
|3|| 0.121|| 0.135|| 0.154|| 0.184|| 0.23
|-
|4|| 0.121|| 0.134|| 0.155|| 0.191|| 0.28
|-
|5|| 0.121|| 0.137|| 0.160|| 0.199|| 0.30
|-
|6|| 0.123|| 0.139|| 0.162|| 0.204|| 0.31
|-
|7|| 0.124|| 0.140|| 0.165|| 0.208|| 0.32
|-
|8|| 0.124|| 0.141|| 0.165|| 0.210|| 0.32
|-
|9|| 0.125|| 0.142|| 0.167|| 0.212|| 0.32
|-
|10|| 0.125|| 0.142|| 0.167|| 0.212|| 0.32
|-
|11|| 0.126|| 0.143|| 0.169|| 0.214|| 0.32
|-
|12|| 0.126|| 0.144|| 0.169|| 0.214|| 0.32
|-
|13|| 0.126|| 0.144|| 0.169|| 0.214|| 0.33
|-
|14|| 0.126|| 0.144|| 0.169|| 0.214|| 0.33
|-
|15|| 0.126|| 0.144|| 0.169|| 0.215|| 0.33
|-
|16|| 0.127|| 0.145|| 0.171|| 0.216|| 0.33
|-
|17|| 0.127|| 0.145|| 0.171|| 0.217|| 0.33
|-
|18|| 0.127|| 0.146|| 0.171|| 0.217|| 0.33
|-
|19|| 0.127|| 0.146|| 0.171|| 0.217|| 0.33
|-
|20|| 0.128|| 0.146|| 0.172|| 0.217|| 0.33
|-
|30|| 0.128|| 0.146|| 0.172|| 0.218|| 0.33
|-
|60|| 0.128|| 0.147|| 0.173|| 0.220|| 0.33
|-
|100|| 0.129|| 0.147|| 0.173|| 0.220|| 0.34
|}

The significance level represents the probability of rejecting the hypothesis even if it is true. So, there is a risk associated with applying the goodness-of-fit test (i.e., there is a chance that the CVM test will indicate that the model does not fit, when in fact it does). As the significance level is increased, the CVM test becomes more stringent. Keep in mind that the CVM test passes when the test statistic is less than the critical value. Therefore, the larger the critical value, the more room there is to work with (e.g., a CVM test with a significance level equal to 0.1 is more strict than a test with 0.01).

===Confidence Bounds===

The RGA software provides two methods to estimate the confidence bounds for the Crow-AMSAA (NHPP) model when applied to developmental testing data. The Fisher Matrix approach is based on the Fisher Information Matrix and is commonly employed in the reliability field. The Crow bounds were developed by Dr. Larry Crow. See the [[Crow-AMSAA Confidence Bounds]] chapter for details on how the confidence bounds are calculated.

===Failure Times Data Examples===

====Example - Parameter Estimation====

{{:Crow-AMSAA Parameter Estimation Example}}

{{:Crow-AMSAA_Confidence_Bounds_Example}}

==Multiple Systems==

When more than one system is placed on test during developmental testing, there are multiple data types which are available depending on the testing strategy and the format of the data. The data types that allow for the analysis of multiple systems using the Crow-AMSAA (NHPP) model are given below:

*[[Crow-AMSAA_(NHPP)#Multiple Systems (Known Operating Times)|Multiple Systems (Known Operating Times)]]
*[[Crow-AMSAA_(NHPP)#Multiple Systems (Concurrent Operating Times)|Multiple Systems (Concurrent Operating Times)]]
*[[Crow-AMSAA_(NHPP)#Multiple Systems with Dates|Multiple Systems with Dates]]

===Goodness-of-fit Tests===

For all multiple systems data types, the [[Crow-AMSAA (NHPP)#Cram.C3.A9r-von_Mises_Test|Cramér-von Mises (CVM) Test]] is available. For Multiple Systems (Concurrent Operating Times) and Multiple Systems with Dates, two additional tests are also available: [[Hypothesis Tests#Laplace_Trend_Test|Laplace Trend Test]] and [[Hypothesis Tests#Common_Beta_Hypothesis_Test|Common Beta Hypothesis]].

===Multiple Systems (Known Operating Times)===

A description of Multiple Systems (Known Operating Times) is presented on the [[RGA Data Types#Multiple_Systems_.28Known_Operating_Times.29|RGA Data Types]] page.

Consider the data in the table below for two prototypes that were placed in a reliability growth test.

<center>'''Developmental Test Data for Two Identical Systems'''</center>
{|border="1" align="center" style="border-collapse: collapse;" cellpadding="5" cellspacing="5"
!Failure Number
!Failed Unit
!Test Time Unit 1 (hr)
!Test Time Unit 2 (hr)
!Total Test Time (hr)
!<math>\ln (T)\,\!</math>
|-
|1|| 1|| 1.0|| 1.7|| 2.7|| 0.99325
|-
|2|| 1|| 7.3|| 3.0|| 10.3|| 2.33214
|-
|3|| 2|| 8.7|| 3.8|| 12.5|| 2.52573
|-
|4|| 2|| 23.3|| 7.3|| 30.6|| 3.42100
|-
|5|| 2|| 46.4|| 10.6|| 57.0|| 4.04305
|-
|6|| 1|| 50.1|| 11.2|| 61.3|| 4.11578
|-
|7|| 1|| 57.8|| 22.2|| 80.0|| 4.38203
|-
|8|| 2|| 82.1|| 27.4|| 109.5|| 4.69592
|-
|9|| 2|| 86.6|| 38.4|| 125.0|| 4.82831
|-
|10|| 1|| 87.0|| 41.6|| 128.6|| 4.85671
|-
|11|| 2|| 98.7|| 45.1|| 143.8|| 4.96842
|-
|12|| 1|| 102.2|| 65.7|| 167.9|| 5.12337
|-
|13|| 1|| 139.2|| 90.0|| 229.2|| 5.43459
|-
|14|| 1|| 166.6|| 130.1|| 296.7|| 5.69272
|-
|15|| 2|| 180.8|| 139.8|| 320.6|| 5.77019
|-
|16|| 1|| 181.3|| 146.9|| 328.2|| 5.79362
|-
|17|| 2|| 207.9|| 158.3|| 366.2|| 5.90318
|-
|18|| 2|| 209.8|| 186.9|| 396.7|| 5.98318
|-
|19|| 2|| 226.9|| 194.2|| 421.1|| 6.04287
|-
|20|| 1|| 232.2|| 206.0|| 438.2|| 6.08268
|-
|21|| 2|| 267.5|| 233.7|| 501.2|| 6.21701
|-
|22|| 2|| 330.1|| 289.9|| 620.0|| 6.42972
|}

The Failed Unit column indicates the system that failed and is meant to be informative, but it does not affect the calculations. To combine the data from both systems, the system ages are added together at the times when a failure occurred. This is seen in the Total Test Time column above. Once the single timeline is generated, then the calculations for the parameters Beta and Lambda are the same as the process presented for [[Crow-AMSAA (NHPP)#Parameter_Estimation_for_Failure_Times_Data|Failure Times Data]]. The results of this analysis would match the results of [[Crow-AMSAA (NHPP)#Failure_Times_-_Example_1|Failure Times - Example 1]].
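The Total Test Time column is the row-wise sum of the unit ages. The Python sketch below (hypothetical function names) rebuilds that column from the two unit-age columns and then applies the failure times estimators; treating the combined test as failure terminated at 620.0 hours is an assumption made for this illustration.

```python
import math
from typing import List, Sequence, Tuple


def combine_known_operating_times(unit_ages: Sequence[Sequence[float]]) -> List[float]:
    """Sum the ages of all systems at each failure to get the total test time."""
    return [sum(ages) for ages in unit_ages]


def mle_failure_terminated(times: Sequence[float]) -> Tuple[float, float]:
    """(beta_hat, lambda_hat) with T* equal to the last (largest) failure time."""
    t = sorted(times)
    n, t_star = len(t), t[-1]
    beta_hat = n / (n * math.log(t_star) - sum(math.log(x) for x in t))
    return beta_hat, n / t_star ** beta_hat


# (Unit 1 age, Unit 2 age) in hours at each failure, from the table above.
unit_ages = [
    (1.0, 1.7), (7.3, 3.0), (8.7, 3.8), (23.3, 7.3), (46.4, 10.6), (50.1, 11.2),
    (57.8, 22.2), (82.1, 27.4), (86.6, 38.4), (87.0, 41.6), (98.7, 45.1),
    (102.2, 65.7), (139.2, 90.0), (166.6, 130.1), (180.8, 139.8), (181.3, 146.9),
    (207.9, 158.3), (209.8, 186.9), (226.9, 194.2), (232.2, 206.0),
    (267.5, 233.7), (330.1, 289.9),
]
total_test_time = combine_known_operating_times(unit_ages)
beta_hat, lambda_hat = mle_failure_terminated(total_test_time)
```

Under that assumption, the sketch gives <math>\hat{\beta }\approx 0.614\,\!</math>, the same value obtained by applying the failure times estimators directly to the Total Test Time column.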

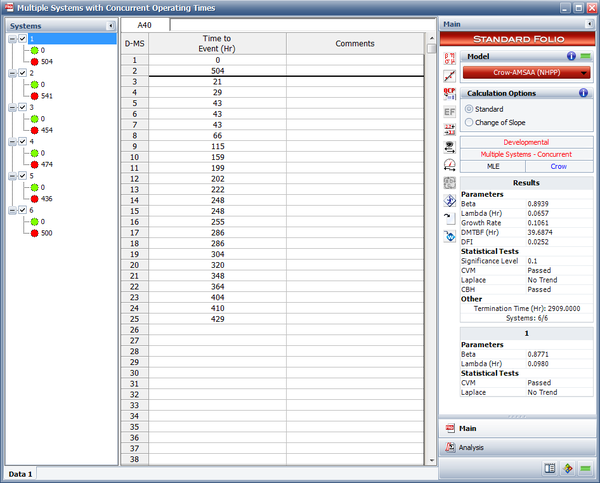

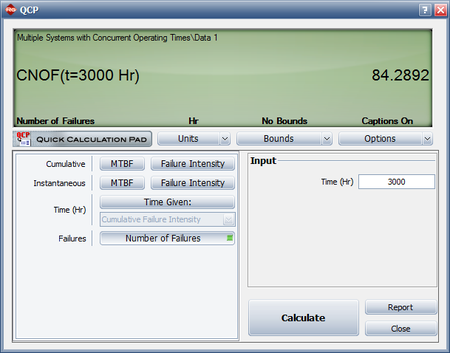

===Multiple Systems (Concurrent Operating Times)===

A description of Multiple Systems (Concurrent Operating Times) is presented on the [[RGA Data Types#Multiple_Systems_.28Concurrent_Operating_Times.29|RGA Data Types]] page.

====Parameter Estimation for Multiple Systems (Concurrent Operating Times)====

To estimate the parameters, the equivalent system must first be determined. The equivalent single system (ESS) is calculated by summing the usage across all systems when a failure occurs. Keep in mind that Multiple Systems (Concurrent Operating Times) assumes that the systems are running simultaneously and accumulate the same usage. If the systems have different end times, then the equivalent system must only account for the systems that are operating when a failure occurred. Systems with a start time greater than zero are shifted back to t = 0. This is the same as having a start time equal to zero, with the converted end time equal to the end time minus the start time. In addition, all failure times are adjusted by subtracting the start time from each value to ensure that all values occur between t = 0 and the adjusted end time. A start time greater than zero indicates that it is not known what events occurred before the start time. This may have been caused by the events during this period not being tracked and/or recorded properly.

As an example, consider two systems that have entered a reliability growth test. Both systems have a start time equal to zero and both begin the test with the same configuration. System 1 operated for 100 hours and System 2 operated for 125 hours. The failure times for each system are given below:

*System 1: 25, 47, 80

*System 2: 15, 62, 89, 110

To build the ESS, the total hours accumulated across both systems at the time of each failure are used. For example, when System 1 fails at 25 hours, each system has accumulated 25 hours, so the ESS time is 25 + 25 = 50; when System 2 fails at 110 hours, System 1 has already stopped at 100 hours, so the ESS time is 100 + 110 = 210. Therefore, given the data for Systems 1 and 2, the ESS consists of the following events: 30, 50, 94, 124, 160, 178, 210.

The ESS combines the data from both systems into a single timeline. The termination time for the ESS is (100 + 125) = 225 hours. The parameter estimates for <math>\hat{\beta }\,\!</math> and <math>\hat{\lambda}\,\!</math> are then calculated using the ESS. This process is the same as the method for [[Crow-AMSAA (NHPP)#Parameter_Estimation_for_Failure_Times_Data|Failure Times data]].
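The ESS construction described above can be sketched in Python as follows. This is a minimal illustration under the rules stated in this section, not the RGA implementation; the function name `equivalent_single_system` and its `(start, end, failure_times)` input format are hypothetical. Each system is shifted back to t = 0, and at each failure every system contributes its usage up to that time, or its full adjusted operating time if it has already stopped.

```python
def equivalent_single_system(systems):
    """Hypothetical helper: build the ESS for concurrent operating times.

    `systems` is a list of (start, end, failure_times) tuples (assumed format).
    Returns (sorted ESS event times, ESS termination time).
    """
    # Shift every system back to t = 0: adjusted length and adjusted failure times.
    shifted = [(end - start, [t - start for t in failures])
               for start, end, failures in systems]

    ess_times = []
    for _, failures in shifted:
        for t in failures:
            # Usage accumulated by all systems at time t; a system that has
            # already reached its end time contributes only its full length.
            ess_times.append(sum(min(t, length) for length, _ in shifted))
    ess_times.sort()
    termination = sum(length for length, _ in shifted)
    return ess_times, termination


ess, t_end = equivalent_single_system([
    (0, 100, [25, 47, 80]),       # System 1
    (0, 125, [15, 62, 89, 110]),  # System 2
])
print(ess, t_end)  # [30, 50, 94, 124, 160, 178, 210] 225
```

Running it on the two-system example reproduces the ESS event times and the 225-hour termination time listed above.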

====Example - Concurrent Operating Times====

{{:Concurrent Operating Times - Crow-AMSAA (NHPP) Example}}

===Multiple Systems with Dates===

An overview of the Multiple Systems with Dates data type is presented on the [[RGA Data Types#Multiple_Systems_with_Dates|RGA Data Types]] page. While Multiple Systems with Dates requires a date for each event, including the start and end times for each system, once the equivalent single system is determined, the parameter estimation is the same as it is for Multiple Systems (Concurrent Operating Times). See [[Crow-AMSAA_(NHPP)#Parameter_Estimation_for_Multiple_Systems_.28Concurrent_Operating_Times.29|Parameter Estimation for Multiple Systems (Concurrent Operating Times)]] for details.

==Grouped Data== <!-- THIS SECTION HEADER IS LINKED FROM: Operational Mission Profile Testing, Crow Extended, and Fleet Data Analysis. IF YOU RENAME THE SECTION, YOU MUST UPDATE THE LINK(S). -->

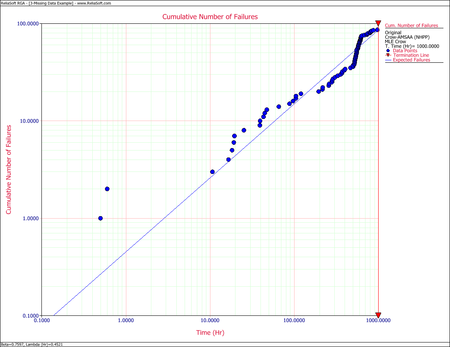

A description of Grouped Data is presented on the [[RGA Data Types#Grouped_Failure_Times|RGA Data Types]] page.

===Parameter Estimation for Grouped Data===

For analyzing grouped data, we follow the same logic described previously for the [[Duane Model|Duane]] model. Linearizing the <math>E[N(T)]\,\!</math> equation from the [[Crow-AMSAA_(NHPP)#Background|Background]] section above gives:

:<math>\begin{align}

\ln [E(N(T))]=\ln \lambda +\beta \ln T

\end{align}\,\!</math>

According to Crow [[RGA_References|[9]]], the likelihood function for the grouped data case, where <math>{{n}_{i}}\,\!</math> failures are observed in the <math>{{i}^{th}}\,\!</math> group (<math>i=1,\ldots ,k\,\!</math>, with <math>k\,\!</math> the number of groups) and <math>{{T}_{i}}\,\!</math> is the cumulative test time at the end of group <math>i\,\!</math> (with <math>{{T}_{0}}=0\,\!</math>), is:

:<math>\underset{i=1}{\overset{k}{\mathop \prod }}\,\underset{}{\overset{}{\mathop{\Pr }}}\,({{N}_{i}}={{n}_{i}})=\underset{i=1}{\overset{k}{\mathop \prod }}\,\frac{{{(\lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta })}^{{{n}_{i}}}}\cdot {{e}^{-(\lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta })}}}{{{n}_{i}}!}\,\!</math>

And the MLE of <math>\lambda \,\!</math> based on this relationship is:

:<math>\hat{\lambda }=\frac{n}{T_{k}^{\hat{\beta }}}\,\!</math>

where <math>n \,\!</math> is the total number of failures from all the groups.

The estimate of <math>\beta \,\!</math> is the value <math>\hat{\beta }\,\!</math> that satisfies:

:<math>\underset{i=1}{\overset{k}{\mathop \sum }}\,{{n}_{i}}\left[ \frac{T_{i}^{\hat{\beta }}\ln {{T}_{i}}-T_{i-1}^{\hat{\beta }}\ln {{T}_{i-1}}}{T_{i}^{\hat{\beta }}-T_{i-1}^{\hat{\beta }}}-\ln {{T}_{k}} \right]=0\,\!</math>

See [[Crow-AMSAA Confidence Bounds#Grouped_Data|Crow-AMSAA Confidence Bounds]] for details on how confidence bounds for grouped data are calculated.
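As an illustration of how the two grouped-data equations above might be solved numerically, the Python sketch below finds <math>\hat{\beta }\,\!</math> by bisection and then sets <math>\hat{\lambda }=n/T_{k}^{\hat{\beta }}\,\!</math>. Bisection is just one choice of root finder, and the function names are hypothetical. The sketch takes <math>{{T}_{0}}=0\,\!</math>, for which the term <math>T_{0}^{\beta }\ln {{T}_{0}}\,\!</math> is taken as zero, and it divides each group's ratio through by <math>T_{i}^{\beta }\,\!</math> to avoid overflow for large <math>\beta \,\!</math>.

```python
import math


def _group_ratio(t_prev, t_cur, beta):
    """(T_i^b ln T_i - T_{i-1}^b ln T_{i-1}) / (T_i^b - T_{i-1}^b), computed stably."""
    if t_prev == 0:
        return math.log(t_cur)  # T_0^beta * ln(T_0) -> 0 when T_0 = 0
    log_r = math.log(t_prev / t_cur)  # negative, since T_{i-1} < T_i
    r_b = math.exp(beta * log_r)      # (T_{i-1} / T_i)^beta
    return (math.log(t_cur) - r_b * math.log(t_prev)) / -math.expm1(beta * log_r)


def grouped_crow_amsaa_mle(times, counts, tol=1e-10):
    """times: cumulative group end times T_1 < ... < T_k; counts: failures n_i."""
    t_k = times[-1]
    prev = [0] + list(times[:-1])

    def score(beta):  # left-hand side of the beta-hat equation
        return sum(n * (_group_ratio(tp, tc, beta) - math.log(t_k))
                   for tp, tc, n in zip(prev, times, counts))

    lo, hi = 1e-6, 1.0
    while score(hi) > 0:  # score is large and positive for small beta; widen the bracket
        hi *= 2.0
        if hi > 1e3:
            raise ValueError("no root found (e.g., all failures fall in the last group)")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        lo, hi = (mid, hi) if score(mid) > 0 else (lo, mid)
    beta_hat = 0.5 * (lo + hi)
    lambda_hat = sum(counts) / t_k ** beta_hat
    return beta_hat, lambda_hat


beta_hat, lambda_hat = grouped_crow_amsaa_mle([100, 400, 900], [10, 10, 10])
```

In this usage example the counts were chosen to match <math>\lambda =1\,\!</math>, <math>\beta =0.5\,\!</math> exactly (an expected 10 failures in each of the groups ending at 100, 400 and 900 hours), so the sketch returns <math>\hat{\beta }\approx 0.5\,\!</math> and <math>\hat{\lambda }\approx 1\,\!</math>.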

===Chi-Squared Test===

A chi-squared goodness-of-fit test is used to test the null hypothesis that the Crow-AMSAA reliability model adequately represents a set of grouped data. This test is applied only when the data is grouped. The expected number of failures in the interval from <math>{{T}_{i-1}}\,\!</math> to <math>{{T}_{i}}\,\!</math> is approximated by:

:<math>{{\hat{\theta }}_{i}}=\hat{\lambda }\left( T_{i}^{{\hat{\beta }}}-T_{i-1}^{{\hat{\beta }}} \right)\,\!</math>

For each interval, <math>{{\hat{\theta }}_{i}}\,\!</math> should not be less than 5; if necessary, adjacent intervals are combined so that the expected number of failures in any combined interval is at least 5. Let the number of intervals after this recombination be <math>d\,\!</math>, let the observed number of failures in the <math>{{i}^{th}}\,\!</math> new interval be <math>{{N}_{i}}\,\!</math>, and let the expected number of failures in the <math>{{i}^{th}}\,\!</math> new interval be <math>{{\hat{\theta }}_{i}}\,\!</math>. Then the following statistic is approximately distributed as a chi-squared random variable with <math>d-2\,\!</math> degrees of freedom (two degrees of freedom are lost because <math>\lambda \,\!</math> and <math>\beta \,\!</math> are estimated from the data):

:<math>{{\chi }^{2}}=\underset{i=1}{\overset{d}{\mathop \sum }}\,\frac{{{({{N}_{i}}-{{\hat{\theta }}_{i}})}^{2}}}{{{\hat{\theta }}_{i}}}\,\!</math>

The null hypothesis is rejected if the <math>{{\chi }^{2}}\,\!</math> statistic exceeds the critical value for a chosen significance level. In this case, the hypothesis that the Crow-AMSAA model adequately fits the grouped data shall be rejected. Critical values for this statistic can be found in chi-squared distribution tables.
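The following Python sketch computes the chi-squared statistic above from fitted values of <math>\hat{\lambda }\,\!</math> and <math>\hat{\beta }\,\!</math>. The pooling rule it uses (merge intervals left to right until the accumulated expected count reaches 5, then fold any short remainder into the last pooled interval) is one simple way to meet the "at least 5" requirement; it is an assumption, not necessarily how RGA combines intervals. The function name is hypothetical, and the comparison against a chi-squared critical value with <math>d-2\,\!</math> degrees of freedom is left to a table.

```python
def chi_squared_statistic(times, counts, lam, beta):
    """Return (chi2, degrees_of_freedom) for grouped data and fitted lam, beta.

    times: cumulative interval end times T_1 < ... < T_k (T_0 = 0 assumed);
    counts: observed failures per interval.
    """
    prev = [0] + list(times[:-1])
    expected = [lam * (tc ** beta - tp ** beta) for tp, tc in zip(prev, times)]

    obs_pooled, exp_pooled = [], []
    acc_obs, acc_exp = 0.0, 0.0
    for o_i, e_i in zip(counts, expected):
        acc_obs += o_i
        acc_exp += e_i
        if acc_exp >= 5.0:  # pooled interval now has an expected count of at least 5
            obs_pooled.append(acc_obs)
            exp_pooled.append(acc_exp)
            acc_obs, acc_exp = 0.0, 0.0
    if acc_exp > 0.0:  # trailing intervals whose expected count is still below 5
        if exp_pooled:
            obs_pooled[-1] += acc_obs
            exp_pooled[-1] += acc_exp
        else:
            obs_pooled.append(acc_obs)
            exp_pooled.append(acc_exp)

    chi2 = sum((o - e) ** 2 / e for o, e in zip(obs_pooled, exp_pooled))
    return chi2, len(exp_pooled) - 2
```

For example, with <math>\lambda =1\,\!</math>, <math>\beta =1\,\!</math> and intervals ending at 2, 5 and 11, the expected counts are 2, 3 and 6; the first two are pooled into one interval with an expected count of 5, leaving <math>d=2\,\!</math> intervals.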

===Grouped Data Examples===

====Example - Simple Grouped====

{{:Crow-AMSAA_Model_-_Grouped_Data_Example}}

====Example - Helicopter System====

{{:Crow-AMSAA_Model_-_Helicopter_System_Example}}

<div class="noprint">

{{Examples Box|RGA Examples|<p>More grouped data examples are available! See also:</p>

{{Examples Link External|http://www.reliasoft.com/rga/examples/rgex1/index.htm|Simple MTBF Determination}}<nowiki/>

}}

</div>

<!-- ==Goodness-of-Fit Tests== This section is no longer necessary-->

<!-- {{:Goodness-of-Fit Tests}} This section is no longer necessary-->

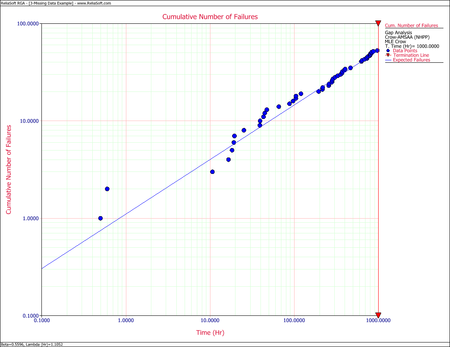

==Missing Data==

{{:Gap Analysis}}

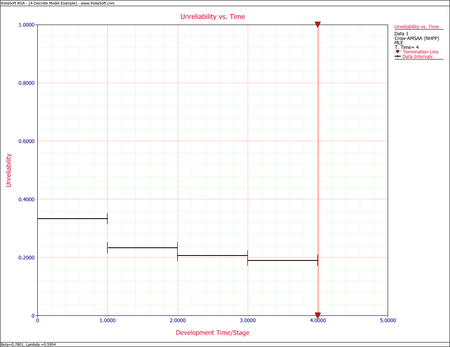

==Discrete Data==

The Crow-AMSAA model can be adapted for the analysis of ''success/failure'' data (also called ''discrete'' or ''attribute'' data). The following discrete data types are available:

*Sequential

*Grouped per Configuration

*Mixed

Sequential data and Grouped per Configuration data are closely related: the parameter estimation methodology is the same for both data types. Mixed data is a combination of Sequential data and Grouped per Configuration data and is presented in [[Crow-AMSAA (NHPP)#Mixed_Data|Mixed Data]].

===Grouped per Configuration===

Suppose system development is represented by <math>k\,\!</math> configurations. This corresponds to <math>k-1\,\!</math> configuration changes, unless fixes are applied at the end of the test phase, in which case there would be <math>k\,\!</math> configuration changes. Let <math>{{N}_{i}}\,\!</math> be the number of trials during configuration <math>i\,\!</math> and let <math>{{M}_{i}}\,\!</math> be the number of failures during configuration <math>i\,\!</math>. Then the cumulative number of trials through configuration <math>i\,\!</math>, namely <math>{{T}_{i}}\,\!</math>, is the sum of <math>{{N}_{j}}\,\!</math> over configurations <math>j=1,\ldots ,i\,\!</math>:

:<math>{{T}_{i}}=\underset{j=1}{\overset{i}{\mathop \sum }}\,{{N}_{j}}\,\!</math>

And the cumulative number of failures through configuration <math>i\,\!</math>, namely <math>{{K}_{i}}\,\!</math>, is the sum of <math>{{M}_{j}}\,\!</math> over configurations <math>j=1,\ldots ,i\,\!</math>:

:<math>{{K}_{i}}=\underset{j=1}{\overset{i}{\mathop \sum }}\,{{M}_{j}}\,\!</math>

The expected value of <math>{{K}_{i}}\,\!</math>, written <math>E[{{K}_{i}}]\,\!</math>, is the expected number of failures by the end of configuration <math>i\,\!</math>. Applying the learning curve property to <math>E[{{K}_{i}}]\,\!</math> implies:

:<math>E\left[ {{K}_{i}} \right]=\lambda T_{i}^{\beta }\,\!</math>

Denote <math>{{f}_{1}}\,\!</math> as the probability of failure for configuration 1 and use it to develop a generalized equation for <math>{{f}_{i}}\,\!</math> in terms of <math>{{T}_{i}}\,\!</math> and <math>{{N}_{i}}\,\!</math>. From the equation above, the expected number of failures by the end of configuration 1 is:

:<math>E\left[ {{K}_{1}} \right]=\lambda T_{1}^{\beta }={{f}_{1}}{{N}_{1}}\,\!</math>

:<math>\therefore {{f}_{1}}=\frac{\lambda T_{1}^{\beta }}{{{N}_{1}}}\,\!</math>

Applying the <math>E\left[ {{K}_{i}}\right]\,\!</math> equation again and noting that the expected number of failures by the end of configuration 2 is the sum of the expected number of failures in configuration 1 and the expected number of failures in configuration 2:

:<math>\begin{align}

E\left[ {{K}_{2}} \right] = & \lambda T_{2}^{\beta } \\

= & {{f}_{1}}{{N}_{1}}+{{f}_{2}}{{N}_{2}} \\

= & \lambda T_{1}^{\beta }+{{f}_{2}}{{N}_{2}}

\end{align}\,\!</math>

:<math>\therefore {{f}_{2}}=\frac{\lambda T_{2}^{\beta }-\lambda T_{1}^{\beta }}{{{N}_{2}}}\,\!</math>

By induction, a generalized equation for the failure probability on a configuration basis, <math>{{f}_{i}}\,\!</math>, is obtained:

:<math>{{f}_{i}}=\frac{\lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta }}{{{N}_{i}}}\,\!</math>

In this equation, <math>i\,\!</math> represents the configuration number, and <math>{{T}_{0}}=0\,\!</math>. Thus, an equation for the reliability (probability of success) for the <math>{{i}^{th}}\,\!</math> configuration is obtained:

:<math>\begin{align}

{{R}_{i}}=1-{{f}_{i}}

\end{align}\,\!</math>
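A short Python sketch of the per-configuration equations above: it accumulates <math>{{T}_{i}}\,\!</math> from the trial counts <math>{{N}_{i}}\,\!</math> and returns <math>{{f}_{i}}\,\!</math> and <math>{{R}_{i}}\,\!</math> for given <math>\lambda \,\!</math> and <math>\beta \,\!</math>. The function name is hypothetical and the parameter values in the usage line are illustrative only.

```python
from itertools import accumulate


def configuration_reliability(lam, beta, trials):
    """Return a list of (f_i, R_i) pairs, one per configuration.

    trials[i] is N_i, the number of trials in configuration i + 1.
    """
    cumulative = list(accumulate(trials))  # T_i = N_1 + ... + N_i
    previous = [0] + cumulative[:-1]       # T_{i-1}, with T_0 = 0
    pairs = []
    for n_i, t_prev, t_cur in zip(trials, previous, cumulative):
        f_i = (lam * t_cur ** beta - lam * t_prev ** beta) / n_i
        pairs.append((f_i, 1.0 - f_i))
    return pairs


# Illustrative values: with beta < 1 the failure probability falls from one
# configuration to the next (f = 0.125, ~0.0417, 0.025), i.e. reliability growth.
pairs = configuration_reliability(lam=0.5, beta=0.5, trials=[16, 48, 80])
```

With <math>\beta =1\,\!</math> the sketch returns the same failure probability <math>\lambda \,\!</math> for every configuration, which is the no-growth case.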

===Sequential Data===

From the [[Crow-AMSAA (NHPP)#Grouped_per_Configuration|Grouped per Configuration]] section, the following equation is given:

:<math>{{f}_{i}}=\frac{\lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta }}{{{N}_{i}}}\,\!</math>

For the special case where <math>{{N}_{i}}=1\,\!</math> for all <math>i\,\!</math> (so that <math>{{T}_{i}}=i\,\!</math>), the equation above becomes a smooth curve, <math>{{g}_{i}}\,\!</math>, that represents the probability of failure for trial-by-trial data:

:<math>{{g}_{i}}=\lambda \cdot {{i}^{\beta }}-\lambda \cdot {{\left( i-1 \right)}^{\beta }}\,\!</math>

When <math>{{N}_{i}}=1\,\!</math>, this is the same as Sequential Data, where systems are tested on a trial-by-trial basis. The equation for the reliability for the <math>{{i}^{th}}\,\!</math> trial is:

:<math>\begin{align}

{{R}_{i}}=1-{{g}_{i}}

\end{align}\,\!</math>
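The trial-by-trial curve above can be evaluated with a few lines of Python. This is an illustrative sketch with a hypothetical function name and example parameter values. Because the terms telescope, the sum of <math>{{g}_{i}}\,\!</math> over the first <math>n\,\!</math> trials equals <math>\lambda {{n}^{\beta }}\,\!</math>, the expected number of failures after <math>n\,\!</math> trials.

```python
def trial_failure_probabilities(lam, beta, n_trials):
    """g_i = lam * i**beta - lam * (i - 1)**beta for trials i = 1..n_trials."""
    return [lam * i ** beta - lam * (i - 1) ** beta for i in range(1, n_trials + 1)]


g = trial_failure_probabilities(lam=0.5, beta=0.5, n_trials=4)
reliability = [1.0 - g_i for g_i in g]  # R_i = 1 - g_i
# sum(g) telescopes to lam * n_trials**beta = 0.5 * 4**0.5 = 1.0
```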

===Parameter Estimation for Discrete Data===<!-- THIS SECTION HEADER IS LINKED FROM ANOTHER SECTION IN THIS PAGE. IF YOU RENAME THE SECTION, YOU MUST UPDATE THE LINK. -->

This section describes procedures for estimating the parameters of the Crow-AMSAA model for success/failure data, which includes Sequential data and Grouped per Configuration data. An example is presented illustrating these concepts. The estimation procedures provide maximum likelihood estimates (MLEs) for the model's two parameters, <math>\lambda \,\!</math> and <math>\beta \,\!</math>. The MLEs for <math>\lambda \,\!</math> and <math>\beta \,\!</math> allow for point estimates of the probability of failure, given by:

:<math>{{\hat{f}}_{i}}=\frac{\hat{\lambda }T_{i}^{{\hat{\beta }}}-\hat{\lambda }T_{i-1}^{{\hat{\beta }}}}{{{N}_{i}}}=\frac{\hat{\lambda }\left( T_{i}^{{\hat{\beta }}}-T_{i-1}^{{\hat{\beta }}} \right)}{{{N}_{i}}}\,\!</math>

And the probability of success (reliability) for each configuration <math>i\,\!</math> is equal to:

:<math>{{\hat{R}}_{i}}=1-{{\hat{f}}_{i}}\,\!</math>

The likelihood function is:

:<math>\underset{i=1}{\overset{k}{\mathop \prod }}\,\left( \begin{matrix}

{{N}_{i}} \\

{{M}_{i}} \\

\end{matrix} \right){{\left( \frac{\lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta }}{{{N}_{i}}} \right)}^{{{M}_{i}}}}{{\left( \frac{{{N}_{i}}-\lambda T_{i}^{\beta }+\lambda T_{i-1}^{\beta }}{{{N}_{i}}} \right)}^{{{N}_{i}}-{{M}_{i}}}}\,\!</math>

Taking the natural log on both sides yields:

:<math>\begin{align}

& \Lambda = & \underset{i=1}{\overset{k}{\mathop \sum }}\,\left[ \ln \left( \begin{matrix}

{{N}_{i}} \\

{{M}_{i}} \\

\end{matrix} \right)+{{M}_{i}}\left[ \ln (\lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta })-\ln {{N}_{i}} \right] \right] \\

& & +\underset{i=1}{\overset{k}{\mathop \sum }}\,\left[ ({{N}_{i}}-{{M}_{i}})\left[ \ln ({{N}_{i}}-\lambda T_{i}^{\beta }+\lambda T_{i-1}^{\beta })-\ln {{N}_{i}} \right] \right]

\end{align}\,\!</math>

Setting the partial derivatives of <math>\Lambda \,\!</math> with respect to <math>\beta \,\!</math> and <math>\lambda \,\!</math> equal to zero, the exact MLEs for <math>\lambda \,\!</math> and <math>\beta \,\!</math> are the values satisfying the following two equations (the first is <math>\partial \Lambda /\partial \beta =0\,\!</math> divided by <math>\lambda \,\!</math>; the second is <math>\partial \Lambda /\partial \lambda =0\,\!</math>):

:<math>\begin{align}

& \underset{i=1}{\overset{k}{\mathop \sum }}\,{{H}_{i}}\times {{S}_{i}}= & 0 \\

& \underset{i=1}{\overset{k}{\mathop \sum }}\,{{U}_{i}}\times {{S}_{i}}= & 0

\end{align}\,\!</math>

where:

:<math>\begin{align}

{{H}_{i}}= & \left[ T_{i}^{\beta }\ln {{T}_{i}}-T_{i-1}^{\beta }\ln {{T}_{i-1}} \right] \\

{{S}_{i}}= & \frac{{{M}_{i}}}{\left[ \lambda T_{i}^{\beta }-\lambda T_{i-1}^{\beta } \right]}-\frac{{{N}_{i}}-{{M}_{i}}}{\left[ {{N}_{i}}-\lambda T_{i}^{\beta }+\lambda T_{i-1}^{\beta } \right]} \\

{{U}_{i}}= & T_{i}^{\beta }-T_{i-1}^{\beta }

\end{align}\,\!</math>
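The Python sketch below shows one way to find values that satisfy these two equations. It uses a profile-likelihood approach, which is an assumption and not necessarily the method RGA uses. For a fixed <math>\beta \,\!</math>, the second equation (<math>\sum {{U}_{i}}{{S}_{i}}=0\,\!</math>) is solved for <math>\lambda \,\!</math> by bisection, since its left side decreases in <math>\lambda \,\!</math>. The resulting profile log-likelihood is then maximized over <math>\beta \,\!</math> by golden-section search. This step assumes the profile is unimodal on the search range, and the default range of 0.05 to 3 is an arbitrary choice. At that maximum the first equation also holds. All function names are hypothetical.

```python
import math
from itertools import accumulate


def _increments(trials, beta):
    """U_i = T_i^beta - T_{i-1}^beta, with T_i the cumulative number of trials."""
    cumulative = list(accumulate(trials))
    previous = [0] + cumulative[:-1]
    return [tc ** beta - tp ** beta for tp, tc in zip(previous, cumulative)]


def _log_likelihood(lam, beta, trials, fails):
    ll = 0.0  # binomial coefficients omitted: they do not depend on lam or beta
    for u, n, m in zip(_increments(trials, beta), trials, fails):
        f = lam * u / n  # f_i for configuration i
        if m > 0:
            ll += m * math.log(f)
        if n - m > 0:
            ll += (n - m) * math.log(1.0 - f)
    return ll


def _lambda_given_beta(beta, trials, fails, iters=100):
    """Solve sum_i U_i * S_i = 0 for lam by bisection (the sum decreases in lam)."""
    us = _increments(trials, beta)
    lo, hi = 0.0, min(n / u for n, u in zip(trials, us))  # keeps every f_i <= 1
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        s = sum(m / mid - (n - m) * u / (n - mid * u) for u, n, m in zip(us, trials, fails))
        lo, hi = (mid, hi) if s > 0 else (lo, mid)
    return 0.5 * (lo + hi)


def discrete_crow_amsaa_mle(trials, fails, beta_lo=0.05, beta_hi=3.0, tol=1e-8):
    """trials: N_i per configuration; fails: M_i. Returns (beta_hat, lambda_hat)."""
    def profile(beta):
        return _log_likelihood(_lambda_given_beta(beta, trials, fails), beta, trials, fails)

    g = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = beta_lo, beta_hi
    c, d = b - g * (b - a), a + g * (b - a)
    fc, fd = profile(c), profile(d)
    while b - a > tol:
        if fc > fd:  # maximum lies in [a, d]
            b, d, fd = d, c, fc
            c = b - g * (b - a)
            fc = profile(c)
        else:        # maximum lies in [c, b]
            a, c, fc = c, d, fd
            d = a + g * (b - a)
            fd = profile(d)
    beta_hat = 0.5 * (a + b)
    return beta_hat, _lambda_given_beta(beta_hat, trials, fails)
```

In the check below, the data are built so that <math>{{M}_{i}}/{{N}_{i}}\,\!</math> equals <math>{{f}_{i}}\,\!</math> for <math>\lambda =0.5\,\!</math>, <math>\beta =0.5\,\!</math> exactly (<math>{{N}_{i}}\,\!</math> = 16, 48, 80 and 2 failures in each configuration), so the estimates should come back close to those values.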

===Example - Grouped per Configuration===

{{:Crow-AMSAA Discrete Model Example}}

===Mixed Data===

The Mixed data type provides additional flexibility in how it can handle different testing strategies. Systems can be tested using different configurations in groups, individually trial by trial, or as a mixed combination of individual trials and configurations of more than one trial. The Mixed data type allows you to enter the data so that it represents how the systems were tested within the total number of trials. For example, if you launched five (5) missiles for a given configuration and none of them failed during testing, then there would be a row within the data sheet indicating that this configuration operated successfully for these five trials. If the very next trial, the sixth, failed, then this would be a separate row within the data. This flexible data entry makes it possible to understand how the systems were tested simply by examining the data. The methodology for estimating the parameters <math>\hat{\beta }\,\!</math> and <math>\hat{\lambda}\,\!</math> is the same as that presented in the [[Crow-AMSAA (NHPP)#Grouped_Data|Grouped Data]] section. With Mixed data, the average reliability and average unreliability within a given interval can also be calculated.

The average unreliability between cumulative trials <math>{{t}_{1}}\,\!</math> and <math>{{t}_{2}}\,\!</math> is calculated as:

:<math>\text{Average Unreliability }({{t}_{1}},{{t}_{2}})=\frac{\lambda t_{2}^{\beta }-\lambda t_{1}^{\beta }}{{{t}_{2}}-{{t}_{1}}}\,\!</math>

and the average reliability is calculated as:

:<math>\text{Average Reliability }({{t}_{1}},{{t}_{2}})=1-\frac{\lambda t_{2}^{\beta }-\lambda t_{1}^{\beta }}{{{t}_{2}}-{{t}_{1}}}\,\!</math>
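A direct Python transcription of the two averages above, as a minimal sketch. The function names and the parameter values in the example are illustrative assumptions.

```python
def average_unreliability(lam, beta, t1, t2):
    """Expected failures between cumulative trials t1 and t2, per trial."""
    return (lam * t2 ** beta - lam * t1 ** beta) / (t2 - t1)


def average_reliability(lam, beta, t1, t2):
    return 1.0 - average_unreliability(lam, beta, t1, t2)


# Illustrative values: lam = 0.5, beta = 0.5 gives 0.5 * (8 - 4) = 2 expected
# failures over the 48 trials from 16 to 64, i.e. about 0.0417 average
# unreliability and 0.9583 average reliability.
avg_q = average_unreliability(0.5, 0.5, 16, 64)
avg_r = average_reliability(0.5, 0.5, 16, 64)
```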

====Mixed Data Confidence Bounds====

'''Bounds on Average Failure Probability'''<br>

The process to calculate the average unreliability confidence bounds for Mixed data is as follows:

#Calculate the average failure probability <math>({{t}_{1}},{{t}_{2}})\,\!</math>.

#There will exist a <math>{{t}^{*}}\,\!</math> between <math>{{t}_{1}}\,\!</math> and <math>{{t}_{2}}\,\!</math> such that the instantaneous unreliability at <math>{{t}^{*}}\,\!</math> equals the average unreliability <math>({{t}_{1}},{{t}_{2}})\,\!</math>. The confidence intervals for the instantaneous unreliability at <math>{{t}^{*}}\,\!</math> are the confidence intervals for the average unreliability <math>({{t}_{1}},{{t}_{2}})\,\!</math>.

'''Bounds on Average Reliability'''<br>

The process to calculate the average reliability confidence bounds for Mixed data is as follows:

#Calculate confidence bounds for average unreliability <math>({{t}_{1}},{{t}_{2}})\,\!</math> as described above.

#The confidence bounds for average reliability are 1 minus these confidence bounds for average unreliability (the upper unreliability bound gives the lower reliability bound, and vice versa).
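Step 2 of the average unreliability procedure can be illustrated with a short Python sketch that locates <math>{{t}^{*}}\,\!</math>. It relies on an assumption that this page does not state: the instantaneous unreliability at cumulative trial <math>t\,\!</math> is taken as <math>\lambda \beta {{t}^{\beta -1}}\,\!</math>, the derivative of the expected number of failures, by analogy with the failure intensity in the Background section. Under that assumption <math>{{t}^{*}}\,\!</math> has a closed form when <math>\beta \ne 1\,\!</math>. The function name is hypothetical, and the confidence bound calculation at <math>{{t}^{*}}\,\!</math> itself is not shown.

```python
def t_star(lam, beta, t1, t2):
    """Hypothetical helper: cumulative trial t* in (t1, t2) at which the
    instantaneous unreliability equals the average unreliability over (t1, t2).

    ASSUMPTION: instantaneous unreliability at trial t is lam * beta * t**(beta - 1).
    """
    if beta == 1.0:
        raise ValueError("beta = 1: the unreliability is constant, so any t qualifies")
    average = (lam * t2 ** beta - lam * t1 ** beta) / (t2 - t1)
    # Solve lam * beta * t**(beta - 1) = average for t.
    return (average / (lam * beta)) ** (1.0 / (beta - 1.0))


# Illustrative values: average = 2/48, so 0.25 / sqrt(t) = 1/24 gives t* = 36.
ts = t_star(0.5, 0.5, 16, 64)
```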

====Example - Mixed Data====

{{:Crow-AMSAA Discrete Model Grouped Data Example}}

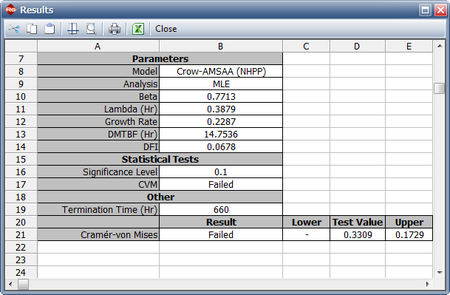

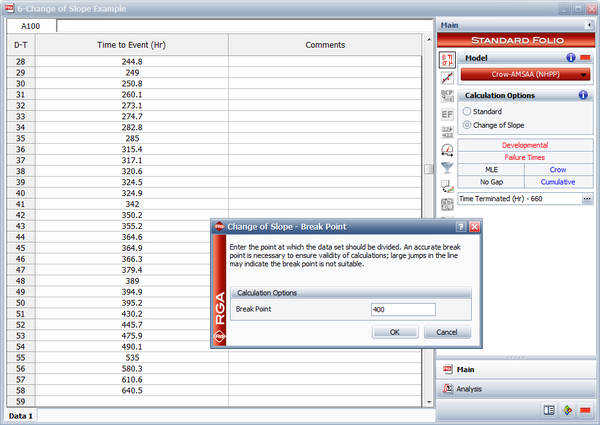

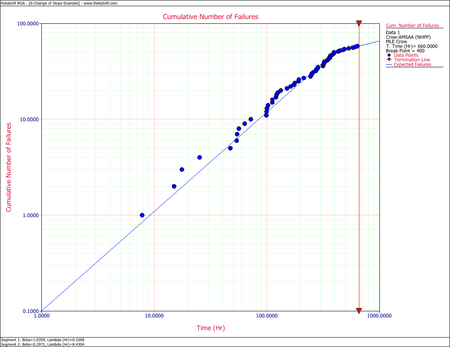

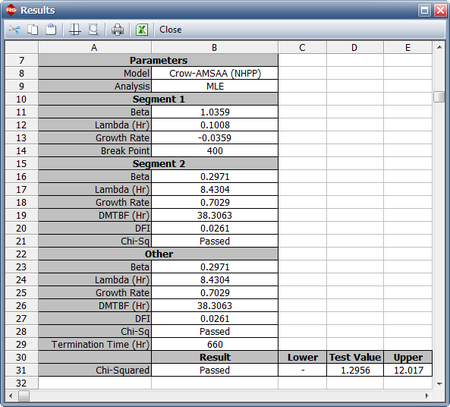

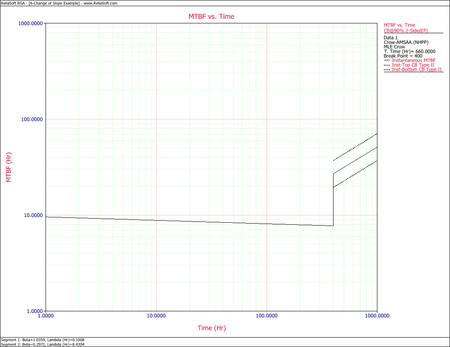

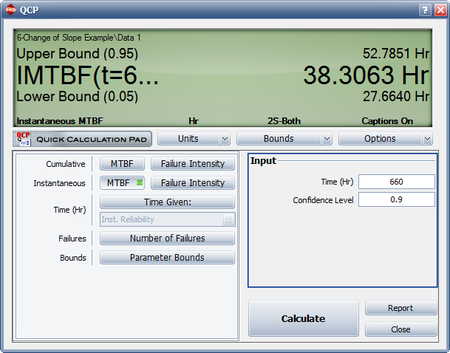

==Change of Slope==

==More Examples==

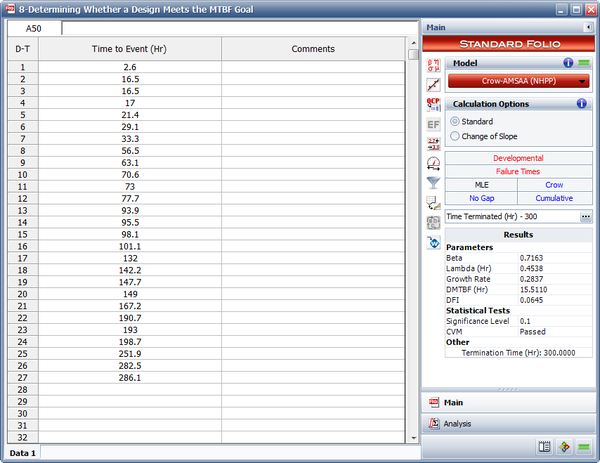

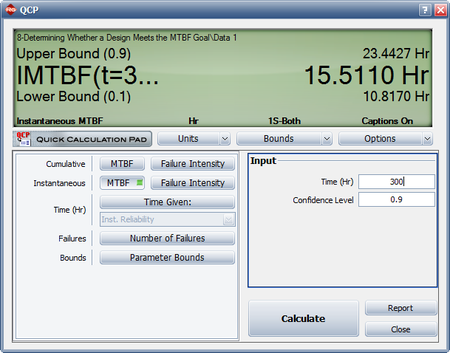

===Determining Whether a Design Meets the MTBF Goal===

{{:Failure_Times_Crow-AMSAA_Example}}

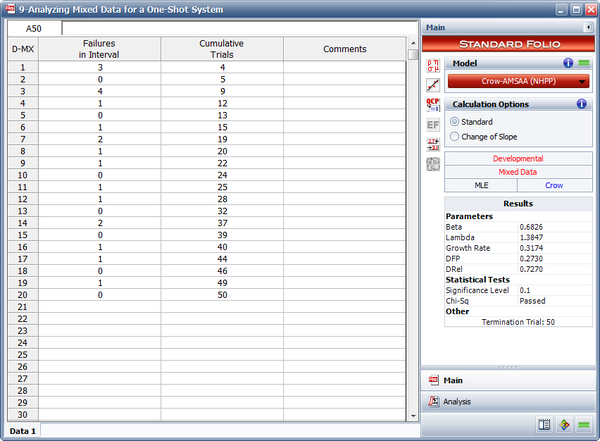

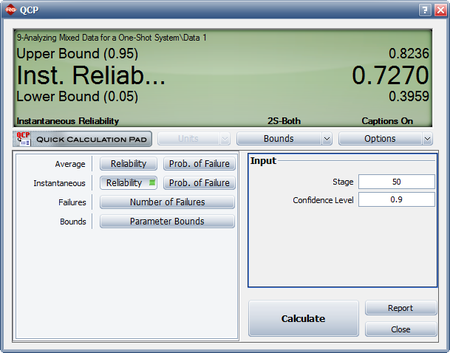

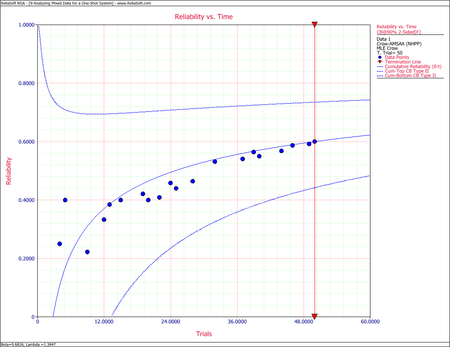

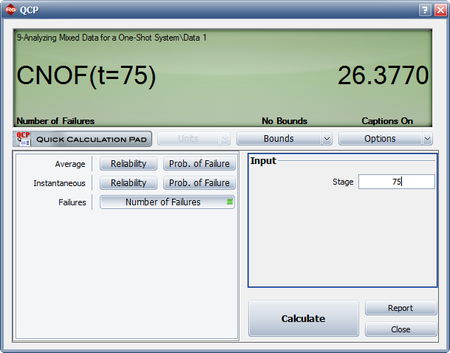

===Analyzing Mixed Data for a One-Shot System===

{{:Mixed_Data_-_Crow-AMSAA_Example}}

Latest revision as of 00:12, 13 July 2023

Dr. Larry H. Crow [17] noted that the Duane Model could be stochastically represented as a Weibull process, allowing for statistical procedures to be used in the application of this model in reliability growth. This statistical extension became what is known as the Crow-AMSAA (NHPP) model. This method was first developed at the U.S. Army Materiel Systems Analysis Activity (AMSAA). It is frequently used on systems when usage is measured on a continuous scale. It can also be applied for the analysis of one-shot items when there is high reliability and a large number of trials.

Test programs are generally conducted on a phase-by-phase basis. The Crow-AMSAA model is designed for tracking the reliability within a test phase and not across test phases. A development testing program may consist of several separate test phases. If corrective actions are introduced during a particular test phase, then this type of testing and the associated data are appropriate for analysis by the Crow-AMSAA model. The model analyzes the reliability growth progress within each test phase and can aid in determining the following:

- Reliability of the configuration currently on test

- Reliability of the configuration on test at the end of the test phase

- Expected reliability if the test time for the phase is extended

- Growth rate

- Confidence intervals

- Applicable goodness-of-fit tests

Background

The reliability growth pattern for the Crow-AMSAA model is the same as for the Duane postulate: the cumulative number of failures is linear when plotted on ln-ln scale. Unlike the Duane postulate, however, the Crow-AMSAA model is statistically based. Under the Duane postulate, the cumulative failure rate is linear on ln-ln scale, whereas in the statistical structure of the Crow-AMSAA model, the failure intensity of the underlying non-homogeneous Poisson process (NHPP) is linear when plotted on ln-ln scale.

Let [math]\displaystyle{ N(t)\,\! }[/math] be the cumulative number of failures observed in cumulative test time [math]\displaystyle{ t\,\! }[/math], and let [math]\displaystyle{ \rho (t)\,\! }[/math] be the failure intensity for the Crow-AMSAA model. Under the NHPP model, [math]\displaystyle{ \rho (t)\Delta t\,\! }[/math] is approximately the probability of a failure occurring over the interval [math]\displaystyle{ [t,t+\Delta t]\,\! }[/math] for small [math]\displaystyle{ \Delta t\,\! }[/math]. In addition, the expected number of failures experienced over the test interval [math]\displaystyle{ [0,T]\,\! }[/math] under the Crow-AMSAA model is given by:

- [math]\displaystyle{ E[N(T)]=\int_{0}^{T}\rho (t)dt\,\! }[/math]

The Crow-AMSAA model assumes that [math]\displaystyle{ \rho (T)\,\! }[/math] may be approximated by the Weibull failure rate function:

- [math]\displaystyle{ \rho (T)=\frac{\beta }{{{\eta }^{\beta }}}{{T}^{\beta -1}}\,\! }[/math]

Therefore, if [math]\displaystyle{ \lambda =\tfrac{1}{{{\eta }^{\beta }}},\,\! }[/math] the intensity function, [math]\displaystyle{ \rho (T),\,\! }[/math] or the instantaneous failure intensity, [math]\displaystyle{ {{\lambda }_{i}}(T)\,\! }[/math], is defined as:

- [math]\displaystyle{ {{\lambda }_{i}}(T)=\lambda \beta {{T}^{\beta -1}},\text{with }T\gt 0,\text{ }\lambda \gt 0\text{ and }\beta \gt 0\,\! }[/math]

In the special case of exponential failure times, there is no growth and the failure intensity, [math]\displaystyle{ \rho (t)\,\! }[/math], is equal to [math]\displaystyle{ \lambda \,\! }[/math]. In this case, the expected number of failures is given by:

- [math]\displaystyle{ \begin{align} E[N(T)]= & \int_{0}^{T}\rho (t)dt \\ = & \lambda T \end{align}\,\! }[/math]

For the plot to be linear on ln-ln scale in the general reliability growth case, the expected number of failures must be of the form:

- [math]\displaystyle{ \begin{align} E[N(T)]= & \int_{0}^{T}\rho (t)dt \\ = & \lambda {{T}^{\beta }} \end{align}\,\! }[/math]

To put a statistical structure on the reliability growth process, consider again the special case of no growth. In this case, the number of failures, [math]\displaystyle{ N(T)\,\! }[/math], experienced during testing over [math]\displaystyle{ [0,T]\,\! }[/math] is random and follows a homogeneous (constant-rate) Poisson process with mean [math]\displaystyle{ \lambda T\,\! }[/math], so that:

- [math]\displaystyle{ \underset{}{\overset{}{\mathop{\Pr }}}\,[N(T)=n]=\frac{{{(\lambda T)}^{n}}{{e}^{-\lambda T}}}{n!};\text{ }n=0,1,2,\ldots \,\! }[/math]

The Crow-AMSAA model generalizes this no growth case to allow for reliability growth due to corrective actions. This generalization keeps the Poisson distribution for the number of failures but allows for the expected number of failures, [math]\displaystyle{ E[N(T)],\,\! }[/math] to be linear when plotted on ln-ln scale. The Crow-AMSAA model lets [math]\displaystyle{ E[N(T)]=\lambda {{T}^{\beta }}\,\! }[/math]. The probability that the number of failures, [math]\displaystyle{ N(T),\,\! }[/math] will be equal to [math]\displaystyle{ n\,\! }[/math] under growth is then given by the Poisson distribution:

- [math]\displaystyle{ \underset{}{\overset{}{\mathop{\Pr }}}\,[N(T)=n]=\frac{{{(\lambda {{T}^{\beta }})}^{n}}{{e}^{-\lambda {{T}^{\beta }}}}}{n!};\text{ }n=0,1,2,\ldots \,\! }[/math]

This is the general growth situation, and the number of failures, [math]\displaystyle{ N(T)\,\! }[/math], follows a non-homogeneous Poisson process. The exponential, "no growth" homogeneous Poisson process is the special case of the non-homogeneous Crow-AMSAA model obtained when [math]\displaystyle{ \beta =1\,\! }[/math]. The cumulative failure rate, [math]\displaystyle{ {{\lambda }_{c}}\,\! }[/math], which is the expected number of failures divided by the test time, is:

- [math]\displaystyle{ \begin{align} {{\lambda }_{c}}=\lambda {{T}^{\beta -1}} \end{align}\,\! }[/math]

The cumulative [math]\displaystyle{ MTB{{F}_{c}}\,\! }[/math] is:

- [math]\displaystyle{ MTB{{F}_{c}}=\frac{1}{\lambda }{{T}^{1-\beta }}\,\! }[/math]

As mentioned above, the local pattern for reliability growth within a test phase is the same as the growth pattern observed by Duane. The Duane [math]\displaystyle{ MTB{{F}_{c}}\,\! }[/math] is equal to:

- [math]\displaystyle{ MTB{{F}_{{{c}_{DUANE}}}}=b{{T}^{\alpha }}\,\! }[/math]

And the Duane cumulative failure rate, [math]\displaystyle{ {{\lambda }_{c}}\,\! }[/math], is:

- [math]\displaystyle{ {{\lambda }_{{{c}_{DUANE}}}}=\frac{1}{b}{{T}^{-\alpha }}\,\! }[/math]

Thus a relationship between Crow-AMSAA parameters and Duane parameters can be developed, such that:

- [math]\displaystyle{ \begin{align} {{b}_{DUANE}}= & \frac{1}{{{\lambda }_{AMSAA}}} \\ {{\alpha }_{DUANE}}= & 1-{{\beta }_{AMSAA}} \end{align}\,\! }[/math]

Note that these relationships are not absolute. They change according to how the parameters (slopes, intercepts, etc.) are defined when the analysis of the data is performed. For the exponential case ([math]\displaystyle{ \beta =1\,\! }[/math]), [math]\displaystyle{ {{\lambda }_{i}}(T)=\lambda \,\! }[/math], a constant. For [math]\displaystyle{ \beta \gt 1\,\! }[/math], [math]\displaystyle{ {{\lambda }_{i}}(T)\,\! }[/math] is increasing, which indicates a deterioration in system reliability. For [math]\displaystyle{ \beta \lt 1\,\! }[/math], [math]\displaystyle{ {{\lambda }_{i}}(T)\,\! }[/math] is decreasing, which indicates reliability growth. Note that the model assumes a Poisson process with the Weibull intensity function, not the Weibull distribution. Therefore, statistical procedures for the Weibull distribution do not apply for this model. The parameter [math]\displaystyle{ \lambda \,\! }[/math] is called a scale parameter because it depends upon the unit of measurement chosen for [math]\displaystyle{ T\,\! }[/math], while [math]\displaystyle{ \beta \,\! }[/math] is the shape parameter that characterizes the shape of the graph of the intensity function.

The total number of failures, [math]\displaystyle{ N(T)\,\! }[/math], is a Poisson random variable with mean [math]\displaystyle{ \theta (T)=\lambda {{T}^{\beta }}\,\! }[/math]. Therefore, the probability that exactly [math]\displaystyle{ n\,\! }[/math] failures occur by time [math]\displaystyle{ T\,\! }[/math] is:

- [math]\displaystyle{ P[N(T)=n]=\frac{{{[\theta (T)]}^{n}}{{e}^{-\theta (T)}}}{n!}\,\! }[/math]

The number of failures occurring in the interval from [math]\displaystyle{ {{T}_{1}}\,\! }[/math] to [math]\displaystyle{ {{T}_{2}}\,\! }[/math] is a random variable having a Poisson distribution with mean:

- [math]\displaystyle{ \theta ({{T}_{2}})-\theta ({{T}_{1}})=\lambda (T_{2}^{\beta }-T_{1}^{\beta })\,\! }[/math]
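The two Poisson results above can be combined in a small Python sketch (hypothetical function name, illustrative parameters): the probability of exactly n failures in an interval uses the mean λ(T2^β − T1^β), and setting T1 = 0 gives the probability for [0, T]. With β = 1 it reduces to the homogeneous Poisson process with mean λ(T2 − T1).

```python
import math


def prob_failures(n, lam, beta, t1, t2):
    """P[exactly n failures in (t1, t2]] under the Crow-AMSAA NHPP.

    The count is Poisson with mean theta(t2) - theta(t1) = lam * (t2**beta - t1**beta);
    use t1 = 0 for the interval [0, T].
    """
    mean = lam * (t2 ** beta - t1 ** beta)
    return mean ** n * math.exp(-mean) / math.factorial(n)


# Illustrative values: lam = 0.02, beta = 0.5, T = 10000 gives a mean of 2 failures.
p_none = prob_failures(0, 0.02, 0.5, 0, 10000)  # equals exp(-2)
```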

The number of failures in any interval is statistically independent of the number of failures in any interval that does not overlap the first interval. At time [math]\displaystyle{ {{T}_{0}}\,\! }[/math], the failure intensity is [math]\displaystyle{ {{\lambda }_{i}}({{T}_{0}})=\lambda \beta T_{0}^{\beta -1}\,\! }[/math]. If improvements are not made to the system after time [math]\displaystyle{ {{T}_{0}}\,\! }[/math], it is assumed that failures would continue to occur at the constant rate [math]\displaystyle{ {{\lambda }_{i}}({{T}_{0}})=\lambda \beta T_{0}^{\beta -1}\,\! }[/math]. The times between future failures would then follow an exponential distribution with mean [math]\displaystyle{ m({{T}_{0}})=\tfrac{1}{\lambda \beta T_{0}^{\beta -1}}\,\! }[/math]. The instantaneous MTBF of the system at time [math]\displaystyle{ T\,\! }[/math] is:

- [math]\displaystyle{ m(T)=\frac{1}{\lambda \beta {{T}^{\beta -1}}}\,\! }[/math]

[math]\displaystyle{ m(T)\,\! }[/math] is also called the demonstrated (or achieved) MTBF.
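The cumulative and instantaneous quantities defined in this section can be evaluated together with a small Python sketch (hypothetical function name, illustrative parameters). Since m(T) = MTBF_c / β, the instantaneous (demonstrated) MTBF exceeds the cumulative MTBF whenever β < 1. With the Duane relationships b = 1/λ and α = 1 − β given earlier, the cumulative MTBF also equals b·T^α.

```python
def growth_metrics(lam, beta, t):
    """Cumulative and instantaneous Crow-AMSAA quantities at cumulative time t."""
    cumulative_rate = lam * t ** (beta - 1)            # lambda_c
    instantaneous_rate = lam * beta * t ** (beta - 1)  # lambda_i(T)
    return {
        "cumulative_failure_rate": cumulative_rate,
        "cumulative_mtbf": 1.0 / cumulative_rate,          # T**(1 - beta) / lam
        "instantaneous_failure_intensity": instantaneous_rate,
        "instantaneous_mtbf": 1.0 / instantaneous_rate,    # m(T), demonstrated MTBF
    }


metrics = growth_metrics(lam=0.5, beta=0.6, t=1000.0)
```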

Note About Applicability

The Duane and Crow-AMSAA models are the most frequently used reliability growth models. Their relationship comes from the fact that both make use of the underlying observed linear relationship between the logarithm of cumulative MTBF and cumulative test time. However, the Duane model does not provide a capability to test whether the change in MTBF observed over time is significantly different from what might be seen due to random error between phases. The Crow-AMSAA model allows for such assessments. The Crow-AMSAA model also allows for the development of hypothesis testing procedures to determine whether growth is present in the data (where [math]\displaystyle{ \beta \lt 1\,\! }[/math] indicates that there is growth in MTBF, [math]\displaystyle{ \beta =1\,\! }[/math] indicates a constant MTBF and [math]\displaystyle{ \beta \gt 1\,\! }[/math] indicates a decreasing MTBF). Additionally, the Crow-AMSAA model views the process of reliability growth as probabilistic, while the Duane model views the process as deterministic.

Failure Times Data

A description of Failure Times Data is presented in the RGA Data Types page.

Parameter Estimation for Failure Times Data

The parameters for the Crow-AMSAA (NHPP) model are estimated using maximum likelihood estimation (MLE). The probability density function (pdf) of the [math]\displaystyle{ {{i}^{th}}\,\! }[/math] event given that the [math]\displaystyle{ {{(i-1)}^{th}}\,\! }[/math] event occurred at [math]\displaystyle{ {{T}_{i-1}}\,\! }[/math] is:

- [math]\displaystyle{ f({{T}_{i}}|{{T}_{i-1}})=\frac{\beta }{\eta }{{\left( \frac{{{T}_{i}}}{\eta } \right)}^{\beta -1}}\cdot {{e}^{-\tfrac{1}{{{\eta }^{\beta }}}\left( T_{i}^{\beta }-T_{i-1}^{\beta } \right)}}\,\! }[/math]

Letting [math]\displaystyle{ \lambda =\tfrac{1}{{{\eta }^{\beta }}}\,\! }[/math], the likelihood function is:

- [math]\displaystyle{ L={{\lambda }^{n}}{{\beta }^{n}}{{e}^{-\lambda {{T}^{*\beta }}}}\underset{i=1}{\overset{n}{\mathop \prod }}\,T_{i}^{\beta -1}\,\! }[/math]

where [math]\displaystyle{ {{T}^{*}}\,\! }[/math] is the termination time and is given by:

- [math]\displaystyle{ {{T}^{*}}=\left\{ \begin{matrix} {{T}_{n}}\text{ if the test is failure terminated} \\ T\gt {{T}_{n}}\text{ if the test is time terminated} \\ \end{matrix} \right\}\,\! }[/math]

Taking the natural log on both sides:

- [math]\displaystyle{ \Lambda =n\ln \lambda +n\ln \beta -\lambda {{T}^{*\beta }}+(\beta -1)\underset{i=1}{\overset{n}{\mathop \sum }}\,\ln {{T}_{i}}\,\! }[/math]

And differentiating with respect to [math]\displaystyle{ \lambda \,\! }[/math] yields:

- [math]\displaystyle{ \frac{\partial \Lambda }{\partial \lambda }=\frac{n}{\lambda }-{{T}^{*\beta }}\,\! }[/math]

Set equal to zero and solve for [math]\displaystyle{ \lambda \,\! }[/math] :

- [math]\displaystyle{ \hat{\lambda }=\frac{n}{{{T}^{*\beta }}}\,\! }[/math]

Now differentiate with respect to [math]\displaystyle{ \beta \,\! }[/math] :

- [math]\displaystyle{ \frac{\partial \Lambda }{\partial \beta }=\frac{n}{\beta }-\lambda {{T}^{*\beta }}\ln {{T}^{*}}+\underset{i=1}{\overset{n}{\mathop \sum }}\,\ln {{T}_{i}}\,\! }[/math]

Set equal to zero and solve for [math]\displaystyle{ \beta \,\! }[/math] :

- [math]\displaystyle{ \hat{\beta }=\frac{n}{n\ln {{T}^{*}}-\underset{i=1}{\overset{n}{\mathop{\sum }}}\,\ln {{T}_{i}}}\,\! }[/math]

This equation is used for both failure terminated and time terminated test data.
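A minimal Python sketch of the closed-form estimates above (the function name is hypothetical). It returns the biased estimate of beta and the corresponding estimate of lambda. It treats the test as failure terminated (T* = T_n) unless an end time is given. The usage line applies it to the ESS event times from the concurrent operating times example, which is time terminated at 225 hours.

```python
import math


def crow_amsaa_mle(failure_times, end_time=None):
    """Biased MLEs (beta_hat, lambda_hat) for Crow-AMSAA failure times data.

    end_time=None treats the test as failure terminated (T* = T_n);
    otherwise the test is time terminated at T* = end_time.
    """
    times = sorted(failure_times)
    n = len(times)
    t_star = times[-1] if end_time is None else end_time
    beta_hat = n / (n * math.log(t_star) - sum(math.log(t) for t in times))
    lambda_hat = n / t_star ** beta_hat
    return beta_hat, lambda_hat


# ESS event times from the concurrent operating times example (time terminated at 225)
beta_hat, lambda_hat = crow_amsaa_mle([30, 50, 94, 124, 160, 178, 210], end_time=225)
```

By construction, λ̂·T*^β̂ equals the number of failures n, so the fitted expected number of failures at the termination time matches the observed count.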

Biasing and Unbiasing of Beta

The equation above returns the biased estimate, [math]\displaystyle{ \hat{\beta }\,\! }[/math]. The unbiased estimate, [math]\displaystyle{ \bar{\beta }\,\! }[/math], can be calculated by using the following relationships. For time terminated data (the test ends after a specified test time):

- [math]\displaystyle{ \bar{\beta }=\frac{N-1}{N}\hat{\beta }\,\! }[/math]

For failure terminated data (the test ends after a specified number of failures):

- [math]\displaystyle{ \bar{\beta }=\frac{N-2}{N}\hat{\beta }\,\! }[/math]

By default [math]\displaystyle{ \hat{\beta }\,\! }[/math] is returned. [math]\displaystyle{ \bar{\beta }\,\! }[/math] can be returned by selecting the Calculate unbiased beta option on the Calculations tab of the Application Setup.

Cramér-von Mises Test

The Cramér-von Mises (CVM) goodness-of-fit test validates the hypothesis that the data follows a non-homogeneous Poisson process with a failure intensity equal to [math]\displaystyle{ u(t)=\lambda \beta {{t}^{\beta -1}}\,\! }[/math]. This test can be applied when the failure data is complete over the continuous interval [math]\displaystyle{ [0,{{T}_{q}}]\,\! }[/math] with no gaps in the data. The CVM test applies to all data types for which the failure times are known, except for Fleet data.

If the individual failure times are known, a Cramér-von Mises statistic is used to test the null hypothesis that a non-homogeneous Poisson process with the failure intensity function [math]\displaystyle{ \rho \left( t \right)=\lambda \,\beta \,{{t}^{\beta -1}}\left( \lambda \gt 0,\beta \gt 0,t\gt 0 \right)\,\! }[/math] properly describes the reliability growth of a system. The Cramér-von Mises goodness-of-fit statistic is then given by the following expression:

- [math]\displaystyle{ C_{M}^{2}=\frac{1}{12M}+\underset{i=1}{\overset{M}{\mathop \sum }}\,{{\left[ {{\left( \frac{{{T}_{i}}}{T} \right)}^{{\bar{\beta }}}}-\frac{2i-1}{2M} \right]}^{2}}\,\! }[/math]

where:

- [math]\displaystyle{ M=\left\{ \begin{matrix} N\text{ if the test is time terminated} \\ N-1\text{ if the test is failure terminated} \\ \end{matrix} \right\}\,\! }[/math]

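- [math]\displaystyle{ {\bar{\beta }}\,\! }[/math] is the unbiased value of beta.

- [math]\displaystyle{ T\,\! }[/math] is the total test time for time terminated data, or the time of the last failure, [math]\displaystyle{ {{T}_{N}}\,\! }[/math], for failure terminated data.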

The failure times, [math]\displaystyle{ {{T}_{i}}\,\! }[/math], must be ordered so that [math]\displaystyle{ {{T}_{1}}\lt {{T}_{2}}\lt \ldots \lt {{T}_{M}}\,\! }[/math]. If the statistic [math]\displaystyle{ C_{M}^{2}\,\! }[/math] is less than the critical value corresponding to [math]\displaystyle{ M\,\! }[/math] for the chosen significance level, the null hypothesis that the Crow-AMSAA model adequately fits the data is not rejected.
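The statistic can be computed in a few lines. This is a sketch under stated assumptions. The name `cvm_statistic` is hypothetical. T is taken to be the test end time (time terminated) or the last failure time T_N (failure terminated). β̄ is obtained from the MLE with the factors (N-1)/N and (N-2)/N, respectively. For failure terminated data the last failure is dropped, so the sum runs over M = N-1 times.

```python
import math
from typing import Sequence


def cvm_statistic(times: Sequence[float], end_time: float,
                  failure_terminated: bool) -> float:
    """Cramér-von Mises statistic C_M^2 for the Crow-AMSAA model (sketch).

    times    -- all N failure times
    end_time -- T: assumed to be the test end time (time terminated) or
                the last failure time T_N (failure terminated)
    """
    t = sorted(times)                      # T_1 < T_2 < ... < T_N
    n = len(t)
    m = n - 1 if failure_terminated else n  # M as defined above
    if m < 2:
        raise ValueError("need M >= 2")
    # MLE of beta with T* = end_time, then the unbiased correction
    beta_hat = n / (n * math.log(end_time) - sum(math.log(x) for x in t))
    beta_bar = ((n - 2) if failure_terminated else (n - 1)) / n * beta_hat
    total = sum(((ti / end_time) ** beta_bar - (2 * i - 1) / (2 * m)) ** 2
                for i, ti in enumerate(t[:m], start=1))
    return 1.0 / (12 * m) + total


# Failure terminated example data from this chapter (N = 22, so M = 21)
times = [2.7, 10.3, 12.5, 30.6, 57.0, 61.3, 80.0, 109.5, 125.0, 128.6, 143.8,
         167.9, 229.2, 296.7, 320.6, 328.2, 366.2, 396.7, 421.1, 438.2,
         501.2, 620.0]
c_m2 = cvm_statistic(times, 620.0, failure_terminated=True)
print(f"C_M^2 = {c_m2:.4f}")
```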

Critical Values

The following table displays the critical values for the Cramér-von Mises goodness-of-fit test given the sample size, [math]\displaystyle{ M\,\! }[/math], and the significance level, [math]\displaystyle{ \alpha \,\! }[/math].

Critical values for Cramér-von Mises test

| [math]\displaystyle{ M\,\! }[/math] | [math]\displaystyle{ \alpha =0.20\,\! }[/math] | [math]\displaystyle{ \alpha =0.15\,\! }[/math] | [math]\displaystyle{ \alpha =0.10\,\! }[/math] | [math]\displaystyle{ \alpha =0.05\,\! }[/math] | [math]\displaystyle{ \alpha =0.01\,\! }[/math] |
|---|---|---|---|---|---|
| 2 | 0.138 | 0.149 | 0.162 | 0.175 | 0.186 |
| 3 | 0.121 | 0.135 | 0.154 | 0.184 | 0.23 |
| 4 | 0.121 | 0.134 | 0.155 | 0.191 | 0.28 |
| 5 | 0.121 | 0.137 | 0.160 | 0.199 | 0.30 |
| 6 | 0.123 | 0.139 | 0.162 | 0.204 | 0.31 |
| 7 | 0.124 | 0.140 | 0.165 | 0.208 | 0.32 |
| 8 | 0.124 | 0.141 | 0.165 | 0.210 | 0.32 |
| 9 | 0.125 | 0.142 | 0.167 | 0.212 | 0.32 |
| 10 | 0.125 | 0.142 | 0.167 | 0.212 | 0.32 |
| 11 | 0.126 | 0.143 | 0.169 | 0.214 | 0.32 |
| 12 | 0.126 | 0.144 | 0.169 | 0.214 | 0.32 |
| 13 | 0.126 | 0.144 | 0.169 | 0.214 | 0.33 |
| 14 | 0.126 | 0.144 | 0.169 | 0.214 | 0.33 |
| 15 | 0.126 | 0.144 | 0.169 | 0.215 | 0.33 |
| 16 | 0.127 | 0.145 | 0.171 | 0.216 | 0.33 |
| 17 | 0.127 | 0.145 | 0.171 | 0.217 | 0.33 |
| 18 | 0.127 | 0.146 | 0.171 | 0.217 | 0.33 |
| 19 | 0.127 | 0.146 | 0.171 | 0.217 | 0.33 |
| 20 | 0.128 | 0.146 | 0.172 | 0.217 | 0.33 |
| 30 | 0.128 | 0.146 | 0.172 | 0.218 | 0.33 |
| 60 | 0.128 | 0.147 | 0.173 | 0.220 | 0.33 |
| 100 | 0.129 | 0.147 | 0.173 | 0.220 | 0.34 |

The significance level is the probability of rejecting the hypothesis when it is in fact true. There is therefore a risk associated with applying the goodness-of-fit test: the CVM test may indicate that the model does not fit when in fact it does. As the significance level increases, the CVM test becomes more stringent, because the test passes only when the statistic is less than the critical value, and the critical values become smaller as the significance level grows. In other words, the larger the critical value, the more room there is to work with; for example, a CVM test with a significance level of 0.10 is more strict than a test with 0.01.
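The decision rule can be sketched as a table lookup followed by a comparison. The values below are copied from the critical values table in this section, and the helper names are hypothetical. The table lists only some values of M, so the sketch assumes that an untabulated M uses the nearest tabulated M below it, and that M above 100 uses the M = 100 row. This is an illustrative convention, not a rule stated in the source.

```python
import bisect
from typing import Dict, Tuple

# Columns: alpha = 0.20, 0.15, 0.10, 0.05, 0.01 (from the table in this section)
ALPHAS: Tuple[float, ...] = (0.20, 0.15, 0.10, 0.05, 0.01)
CVM_CRITICAL: Dict[int, Tuple[float, ...]] = {
    2: (0.138, 0.149, 0.162, 0.175, 0.186), 3: (0.121, 0.135, 0.154, 0.184, 0.23),
    4: (0.121, 0.134, 0.155, 0.191, 0.28), 5: (0.121, 0.137, 0.160, 0.199, 0.30),
    6: (0.123, 0.139, 0.162, 0.204, 0.31), 7: (0.124, 0.140, 0.165, 0.208, 0.32),
    8: (0.124, 0.141, 0.165, 0.210, 0.32), 9: (0.125, 0.142, 0.167, 0.212, 0.32),
    10: (0.125, 0.142, 0.167, 0.212, 0.32), 11: (0.126, 0.143, 0.169, 0.214, 0.32),
    12: (0.126, 0.144, 0.169, 0.214, 0.32), 13: (0.126, 0.144, 0.169, 0.214, 0.33),
    14: (0.126, 0.144, 0.169, 0.214, 0.33), 15: (0.126, 0.144, 0.169, 0.215, 0.33),
    16: (0.127, 0.145, 0.171, 0.216, 0.33), 17: (0.127, 0.145, 0.171, 0.217, 0.33),
    18: (0.127, 0.146, 0.171, 0.217, 0.33), 19: (0.127, 0.146, 0.171, 0.217, 0.33),
    20: (0.128, 0.146, 0.172, 0.217, 0.33), 30: (0.128, 0.146, 0.172, 0.218, 0.33),
    60: (0.128, 0.147, 0.173, 0.220, 0.33), 100: (0.129, 0.147, 0.173, 0.220, 0.34),
}
_M_KEYS = sorted(CVM_CRITICAL)


def cvm_critical_value(m: int, alpha: float) -> float:
    """Critical value for sample size M and a tabulated significance level."""
    if m < 2:
        raise ValueError("the table starts at M = 2")
    col = ALPHAS.index(alpha)  # raises ValueError for an untabulated alpha
    # Assumption: use the nearest tabulated M that does not exceed m
    key = _M_KEYS[bisect.bisect_right(_M_KEYS, m) - 1]
    return CVM_CRITICAL[key][col]


def cvm_passes(statistic: float, m: int, alpha: float) -> bool:
    """True when the null hypothesis is not rejected (statistic < critical value)."""
    return statistic < cvm_critical_value(m, alpha)


print(cvm_critical_value(21, 0.10), cvm_passes(0.05, 21, 0.10))
```

Comparing the two columns for the same M shows the point made above: at M = 21 the critical value is 0.172 for a significance level of 0.10 but 0.33 for 0.01.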

Confidence Bounds

The RGA software provides two methods to estimate the confidence bounds for the Crow-AMSAA model when applied to developmental testing data. The Fisher Matrix approach is based on the Fisher Information Matrix and is commonly employed in the reliability field. The Crow bounds were developed by Dr. Larry Crow. See the Crow-AMSAA Confidence Bounds chapter for details on how the confidence bounds are calculated.

Failure Times Data Examples

Example - Parameter Estimation

A prototype of a system was tested with design changes incorporated during the test. The following table presents the data collected over the entire test. Find the Crow-AMSAA parameters and the intensity function using maximum likelihood estimators.

| Row | Time to Event (hr) | [math]\displaystyle{ \ln (T)\,\! }[/math] |
|---|---|---|
| 1 | 2.7 | 0.99325 |
| 2 | 10.3 | 2.33214 |
| 3 | 12.5 | 2.52573 |
| 4 | 30.6 | 3.42100 |
| 5 | 57.0 | 4.04305 |
| 6 | 61.3 | 4.11578 |
| 7 | 80.0 | 4.38203 |
| 8 | 109.5 | 4.69592 |
| 9 | 125.0 | 4.82831 |
| 10 | 128.6 | 4.85671 |
| 11 | 143.8 | 4.96842 |
| 12 | 167.9 | 5.12337 |
| 13 | 229.2 | 5.43459 |
| 14 | 296.7 | 5.69272 |
| 15 | 320.6 | 5.77019 |
| 16 | 328.2 | 5.79362 |
| 17 | 366.2 | 5.90318 |
| 18 | 396.7 | 5.98318 |
| 19 | 421.1 | 6.04287 |
| 20 | 438.2 | 6.08268 |
| 21 | 501.2 | 6.21701 |
| 22 | 620.0 | 6.42972 |

Solution

For the failure terminated test, [math]\displaystyle{ {\beta}\,\! }[/math] is:

- [math]\displaystyle{ \begin{align} \widehat{\beta }&=\frac{n}{n\ln {{T}^{*}}-\underset{i=1}{\overset{n}{\mathop{\sum }}}\,\ln {{T}_{i}}} \\ &=\frac{22}{22\ln 620-\underset{i=1}{\overset{22}{\mathop{\sum }}}\,\ln {{T}_{i}}} \\ \end{align}\,\! }[/math]

where:

- [math]\displaystyle{ \underset{i=1}{\overset{22}{\mathop \sum }}\,\ln {{T}_{i}}=105.6355\,\! }[/math]

Then:

- [math]\displaystyle{ \widehat{\beta }=\frac{22}{22\ln 620-105.6355}=0.6142\,\! }[/math]

And for [math]\displaystyle{ {\lambda}\,\! }[/math] :

- [math]\displaystyle{ \begin{align} \widehat{\lambda }&=\frac{n}{{{T}^{*\beta }}} \\ & =\frac{22}{{{620}^{0.6142}}}=0.4239 \\ \end{align}\,\! }[/math]

Therefore, [math]\displaystyle{ {{\lambda }_{i}}(T)\,\! }[/math] becomes:

- [math]\displaystyle{ \begin{align} {{\widehat{\lambda }}_{i}}(T)= & 0.4239\cdot 0.6142\cdot {{620}^{-0.3858}} \\ = & 0.0217906\frac{\text{failures}}{\text{hr}} \end{align}\,\! }[/math]

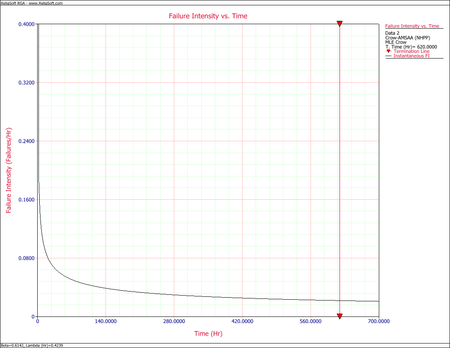

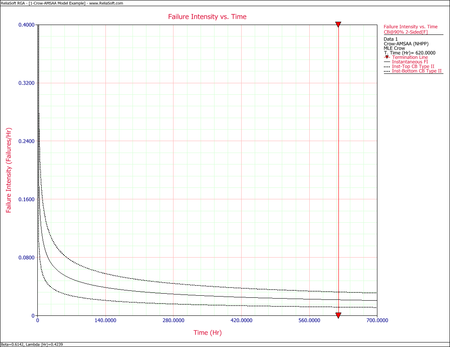

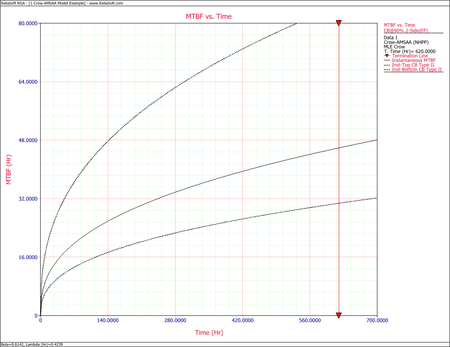

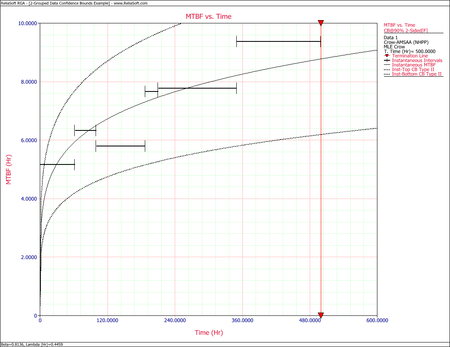

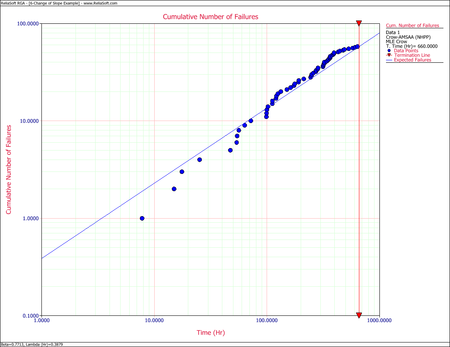

The next figure shows the plot of the failure rate. If no further changes are made, the estimated instantaneous MTBF is [math]\displaystyle{ \tfrac{1}{0.0217906}\approx 45.9\,\! }[/math], or about 46 hours.
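Evaluating the intensity functions at T = 620 hours can be checked with the following short sketch. It plugs in the rounded estimates from this example. The cumulative intensity λT^(β-1) and the instantaneous intensity λβT^(β-1) are the forms used in the derivatives in this chapter. The function names are illustrative.

```python
def cumulative_failure_intensity(lam: float, beta: float, t: float) -> float:
    """lambda_c(t) = lambda * t^(beta - 1)."""
    return lam * t ** (beta - 1)


def instantaneous_failure_intensity(lam: float, beta: float, t: float) -> float:
    """lambda_i(t) = lambda * beta * t^(beta - 1)."""
    return lam * beta * t ** (beta - 1)


lam_hat, beta_hat, T = 0.4239, 0.6142, 620.0   # rounded estimates from the example
lam_c = cumulative_failure_intensity(lam_hat, beta_hat, T)
lam_i = instantaneous_failure_intensity(lam_hat, beta_hat, T)
mtbf_i = 1.0 / lam_i                           # instantaneous MTBF in hours
print(f"lambda_c = {lam_c:.5f}, lambda_i = {lam_i:.7f}, MTBF_i = {mtbf_i:.1f} hr")
```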

Example - Confidence Bounds on Failure Intensity

Using the values of [math]\displaystyle{ \hat{\beta }\,\! }[/math] and [math]\displaystyle{ \hat{\lambda }\,\! }[/math] estimated in the example given above, calculate the 90% 2-sided confidence bounds on the cumulative and instantaneous failure intensity.

Solution

Fisher Matrix Bounds

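The second partial derivatives of the log-likelihood function, [math]\displaystyle{ \Lambda \,\! }[/math], evaluated at [math]\displaystyle{ n=22\,\! }[/math], [math]\displaystyle{ {{T}^{*}}=620\,\! }[/math], [math]\displaystyle{ \hat{\lambda }=0.4239\,\! }[/math] and [math]\displaystyle{ \hat{\beta }=0.6142\,\! }[/math], are: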

- [math]\displaystyle{ \begin{align} \frac{{{\partial }^{2}}\Lambda }{\partial {{\lambda }^{2}}} = & -\frac{22}{{{0.4239}^{2}}}=-122.43 \\ \frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }^{2}}} = & -\frac{22}{{{0.6142}^{2}}}-0.4239\cdot {{620}^{0.6142}}{{(\ln 620)}^{2}}=-967.68 \\ \frac{{{\partial }^{2}}\Lambda }{\partial \lambda \partial \beta } = & -{{620}^{0.6142}}\ln 620=-333.64 \end{align}\,\! }[/math]

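Inverting the Fisher information matrix (the negatives of these second partial derivatives) gives the variance-covariance matrix of the estimates: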

- [math]\displaystyle{ \begin{align} \begin{bmatrix}122.43 & 333.64\\ 333.64 & 967.68\end{bmatrix}^{-1} & = \begin{bmatrix}Var(\hat{\lambda}) & Cov(\hat{\beta},\hat{\lambda})\\ Cov(\hat{\beta},\hat{\lambda}) & Var(\hat{\beta})\end{bmatrix} \\ & = \begin{bmatrix} 0.13519969 & -0.046614609\\ -0.046614609 & 0.017105343 \end{bmatrix} \end{align}\,\! }[/math]
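The matrix step can be reproduced with a closed-form 2×2 inverse. The sketch below is a minimal illustration with a hypothetical function name. It builds the Fisher information from the second derivatives listed above and inverts it. The determinant is a difference of two close numbers, so small changes in the rounded inputs can shift the last digits of the variances slightly.

```python
import math
from typing import Tuple


def crow_amsaa_fisher_cov(n: int, lam: float, beta: float,
                          t_star: float) -> Tuple[float, float, float]:
    """Return (Var(lambda_hat), Var(beta_hat), Cov(beta_hat, lambda_hat)).

    Fisher information = negative of the log-likelihood second derivatives,
    inverted here with the closed-form 2x2 inverse.
    """
    ln_t = math.log(t_star)
    t_beta = t_star ** beta
    d2_lam2 = -n / lam ** 2                                # d2L/dlambda2
    d2_beta2 = -n / beta ** 2 - lam * t_beta * ln_t ** 2   # d2L/dbeta2
    d2_lam_beta = -t_beta * ln_t                           # d2L/dlambda dbeta
    f11, f12, f22 = -d2_lam2, -d2_lam_beta, -d2_beta2
    det = f11 * f22 - f12 ** 2
    return f22 / det, f11 / det, -f12 / det


var_lam, var_beta, cov = crow_amsaa_fisher_cov(22, 0.4239, 0.6142, 620.0)
print(var_lam, var_beta, cov)
```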

For [math]\displaystyle{ T=620\,\! }[/math] hours, the partial derivatives of the cumulative and instantaneous failure intensities are:

- [math]\displaystyle{ \begin{align} \frac{\partial {{\lambda }_{c}}(T)}{\partial \beta }= & \hat{\lambda }{{T}^{\hat{\beta }-1}}\ln (T) \\ = & 0.4239\cdot {{620}^{-0.3858}}\ln 620 \\ = & 0.22811336 \\ \frac{\partial {{\lambda }_{c}}(T)}{\partial \lambda }= & {{T}^{\hat{\beta }-1}} \\ = & {{620}^{-0.3858}} \\ = & 0.083694185 \end{align}\,\! }[/math]

- [math]\displaystyle{ \begin{align} \frac{\partial {{\lambda }_{i}}(T)}{\partial \beta }= & \hat{\lambda }{{T}^{\hat{\beta }-1}}+\hat{\lambda }\hat{\beta }{{T}^{\hat{\beta }-1}}\ln T \\ = & 0.4239\cdot {{620}^{-0.3858}}+0.4239\cdot 0.6142\cdot {{620}^{-0.3858}}\ln 620 \\ = & 0.17558519 \end{align}\,\! }[/math]

- [math]\displaystyle{ \begin{align} \frac{\partial {{\lambda }_{i}}(T)}{\partial \lambda }= & \hat{\beta }{{T}^{\hat{\beta }-1}} \\ = & 0.6142\cdot {{620}^{-0.3858}} \\ = & 0.051404969 \end{align}\,\! }[/math]

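Applying these partial derivatives to the variance-covariance matrix (the covariance term is negative, so the cross term is subtracted), the variances become: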

- [math]\displaystyle{ \begin{align} Var(\hat{\lambda_{c}}(T)) & = 0.22811336^{2}\cdot 0.017105343\ + 0.083694185^{2} \cdot 0.13519969\ -2\cdot 0.22811336\cdot 0.083694185\cdot 0.046614609 \\ & = 0.00005721408 \\ Var(\hat{\lambda_{i}}(T)) & = 0.17558519^{2}\cdot 0.017105343\ + 0.051404969^{2}\cdot 0.13519969\ -2\cdot 0.17558519\cdot 0.051404969\cdot 0.046614609 \\ &= 0.0000431393 \end{align}\,\! }[/math]
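These variances are first-order (delta method) propagations of the parameter covariance matrix through the partial derivatives. The sketch below substitutes the variance and covariance values from the matrix above and recomputes the partial derivatives at T = 620 hours. It adds 2·(∂/∂β)·(∂/∂λ)·Cov with the signed (negative) covariance, which is the same as the subtraction written in the equations. The helper `delta_variance` is a hypothetical name.

```python
import math


def delta_variance(d_beta: float, d_lam: float, var_beta: float,
                   var_lam: float, cov: float) -> float:
    """First-order variance of g(beta, lambda) from its partial derivatives."""
    return d_beta ** 2 * var_beta + d_lam ** 2 * var_lam + 2 * d_beta * d_lam * cov


lam, beta, T = 0.4239, 0.6142, 620.0
var_lam, var_beta, cov = 0.13519969, 0.017105343, -0.046614609
tb = T ** (beta - 1)          # T^(beta - 1)
ln_t = math.log(T)

# lambda_c(T) = lambda * T^(beta - 1)
var_lc = delta_variance(lam * tb * ln_t, tb, var_beta, var_lam, cov)
# lambda_i(T) = lambda * beta * T^(beta - 1)
var_li = delta_variance(lam * tb + lam * beta * tb * ln_t, beta * tb,
                        var_beta, var_lam, cov)
print(var_lc, var_li)
```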

The cumulative and instantaneous failure intensities at [math]\displaystyle{ T=620\,\! }[/math] hours are:

- [math]\displaystyle{ \begin{align} {{\lambda }_{c}}(T)= & 0.03548 \\ {{\lambda }_{i}}(T)= & 0.02179 \end{align}\,\! }[/math]

So, at the 90% confidence level and for [math]\displaystyle{ T=620\,\! }[/math] hours, the Fisher Matrix confidence bounds for the cumulative failure intensity are:

- [math]\displaystyle{ \begin{align} {{[{{\lambda }_{c}}(T)]}_{L}}= & 0.02499 \\ {{[{{\lambda }_{c}}(T)]}_{U}}= & 0.05039 \end{align}\,\! }[/math]

The confidence bounds for the instantaneous failure intensity are:

- [math]\displaystyle{ \begin{align} {{[{{\lambda }_{i}}(T)]}_{L}}= & 0.01327 \\ {{[{{\lambda }_{i}}(T)]}_{U}}= & 0.03579 \end{align}\,\! }[/math]
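The printed bounds are consistent with the common log-transformed Fisher Matrix form, estimate·exp(±z·sqrt(Var)/estimate), where z is the standard normal quantile for the two-sided confidence level. This sketch assumes that form, because the exact formula is given in the Crow-AMSAA Confidence Bounds chapter rather than here. It uses Python's `statistics.NormalDist` for z. The function name is illustrative.

```python
import math
from statistics import NormalDist


def log_fisher_bounds(estimate: float, variance: float,
                      confidence: float = 0.90) -> tuple:
    """Two-sided bounds assuming ln(estimate) is approximately normal."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)   # about 1.645 for 90%
    factor = math.exp(z * math.sqrt(variance) / estimate)
    return estimate / factor, estimate * factor


lc_bounds = log_fisher_bounds(0.03548, 0.00005721408)   # cumulative intensity
li_bounds = log_fisher_bounds(0.02179, 0.0000431393)    # instantaneous intensity
print(lc_bounds, li_bounds)
```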

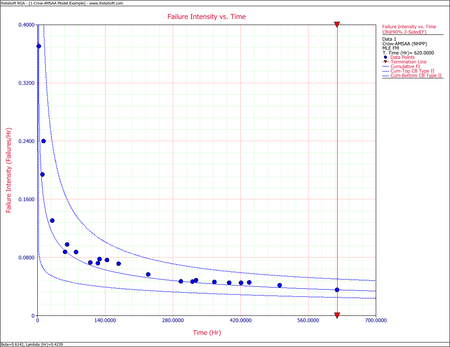

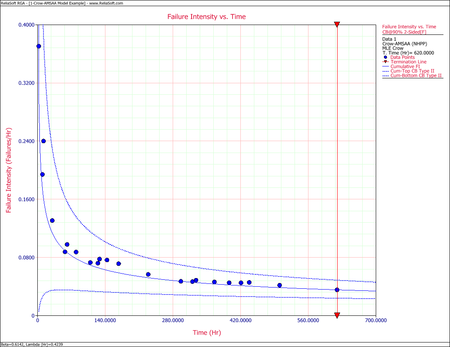

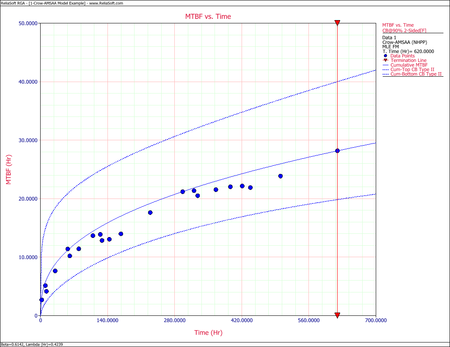

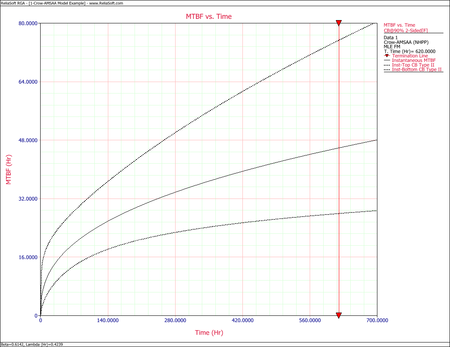

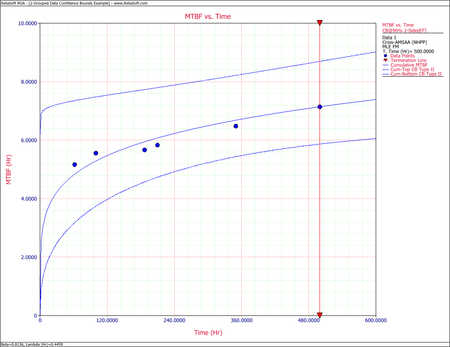

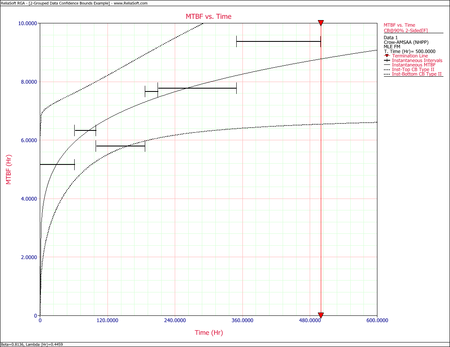

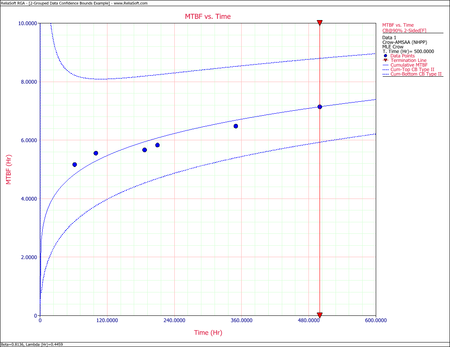

The following figures display plots of the Fisher Matrix confidence bounds for the cumulative and instantaneous failure intensity, respectively.

Crow Bounds

Given that the data is failure terminated, the Crow confidence bounds for the cumulative failure intensity at the 90% confidence level and for [math]\displaystyle{ T=620\,\! }[/math] hours are:

- [math]\displaystyle{ \begin{align} {{[{{\lambda }_{c}}(T)]}_{L}} = & \frac{\chi _{\tfrac{\alpha }{2},2N}^{2}}{2\cdot T} \\ = & \frac{29.787476}{2\cdot 620} \\ = & 0.02402 \\ {{[{{\lambda }_{c}}(T)]}_{U}} = & \frac{\chi _{1-\tfrac{\alpha }{2},2N}^{2}}{2\cdot T} \\ = & \frac{60.48089}{2\cdot 620} \\ = & 0.048775 \end{align}\,\! }[/math]
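The chi-square values here have 2N = 44 degrees of freedom, with α = 0.10. This lets the sketch stay in the standard library: for an even number of degrees of freedom 2k, the chi-square CDF has the closed form 1 - e^(-x/2)·Σ_{j<k} (x/2)^j/j!, and the quantile can be found by bisection. The function names are hypothetical. The bound formulas are the failure terminated ones shown above. A statistics package quantile function would serve equally well.

```python
import math


def chi2_cdf_even_df(x: float, df: int) -> float:
    """Chi-square CDF for even df = 2k: 1 - exp(-x/2) * sum_{j<k} (x/2)^j / j!."""
    if df <= 0 or df % 2:
        raise ValueError("this sketch only handles positive even df")
    if x <= 0:
        return 0.0
    half = x / 2.0
    term, total = 1.0, 1.0
    for j in range(1, df // 2):
        term *= half / j          # (x/2)^j / j!
        total += term
    return 1.0 - math.exp(-half) * total


def chi2_quantile_even_df(p: float, df: int) -> float:
    """Invert chi2_cdf_even_df by bisection."""
    lo, hi = 0.0, float(df)
    while chi2_cdf_even_df(hi, df) < p:   # widen until the quantile is bracketed
        hi *= 2.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if chi2_cdf_even_df(mid, df) < p:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def crow_cumulative_bounds_failure_terminated(n: int, t: float,
                                              confidence: float = 0.90):
    """Crow bounds on the cumulative failure intensity (failure terminated)."""
    alpha = 1.0 - confidence
    lower = chi2_quantile_even_df(alpha / 2, 2 * n) / (2 * t)
    upper = chi2_quantile_even_df(1 - alpha / 2, 2 * n) / (2 * t)
    return lower, upper


lower, upper = crow_cumulative_bounds_failure_terminated(22, 620.0)
print(f"{lower:.5f} {upper:.6f}")
```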

The Crow confidence bounds for the instantaneous failure intensity at the 90% confidence level and for [math]\displaystyle{ T=620\,\! }[/math] hours are calculated by first estimating the bounds on the instantaneous MTBF and then taking their inverses, as shown below. Details on the confidence bounds for instantaneous MTBF are presented in the Crow-AMSAA Confidence Bounds chapter.

- [math]\displaystyle{ \begin{align} {{[{{\lambda }_{i}}(T)]}_{L}} = & \frac{1}{{{[MTB{{F}_{i}}]}_{U}}} \\ = & \frac{1}{MTB{{F}_{i}}\cdot U} \\ = & 0.01179 \end{align}\,\! }[/math]

- [math]\displaystyle{ \begin{align} {{[{{\lambda }_{i}}(T)]}_{U}}= & \frac{1}{{{[MTB{{F}_{i}}]}_{L}}} \\ = & \frac{1}{MTB{{F}_{i}}\cdot L} \\ = & 0.03253 \end{align}\,\! }[/math]

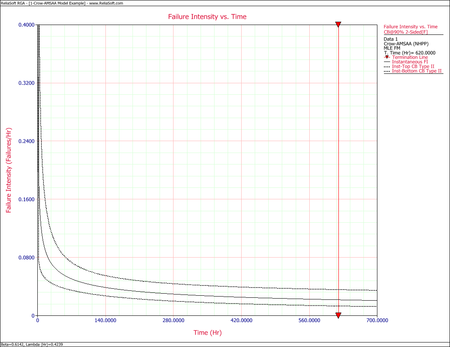

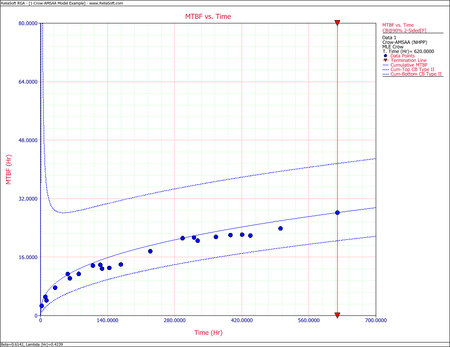

The following figures display plots of the Crow confidence bounds for the cumulative and instantaneous failure intensity, respectively.

Example - Confidence Bounds on MTBF

Calculate the confidence bounds on the cumulative and instantaneous MTBF for the data from the example given above.

Solution

Fisher Matrix Bounds

From the previous example:

- [math]\displaystyle{ \begin{align} Var(\hat{\lambda }) = & 0.13519969 \\ Var(\hat{\beta }) = & 0.017105343 \\ Cov(\hat{\beta },\hat{\lambda }) = & -0.046614609 \end{align}\,\! }[/math]

And for [math]\displaystyle{ T=620\,\! }[/math] hours, the partial derivatives of the cumulative and instantaneous MTBF are:

- [math]\displaystyle{ \begin{align} \frac{\partial {{m}_{c}}(T)}{\partial \beta }= & -\frac{1}{\hat{\lambda }}{{T}^{1-\hat{\beta }}}\ln T \\ = & -\frac{1}{0.4239}{{620}^{0.3858}}\ln 620 \\ = & -181.23135 \\ \frac{\partial {{m}_{c}}(T)}{\partial \lambda } = & -\frac{1}{{{\hat{\lambda }}^{2}}}{{T}^{1-\hat{\beta }}} \\ = & -\frac{1}{{{0.4239}^{2}}}{{620}^{0.3858}} \\ = & -66.493299 \\ \frac{\partial {{m}_{i}}(T)}{\partial \beta } = & -\frac{1}{\hat{\lambda }{{\hat{\beta }}^{2}}}{{T}^{1-\hat{\beta }}}-\frac{1}{\hat{\lambda }\hat{\beta }}{{T}^{1-\hat{\beta }}}\ln T \\ = & -\frac{1}{0.4239\cdot {{0.6142}^{2}}}{{620}^{0.3858}}-\frac{1}{0.4239\cdot 0.6142}{{620}^{0.3858}}\ln 620 \\ = & -369.78634 \\ \frac{\partial {{m}_{i}}(T)}{\partial \lambda } = & -\frac{1}{{{\hat{\lambda }}^{2}}\hat{\beta }}{{T}^{1-\hat{\beta }}} \\ = & -\frac{1}{{{0.4239}^{2}}\cdot 0.6142}\cdot {{620}^{0.3858}} \\ = & -108.26001 \end{align}\,\! }[/math]
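The four derivatives follow from m_c(T) = T^(1-β)/λ and m_i(T) = T^(1-β)/(λβ). The sketch below evaluates them with the rounded estimates from the example. The variable names are illustrative.

```python
import math

lam, beta, T = 0.4239, 0.6142, 620.0   # rounded estimates from the example
ln_t = math.log(T)
t_pow = T ** (1 - beta)                # T^(1 - beta)

# m_c(T) = T^(1 - beta) / lambda
dmc_dbeta = -t_pow * ln_t / lam
dmc_dlam = -t_pow / lam ** 2
# m_i(T) = T^(1 - beta) / (lambda * beta)
dmi_dbeta = -t_pow / (lam * beta ** 2) - t_pow * ln_t / (lam * beta)
dmi_dlam = -t_pow / (lam ** 2 * beta)
print(dmc_dbeta, dmc_dlam, dmi_dbeta, dmi_dlam)
```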

Therefore, the variances become: