|

|


| {{Template:Doebook|10}} | | {{Template:Doebook|9}} |

| Reliability analysis is commonly thought of as an approach to model failures of existing products. The usual reliability analysis involves characterization of failures of the products using distributions such as exponential, Weibull and lognormal. Based on the fitted distribution, failures are mitigated, or warranty returns are predicted, or maintenance actions are planned. However, reliability analysis can also be used as a powerful tool to design robust products that operate with minimal failures, by adopting the methodology of Design for Reliability (DFR). In DFR, reliability analysis is carried out in conjunction with physics of failure and experiment design techniques. Under this approach, Design of Experiments (DOE) uses life data to "build" reliability into the products, not just quantify the existing reliability. Such an approach, if properly implemented, can result in significant cost savings, especially in terms of fewer warranty returns or repair and maintenance actions. Although DOE techniques can be used to improve product reliability and also make this reliability robust to noise factors, the discussion in this chapter is focused on reliability improvement.

| | This chapter discusses factorial designs that are commonly used in designed experiments, but are not necessarily limited to two level factors. These designs are the [[Highly_Fractional_Factorial_Designs#Plackett-Burman_Designs|Plackett-Burman designs]] and [[Highly_Fractional_Factorial_Designs#Taguchi.27s_Orthogonal_Arrays|Taguchi's orthogonal arrays]]. |

|

| |

|

| =Reliability DOE Analysis= | | ==Plackett-Burman Designs== |

| Reliability DOE (R-DOE) analysis is fairly similar to the analysis of other designed experiments except that the response is the life of the product in the respective units (e.g., for an automobile component the units of life may be miles, for a mechanical component this may be cycles, and for a pharmaceutical product this may be months or years). However, two important differences make R-DOE analysis unique. The first is that life data of most products are typically well modeled by the lognormal, Weibull or exponential distribution, and usually do not follow the normal distribution. Traditional DOE techniques assume that response values at any treatment level follow the normal distribution and, therefore, that the error terms, <math>\epsilon \,\!</math>, are normally and independently distributed. This assumption may not be valid for the response data used in most R-DOE analyses. The second is that the life data obtained may be either complete or censored, and when censored observations are present the standard regression techniques applicable to the response data in traditional DOEs can no longer be used.

| |

|

| |

|

| Stresses affecting the life of the product may also be investigated using R-DOE analysis. In this case, the primary purpose of any R-DOE analysis is to identify which of the investigated stresses affect the life of the product (by investigating if change in the level of any stress leads to a significant change in the life of the product). Once the important stresses affecting the life of the product have been identified, detailed analyses can be carried out using ReliaSoft's ALTA software. ALTA includes a number of life-stress relationships (LSRs) to model the relation between life and the stress affecting the life of the product.

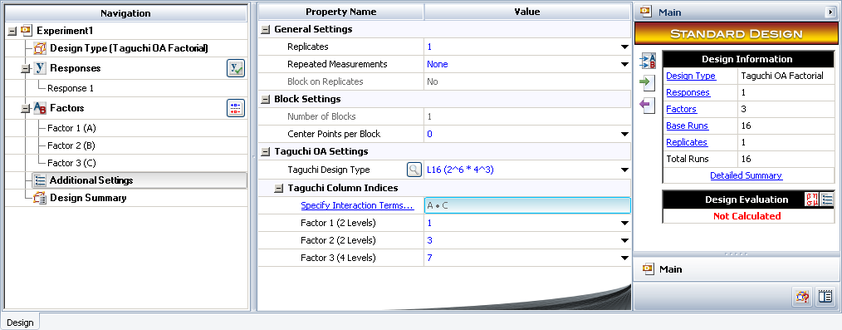

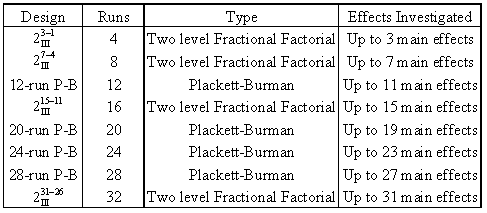

| | It was mentioned in [[Two_Level_Factorial_Experiments| Two Level Factorial Experiments]] that resolution III designs can be used as highly fractional designs to investigate <math>k\,\!</math> main effects using <math>k+1\,\!</math> runs (provided that two factor and higher order interaction effects are not important to the experimenter). A limitation of these designs is that the number of runs must be a power of 2. The valid numbers of runs are 4, 8, 16, 32, etc. Therefore, the next design after the 2 <math>_{\text{III}}^{3-1}\,\!</math> design with 4 runs is the 2 <math>_{\text{III}}^{7-4}\,\!</math> design with 8 runs, and the design after this is the 2 <math>_{\text{III}}^{15-11}\,\!</math> design with 16 runs and so on, as shown in the next table. |

|

| |

|

| =R-DOE Analysis of Lognormally Distributed Data=

| |

| Assume that the life, <math>T\,\!</math>, for a certain product has been found to be lognormally distributed. The probability density function for the lognormal distribution is:

| |

|

| |

|

| ::<math>f(T)=\frac{1}{T{\sigma }'\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln (T)-{\mu }'}{{{\sigma }'}} \right)}^{2}}}}\,\!</math> | | [[Image:doet8.1.png|center|487px|Highly fractional designs to investigate main effects.]] |

|

| |

|

| where <math>{\mu }'\,\!</math> represents the mean of the natural logarithm of the times-to-failure and <math>{\sigma }'\,\!</math> represents the standard deviation of the natural logarithms of the times-to-failure [LDAReference]. If the analyst wants to investigate a single two level factor that may affect the life, <math>T\,\!</math>, then the following model may be used:

| |

|

| |

|

| ::<math>{{T}_{i}}={{\mu }_{i}}+{{\xi }_{i}}\,\!</math>

| | Plackett-Burman designs solve this problem. These designs were proposed by R. L. Plackett and J. P. Burman (1946). They allow the estimation of <math>k\,\!</math> main effects using <math>k+1\,\!</math> runs. In these designs, the number of runs is a multiple of 4 (i.e., 4, 8, 12, 16, 20 and so on). When the number of runs is a power of 2, the designs correspond to the resolution III two level fractional factorial designs. Although Plackett-Burman designs are all two level orthogonal designs, the alias structure for these designs is complicated when the number of runs is not a power of 2. |

|

| |

|

| Where:

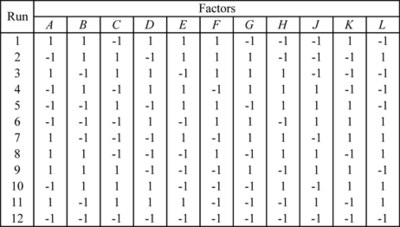

| | As an example, consider the 12-run Plackett-Burman design shown in the figure below. |

| *<math>{{T}_{i}}\,\!</math> represents the times-to-failure at the <math>i\,\!</math> th treatment level of the factor

| |

| *<math>{{\mu }_{i}}\,\!</math> represents the mean value of <math>{{T}_{i}}\,\!</math> for the <math>i\,\!</math> th treatment

| |

| *<math>{{\xi }_{i}}\,\!</math> is the random error term

| |

| *The subscript <math>i\,\!</math> represents the treatment level of the factor with <math>i=1,2\,\!</math> for a two level factor

| |

| <br>
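The run-size rules for the two families of designs can be compared with a short sketch. The function names below are illustrative and are not part of any DOE software; the only assumptions are the rules stated in the text: a resolution III two level fractional factorial needs a power of 2 that is at least <math>k+1\,\!</math>, while a Plackett-Burman design needs a multiple of 4 that is at least <math>k+1\,\!</math>.

```python
def resolution_iii_runs(k: int) -> int:
    """Smallest power of 2 (at least 4) that provides k + 1 runs."""
    runs = 4
    while runs < k + 1:
        runs *= 2
    return runs


def plackett_burman_runs(k: int) -> int:
    """Smallest multiple of 4 that provides k + 1 runs."""
    return 4 * ((k + 4) // 4)  # integer form of 4 * ceil((k + 1) / 4)


if __name__ == "__main__":
    print(" k  2^(k-p) runs  Plackett-Burman runs")
    for k in (3, 7, 9, 11, 15, 19):
        print(f"{k:2d}  {resolution_iii_runs(k):12d}  {plackett_burman_runs(k):20d}")
```

For 9 to 11 factors the fractional factorial route needs 16 runs while the Plackett-Burman route needs 12, which is the gap these designs are meant to close.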

| |

| The model of the equation given above is analogous to the ANOVA model, <math>{{Y}_{i}}={{\mu }_{i}}+{{\epsilon }_{i}}\,\!</math>, used in [[Two_Level_Factorial_Experiments| Two Level Factorial Experiments]] for traditional DOE analyses. Note, however, that the random error term, <math>{{\xi }_{i}}\,\!</math>, is not normally distributed here because the response, <math>T\,\!</math>, is lognormally distributed. It is known that the logarithmic value of a lognormally distributed random variable follows the normal distribution. Therefore, if the logarithmic transformation of <math>T\,\!</math>, <math>\ln (T)\,\!</math>, is used, the model will be identical to the ANOVA model, <math>{{Y}_{i}}={{\mu }_{i}}+{{\epsilon }_{i}}\,\!</math>, used in [[Two_Level_Factorial_Experiments| Two Level Factorial Experiments]]. Thus, using the logarithmic failure times, the model can be written as:

| |

|

| |

|

| ::<math>\ln ({{T}_{i}})=\mu _{i}^{\prime }+{{\epsilon }_{i}}\,\!</math>

| |

|

| |

|

| Where:

| | [[Image:doe8.1.png|center|400px|12-run Plackett-Burman design.]] |

| *<math>\ln ({{T}_{i}})\,\!</math> represents the logarithmic times-to-failure at the <math>i\,\!</math> th treatment

| |

| *<math>\mu _{i}^{\prime }\,\!</math> represents the mean of the natural logarithm of the times-to-failure at the <math>i\,\!</math> th treatment

| |

| *<math>{\sigma }'\,\!</math> represents the standard deviation of the natural logarithms of the times-to-failure

| |

|

| |

|

| The random error term, <math>{{\epsilon }_{i}}\,\!</math>, is normally distributed because the response, <math>\ln ({{T}_{i}})\,\!</math>, is normally distributed. Since the model of the equation given above is identical to the ANOVA model used in traditional DOE analysis, regression techniques can be applied here and the R-DOE analysis can be carried out in the same way as the traditional DOE analyses. Recall from [[Highly_Fractional_Factorial_Designs| Highly Fractional Factorial Designs]] that if the factor(s) affecting the response has only two levels, then the notation of the regression model can be applied to the ANOVA model. Therefore, the model given above can be written using a single indicator variable, <math>{{x}_{1}}\,\!</math>, to represent the two level factor as:

| |

|

| |

|

| ::<math>\ln ({{T}_{i}})={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\epsilon }_{i}}\,\!</math>

| | If 11 main effects are to be estimated using this design, then each of these main effects is partially aliased with all other two factor interactions not containing that main effect. For example, the main effect <math>A\,\!</math> is partially aliased with all two factor interactions except <math>AB\,\!</math>, <math>AC\,\!</math>, <math>AD\,\!</math>, <math>AE\,\!</math>, <math>AF\,\!</math>, <math>AG\,\!</math>, <math>AH\,\!</math>, <math>AJ\,\!</math>, <math>AK\,\!</math> and <math>AL\,\!</math>. There are 45 such two factor interactions that are aliased with <math>A\,\!</math>. |

|

| |

|

| where <math>{{\beta }_{0}}\,\!</math> is the intercept term and <math>{{\beta }_{1}}\,\!</math> is the effect coefficient for the investigated factor. Equating the ANOVA form of the model for <math>\ln ({{T}_{i}})\,\!</math> with its regression form gives:

| |

|

| |

| ::<math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}\,\!</math>

| |

|

| |

| The mean of the natural logarithm of the times-to-failure at any factor level, <math>\mu _{i}^{\prime }\,\!</math>, is referred to as the life characteristic because it represents a characteristic point of the underlying life distribution. The life characteristic used in the R-DOE analysis will change based on the underlying distribution assumed for the life data. If the analyst wants to investigate the effect of two factors (each at two levels) on the life of the product, then the life characteristic equation can be easily expanded as follows:

| |

|

| |

| ::<math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}\,\!</math>

| |

|

| |

| where <math>{{\beta }_{2}}\,\!</math> is the effect coefficient for the second factor and <math>{{x}_{i2}}\,\!</math> is the indicator variable representing the second factor. If the interaction effect is also to be investigated, then the following equation can be used:

| |

|

| |

| ::<math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}+{{\beta }_{12}}{{x}_{i1}}{{x}_{i2}}\,\!</math>

| |

|

| |

| In general the model to investigate a given number of factors can be expressed as:

| |

|

| |

| ::<math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}+{{\beta }_{12}}{{x}_{i1}}{{x}_{i2}}+...\,\!</math>

| |

|

| |

| Based on the model equations mentioned thus far, the analyst can easily conduct an R-DOE analysis for lognormally distributed life data using standard regression techniques. However, this is no longer true once the data also include censored observations. In the case of censored data, the analysis has to be carried out using maximum likelihood estimation (MLE) techniques.
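For complete (uncensored) data, the regression form of the life characteristic can be fitted by ordinary least squares on the logarithms of the failure times. The following is a minimal sketch under stated assumptions: the function name `fit_log_life` is illustrative, and the four failure times are hypothetical values for a single replicate of a two factor, two level design with coded levels of -1 and +1.

```python
import numpy as np


def fit_log_life(x1, x2, t):
    """Least squares fit of ln(t) = b0 + b1*x1 + b2*x2 for complete data."""
    X = np.column_stack([np.ones(len(t)), x1, x2])  # intercept + two factors
    coef, *_ = np.linalg.lstsq(X, np.log(t), rcond=None)
    return coef


# Coded factor levels for the four treatment combinations
x1 = np.array([-1.0, 1.0, -1.0, 1.0])
x2 = np.array([-1.0, -1.0, 1.0, 1.0])
# Hypothetical times-to-failure (not taken from the text)
t = np.array([100.0, 300.0, 80.0, 150.0])

b0, b1, b2 = fit_log_life(x1, x2, t)
print("intercept:", b0, "factor 1:", b1, "factor 2:", b2)
```

Because the design columns are orthogonal, each coefficient reduces to a simple contrast of the log failure times divided by the number of runs.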

| |

|

| |

| ===Maximum Likelihood Estimation for the Lognormal Distribution===

| |

| The maximum likelihood estimation method can be used to estimate parameters in R-DOE analyses when censored data are present. The likelihood function is calculated for each observed time to failure, <math>{{t}_{i}}\,\!</math>, and the parameters of the model are obtained by maximizing the log-likelihood function. The likelihood function for complete data following the lognormal distribution is given as:

| |

|

| |

|

| ::<math>\begin{align} | | ::<math>\begin{align} |

| {{L}_{failures}}= & \underset{i=1}{\overset{{{F}_{e}}}{\mathop \prod }}\,f({{t}_{i}},\mu _{i}^{\prime }) \\

| | & A= & A-\frac{1}{3}BC-\frac{1}{3}BD+\frac{1}{3}CD-\frac{1}{3}BE-\frac{1}{3}CE+\frac{1}{3}DE+... \\ |

| = & \underset{i=1}{\overset{{{F}_{e}}}{\mathop \prod }}\,\left[ \frac{1}{{{t}_{i}}{\sigma }'\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-\mu _{i}^{\prime }}{{{\sigma }'}} \right)}^{2}}}} \right] \\

| | & & ...+\frac{1}{3}EL-\frac{1}{3}FL-\frac{1}{3}GL+\frac{1}{3}HL+\frac{1}{3}JL-\frac{1}{3}KL |

| = & \underset{i=1}{\overset{{{F}_{e}}}{\mathop \prod }}\,\left[ \frac{1}{{{t}_{i}}{\sigma }'\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}+...)}{{{\sigma }'}} \right)}^{2}}}} \right]

| |

| \end{align}\,\!</math> | | \end{align}\,\!</math> |

|

| |

|

| Where:

| |

| *<math>{{F}_{e}}\,\!</math> is the total number of observed times-to-failure

| |

| *<math>\mu _{i}^{\prime }\,\!</math> is the life characteristic and has been substituted based on the model used to investigate a given number of factors

| |

| *<math>{{t}_{i}}\,\!</math> is the time of the <math>i\,\!</math> th failure
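The partial aliasing in the 12-run Plackett-Burman design can be checked numerically. The sketch below assumes the commonly published cyclic generator row (+ + − + + + − − − + −) plus a final row of all minus signs; the run order and column order may differ from the figure in the text, so the signs of individual alias coefficients are not guaranteed to match the expression shown, only their magnitudes. The function names are illustrative.

```python
import numpy as np
from itertools import combinations

# Commonly published generator row for the 12-run Plackett-Burman design
GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """Build the 12 x 11 design: 11 cyclic shifts plus a row of -1."""
    rows = [np.roll(GENERATOR, shift) for shift in range(11)]
    rows.append(np.full(11, -1))
    return np.array(rows)


def alias_coefficients(design, col):
    """Alias coefficient of main effect `col` with each two factor
    interaction that does not contain it."""
    n_runs, n_cols = design.shape
    coeffs = {}
    for j, k in combinations(range(n_cols), 2):
        if col in (j, k):
            continue
        interaction = design[:, j] * design[:, k]
        coeffs[(j, k)] = design[:, col] @ interaction / n_runs
    return coeffs


design = plackett_burman_12()
aliases = alias_coefficients(design, col=0)
print("orthogonal:", np.array_equal(design.T @ design, 12 * np.eye(11)))
print("number of aliased interactions:", len(aliases))
print("distinct magnitudes:", {round(abs(v), 4) for v in aliases.values()})
```

Each main effect column is uncorrelated with every other main effect column, but carries one third of each of the 45 two factor interactions that do not involve it.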

| |

|

| |

|

| For right censored data the likelihood function is:[LDAReference]

| | Due to the complex aliasing, Plackett-Burman designs involving a large number of factors should be used with care. Some of the Plackett-Burman designs available in the DOE folio are included in [[Plackett-Burman_Designs|Appendix C]]. |

|

| |

|

| ::<math>{{L}_{suspensions}}=\underset{i=1}{\overset{{{S}_{e}}}{\mathop \prod }}\,\left[ 1-\frac{1}{\sqrt{2\pi }}\int_{-\infty }^{\left( \tfrac{\ln ({{t}_{i}})-\mu _{i}^{\prime }}{{{\sigma }'}} \right)}{{e}^{-\tfrac{{{g}^{2}}}{2}}}dg \right]\,\!</math>

| | ==Taguchi's Orthogonal Arrays== |

|

| |

|

| Where:

| | Taguchi's orthogonal arrays are highly fractional orthogonal designs proposed by Dr. Genichi Taguchi, a Japanese industrialist. These designs can be used to estimate main effects using only a few experimental runs. These designs are not only applicable to two level factorial experiments; they can also investigate main effects when factors have more than two levels. Designs are also available to investigate main effects for certain mixed level experiments where the factors included do not have the same number of levels. As in the case of Plackett-Burman designs, these designs require the experimenter to assume that interaction effects are unimportant and can be ignored. A few of Taguchi's orthogonal arrays available in a DOE folio are included in [[Taguchi_Orthogonal_Arrays|Appendix D]]. |

| *<math>{{S}_{e}}\,\!</math> is the total number of observed suspensions

| |

| *<math>{{t}_{i}}\,\!</math> is the time of <math>i\,\!</math> th suspension

| |

|

| |

|

| For interval data the likelihood function is:[LDAReference] | | Some of Taguchi's arrays, with runs that are a power of 2, are similar to the corresponding 2 <math>_{\text{III}}^{k-f}\,\!</math> designs. For example, consider the L4 array shown in figure (a) below. The L4 array is denoted as L4(2^3) in a DOE folio. L4 means the array requires 4 runs. 2^3 indicates that the design estimates up to three main effects at 2 levels each. The L4 array can be used to estimate three main effects using four runs provided that the two factor and three factor interactions can be ignored. Figure (b) below shows the 2 <math>_{\text{III}}^{3-1}\,\!</math> design (defining relation <math>I=-ABC\,\!</math> ) which also requires four runs and can be used to estimate up to three main effects, assuming that all two factor and three factor interactions are unimportant. A comparison between the two designs shows that the columns in the two designs are the same except for the arrangement of the columns. In figure (c) below, columns of the L4 array are marked with the name of the effect from the corresponding column of the 2 <math>_{\text{III}}^{3-1}\,\!</math> design. |

|

| |

|

| ::<math>{{L}_{interval}}=\underset{i=1}{\overset{FI}{\mathop \prod }}\,\left[ \frac{1}{\sqrt{2\pi }}\int_{-\infty }^{\left( \tfrac{\ln (t_{i}^{2})-\mu _{i}^{\prime }}{{{\sigma }'}} \right)}{{e}^{-\tfrac{{{g}^{2}}}{2}}}dg-\frac{1}{\sqrt{2\pi }}\int_{-\infty }^{\left( \tfrac{\ln (t_{i}^{1})-\mu _{i}^{\prime }}{{{\sigma }'}} \right)}{{e}^{-\tfrac{{{g}^{2}}}{2}}}dg \right]\,\!</math>

| |

|

| |

|

| :where: | | [[Image:doe8.3.png|center|400px|Taguchi's L4 orthogonal array - Figure (a) shows the design, (b) shows the <math>2_{III}^{3-1} \,\!</math> design with the defining relation <math>I=-ABC \,\!</math> and (c) marks the columns of the L4 array with the corresponding columns of the design in (b).]] |

| ::• <math>FI\,\!</math> is the total number of interval data

| |

| ::• <math>t_{i}^{1}\,\!</math> is the beginning time of the <math>i\,\!</math> th interval

| |

| ::• and <math>t_{i}^{2}\,\!</math> is the end time of the <math>i\,\!</math> th interval

| |

| <br>
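The correspondence between the L4 array and the 2 <math>_{\text{III}}^{3-1}\,\!</math> design with <math>I=-ABC\,\!</math> can be verified directly. The sketch assumes the commonly tabulated L4 layout, with rows (1,1,1), (1,2,2), (2,1,2), (2,2,1), and codes level 1 as -1 and level 2 as +1; the assignment of array columns to the letters A, B and C is illustrative and may be arranged differently in the figure.

```python
import numpy as np

# Commonly tabulated L4(2^3) array, levels written as 1 and 2
L4 = np.array([
    [1, 1, 1],
    [1, 2, 2],
    [2, 1, 2],
    [2, 2, 1],
])


def to_coded(array):
    """Map level 1 -> -1 and level 2 -> +1."""
    return 2 * array - 3


def defining_word_sign(coded):
    """Return the constant value of A*B*C over all runs, or None if the
    product is not constant (i.e., ABC is not a defining word)."""
    product = coded[:, 0] * coded[:, 1] * coded[:, 2]
    return int(product[0]) if np.all(product == product[0]) else None


coded = to_coded(L4)
A, B, C = coded.T
print("third column equals -A*B:", np.array_equal(C, -A * B))
print("sign of ABC:", defining_word_sign(coded))
```

With this coding the product of the three columns is -1 in every run, so the identity column equals -ABC, which is the defining relation quoted for the fractional factorial design.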

| |

| The complete likelihood function when all types of data (complete, right censored and interval) are present is:

| |

|

| |

|

| ::<math>L({\sigma }',{{\beta }_{0}},{{\beta }_{1}}...)={{L}_{failures}}\cdot {{L}_{suspensions}}\cdot {{L}_{interval}}\,\!</math>

| |

|

| |

|

| | Similarly, consider the L8(2^7) array shown in figure (a) below. This design can be used to estimate up to seven main effects using eight runs. This array is again similar to the 2 <math>_{\text{III}}^{7-4}\,\!</math> design shown in figure (b) below, except that the aliasing between the columns of the two designs differs in sign for some of the columns (see figure (c)). |

|

| |

|

| Then the log-likelihood function is:

| |

|

| |

|

| ::<math>\Lambda ({\sigma }',{{\beta }_{0}},{{\beta }_{1}}...)=\ln (L)\,\!</math> | | [[Image:doe8.4.png|center|400px|Taguchi's L8 orthogonal array - Figure (a) shows the design, (b) shows the <math>2_{III}^{7-4} \,\!</math> design with the defining relation <math>I=ABD=ACE=BCF=ABCG \,\!</math> and (c) marks the columns of the L8 array with the corresponding columns of the design in (b).]] |
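The three likelihood contributions can be combined in a single log-likelihood routine. The following is a minimal sketch that assumes SciPy is available and uses the standard normal functions in `scipy.stats.norm`; the function name, argument names and argument layout are illustrative and do not describe how any particular software package implements the calculation.

```python
import numpy as np
from scipy.stats import norm


def lognormal_loglik(sigma, beta, X_f, t_f,
                     X_s=None, t_s=None,
                     X_i=None, t_lo=None, t_hi=None):
    """Log-likelihood for lognormal life data with a linear life characteristic.

    sigma : standard deviation of ln(T)
    beta  : coefficient vector (intercept first)
    X_f, t_f        : design rows and times for exact failures
    X_s, t_s        : design rows and times for right censored units
    X_i, t_lo, t_hi : design rows and interval endpoints for interval data
    """
    # Exact failures: ln f(t) = ln(phi(z)) - ln(t) - ln(sigma)
    z = (np.log(t_f) - X_f @ beta) / sigma
    loglik = np.sum(norm.logpdf(z) - np.log(t_f) - np.log(sigma))

    # Right censored units: ln[1 - Phi(z)]
    if t_s is not None:
        z = (np.log(t_s) - X_s @ beta) / sigma
        loglik += np.sum(norm.logsf(z))

    # Interval data: ln[Phi(z_hi) - Phi(z_lo)]
    if t_lo is not None:
        mu = X_i @ beta
        z_hi = (np.log(t_hi) - mu) / sigma
        z_lo = (np.log(t_lo) - mu) / sigma
        loglik += np.sum(np.log(norm.cdf(z_hi) - norm.cdf(z_lo)))

    return loglik
```

The negative of this function can be handed to a general purpose numerical optimizer to obtain the estimates when censored observations rule out a closed form solution.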

|

| |

|

|

| |

|

| The MLE estimates are obtained by solving for parameters <math>({\sigma }',{{\beta }_{0}},{{\beta }_{1}}...)\,\!</math> so that: | | The L8 array can also be used as a full factorial three factor experiment design in the same way as a <math>2^{3}\,\!</math> design. However, the orthogonal arrays should be used carefully in such cases, taking into consideration the alias relationships between the columns of the array. For the L8 array, figure (c) above shows that the third column of the array is the product of the first two columns. If the L8 array is used as a two level full factorial design in the place of a 2 <math>^{3}\,\!</math> design, and if the main effects are assigned to the first three columns, the main effect assigned to the third column will be aliased with the two factor interaction of the first two main effects. The proper assignment of the main effects to the columns of the L8 array requires the experimenter to assign the three main effects to the first, second and fourth columns. These columns are sometimes referred to as the ''preferred columns'' for the L8 array. To know the preferred columns for any of the orthogonal arrays, the alias relationships between the array columns must be known. The alias relations between the main effects and two factor interactions of the columns for the L8 array are shown in the next table. |

|

| |

|

| ::<math>\begin{align}

| |

| \frac{\partial \Lambda }{\partial {\sigma }'}= & 0 \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{0}}}= & 0 \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{1}}}= & 0 \\

| |

| & ...

| |

| \end{align}\,\!</math>

| |

|

| |

|

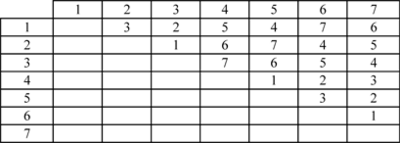

| | [[Image:doet8.2.png|center|400px|Alias relations for the L8 array.]] |
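The alias relations for the L8 array can also be generated instead of being read from a table. The sketch below assumes the commonly tabulated L8 layout, in which columns 1, 2 and 4 are the basis columns and every other column is the modulo 2 sum of the basis columns selected by the binary digits of its column number. Under that assumption the interaction of columns <math>i\,\!</math> and <math>j\,\!</math> falls in the column whose number is the bitwise exclusive OR of <math>i\,\!</math> and <math>j\,\!</math>. The function names are illustrative.

```python
import numpy as np


def l8_array():
    """Build the L8(2^7) array with levels 1 and 2 (assumed standard layout)."""
    runs = np.arange(8)
    a = (runs >> 2) & 1  # column 1: 1 1 1 1 2 2 2 2
    b = (runs >> 1) & 1  # column 2: 1 1 2 2 1 1 2 2
    c = runs & 1         # column 4: 1 2 1 2 1 2 1 2
    columns = []
    for j in range(1, 8):
        bits = a * (j & 1) + b * ((j >> 1) & 1) + c * ((j >> 2) & 1)
        columns.append(bits % 2 + 1)
    return np.column_stack(columns)


def interaction_column(i, j):
    """Column number (1-based) that carries the interaction of columns i and j."""
    return i ^ j


L8 = l8_array()
print(L8)
print("interaction of columns 1 and 2 is in column", interaction_column(1, 2))
print("interaction of columns 1 and 4 is in column", interaction_column(1, 4))
print("interaction of columns 2 and 4 is in column", interaction_column(2, 4))
```

Assigning three factors to columns 1, 2 and 4 places their two factor interactions in columns 3, 5 and 6 and the three factor interaction in column 7, so no main effect shares a column with an interaction of the other two.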

|

| |

|

| Once the estimates are obtained, the significance of any parameter, <math>{{\theta }_{i}}\,\!</math>, can be assessed using the likelihood ratio test.

| |

|

| |

|

| ===Hypothesis Tests===

| | The cell value in any (<math>i,j\,\!</math>) cell of the table gives the column number of the two factor interaction for the <math>i\,\!</math>th row and <math>j\,\!</math>th column. For example, to know which column is confounded with the interaction of the first and second columns, look at the value in the ( <math>1,2\,\!</math> ) cell. The value of 3 indicates that the third column is the same as the product of the first and second columns. The alias relations for some of Taguchi's orthogonal arrays are available in [[Alias_Relations_for_Taguchi_Orthogonal_Arrays|Appendix E]]. |

| Hypothesis testing in R-DOE analyses is carried out using the likelihood ratio test. To test the significance of a factor, the corresponding effect coefficient(s), <math>{{\theta }_{i}}\,\!</math>, is tested. The following statements are used:

| |

|

| |

|

| ::<math>\begin{align}

| | ===Example=== |

| & {{H}_{0}}: & {{\theta }_{i}}=0 \\

| |

| & {{H}_{1}}: & {{\theta }_{i}}\ne 0

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The statistic used for the test is the likelihood ratio, <math>LR\,\!</math>. The likelihood ratio for the parameter <math>{{\theta }_{i}}\,\!</math> is calculated as follows:

| |

| | |

| ::<math>LR=-2\ln \frac{L({{{\hat{\theta }}}_{(-i)}})}{L(\hat{\theta })}\,\!</math>

| |

|

| |

|

| Where:

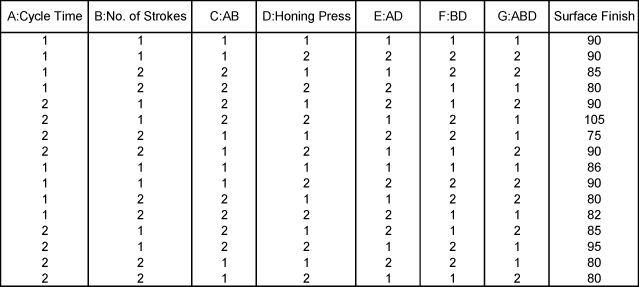

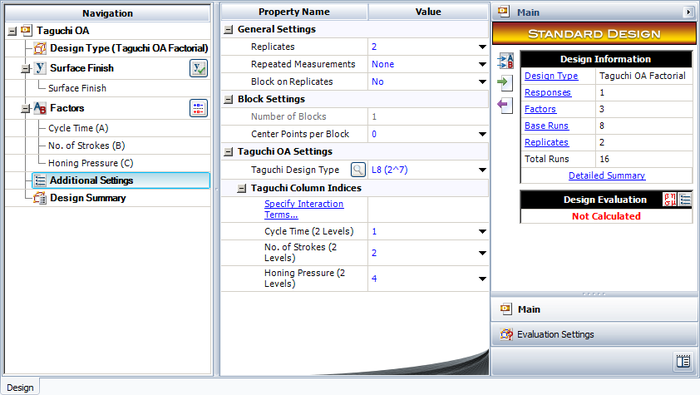

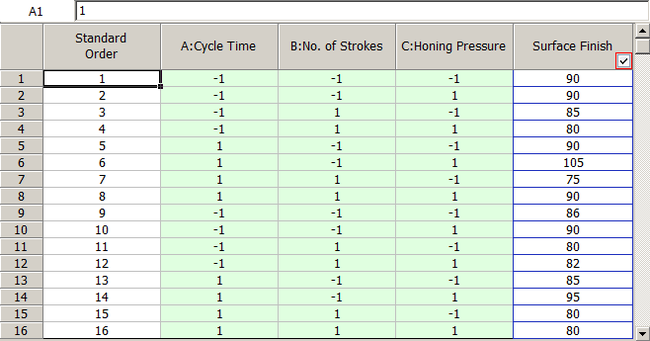

| | Recall the experiment to investigate factors affecting the surface finish of automobile brake drums discussed in [[Two_Level_Factorial_Experiments|Two Level Factorial Experiments]]. The three factors investigated in the experiment were honing pressure (factor A), number of strokes (factor B) and cycle time (factor C). Assume that you used Taguchi's L8 orthogonal array to investigate the three factors instead of the <math>{2}^{3}\,\!</math> design that was used in [[Two_Level_Factorial_Experiments|Two Level Factorial Experiments]]. Based on the discussion in the previous section, the preferred columns for the L8 array are the first, second and fourth columns. Therefore, the three factors should be assigned to these columns. The three factors are assigned to these columns based on the figure (c) above, so that you can easily compare results obtained from the L8 array to the ones included in [[Two_Level_Factorial_Experiments| Two Level Factorial Experiments]]. Based on this assignment, the L8 array for the two replicates, along with the respective response values, should be as shown in the third table. Note that to run the experiment using the L8 array, you would use only the first, the second and the fourth column to set the three factors. |

| *<math>\hat{\theta }\,\!</math> is the vector of all parameter estimates obtained using MLE (i.e., <math>\hat{\theta }={{[{{\hat{\sigma }}^{\prime }}\ \ {{\hat{\beta }}_{0}}\ \ {{\hat{\beta }}_{1}}\ \ ...]}^{\prime }}\,\!</math> )

| |

| *<math>{{\hat{\theta }}_{(-i)}}\,\!</math> is the vector of all parameter estimates excluding the estimate of <math>{{\theta }_{i}}\,\!</math>

| |

| *<math>L(\hat{\theta })\,\!</math> is the value of the likelihood function when all parameters are included in the model

| |

| *<math>L({{\hat{\theta }}_{(-i)}})\,\!</math> is the value of the likelihood function when all parameters except <math>{{\theta }_{i}}\,\!</math> are included in the model

| |

|

| |

|

| If the null hypothesis, <math>{{H}_{0}}\,\!</math>, is true then the ratio, <math>-2\ln L({{\hat{\theta }}_{(-i)}})/L(\hat{\theta })\,\!</math>, follows the Chi-Squared distribution with one degree of freedom. Therefore, <math>{{H}_{0}}\,\!</math> is rejected at a significance level, <math>\alpha \,\!</math>, if <math>LR\,\!</math> is greater than the critical value <math>\chi _{1,\alpha }^{2}\,\!</math>.

| |

| <br>

| |

| The likelihood ratio test can also be used to test the significance of a number of parameters, <math>r\,\!</math>, at the same time. In this case, <math>L({{\hat{\theta }}_{(-i)}})\,\!</math> represents the likelihood value when all <math>r\,\!</math> parameters to be tested are not included in the model. In other words, <math>L({{\hat{\theta }}_{(-i)}})\,\!</math> would represent the likelihood value for the reduced model that does not contain the <math>r\,\!</math> parameters under test. Here, the ratio <math>-2\ln L({{\hat{\theta }}_{(-i)}})/L(\hat{\theta })\,\!</math> will follow the Chi-Squared distribution with <math>r\,\!</math> degrees of freedom if all <math>r\,\!</math> parameters are insignificant. Thus, if <math>LR>\chi _{r,\alpha }^{2}\,\!</math>, the null hypothesis, <math>{{H}_{0}}\,\!</math>, is rejected and it can be concluded that at least one of the <math>r\,\!</math> parameters is significant.
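Once the log-likelihood values of the full and reduced models are available, the test reduces to a few lines. The sketch below assumes SciPy is available; the function name and the numerical log-likelihood values in the usage lines are hypothetical. The degrees of freedom are supplied by the caller, with a default of one for the single parameter test.

```python
from scipy.stats import chi2


def likelihood_ratio_test(loglik_full, loglik_reduced, df=1, alpha=0.1):
    """Return (LR, critical value, p value, reject H0?).

    loglik_full    : ln L with all parameters in the model
    loglik_reduced : ln L with the tested parameter(s) removed
    df             : degrees of freedom of the reference chi-squared distribution
    alpha          : significance level
    """
    lr = -2.0 * (loglik_reduced - loglik_full)  # -2 ln(L_reduced / L_full)
    critical = chi2.ppf(1.0 - alpha, df)
    p_value = chi2.sf(lr, df)
    return lr, critical, p_value, lr > critical


# Hypothetical log-likelihood values, for illustration only
lr, critical, p_value, reject = likelihood_ratio_test(-10.0, -12.0, df=1, alpha=0.05)
print(f"LR = {lr:.3f}, critical value = {critical:.3f}, p = {p_value:.4f}, reject H0: {reject}")
```

Working with log-likelihood values rather than the likelihoods themselves avoids numerical underflow when the sample is large.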

| |

|

| |

|

| ====Example====

| | [[Image:doet8.3.png|center|639px|Using Taguchi's L8 array to investigate factors affecting the surface finish of automobile brake drums.]] |

|

| |

|

| To illustrate the use of MLE in R-DOE analysis, consider the case where the life of a product is thought to be affected by two factors, <math>A\,\!</math> and <math>B\,\!</math>. The failure of the product has been found to follow the lognormal distribution. The analyst decides to run an R-DOE analysis using a single replicate of the 2 <math>^{2}\,\!</math> design. Previous studies indicate that the interaction between <math>A\,\!</math> and <math>B\,\!</math> does not affect the life of the product. The design for this experiment can be set up in DOE++ as shown in the following figure.

| |

|

| |

|

| <br>

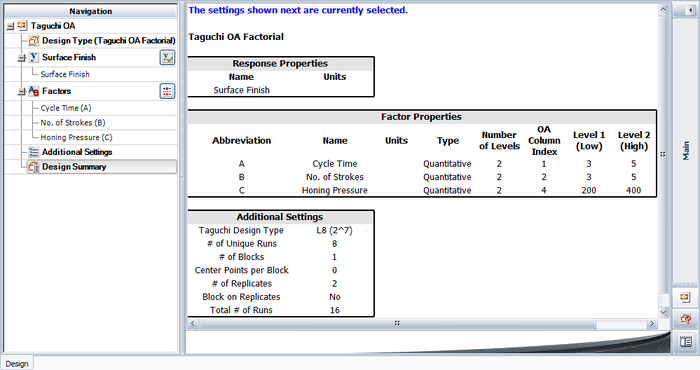

| | The experiment design for this example can be set using the properties shown in the figure below. |

| [[Image:doe11.1.png|thumb|center|400px|Design properties for the experiment in the [[Highly_Fractional_Factorial_Designs#Example| example]].]]

| |

|

| |

|

| The resulting experiment design and the corresponding times-to-failure data obtained are shown next. Note that, although the life data set contains ''complete data'' and regression techniques are applicable, calculations are shown using MLE. DOE++ uses MLE for all R-DOE analysis calculations.

| |

|

| |

| <br>

| |

| [[Image:doe11.2.png|thumb|center|400px|The <math>2^2\,\!</math> experiment design and the corresponding life data for the [[Highly_Fractional_Factorial_Designs#Example| example]].]]

| |

|

| |

|

| |

|

| Because the purpose of the experiment is to study two factors without considering their interaction, the applicable model for the lognormally distributed response data is:

| | [[Image:doe8_5.png|center|700px|Design properties for the experiment in the [[Highly_Fractional_Factorial_Designs#Example| example]].|link=]] |

|

| |

|

| ::<math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}\,\!</math>

| |

|

| |

|

| where <math>\mu _{i}^{\prime }\,\!</math> is the mean of the natural logarithm of the times-to-failure at the <math>i\,\!</math> th treatment combination ( <math>i=1,2,3,4\,\!</math> ), <math>{{\beta }_{1}}\,\!</math> is the effect coefficient for factor <math>A\,\!</math> and <math>{{\beta }_{2}}\,\!</math> is the effect coefficient for factor <math>B\,\!</math>. The analysis for this case is carried out in DOE++ by dropping the interaction <math>AB\,\!</math> using the Select Effects icon in the Control Panel.

| | Note that for this design, the factor properties are set up as shown in the design summary. |

|

| |

|

| The following hypotheses need to be tested in this example:

| |

|

| |

|

| 1) <math>{{H}_{0}}\ \ :\ \ {{\beta }_{1}}=0\,\!</math>

| | [[Image:doe8_6.png|center|700px|Factor properties for the experiment in the [[Highly_Fractional_Factorial_Designs#Example| example]].|link=]] |

| <math>{{H}_{1}}\ \ :\ \ {{\beta }_{1}}\ne 0\,\!</math>

| |

| <br>

| |

| This test investigates the main effect of factor <math>A\,\!</math>. The statistic for this test is:

| |

|

| |

|

| ::<math>L{{R}_{A}}=-2\ln \frac{{{L}_{\tilde{\ }A}}}{L}\,\!</math>

| |

|

| |

|

| where <math>L\,\!</math> represents the value of the likelihood function when all coefficients are included in the model and <math>{{L}_{\tilde{\ }A}}\,\!</math> represents the value of the likelihood function when all coefficients except <math>{{\beta }_{1}}\,\!</math> are included in the model.

| | The resulting design along with the response values is shown in the figure below. |

|

| |

| 2) <math>{{H}_{0}}\ \ :\ \ {{\beta }_{2}}=0\,\!</math>

| |

| <math>{{H}_{1}}\ \ :\ \ {{\beta }_{2}}\ne 0\,\!</math>

| |

| <br>

| |

| This test investigates the main effect of factor <math>B\,\!</math>. The statistic for this test is:

| |

|

| |

|

| ::<math>L{{R}_{B}}=-2\ln \frac{{{L}_{\tilde{\ }B}}}{L}\,\!</math>

| |

|

| |

|

| where <math>L\,\!</math> represents the value of the likelihood function when all coefficients are included in the model and <math>{{L}_{\tilde{\ }B}}\,\!</math> represents the value of the likelihood function when all coefficients except <math>{{\beta }_{2}}\,\!</math> are included in the model.

| | [[Image:doe8_7.png|center|650px|Experiment design for the [[Highly_Fractional_Factorial_Designs#Example| example]].|link=]] |

|

| |

|

| To calculate the test statistics, the maximum likelihood estimates of the parameters must be known. The estimates are obtained next.

| |

|

| |

|

| ===MLE Estimates===

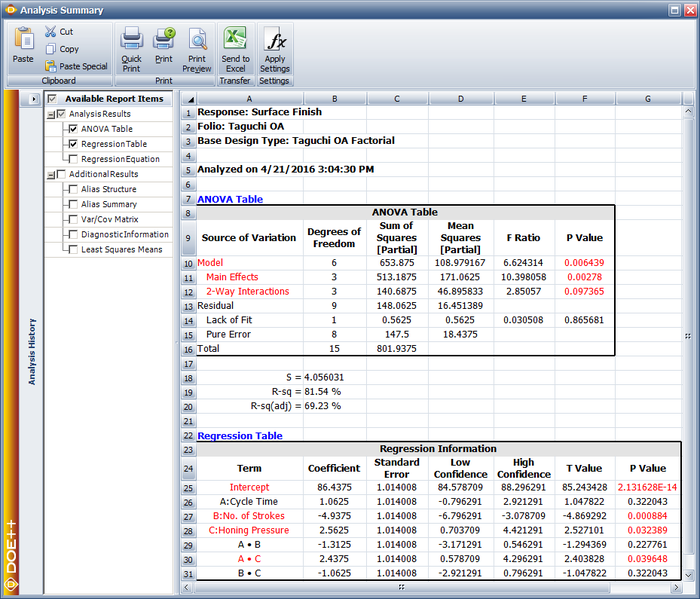

| | And the results from the DOE folio for the design are shown in the next figure. |

| Since the life data for the present experiment are complete and follow the lognormal distribution, the likelihood function can be written as:

| |

|

| |

|

| ::<math>L=\underset{i=1}{\overset{4}{\mathop \prod }}\,\left[ \frac{1}{{{t}_{i}}{\sigma }'\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-\mu _{i}^{\prime }}{{{\sigma }'}} \right)}^{2}}}} \right]\,\!</math>

| |

|

| |

|

| | [[Image:doe8_8_1.png|center|700px|Results for the experiment in the example.|link=]] |

|

| |

|

| Substituting <math>\mu _{i}^{\prime }\,\!</math> from the applicable model for the lognormally distributed response data, the likelihood function is:

| |

|

| |

|

| ::<math>L=\underset{i=1}{\overset{4}{\mathop \prod }}\,\left[ \frac{1}{{{t}_{i}}{\sigma }'\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})}{{{\sigma }'}} \right)}^{2}}}} \right]\,\!</math>

| | The results identify honing pressure, number of strokes, and the interaction between honing pressure and cycle time to be significant effects. This is identical to the conclusion obtained from the <math>{2}^{3}\,\!</math> design used in [[Two_Level_Factorial_Experiments|Two Level Factorial Experiments]]. |

| | |

| Then the log-likelihood function is:

| |

| | |

| ::<math>\begin{align}

| |

| \Lambda ({\sigma }',{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}})= & \ln (L) \\

| |

| = & \underset{i=1}{\overset{4}{\mathop \sum }}\,\ln \left[ \frac{1}{{{t}_{i}}{\sigma }'\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})}{{{\sigma }'}} \right)}^{2}}}} \right] \\

| |

| = & \ln \left[ \frac{1}{{{t}_{1}}{{t}_{2}}{{t}_{3}}{{t}_{4}}{{({\sigma }'\sqrt{2\pi })}^{4}}} \right]+ \\

| |

| & \left[ -\frac{1}{2}\underset{i=1}{\overset{4}{\mathop \sum }}\,{{\left( \frac{\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})}{{{\sigma }'}} \right)}^{2}} \right] \\

| |

| = & -[\ln ({{t}_{1}}{{t}_{2}}{{t}_{3}}{{t}_{4}})+4\ln ({\sigma }')+2\ln (2\pi )]+ \\

| |

| & \left[ -\frac{1}{2}\underset{i=1}{\overset{4}{\mathop \sum }}\,{{\left( \frac{\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})}{{{\sigma }'}} \right)}^{2}} \right]

| |

| \end{align}\,\!</math>

| |

| | |

| To obtain the MLE estimates of the parameters, <math>{\sigma }',{{\beta }_{0}},{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{2}}\,\!</math>, the log-likelihood function must be differentiated with respect to these parameters:

| |

| | |

| ::<math>\begin{align}

| |

| \frac{\partial \Lambda }{\partial {\sigma }'}= & -\frac{4}{{{\sigma }'}}+\frac{1}{{{({\sigma }')}^{3}}}\underset{i=1}{\overset{4}{\mathop \sum }}\,{{[\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})]}^{2}} \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{0}}}= & \frac{1}{{{({\sigma }')}^{2}}}\underset{i=1}{\overset{4}{\mathop \sum }}\,[\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})] \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{1}}}= & \frac{1}{{{({\sigma }')}^{2}}}\underset{i=1}{\overset{4}{\mathop \sum }}\,{{x}_{i1}}[\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})] \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{2}}}= & \frac{1}{{{({\sigma }')}^{2}}}\underset{i=1}{\overset{4}{\mathop \sum }}\,{{x}_{i2}}[\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})]

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| Equating the <math>\partial \Lambda /\partial {{\theta }_{i}}\,\!</math> terms to zero returns the required estimates. The coefficients <math>{{\hat{\beta }}_{0}}\,\!</math>, <math>{{\hat{\beta }}_{1}}\,\!</math> and <math>{{\hat{\beta }}_{2}}\,\!</math> are obtained first as these are required to estimate <math>{{\hat{\sigma }}^{\prime }}\,\!</math>. Setting <math>\partial \Lambda /\partial {{\beta }_{0}}=0\,\!</math> :

| |

| | |

| ::<math>\underset{i=1}{\overset{4}{\mathop \sum }}\,[\ln ({{t}_{i}})-({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}})]=0\,\!</math>

| |

| | |

| Substituting the values of <math>{{t}_{i}}\,\!</math>, <math>{{x}_{i1}}\,\!</math> and <math>{{x}_{i2}}\,\!</math> from the figure above and simplifying:

| |

| | |

| ::<math>\ln {{t}_{1}}+\ln {{t}_{2}}+\ln {{t}_{3}}+\ln {{t}_{4}}-4{{\beta }_{0}}=0\,\!</math>

| |

| | |

| :Thus:

| |

| | |

| ::<math>\begin{align}

| |

| {{{\hat{\beta }}}_{0}}= & \frac{1}{4}(\ln {{t}_{1}}+\ln {{t}_{2}}+\ln {{t}_{3}}+\ln {{t}_{4}}) \\

| |

| = & \frac{1}{4}(3.2958+3.2189+3.912+4.0073) \\

| |

| = & 3.6085

| |

| \end{align}\,\!</math>

| |

| | |

| Setting <math>\partial \Lambda /\partial {{\beta }_{1}}=0\,\!</math> :

| |

| | |

| ::<math>{{x}_{11}}\ln {{t}_{1}}+{{x}_{21}}\ln {{t}_{2}}+{{x}_{31}}\ln {{t}_{3}}+{{x}_{41}}\ln {{t}_{4}}-4{{\beta }_{1}}=0\,\!</math>

| |

| | |

| :Thus:

| |

| | |

| ::<math>\begin{align}

| |

| {{{\hat{\beta }}}_{1}}= & \frac{1}{4}(-\ln {{t}_{1}}+\ln {{t}_{2}}-\ln {{t}_{3}}+\ln {{t}_{4}}) \\

| |

| = & \frac{1}{4}(-3.2958+3.2189-3.912+4.0073) \\

| |

| = & 0.0046

| |

| \end{align}\,\!</math>

| |

| | |

| Setting <math>\partial \Lambda /\partial {{\beta }_{2}}=0\,\!</math> :

| |

| | |

| ::<math>{{x}_{12}}\ln {{t}_{1}}+{{x}_{22}}\ln {{t}_{2}}+{{x}_{32}}\ln {{t}_{3}}+{{x}_{42}}\ln {{t}_{4}}-4{{\beta }_{2}}=0\,\!</math>

| |

| | |

| | |

| :Thus:

| |

| | |

| ::<math>\begin{align}

| |

| {{{\hat{\beta }}}_{2}}= & \frac{1}{4}(-\ln {{t}_{1}}-\ln {{t}_{2}}+\ln {{t}_{3}}+\ln {{t}_{4}}) \\

| |

| = & \frac{1}{4}(-3.2958-3.2189+3.912+4.0073) \\

| |

| = & 0.3512

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| Knowing <math>{{\hat{\beta }}_{0}},{{\hat{\beta }}_{1}}\,\!</math> and <math>{{\hat{\beta }}_{2}}\,\!</math>, <math>{{\hat{\sigma }}^{\prime }}\,\!</math> can now be obtained. Setting <math>\partial \Lambda /\partial {\sigma }'=0\,\!</math> :

| |

| | |

| ::<math>-\frac{4}{{{\sigma }'}}+\frac{1}{{{({\sigma }')}^{3}}}\underset{i=1}{\overset{4}{\mathop \sum }}\,{{[\ln ({{t}_{i}})-(3.6085+0.0046{{x}_{i1}}+0.3512{{x}_{i2}})]}^{2}}=0\,\!</math>

| |

| | |

| :Thus:

| |

| | |

| ::<math>\begin{align}

| |

| {{{\hat{\sigma }}}^{\prime }}= & \frac{1}{2}\sqrt{\underset{i=1}{\overset{4}{\mathop \sum }}\,{{[\ln ({{t}_{i}})-(3.6085+0.0046{{x}_{i1}}+0.3512{{x}_{i2}})]}^{2}}} \\

| |

| = & 0.043

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| Once the estimates have been calculated, the likelihood ratio test can be carried out for the two factors.
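The closed-form estimates above can be checked numerically. The following is a minimal Python sketch, assuming the coded factor levels implied by the signs in the expressions for the two effect coefficients (x1 = -1, +1, -1, +1 and x2 = -1, -1, +1, +1). The function name `lognormal_mle` is illustrative and is not part of DOE++.

```python
import math

# ln(t_i) values quoted in the worked example above
log_t = [3.2958, 3.2189, 3.912, 4.0073]
# Coded levels (assumed from the signs used for beta_1 and beta_2 above)
x1 = [-1, 1, -1, 1]
x2 = [-1, -1, 1, 1]

def lognormal_mle(log_t, columns):
    """MLE for complete lognormal data with an orthogonal +/-1 design.

    Returns (beta_0, [beta_j, ...], sigma). For orthogonal columns the
    score equations decouple, so each coefficient is a simple contrast.
    """
    n = len(log_t)
    b0 = sum(log_t) / n
    betas = [sum(x * y for x, y in zip(col, log_t)) / n for col in columns]
    # Residuals of ln(t) about the fitted log-mean
    resid = [
        y - (b0 + sum(b * col[i] for b, col in zip(betas, columns)))
        for i, y in enumerate(log_t)
    ]
    # The MLE of sigma' divides by n, not by n minus the number of coefficients
    sigma = math.sqrt(sum(r * r for r in resid) / n)
    return b0, betas, sigma

b0, (b1, b2), sigma = lognormal_mle(log_t, [x1, x2])
print(b0, b1, b2, sigma)
```

Because the design columns are orthogonal, no iterative solver is needed here; censored data or non-orthogonal designs would require numerical maximization instead.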

| |

| | |

| ===Likelihood Ratio Test===

| |

| The likelihood ratio test for factor <math>A\,\!</math> is conducted by using the likelihood value corresponding to the full model and the likelihood value when <math>A\,\!</math> is not included in the model. The likelihood value corresponding to the full model (in this case <math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}\,\!</math> ) is:

| |

| | |

| ::<math>\begin{align}

| |

| L= & \underset{i=1}{\overset{4}{\mathop \prod }}\,\left[ \frac{1}{{{t}_{i}}{{{\hat{\sigma }}}^{\prime }}\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-({{{\hat{\beta }}}_{0}}+{{{\hat{\beta }}}_{1}}{{x}_{i1}}+{{{\hat{\beta }}}_{2}}{{x}_{i2}})}{{{{\hat{\sigma }}}^{\prime }}} \right)}^{2}}}} \right] \\

| |

| = & 0.000537311

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The corresponding logarithmic value is <math>\ln (L)=\ln (0.000537311)=-7.529\,\!</math>.

| |

| The likelihood value for the reduced model that does not contain factor <math>A\,\!</math> (in this case <math>\mu _{i}^{\prime }={{\beta }_{0}}+{{\beta }_{2}}{{x}_{i2}}\,\!</math> ), with the remaining parameters re-estimated by maximum likelihood under the reduced model, is:

| |

| | |

| ::<math>\begin{align}

| |

| {{L}_{\tilde{\ }A}}= & \underset{i=1}{\overset{4}{\mathop \prod }}\,\left[ \frac{1}{{{t}_{i}}{{{\hat{\sigma }}}^{\prime }}\sqrt{2\pi }}{{e}^{-\frac{1}{2}{{\left( \frac{\ln ({{t}_{i}})-({{{\hat{\beta }}}_{0}}+{{{\hat{\beta }}}_{2}}{{x}_{i2}})}{{{{\hat{\sigma }}}^{\prime }}} \right)}^{2}}}} \right] \\

| |

| = & 0.000525337

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The corresponding logarithmic value is <math>\ln ({{L}_{\tilde{\ }A}})=\ln (0.000525337)=-7.552\,\!</math>.

| |

| Therefore, the likelihood ratio to test the significance of factor <math>A\,\!</math> is:

| |

| | |

| ::<math>\begin{align}

| |

| L{{R}_{A}}= & -2\ln \frac{{{L}_{\tilde{\ }A}}}{L} \\

| |

| = & -2\ln \frac{0.000525337}{0.000537311} \\

| |

| = & 0.0451

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The <math>p\,\!</math> value corresponding to <math>L{{R}_{A}}\,\!</math> is:

| |

| | |

| ::<math>\begin{align}

| |

| p\text{ }value= & 1-P(\chi _{1}^{2}<L{{R}_{A}}) \\

| |

| = & 1-0.1682 \\

| |

| = & 0.8318

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| Assuming that the desired significance level for the present experiment is 0.1, since <math>p\,\!</math> <math>value>0.1\,\!</math>, <math>{{H}_{0}}\ \ :\ \ {{\beta }_{1}}=0\,\!</math> cannot be rejected. There is therefore no evidence at this significance level that factor <math>A\,\!</math> affects the life of the product.

| |

| <br>

| |

| The likelihood ratio to test factor <math>B\,\!</math> can be calculated in a similar way as shown next:

| |

| | |

| ::<math>\begin{align}

| |

| L{{R}_{B}}= & -2\ln \frac{{{L}_{\tilde{\ }B}}}{L} \\

| |

| = & -2\ln \frac{1.17995E-07}{0.000537311} \\

| |

| = & 16.8475

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The <math>p\,\!</math> value corresponding to <math>L{{R}_{B}}\,\!</math> is:

| |

| | |

| ::<math>\begin{align}

| |

| p\text{ }value= & 1-P(\chi _{1}^{2}<L{{R}_{B}}) \\

| |

| = & 1-0.99996 \\

| |

| = & 0.00004

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| Since <math>p\,\!</math> <math>value<0.1\,\!</math>, <math>{{H}_{0}}\ \ :\ \ {{\beta }_{2}}=0\,\!</math> is rejected and it is concluded that factor <math>B\,\!</math> affects the life of the product. The previous calculation results are displayed as the Likelihood Ratio Test Table in the results obtained from DOE++ as shown in the figure below.
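The two likelihood ratio tests can be reproduced with a short script. This is a sketch under stated assumptions: the coded levels are inferred from the signs in the coefficient formulas, each reduced model is refitted by maximum likelihood, and the helper names `max_log_likelihood` and `chi2_1_sf` are hypothetical. Small differences from the values in the text are expected because the log times are rounded.

```python
import math

log_t = [3.2958, 3.2189, 3.912, 4.0073]   # ln(t_i) from the example
x1 = [-1, 1, -1, 1]                        # assumed coded levels, factor A
x2 = [-1, -1, 1, 1]                        # assumed coded levels, factor B

def max_log_likelihood(log_t, columns):
    """Maximized lognormal log-likelihood for an orthogonal +/-1 design."""
    n = len(log_t)
    b0 = sum(log_t) / n
    betas = [sum(x * y for x, y in zip(col, log_t)) / n for col in columns]
    sse = sum(
        (y - b0 - sum(b * col[i] for b, col in zip(betas, columns))) ** 2
        for i, y in enumerate(log_t)
    )
    sigma2 = sse / n  # MLE of sigma'^2, re-estimated for each model
    # ln L at the MLE: -sum(ln t) - (n/2) ln(2 pi sigma'^2) - n/2
    return -sum(log_t) - 0.5 * n * math.log(2 * math.pi * sigma2) - 0.5 * n

def chi2_1_sf(x):
    """P(chi-square with 1 degree of freedom > x)."""
    return math.erfc(math.sqrt(x / 2.0))

full = max_log_likelihood(log_t, [x1, x2])
lr_a = -2.0 * (max_log_likelihood(log_t, [x2]) - full)  # model without A
lr_b = -2.0 * (max_log_likelihood(log_t, [x1]) - full)  # model without B
p_a, p_b = chi2_1_sf(lr_a), chi2_1_sf(lr_b)
print(full, lr_a, p_a, lr_b, p_b)
```

Working with log-likelihood differences rather than the ratio of raw likelihoods avoids underflow when the likelihood values are very small.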

| |

|

| |

|

| | === Preferred Columns in Taguchi OA=== |

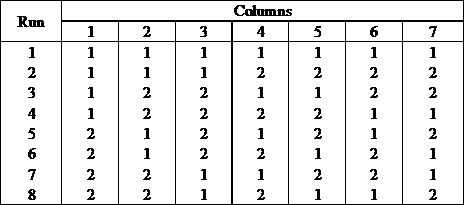

| | One of the difficulties of using a Taguchi OA is assigning factors to the appropriate columns of the array. For example, take a simple Taguchi OA L8(2^7), which can be used for experiments with up to 7 factors. If you have only 3 factors, which 3 columns in this array should be used? The DOE folio provides a simple utility that helps users apply Taguchi OAs more effectively by assigning factors to the appropriate columns. |

| | Let’s use Taguchi OA L8(2^7) as an example. The design table for this array is: |

| | | |

| | | [[Image:DOEtableChapter9.png|center]] |

| <br>

| |

| [[Image:doe11.3.png|thumb|center|400px|Likelihood ratio test results from DOE++ for the experiment in the [[Highly_Fractional_Factorial_Designs#Example| example]].]] | |

| | |

| ==Fisher Matrix Bounds on Parameters==

| |

| In general, the MLE estimates of the parameters are asymptotically normal. This means that for large sample sizes the distribution of the estimates from the same population would be very close to the normal distribution [MeekerAndEscobar]. If <math>\hat{\theta }\,\!</math> is the MLE estimate of any parameter, <math>\theta \,\!</math>, then the 100( <math>1-\alpha \,\!</math> )% two-sided confidence bounds on the parameter are:

| |

| | |

| ::<math>\hat{\theta }-{{z}_{\alpha /2}}\cdot \sqrt{Var(\hat{\theta })}<\theta <\hat{\theta }+{{z}_{\alpha /2}}\cdot \sqrt{Var(\hat{\theta })}\,\!</math>

| |

| | |

| | |

| where <math>Var(\hat{\theta })\,\!</math> represents the variance of <math>\hat{\theta }\,\!</math> and <math>{{z}_{\alpha /2}}\,\!</math> is the critical value corresponding to a significance level of <math>\alpha /2\,\!</math> on the standard normal distribution. The variance of the parameter, <math>Var(\hat{\theta })\,\!</math>, is obtained using the Fisher information matrix. For <math>k\,\!</math> parameters, the Fisher information matrix is obtained from the log-likelihood function <math>\Lambda \,\!</math> as follows:

| |

| | |

| | |

| ::<math>F=\left[ \begin{matrix}

| |

| -\frac{{{\partial }^{2}}\Lambda }{\partial \theta _{1}^{2}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{2}}} & ... & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{k}}} \\

| |

| -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{2}}} & -\frac{{{\partial }^{2}}\Lambda }{\partial \theta _{2}^{2}} & ... & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{2}}\partial {{\theta }_{k}}} \\

| |

| . & . & ... & . \\

| |

| . & . & ... & . \\

| |

| -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{k}}} & . & ... & -\frac{{{\partial }^{2}}\Lambda }{\partial \theta _{k}^{2}} \\

| |

| \end{matrix} \right]\,\!</math>

| |

|

| |

|

|

| |

|

| The variance-covariance matrix is obtained by inverting the Fisher matrix <math>F\,\!</math> :

| | This is a fractional factorial design for 7 factors. For any fractional factorial design, the first step is to check its alias structure. In general, the alias structures of Taguchi OAs are very complicated, so the alias relations between factors are usually summarized in a table. For the above orthogonal array, the alias table is: |

|

| |

|

| | {| style="text-align:center;" cellpadding="2" border="1" align="center" |

| | |- |

| | |1|| 2|| 3|| 4|| 5|| 6|| 7 |

| | |- |

| | |2x3|| 1x3|| 1x2|| 1x5|| 1x4|| 1x7|| 1x6 |

| | |- |

| | |4x5|| 4x6|| 4x7|| 2x6|| 2x7|| 2x4|| 2x5 |

| | |- |

| | |6x7|| 5x7|| 5x6|| 3x7|| 3x6|| 3x5|| 3x4 |

| | |} |

|

| |

|

| ::<math>\left[ \begin{matrix}

| |

| Var({{{\hat{\theta }}}_{1}}) & Cov({{{\hat{\theta }}}_{1}},{{{\hat{\theta }}}_{2}}) & ... & {} \\

| |

| Cov({{{\hat{\theta }}}_{1}},{{{\hat{\theta }}}_{2}}) & Var({{{\hat{\theta }}}_{2}}) & ... & {} \\

| |

| . & . & ... & {} \\

| |

| . & . & ... & {} \\

| |

| Cov({{{\hat{\theta }}}_{1}},{{{\hat{\theta }}}_{k}}) & . & ... & Var({{{\hat{\theta }}}_{k}}) \\

| |

| \end{matrix} \right]=\,\!</math>

| |

|

| |

|

| | In the above table, an Arabic number is used to represent a factor. For instance, “1” represents the factor assigned to the 1st column in the array. “2x3” represents the interaction effect of the two factors assigned to column 2 and 3. Each column in the above alias table lists all the 2-way interaction effects that are aliased with the main effect of the factor assigned to this column. For example, for the 1st column, the main effect of the factor assigned to it is aliased with interaction effects of 2x3, 4x5 and 6x7. |
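The alias table can be derived directly from the array. The sketch below assumes the standard L8(2^7) layout (taken here to match the design table image above) and codes level 1 as -1 and level 2 as +1. A two-column interaction is reported as aliased with a main effect when the product of the two columns equals that column up to sign. The function names are illustrative only.

```python
from itertools import combinations

# Standard L8(2^7) orthogonal array with levels 1 and 2 (assumed layout)
L8 = [
    [1, 1, 1, 1, 1, 1, 1],
    [1, 1, 1, 2, 2, 2, 2],
    [1, 2, 2, 1, 1, 2, 2],
    [1, 2, 2, 2, 2, 1, 1],
    [2, 1, 2, 1, 2, 1, 2],
    [2, 1, 2, 2, 1, 2, 1],
    [2, 2, 1, 1, 2, 2, 1],
    [2, 2, 1, 2, 1, 1, 2],
]

def coded_column(array, col):
    """Return 1-based column `col` coded as -1 (level 1) / +1 (level 2)."""
    return [2 * row[col - 1] - 3 for row in array]

def alias_table(array):
    """Map each column to the two-column interactions aliased with it."""
    n_cols = len(array[0])
    cols = {c: coded_column(array, c) for c in range(1, n_cols + 1)}
    table = {c: [] for c in cols}
    for a, b in combinations(cols, 2):
        product = [u * v for u, v in zip(cols[a], cols[b])]
        for c, main in cols.items():
            if c in (a, b):
                continue
            # Full aliasing: the product column equals +/- the main-effect column
            if abs(sum(p * m for p, m in zip(product, main))) == len(array):
                table[c].append((a, b))
    return table

for col, pairs in alias_table(L8).items():
    print(col, ["%dx%d" % pair for pair in pairs])
```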

| | If an experiment has only 3 factors and these 3 factors A, B and C are assigned to the first 3 columns of the above L8(2^7) array, then the design table will be: |

|

| |

|

| | {| style="text-align:center;" cellpadding="2" border="1" align="center" |

| | |- |

| | |Run|| A (Column 1)|| B (Column 2)|| C (Column 3) |

| | |- |

| | |1|| 1|| 1|| 1 |

| | |- |

| | |2|| 1|| 1|| 1 |

| | |- |

| | |3|| 1|| 2|| 2 |

| | |- |

| | |4|| 1|| 2|| 2 |

| | |- |

| | |5|| 2|| 1|| 2 |

| | |- |

| | |6|| 2|| 1|| 2 |

| | |- |

| | |7|| 2|| 2|| 1 |

| | |- |

| | |8|| 2|| 2|| 1 |

| | |} |

|

| |

|

| ::<math>{{\left[ \begin{matrix} | | The alias structure for the above table is: |

| -\frac{{{\partial }^{2}}\Lambda }{\partial \theta _{1}^{2}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{2}}} & ... & {} \\

| |

| -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{2}}} & -\frac{{{\partial }^{2}}\Lambda }{\partial \theta _{2}^{2}} & ... & {} \\

| |

| . & . & ... & {} \\

| |

| . & . & ... & {} \\

| |

| -\frac{{{\partial }^{2}}\Lambda }{\partial {{\theta }_{1}}\partial {{\theta }_{k}}} & . & ... & -\frac{{{\partial }^{2}}\Lambda }{\partial \theta _{k}^{2}} \\

| |

| \end{matrix} \right]}^{-1}}\,\!</math>

| |

|

| |

|

| | {| style="text-align:center;" cellpadding="2" align="center" |

| | |- |

| | |[I] = I – ABC |

| | |- |

| | |[A] = A – BC |

| | |- |

| | |[B] = B – AC |

| | |- |

| | |[C] = C – AB |

| | |} |

|

| |

|

| Once the variance-covariance matrix is known, the variance of any parameter can be obtained from the diagonal elements of the matrix. Note that if a parameter, <math>\theta \,\!</math>, can take only positive values, it is assumed that <math>\ln (\hat{\theta })\,\!</math> follows the normal distribution [MeekerAndEscobar]. The bounds on the parameter in this case are:

| | This is a resolution III design. All the main effects are aliased with 2-way interactions. There are many ways to choose 3 columns from the 7 columns of L8(2^7). If the 3 factors are assigned to columns 1, 2 and 4, then the design table is: |

|

| |

|

| ::<math>CI\text{ }on\text{ }\ln (\hat{\theta })=\ln (\hat{\theta })\pm {{z}_{\alpha /2}}\sqrt{Var(\ln (\hat{\theta }))}\,\!</math>

| | {| style="text-align:center;" cellpadding="2" border="1" align="center" |

| | |- |

| | |Run|| A (Column 1)|| B (Column 2)|| C (Column 4) |

| | |- |

| | |1|| 1|| 1|| 1 |

| | |- |

| | |2|| 1|| 1|| 2 |

| | |- |

| | |3|| 1|| 2|| 1 |

| | |- |

| | |4|| 1|| 2|| 2 |

| | |- |

| | |5|| 2|| 1|| 1 |

| | |- |

| | |6|| 2|| 1|| 2 |

| | |- |

| | |7|| 2|| 2|| 1 |

| | |- |

| | |8|| 2|| 2|| 2 |

| | |} |

|

| |

|

|

| |

|

| Using <math>Var[f(\hat{\theta })]={{(\partial f/\partial \theta )}^{2}}\cdot Var(\hat{\theta })\,\!</math> we get <math>Var(\ln (\hat{\theta }))={{(1/\hat{\theta })}^{2}}Var(\hat{\theta })\,\!</math>. Substituting this value we have:

| | For experiments using the above design table, all the effects will be alias free. Therefore, this design is much better than the previous one which used column 1, 2, and 3 of L8(2^7). Although both designs have the same number of runs, more information can be obtained from this design since it is alias free. |
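The difference between the two column choices can be verified with a few lines of Python. This sketch assumes the standard L8(2^7) columns 1 to 4, coded as -1 for level 1 and +1 for level 2. The helper `aliased_main_effects` is a hypothetical name used only for this illustration.

```python
from itertools import combinations

# Columns 1-4 of the standard L8(2^7) array (assumed layout),
# coded -1 for level 1 and +1 for level 2
L8_COLUMNS = {
    1: [-1, -1, -1, -1, 1, 1, 1, 1],
    2: [-1, -1, 1, 1, -1, -1, 1, 1],
    3: [-1, -1, 1, 1, 1, 1, -1, -1],
    4: [-1, 1, -1, 1, -1, 1, -1, 1],
}

def aliased_main_effects(chosen):
    """Return (main, (a, b)) pairs where column `main` is aliased with a x b."""
    found = []
    for a, b in combinations(chosen, 2):
        product = [u * v for u, v in zip(L8_COLUMNS[a], L8_COLUMNS[b])]
        for c in chosen:
            if c in (a, b):
                continue
            dot = sum(p * m for p, m in zip(product, L8_COLUMNS[c]))
            if abs(dot) == len(product):  # identical up to sign
                found.append((c, (a, b)))
    return found

print(aliased_main_effects((1, 2, 3)))  # every main effect is aliased
print(aliased_main_effects((1, 2, 4)))  # no aliasing among these columns
```

Columns 1, 2 and 4 together form a full two-level factorial in three factors, which is why no main effect is confounded with a two-factor interaction.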

|

| |

|

| ::<math>\begin{align}

| | Clearly, it is very important to assign factors to the right columns when applying Taguchi OA. The DOE folio can help users automatically choose the right columns when the number of factors is less than the number of columns in a Taguchi OA. The selection is based on the specified model terms by users. Let’s use an example to explain this. |

| CI\text{ }on\text{ }\ln (\hat{\theta })= & \ln (\hat{\theta })\pm {{z}_{\alpha /2}}\sqrt{{{(1/\hat{\theta })}^{2}}Var(\hat{\theta })} \\

| |

| = & \ln (\hat{\theta })\pm ({{z}_{\alpha /2}}/\hat{\theta })\sqrt{Var(\hat{\theta })} \\

| |

| or\text{ }CI\text{ }on\text{ }\hat{\theta }= & \exp [\ln (\hat{\theta })\pm ({{z}_{\alpha /2}}/\hat{\theta })\sqrt{Var(\hat{\theta })}] \\

| |

| = & \hat{\theta }\cdot \exp [\pm ({{z}_{\alpha /2}}/\hat{\theta })\sqrt{Var(\hat{\theta })}]

| |

| \end{align}\,\!</math>

| |

|

| |

|

|

| |

| Knowing <math>Var(\hat{\theta })\,\!</math> from the variance-covariance matrix, the confidence bounds on <math>\hat{\theta }\,\!</math> can then be determined.

| |

| ====Example==== | | ====Example==== |

| <br>

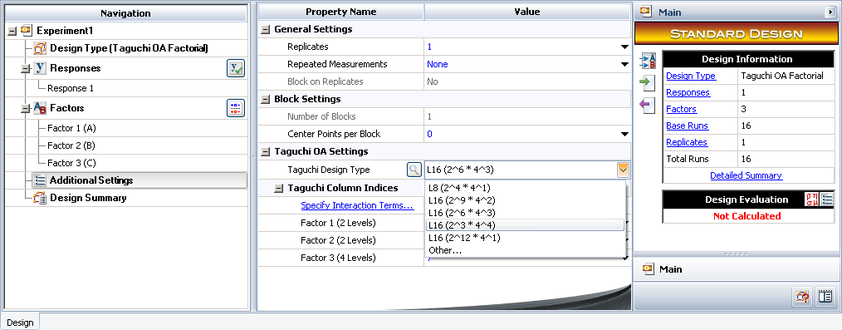

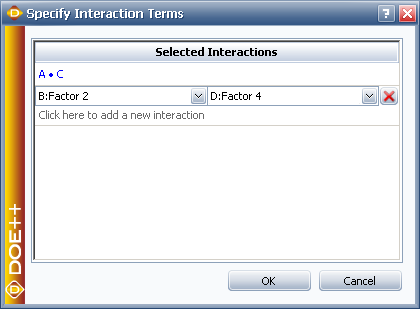

| | Design an experiment with 3 qualitative factors. Factors A and B have 2 levels; factor C has 4 levels. The experimenters are interested in all the main effects and the interaction effect AC. |

| | |

| Continuing with the [[Highly_Fractional_Factorial_Designs#Example| example]], the confidence bounds on the MLE estimates of the parameters <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math>, <math>{{\beta }_{2}}\,\!</math> and <math>{\sigma }'\,\!</math> can now be obtained. The Fisher information matrix for the example is:

| |

| | |

| ::<math>\begin{align}

| |

| F= & \left[ \begin{matrix}

| |

| -\frac{{{\partial }^{2}}\Lambda }{\partial \beta _{0}^{2}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }_{0}}\partial {{\beta }_{1}}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }_{0}}\partial {{\beta }_{2}}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }_{0}}\partial {\sigma }'} \\

| |

| {} & -\frac{{{\partial }^{2}}\Lambda }{\partial \beta _{1}^{2}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }_{1}}\partial {{\beta }_{2}}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }_{1}}\partial {\sigma }'} \\

| |

| {} & {} & -\frac{{{\partial }^{2}}\Lambda }{\partial \beta _{2}^{2}} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\beta }_{2}}\partial {\sigma }'} \\

| |

| sym. & {} & {} & -\frac{{{\partial }^{2}}\Lambda }{\partial {{\sigma }^{\prime 2}}} \\

| |

| \end{matrix} \right] \\

| |

| = & \left[ \begin{matrix}

| |

| \tfrac{4}{{{\sigma }^{\prime 2}}} & \tfrac{1}{{{\sigma }^{\prime 2}}}\underset{i=1}{\overset{4}{\mathop{\sum }}}\,{{x}_{i1}} & \tfrac{1}{{{\sigma }^{\prime 2}}}\underset{i=1}{\overset{4}{\mathop{\sum }}}\,{{x}_{i2}} & \tfrac{2}{{{\sigma }^{\prime 3}}}[\underset{i=1}{\overset{4}{\mathop{\sum }}}\,(\ln {{t}_{i}}-\mu _{i}^{\prime })] \\

| |

| {} & \tfrac{1}{{{\sigma }^{\prime 2}}}\underset{i=1}{\overset{4}{\mathop{\sum }}}\,x_{i1}^{2} & \tfrac{1}{{{\sigma }^{\prime 2}}}\underset{i=1}{\overset{4}{\mathop{\sum }}}\,{{x}_{i1}}{{x}_{i2}} & \tfrac{2}{{{\sigma }^{\prime 3}}}[\underset{i=1}{\overset{4}{\mathop{\sum }}}\,{{x}_{i1}}\cdot (\ln {{t}_{i}}-\mu _{i}^{\prime })] \\

| |

| {} & {} & \tfrac{1}{{{\sigma }^{\prime 2}}}\underset{i=1}{\overset{4}{\mathop{\sum }}}\,x_{i2}^{2} & \tfrac{2}{{{\sigma }^{\prime 3}}}[\underset{i=1}{\overset{4}{\mathop{\sum }}}\,{{x}_{i2}}\cdot (\ln {{t}_{i}}-\mu _{i}^{\prime })] \\

| |

| sym. & {} & {} & -\tfrac{4}{{{\sigma }^{\prime 2}}}+\tfrac{3}{{{\sigma }^{\prime 4}}}\underset{i=1}{\overset{4}{\mathop{\sum }}}\,{{(\ln {{t}_{i}}-\mu _{i}^{\prime })}^{2}} \\

| |

| \end{matrix} \right] \\

| |

| = & \left[ \begin{matrix}

| |

| 2165.6741 & 0 & 0 & -1.1195E-11 \\

| |

| {} & 2165.6741 & 0 & -1.1195E-11 \\

| |

| {} & {} & 2165.6741 & -3.358E-11 \\

| |

| sym. & {} & {} & 4330.8227 \\

| |

| \end{matrix} \right]

| |

| \end{align}\,\!</math>

| |

|

| |

|

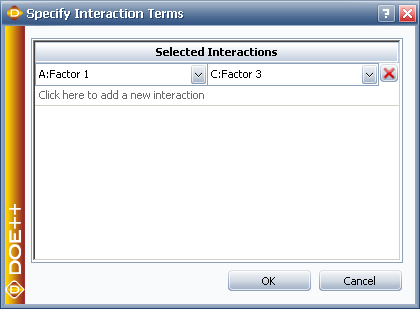

| | Based on this requirement, Taguchi OA L16(2^6*4^3) can be used since it can handle both 2 level and 4 level factors. It has 9 columns. The first 6 columns are used for 2 level factors and the last 3 columns are used for 4 level factors. We need to assign factor A and B to two of the first 6 columns, and assign factor C to one of the last 3 columns. |

|

| |

|

| The variance-covariance matrix can be obtained by taking the inverse of the Fisher matrix <math>F\,\!</math> :

| | In a Weibull++ DOE folio, we can choose L16(2^6*4^3) in the Additional Settings. |

|

| |

|

| ::<math>\left[ \begin{matrix} | | [[Image:doe8_9.png|center|842px|Selecting the Taguchi OA design type.|link=]] |

| Var({{{\hat{\beta }}}_{0}}) & Cov({{{\hat{\beta }}}_{0}},{{{\hat{\beta }}}_{1}}) & Cov({{{\hat{\beta }}}_{0}},{{{\hat{\beta }}}_{2}}) & Cov({{{\hat{\beta }}}_{0}},{{{\hat{\sigma }}}^{\prime }}) \\

| |

| {} & Var({{{\hat{\beta }}}_{1}}) & Cov({{{\hat{\beta }}}_{1}},{{{\hat{\beta }}}_{2}}) & Cov({{{\hat{\beta }}}_{1}},{{{\hat{\sigma }}}^{\prime }}) \\

| |

| {} & {} & Var({{{\hat{\beta }}}_{2}}) & Cov({{{\hat{\beta }}}_{2}},{{{\hat{\sigma }}}^{\prime }}) \\

| |

| sym. & {} & {} & Var({{{\hat{\sigma }}}^{\prime }}) \\

| |

| \end{matrix} \right]={{F}^{-1}}\,\!</math>

| |

|

| |

|

| | Click '''Specify Interaction Terms''' to specify the interaction terms that are of interest to the experimenters. |

|

| |

|

| Inverting <math>F\,\!</math> returns the following matrix:

| | [[Image:doe8_10.png|center|420px|Specifying the interaction terms of interest.|link=]] |

|

| |

|

| ::<math>{{F}^{-1}}=\left[ \begin{matrix}

| | Based on the specified interaction effects, the DOE folio will assign each factor to the appropriate column. In this case, they are column 1, 3, and 7 as shown below. |

| 4.617E-4 & 0 & 0 & 0 \\

| |

| {} & 4.617E-4 & 0 & 0 \\

| |

| {} & {} & 4.617E-4 & 0 \\

| |

| sym. & {} & {} & 2.309E-4 \\

| |

| \end{matrix} \right]\,\!</math>

| |

|

| |

|

| | [[Image:doe8_11.png|center|842px|The interaction terms of interest have been specified.|link=]] |

|

| |

|

| Therefore, the variances of the parameter estimates are:

| | However, for a given Taguchi OA, it may not be possible to estimate all the specified interaction terms. If not all the requirements can be satisfied, the DOE folio will assign factors to the columns that result in the fewest aliased effects. In this case, users should either use another Taguchi OA or another design type. The following example is one of these cases. |

|

| |

|

| ::<math>\begin{align}

| | ====Example==== |

| Var({{{\hat{\beta }}}_{0}})= & 4.617E-4 \\

| | Design an experiment for a test with 4 qualitative factors. Factors A and B have 2 levels; C and D have 4 levels. We are interested in all the main effects and the interaction effects AC and BD. |

| Var({{{\hat{\beta }}}_{1}})= & 4.617E-4 \\

| |

| Var({{{\hat{\beta }}}_{2}})= & 4.617E-4 \\

| |

| Var({{{\hat{\sigma }}}^{\prime }})= & 2.309E-4

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| Knowing the variance, the confidence bounds on the parameters can be calculated. For example, the 90% bounds ( <math>\alpha =0.1\,\!</math> ) on <math>{{\hat{\beta }}_{2}}\,\!</math> can be calculated as shown next:

| |

| | |

| ::<math>\begin{align}

| |

| CI= & {{{\hat{\beta }}}_{2}}\pm {{z}_{\alpha /2}}\cdot \sqrt{Var({{{\hat{\beta }}}_{2}})} \\

| |

| = & {{{\hat{\beta }}}_{2}}\pm {{z}_{0.05}}\cdot \sqrt{Var({{{\hat{\beta }}}_{2}})} \\ | |

| = & 0.3512\pm 1.645\cdot \sqrt{4.617E-4} \\ | |

| = & 0.3512\pm 0.0354 \\ | |

| = & 0.3158\text{ }and\text{ }0.3866 | |

| \end{align}\,\!</math>

| |

| | |

| | |

| The 90% bounds on <math>{\sigma }'\,\!</math> are (considering that <math>{\sigma }'\,\!</math> can only take positive values):

| |

| | |

| ::<math>\begin{align}

| |

| CI= & {{{\hat{\sigma }}}^{\prime }}\cdot \exp [\pm ({{z}_{0.05}}/{{{\hat{\sigma }}}^{\prime }})\sqrt{Var({{{\hat{\sigma }}}^{\prime }})}] \\

| |

| = & 0.043\cdot \exp [\pm (1.645/0.043)\sqrt{2.309E-4}] \\

| |

| = & 0.024\text{ }and\text{ }0.077

| |

| \end{align}\,\!</math>
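The bounds above can be recomputed from the point estimates. This is a minimal sketch, assuming the orthogonal two-level design of the example: at the MLE the off-diagonal terms of the Fisher matrix vanish, each coefficient has information n/σ'² and σ' has information 2n/σ'². The results differ slightly from the text because the rounded value 0.043 is used for the estimate of σ'.

```python
import math
from statistics import NormalDist

n = 4              # number of observations in the example
sigma_hat = 0.043  # rounded estimate of sigma' from the text
beta2_hat = 0.3512
alpha = 0.10

# Diagonal Fisher matrix at the MLE (assumes the orthogonal +/-1 design):
# n / sigma'^2 for each coefficient and 2n / sigma'^2 for sigma'
var_beta = sigma_hat ** 2 / n
var_sigma = sigma_hat ** 2 / (2 * n)

z = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.645 for alpha = 0.1

# Two-sided bounds on a coefficient (normal approximation)
half_width = z * math.sqrt(var_beta)
beta2_bounds = (beta2_hat - half_width, beta2_hat + half_width)

# Bounds on a positive parameter (normal approximation applied to ln(sigma'))
factor = math.exp(z * math.sqrt(var_sigma) / sigma_hat)
sigma_bounds = (sigma_hat / factor, sigma_hat * factor)

print(var_beta, var_sigma)
print(beta2_bounds, sigma_bounds)
```

The log-scale interval for σ' is asymmetric about the estimate and can never include negative values, which is the reason for using it with positive parameters.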

| |

| | |

| | |

| The standard error for the parameters can be obtained by taking the positive square root of the variance. For example, the standard error for <math>{{\hat{\beta }}_{1}}\,\!</math> is:

| |

| | |

| ::<math>\begin{align}

| |

| se({{{\hat{\beta }}}_{1}})= & \sqrt{Var({{{\hat{\beta }}}_{1}})} \\

| |

| = & \sqrt{4.617E-4} \\

| |

| = & 0.0215

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The <math>z\,\!</math> statistic for <math>{{\hat{\beta }}_{1}}\,\!</math> is:

| |

| | |

| ::<math>\begin{align}

| |

| {{z}_{0}}= & \frac{{{{\hat{\beta }}}_{1}}}{se({{{\hat{\beta }}}_{1}})} \\

| |

| = & \frac{0.0046}{0.0215} \\

| |

| = & 0.21

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| The <math>p\,\!</math> value corresponding to this statistic based on the standard normal distribution is:

| |

| | |

| ::<math>\begin{align}

| |

| p\text{ }value= & 2\cdot (1-P(Z\le |{{z}_{0}}|)) \\

| |

| = & 2\cdot (1-0.58435) \\

| |

| = & 0.8313

| |

| \end{align}\,\!</math>
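The standard error, z statistic and two-sided p value can be checked as follows. The sketch uses the estimate and variance quoted above and only the Python standard library. Because the text rounds the z statistic to 0.21 before looking up the normal probability, the last digits of the p value may differ slightly.

```python
import math
from statistics import NormalDist

beta1_hat = 0.0046    # MLE of the coefficient for factor A
var_beta1 = 4.617e-4  # variance from the inverted Fisher matrix

se = math.sqrt(var_beta1)
z0 = beta1_hat / se
# Two-sided p value from the standard normal distribution
p_value = 2.0 * (1.0 - NormalDist().cdf(abs(z0)))
print(se, z0, p_value)
```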

| |

| | |

| | |

| The previous calculation results are displayed as MLE Information in the results obtained from DOE++ as shown in the following figure. In the figure, the Effect corresponding to each factor is simply twice the MLE estimate of the coefficient for that factor. Generally, the <math>p\,\!</math> value corresponding to any coefficient in the MLE Information table should match the value obtained from the likelihood ratio test (displayed in the Likelihood Ratio Test table of the figure below). If the sample size is not large enough, as in the case of the present example, a difference may be seen in the two values. In such cases, the <math>p\,\!</math> value from the likelihood ratio test should be given preference. For the present example, the <math>p\,\!</math> value of 0.8318 for <math>{{\hat{\beta }}_{1}}\,\!</math>, obtained from the likelihood ratio test, would be preferred to the <math>p\,\!</math> value of 0.8313 displayed under MLE information. For details see [MeekerAndEscobar].

| |

|

| |

| | |

| <br>

| |

| [[Image:doe11.4.png|thumb|center|400px|MLE information from DOE++ for the [[Highly_Fractional_Factorial_Designs#Example_2| example]].]]

| |

| | |

| =R-DOE Analysis of Data Following the Weibull Distribution=

| |

| The probability density function for the two parameter Weibull distribution is:

| |

| | |

| ::<math>f(T)=\frac{\beta }{\eta }{{\left( \frac{T}{\eta } \right)}^{\beta -1}}\exp \left[ -{{\left( \frac{T}{\eta } \right)}^{\beta }} \right]\,\!</math>

| |

| | |

| | |

| where <math>\eta \,\!</math> is the scale parameter of the Weibull distribution and <math>\beta \,\!</math> is the shape parameter.[LDAReference] To distinguish the Weibull shape parameter from the effect coefficients, the shape parameter is represented as <math>Beta\,\!</math> instead of <math>\beta \,\!</math> in the remainder of this chapter.

| |

| For data following the two parameter Weibull distribution, the life characteristic used in R-DOE analysis is the scale parameter, <math>\eta \,\!</math>.[ALTReference] Since <math>\eta \,\!</math> represents life data that cannot take negative values, a logarithmic transformation is applied to it. The resulting model used in the R-DOE analysis for a two factor experiment with each factor at two levels can be written as follows:

| |

| | |

| ::<math>\ln ({{\eta }_{i}})={{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}+{{\beta }_{12}}{{x}_{i1}}{{x}_{i2}}\,\!</math>

| |

| | |

| :where:

| |

| ::• <math>{{\eta }_{i}}\,\!</math> is the value of the scale parameter at the <math>i\,\!</math> th treatment combination of the two factors

| |

| ::• <math>{{x}_{1}}\,\!</math> is the indicator variable representing the level of the first factor

| |

| ::• <math>{{x}_{2}}\,\!</math> is the indicator variable representing the level of the second factor

| |

| ::• <math>{{\beta }_{0}}\,\!</math> is the intercept term

| |

| ::• <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{2}}\,\!</math> are the effect coefficients for the two factors

| |

| ::• and <math>{{\beta }_{12}}\,\!</math> is the effect coefficient for the interaction of the two factors

| |

| <br>

| |

| The model can be easily expanded to include other factors and their interactions. Note that when any data follows the Weibull distribution, the logarithmic transformation of the data follows the extreme-value distribution, whose probability density function is given as follows:

| |

| | |

| ::<math>f(\ln (T))=\frac{1}{{{\sigma }''}}\exp \left[ \frac{\ln (T)-{\mu }''}{{{\sigma }''}}-\exp \left( \frac{\ln (T)-{\mu }''}{{{\sigma }''}} \right) \right]\,\!</math>

| |

| | |

| where <math>T\,\!</math> follows the Weibull distribution, <math>{\mu }''\,\!</math> is the location parameter of the extreme-value distribution and <math>{\sigma }''\,\!</math> is the scale parameter of the extreme-value distribution. The model for <math>\ln ({{\eta }_{i}})\,\!</math> and the extreme-value density above show that for R-DOE analysis of life data that follow the Weibull distribution, the random error terms, <math>{{\epsilon }_{i}}\,\!</math>, follow the extreme-value distribution (and not the normal distribution). Hence, standard regression techniques are not applicable even if the data are complete, and maximum likelihood estimation has to be used.

| |

| ===Maximum Likelihood Estimation for the Weibull Distribution===

| |

| The likelihood function for complete data in R-DOE analysis of Weibull distributed life data is:

| |

| | |

| ::<math>\begin{align}

| |

| {{L}_{failures}}= & \underset{i=1}{\overset{{{F}_{e}}}{\mathop{\prod }}}\,f({{t}_{i}},{{\eta }_{i}}) \\

| |

| = & \underset{i=1}{\overset{{{F}_{e}}}{\mathop{\prod }}}\,\left[ \frac{Beta}{{{\eta }_{i}}}{{\left( \frac{{{t}_{i}}}{{{\eta }_{i}}} \right)}^{Beta-1}}\exp \left[ -{{\left( \frac{{{t}_{i}}}{{{\eta }_{i}}} \right)}^{Beta}} \right] \right]

| |

| \end{align}\,\!</math>

| |

| | |

| :where:

| |

| ::• <math>{{F}_{e}}\,\!</math> is the total number of observed times-to-failure

| |

| ::• <math>{{\eta }_{i}}\,\!</math> is the life characteristic at the <math>i\,\!</math> th treatment

| |

| ::• and <math>{{t}_{i}}\,\!</math> is the time of the <math>i\,\!</math> th failure

| |

| <br>

| |

| For right censored data, the likelihood function is:

| |

| | |

| ::<math>{{L}_{suspensions}}=\underset{i=1}{\overset{{{S}_{e}}}{\mathop{\prod }}}\,\left[ \exp \left[ -{{\left( \frac{{{t}_{i}}}{{{\eta }_{i}}} \right)}^{Beta}} \right] \right]\,\!</math>

| |

| | |

| :where:

| |

| ::• <math>{{S}_{e}}\,\!</math> is the total number of observed suspensions

| |

| ::• and <math>{{t}_{i}}\,\!</math> is the time of the <math>i\,\!</math> th suspension

| |

| <br>

| |

| For interval data, the likelihood function is:

| |

| | |

| ::<math>{{L}_{interval}}=\underset{i=1}{\overset{FI}{\mathop{\prod }}}\,\left[ \exp \left[ -{{\left( \frac{t_{i}^{1}}{{{\eta }_{i}}} \right)}^{Beta}} \right]-\exp \left[ -{{\left( \frac{t_{i}^{2}}{{{\eta }_{i}}} \right)}^{Beta}} \right] \right]\,\!</math>

| |

| | |

| :where:

| |

| ::• <math>FI\,\!</math> is the total number of interval data points

| |

| ::• <math>t_{i}^{1}\,\!</math> is the beginning time of the <math>i\,\!</math> th interval

| |

| ::• and <math>t_{i}^{2}\,\!</math> is the end time of the <math>i\,\!</math> th interval

| |

| <br>

| |

| In each of the likelihood functions, <math>{{\eta }_{i}}\,\!</math> is substituted based on the log-linear model for <math>\ln ({{\eta }_{i}})\,\!</math> given above as:

| |

| | |

| ::<math>{{\eta }_{i}}=\exp ({{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}+...)\,\!</math>

| |

| | |

| | |

The complete likelihood function when all types of data (complete, right censored and interval) are present is:

| |

| | |

| ::<math>L(Beta,{{\beta }_{0}},{{\beta }_{1}}...)={{L}_{failures}}\cdot {{L}_{suspensions}}\cdot {{L}_{interval}}\,\!</math>

| |

| | |

| | |

| Then the log-likelihood function is:

| |

| | |

| ::<math>\Lambda (Beta,{{\beta }_{0}},{{\beta }_{1}}...)=\ln (L)\,\!</math>

| |

| | |

| | |

| The MLE estimates are obtained by solving for parameters <math>(Beta,{{\beta }_{0}},{{\beta }_{1}}...)\,\!</math> so that:

| |

| | |

| ::<math>\begin{align}

| |

| \frac{\partial \Lambda }{\partial Beta}= & 0 \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{0}}}= & 0 \\

| |

| \frac{\partial \Lambda }{\partial {{\beta }_{1}}}= & 0 \\

| |

| & ...

| |

| \end{align}\,\!</math>

| |

| | |

| Once the estimates are obtained, the significance of any parameter, <math>{{\theta }_{i}}\,\!</math>, can be assessed using the likelihood ratio test. Other results can also be obtained as discussed in Sections [[Highly_Fractional_Factorial_Designs#Maximum_Likelihood_Estimation_for_the_Lognormal_Distribution| MLE for the Lognormal]] and [[Highly_Fractional_Factorial_Designs#Fisher_Matrix_Bounds_on_Parameters| Fisher Matrix Bounds on Parameters]].
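
The log-likelihood described above can be evaluated numerically before it is handed to an optimizer. The following is a minimal Python sketch, not the DOE++ implementation. The function names <code>eta</code> and <code>weibull_log_likelihood</code> and the data layout are assumptions made for illustration, and the data in the usage lines are hypothetical. The interval term is written as the probability of failing between <math>t_{i}^{1}\,\!</math> and <math>t_{i}^{2}\,\!</math>.

```python
import math


def eta(coeffs, x):
    """Life characteristic eta_i = exp(b0 + b1*x_i1 + b2*x_i2 + ...)."""
    return math.exp(coeffs[0] + sum(b * xi for b, xi in zip(coeffs[1:], x)))


def weibull_log_likelihood(shape, coeffs, failures=(), suspensions=(), intervals=()):
    """Return ln(L_failures * L_suspensions * L_interval).

    shape       : Weibull shape parameter (written Beta in the text)
    coeffs      : [b0, b1, b2, ...] effect coefficients
    failures    : iterable of (t, x) exact times-to-failure
    suspensions : iterable of (t, x) right censored times
    intervals   : iterable of (t1, t2, x) with the failure between t1 and t2
    Each x is a tuple of coded factor levels for that observation.
    """
    ll = 0.0
    for t, x in failures:
        e = eta(coeffs, x)
        z = t / e
        # ln of (Beta/eta) * (t/eta)^(Beta-1) * exp(-(t/eta)^Beta)
        ll += math.log(shape) - math.log(e) + (shape - 1.0) * math.log(z) - z ** shape
    for t, x in suspensions:
        # ln of exp(-(t/eta)^Beta)
        ll += -((t / eta(coeffs, x)) ** shape)
    for t1, t2, x in intervals:
        e = eta(coeffs, x)
        # ln of the probability of failing between t1 and t2
        ll += math.log(math.exp(-((t1 / e) ** shape)) - math.exp(-((t2 / e) ** shape)))
    return ll


# Hypothetical data for one factor at coded levels -1 and +1.
value = weibull_log_likelihood(
    shape=1.5,
    coeffs=[4.0, 0.3],
    failures=[(40.0, (-1,)), (75.0, (1,))],
    suspensions=[(100.0, (1,))],
    intervals=[(20.0, 40.0, (-1,))],
)
print(value)
```

The MLE estimates are the values of <code>shape</code> and <code>coeffs</code> that maximize this function; any general-purpose numerical optimizer can be applied to its negative.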

| |

| | |

| =R-DOE Analysis of Data Following the Exponential Distribution=

| |

| The exponential distribution is a special case of the Weibull distribution when the shape parameter <math>Beta\,\!</math> is equal to 1. Substituting <math>Beta=1\,\!</math> in the probability density function for the 2-parameter Weibull distribution gives:

| |

|

| |

|

| ::<math>\begin{align}

| | Assume again we want to use Taguchi OA L16(2^6*4^3). Click '''Specify Interaction Terms''' as shown above to specify the interaction effects that you want to estimate in the experiment. |

| f(T)= & \frac{1}{\eta }\exp \left( -\frac{T}{\eta } \right) \\

| |

| = & \lambda \exp (-\lambda T)

| |

| \end{align}\,\!</math>

| |

| | |

| where <math>1/\eta \,\!</math> of the Weibull ''pdf'' has been replaced by <math>\lambda \,\!</math>. Parameter <math>\lambda \,\!</math> is called the failure rate [LDAReference]. Hence, R-DOE analysis for exponentially distributed data can be carried out by substituting <math>Beta=1\,\!</math> and replacing <math>1/\eta \,\!</math> by <math>\lambda \,\!</math> in the Weibull distribution.
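
The substitution can be checked numerically. The short Python sketch below compares the 2-parameter Weibull ''pdf'' with a shape parameter of 1 against the exponential ''pdf'' with <math>\lambda =1/\eta \,\!</math>. The function names and the value of <code>eta_value</code> are hypothetical and chosen only for illustration.

```python
import math


def weibull_pdf(t, eta, shape):
    """2-parameter Weibull pdf with scale eta and shape parameter shape."""
    return (shape / eta) * (t / eta) ** (shape - 1.0) * math.exp(-((t / eta) ** shape))


def exponential_pdf(t, lam):
    """Exponential pdf with failure rate lam."""
    return lam * math.exp(-lam * t)


eta_value = 50.0  # hypothetical life characteristic
for t in (5.0, 50.0, 120.0):
    # With shape = 1 the two densities agree at every time t.
    assert math.isclose(weibull_pdf(t, eta_value, 1.0), exponential_pdf(t, 1.0 / eta_value))
```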

| |

| | |

| =Model Diagnostics=

| |

| Residual plots can be used to check if the model obtained, based on the MLE estimates, is a good fit to the data. DOE++ uses standardized residuals for R-DOE analyses. If the data follows the lognormal distribution, then standardized residuals are calculated using the following equation:

| |

| | |

| ::<math>\begin{align}

| |

| {{{\hat{e}}}_{i}}= & \frac{\ln ({{t}_{i}})-{{{\hat{\mu }}}_{i}}}{{{{\hat{\sigma }}}^{\prime }}} \\

| |

| = & \frac{\ln ({{t}_{i}})-({{{\hat{\beta }}}_{0}}+{{{\hat{\beta }}}_{1}}{{x}_{i1}}+{{{\hat{\beta }}}_{2}}{{x}_{i2}}+...)}{{{{\hat{\sigma }}}^{\prime }}}

| |

| \end{align}\,\!</math>

| |

| | |

| | |

| For the probability plot, the standardized residuals are displayed on a normal probability plot. This is because under the assumed model for the lognormal distribution, the standardized residuals should follow a normal distribution with a mean of 0 and a standard deviation of 1.

| |

| <br>

| |

| For data that follows the Weibull distribution, the standardized residuals are calculated as shown next:

| |

| | |

| ::<math>\begin{align}

| |

{{{\hat{e}}}_{i}}= & \widehat{Beta}[\ln ({{t}_{i}})-\ln ({{{\hat{\eta }}}_{i}})] \\

| |

= & \widehat{Beta}[\ln ({{t}_{i}})-({{{\hat{\beta }}}_{0}}+{{{\hat{\beta }}}_{1}}{{x}_{i1}}+{{{\hat{\beta }}}_{2}}{{x}_{i2}}+...)]

| |

| \end{align}\,\!</math>

| |

| | |

| | |

The probability plot, in this case, is used to check if the residuals follow the standard smallest extreme value distribution, which has a location parameter of 0 and a scale parameter of 1. Note that in all residual plots, when an observation, <math>{{t}_{i}}\,\!</math>, is censored, the corresponding residual is also censored.
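
The two residual definitions in this section can be sketched in Python as follows. This is an illustrative sketch rather than the DOE++ code: the function names are assumptions, <code>coeffs</code> holds the estimated coefficients <math>{{\hat{\beta }}_{0}},{{\hat{\beta }}_{1}},...\,\!</math>, and <code>x</code> holds the coded factor levels of the observation.

```python
import math


def linear_predictor(coeffs, x):
    """b0 + b1*x_i1 + b2*x_i2 + ... for one observation."""
    return coeffs[0] + sum(b * xi for b, xi in zip(coeffs[1:], x))


def lognormal_std_residual(t, x, coeffs, sigma):
    """(ln(t) - mu_hat) / sigma_hat, with mu_hat given by the linear predictor."""
    return (math.log(t) - linear_predictor(coeffs, x)) / sigma


def weibull_std_residual(t, x, coeffs, shape):
    """shape_hat * (ln(t) - ln(eta_hat)), with ln(eta_hat) given by the linear predictor."""
    return shape * (math.log(t) - linear_predictor(coeffs, x))
```

For a censored observation the same formulas are applied to the censoring time, and the resulting residual is treated as censored on the plot.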

| |

| | |

| =Application Examples=

| |

| ==Example==

| |

| <br>

| |

| [[Image:doe11.5.png|thumb|center|400px|The <math>2^{5-2}\,\!</math> experiment design for the [[Highly_Fractional_Factorial_Designs#Example_3| example]] to study factors affecting the reliability of fluorescent lights.]]

| |

| | | |

| <br>

| | [[Image:doe8_12.png|center|420px|Specifying the interaction terms of interest.|link=]] |

| [[Image:doe11.6.png|thumb|center|400px|Results of the R-DOE analysis for the experiment in the [[Highly_Fractional_Factorial_Designs#Example_3| example]].]] | |

|

| |

| <br>

| |

This example illustrates the use of R-DOE analysis to design reliability into products. An experiment was carried out to investigate the effect of five factors (each at two levels) on the reliability of fluorescent lights (Taguchi, 1987, p. 930). The factors, <math>A\,\!</math> through <math>E\,\!</math>, were studied using a <math>2^{5-2}\,\!</math> design (with the defining relations <math>D=-AC\,\!</math> and <math>E=-BC\,\!</math>) under the assumption that all interaction effects, except <math>AB\,\!</math> <math>(=DE)\,\!</math>, are inactive. For each treatment, two lights were tested (two replicates), with readings taken every two days. The experiment was run for 20 days, and any light that had not failed by the 20th day was treated as a suspension. The experimental design and the corresponding failure times are shown in the figure above. The short test duration and failure times are probably the result of testing the lights at stress levels higher than normal use conditions. The failure times of the lights were assumed to follow the lognormal distribution.

| |

| <br>

| |

|

| |

|

| The analysis results from DOE++ for this experiment are shown in the figure above. The results are obtained by selecting the main effects of the five factors and the interaction <math>AB\,\!</math> using the Select Effects icon in the Control Panel. The results show that factors <math>B\,\!</math>, <math>D\,\!</math> and <math>E\,\!</math> are active at a significance level of 0.05. The MLE estimates of the effect coefficients corresponding to these factors are <math>-0.2015\,\!</math>, <math>0.2729\,\!</math> and <math>-0.1527\,\!</math>, respectively. Based on these coefficients, the best settings for these effects to improve the reliability of the fluorescent lights (by maximizing the response, which in this case is the failure time) are:

| When you click '''OK''', you will see a message warning that some of the specified interaction effects are aliased with main effects. This means that it is not possible to clearly estimate all the main effects and the specified interaction effects AC and BD. |

| <br>

| |

| <br>

| |

| <br>

| |

| :• Factor <math>B\,\!</math> should be set at the lower level of <math>-1\,\!</math> since its coefficient is negative

| |

| :• Factor <math>D\,\!</math> should be set at the higher level of <math>1\,\!</math> since its coefficient is positive

| |

| :• Factor <math>E\,\!</math> should be set at the lower level of <math>-1\,\!</math> since its coefficient is negative

| |

| <br>

| |

| <br>

| |

| Note that, since actual factor levels are not disclosed (presumably for proprietary reasons), predictions beyond the test conditions cannot be carried out in this case.
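
The rule used above to choose the settings can be sketched in Python. Because the fitted model is linear in the coded factor levels, a positive coefficient increases the response at the <math>+1\,\!</math> level and a negative coefficient increases it at the <math>-1\,\!</math> level. The function name <code>best_levels</code> is hypothetical, and the sketch assumes that the listed factors are not involved in any active interaction.

```python
def best_levels(coefficients):
    """Pick the coded level (+1 or -1) that maximizes the response for each factor.

    coefficients maps a factor name to its estimated effect coefficient.
    Assumes no active interactions among the listed factors; a coefficient of
    exactly zero is not expected for a significant effect and maps to -1 here.
    """
    return {factor: (1 if coef > 0 else -1) for factor, coef in coefficients.items()}


# MLE estimates of the significant effects reported in this example.
print(best_levels({"B": -0.2015, "D": 0.2729, "E": -0.1527}))
# {'B': -1, 'D': 1, 'E': -1}
```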

| |

| <br>

| |

| <br>

| |

|

| |

|

| ==Example==

| | This can be explained by checking the alias table of an L16(2^6*4^3) design as given below. |

| <br>

| |

Consider a product whose reliability is thought to be affected by eight potential factors: <math>A\,\!</math> (temperature), <math>B\,\!</math> (humidity), <math>C\,\!</math> (load), <math>D\,\!</math> (fan-speed), <math>E\,\!</math> (voltage), <math>F\,\!</math> (material), <math>G\,\!</math> (vibration) and <math>H\,\!</math> (current). Assuming that all interaction effects are absent, a <math>2^{8-4}\,\!</math> design is used to investigate the eight factors at two levels. The generators used to obtain the design are <math>E=ABC\,\!</math>, <math>F=BCD\,\!</math>, <math>G=ACD\,\!</math> and <math>H=ABD\,\!</math>. The design and the corresponding life data obtained are shown in the figure below. Readings for the experiment are taken every 20 time units and the test is terminated at 200 time units. The life of the product is assumed to follow the Weibull distribution.

| |

| <br>

| |

|

| |

|

| The results from DOE++ for this experiment are shown in the figure below. The results show that only factors <math>A\,\!</math> and <math>D\,\!</math> are active at a significance level of 0.1. <br>

| | {| style="text-align:center;" cellpadding="2" border="1" align="center" |

| Assume that, in terms of the actual units, the <math>-1\,\!</math> level of factor <math>A\,\!</math> corresponds to a temperature of 333 <math>K\,\!</math> and the <math>+1\,\!</math> level corresponds to a temperature of 383 <math>K\,\!</math>. Similarly, assume that the two levels of factor <math>D\,\!</math> are 1000 <math>rpm\,\!</math> and 2000 <math>rpm\,\!</math> respectively. From the MLE estimates of the effect coefficients it can be noted that to improve reliability (by maximizing the response) factors <math>A\,\!</math> and <math>D\,\!</math> should be set as follows:

| | |- |

| <br>

| | |1|| 2|| 3|| 4|| 5|| 6|| 7|| 8|| 9 |

| <br>

| | |- |