Multiple Linear Regression Analysis: Difference between revisions

Chuck Smith (talk | contribs) No edit summary |


| (145 intermediate revisions by 3 users not shown) | |||

| Line 1: | Line 1: | ||

{{Template:Doebook|4}} | {{Template:Doebook|4}} | ||

This chapter expands on the analysis of simple linear regression models and discusses the analysis of multiple linear regression models. A major portion of the results displayed in | This chapter expands on the analysis of simple linear regression models and discusses the analysis of multiple linear regression models. A major portion of the results displayed in [https://koi-3QN72QORVC.marketingautomation.services/net/m?md=Rw01CJDOxn%2FabhkPlZsy6DwBQ%2BaCXsGR Weibull++] DOE folios are explained in this chapter because these results are associated with multiple linear regression. One of the applications of multiple linear regression models is Response Surface Methodology (RSM). RSM is a method used to locate the optimum value of the response and is one of the final stages of experimentation. It is discussed in [[Response_Surface_Methods_for_Optimization| Response Surface Methods]]. Towards the end of this chapter, the concept of using indicator variables in regression models is explained. Indicator variables are used to represent qualitative factors in regression models. The concept of using indicator variables is important to gain an understanding of ANOVA models, which are the models used to analyze data obtained from experiments. These models can be thought of as first order multiple linear regression models where all the factors are treated as qualitative factors. ANOVA models are discussed in the [[One Factor Designs]] and [[General Full Factorial Designs]] chapters. | ||

==Multiple Linear Regression Model== | ==Multiple Linear Regression Model== | ||

| Line 10: | Line 10: | ||

The model is linear because it is linear in the parameters <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{2}}\,\!</math>. The model describes a plane in the three dimensional space of <math>Y\,\!</math>, <math>{{x}_{1}}\,\!</math> and <math>{{x}_{2}}\,\!</math>. The parameter <math>{{\beta }_{0}}\,\!</math> is the intercept of this plane. Parameters <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{2}}\,\!</math> are referred to as partial regression coefficients. Parameter <math>{{\beta }_{1}}\,\!</math> represents the change in the mean response corresponding to a unit change in <math>{{x}_{1}}\,\!</math> when <math>{{x}_{2}}\,\!</math> is held constant. Parameter <math>{{\beta }_{2}}\,\!</math> represents the change in the mean response corresponding to a unit change in <math>{{x}_{2}}\,\!</math> when <math>{{x}_{1}}\,\!</math> is held constant. | The model is linear because it is linear in the parameters <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{2}}\,\!</math>. The model describes a plane in the three-dimensional space of <math>Y\,\!</math>, <math>{{x}_{1}}\,\!</math> and <math>{{x}_{2}}\,\!</math>. The parameter <math>{{\beta }_{0}}\,\!</math> is the intercept of this plane. Parameters <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{2}}\,\!</math> are referred to as ''partial regression coefficients''. Parameter <math>{{\beta }_{1}}\,\!</math> represents the change in the mean response corresponding to a unit change in <math>{{x}_{1}}\,\!</math> when <math>{{x}_{2}}\,\!</math> is held constant. Parameter <math>{{\beta }_{2}}\,\!</math> represents the change in the mean response corresponding to a unit change in <math>{{x}_{2}}\,\!</math> when <math>{{x}_{1}}\,\!</math> is held constant. | ||

Consider the following example of a multiple linear regression model with two predictor variables, <math>{{x}_{1}}\,\!</math> and <math>{{x}_{2}}\,\!</math> : | Consider the following example of a multiple linear regression model with two predictor variables, <math>{{x}_{1}}\,\!</math> and <math>{{x}_{2}}\,\!</math> : | ||

| Line 30: | Line 30: | ||

[[Image:doe5.1.png | [[Image:doe5.1.png|center|437px|Regression plane for the model <math>Y=30+5 x_1+7 x_2+\epsilon\,\!</math>]] | ||

[[Image:doe5.2.png | [[Image:doe5.2.png|center|337px|Contour plot for the model <math>Y=30+5 x_1+7 x_2+\epsilon\,\!</math>]] | ||

| Line 51: | Line 51: | ||

[[Image:doe5.3.png | [[Image:doe5.3.png|center|437px|Regression plane for the model <math>Y=30+5 x_1+7 x_2+3 x_1 x_2+\epsilon\,\!</math>]] | ||

[[Image:doe5.4.png | [[Image:doe5.4.png|center|337px|Contour plot for the model <math>Y=30+5 x_1+7 x_2+3 x_1 x_2+\epsilon\,\!</math>]] | ||
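The difference between the two models plotted above can be checked numerically. The following is a minimal sketch that evaluates the mean response (the error term is dropped) for the model without interaction and for the model with the <math>3 x_1 x_2\,\!</math> term; the function names are hypothetical and chosen only for illustration.

```python
def mean_response(x1, x2, interaction=0.0):
    """Mean of Y = 30 + 5*x1 + 7*x2 + interaction*x1*x2 + eps (eps has mean zero)."""
    return 30.0 + 5.0 * x1 + 7.0 * x2 + interaction * x1 * x2


def effect_of_x1(x2, interaction=0.0):
    """Change in the mean response for a unit increase in x1 with x2 held constant."""
    return mean_response(1.0, x2, interaction) - mean_response(0.0, x2, interaction)


# Without interaction the effect of x1 is the same at every level of x2.
additive = [effect_of_x1(x2) for x2 in (0.0, 1.0, 2.0)]

# With the 3*x1*x2 term the effect of x1 depends on the level of x2.
with_interaction = [effect_of_x1(x2, interaction=3.0) for x2 in (0.0, 1.0, 2.0)]

print(additive)
print(with_interaction)
```

In the first model the change per unit of <math>{{x}_{1}}\,\!</math> stays at the partial regression coefficient, 5, whereas in the second model it grows by 3 for every unit increase in <math>{{x}_{2}}\,\!</math>.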

| Line 67: | Line 67: | ||

::<math>Y=500+5{{x}_{1}}+7{{x}_{2}}-3{{x}_{3}}-5{{x}_{4}}+3{{x}_{5}}+\epsilon\,\!</math> | ::<math>Y=500+5{{x}_{1}}+7{{x}_{2}}-3{{x}_{3}}-5{{x}_{4}}+3{{x}_{5}}+\epsilon\,\!</math> | ||

==Estimating Regression Models Using Least Squares== | ==Estimating Regression Models Using Least Squares== | ||

| Line 150: | Line 144: | ||

The estimated regression model is also referred to as the fitted model. The observations, <math>{{y}_{i}}\,\!</math>, may be different from the fitted values <math>{{\hat{y}}_{i}}\,\!</math> obtained from this model. The difference between these two values is the residual, <math>{{e}_{i}}\,\!</math>. The vector of residuals, <math>e\,\!</math>, is obtained as: | The estimated regression model is also referred to as the ''fitted model''. The observations, <math>{{y}_{i}}\,\!</math>, may be different from the fitted values <math>{{\hat{y}}_{i}}\,\!</math> obtained from this model. The difference between these two values is the residual, <math>{{e}_{i}}\,\!</math>. The vector of residuals, <math>e\,\!</math>, is obtained as: | ||

| Line 172: | Line 166: | ||

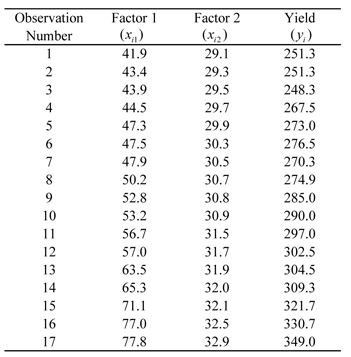

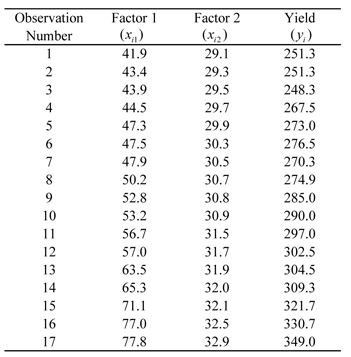

[[Image:doet5.1.png| | [[Image:doet5.1.png||center|351px|Observed yield data for various levels of two factors.|link=]] | ||

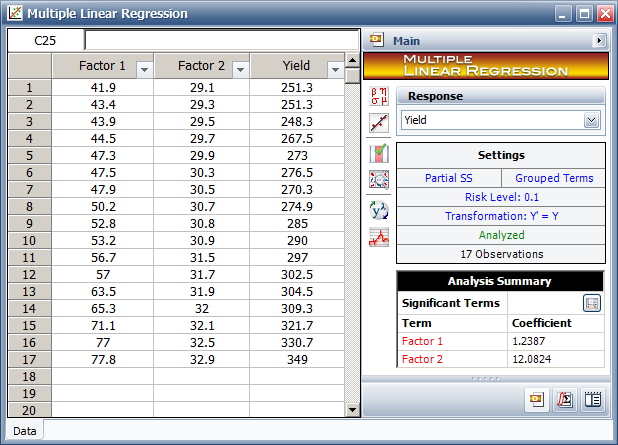

The data of the above table can be entered into | The data of the above table can be entered into the Weibull++ DOE folio using the multiple linear regression folio tool as shown in the following figure. | ||

[[Image:doe5_7.png|center|618px|Multiple Regression tool in Weibull++ with the data in the table.|link=]] | |||

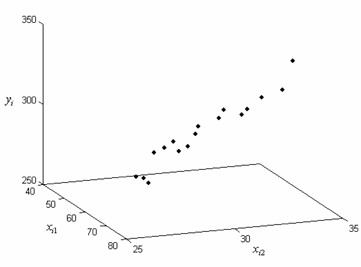

A scatter plot for the data is shown next. | |||

[[Image:doe5.8.png|center|361px|Three-dimensional scatter plot for the observed data in the table.|link=]] | |||

The first order regression model applicable to this data set having two predictor variables is: | |||

| Line 199: | Line 205: | ||

349.0 \\ | 349.0 \\ | ||

\end{matrix} \right]\,\!</math> | \end{matrix} \right]\,\!</math> | ||

| Line 252: | Line 252: | ||

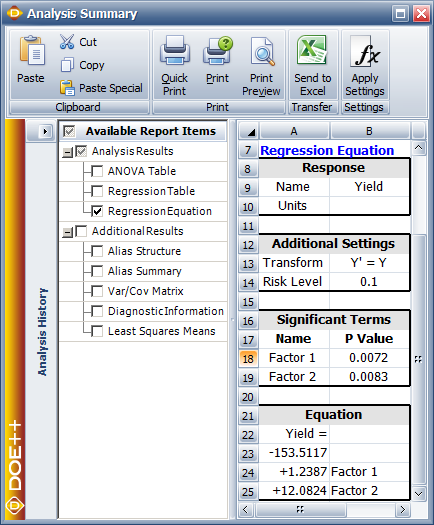

The fitted regression model can be viewed in | The fitted regression model can be viewed in the Weibull++ DOE folio, as shown next. | ||

[[Image:doe5_9.png|center|434px|Equation of the fitted regression model for the data from the table.|link=]] | |||

A plot of the fitted regression plane is shown in the following figure. | |||

[[Image:doe5.10.png|center|362px|Fitted regression plane <math>\hat{y}=-153.5+1.24 x_1+12.08 x_2\,\!</math> for the data from the table.|link=]] | |||
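As a rough illustration, the fitted plane given in the figure caption can be evaluated at any setting of the two predictors. The sketch below uses the rounded coefficients from the caption, so its output may differ slightly from values computed with the unrounded estimates; the function name and the chosen point (47 units of <math>{{x}_{1}}\,\!</math> and 31 units of <math>{{x}_{2}}\,\!</math>) are used only as an example.

```python
def fitted_response(x1, x2):
    """Evaluate the fitted plane using the rounded coefficients from the caption."""
    return -153.5 + 1.24 * x1 + 12.08 * x2


# Point chosen for illustration: x1 = 47, x2 = 31.
y_hat = fitted_response(47, 31)
print(round(y_hat, 2))
```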

The fitted regression model can be used to obtain fitted values, <math>{{\hat{y}}_{i}}\,\!</math>, corresponding to an observed response value, <math>{{y}_{i}}\,\!</math>. For example, the fitted value corresponding to the fifth observation is: | |||

| Line 263: | Line 273: | ||

& = & 266.3 | & = & 266.3 | ||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||

| Line 282: | Line 286: | ||

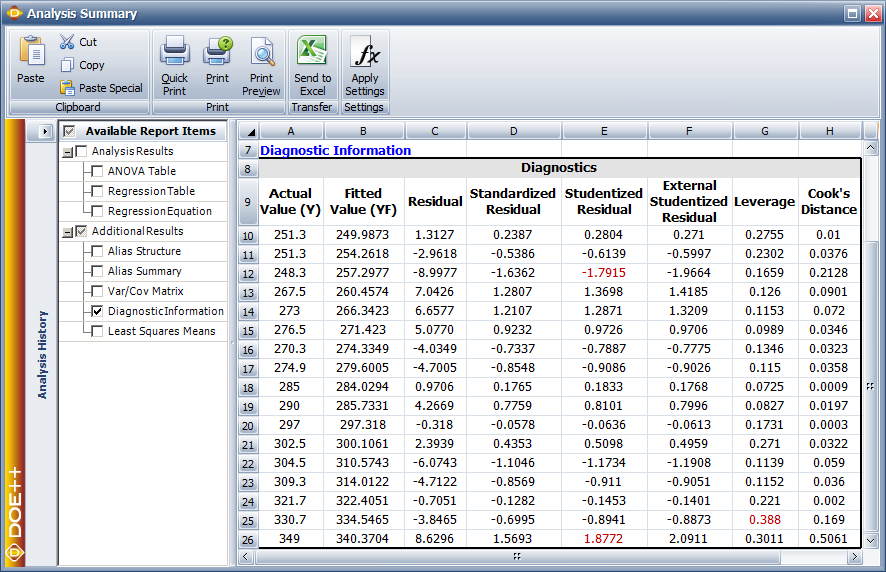

In | In Weibull++ DOE folios, fitted values and residuals are shown in the Diagnostic Information table of the detailed summary of results. The values are shown in the following figure. | ||

[[Image:doe5_11.png|center|886px|Fitted values and residuals for the data in the table.|link=]] | |||

The fitted regression model can also be used to predict response values. For example, to obtain the response value for a new observation corresponding to 47 units of <math>{{x}_{1}}\,\!</math> and 31 units of <math>{{x}_{2}}\,\!</math>, the value is calculated using: | |||

| Line 297: | Line 307: | ||

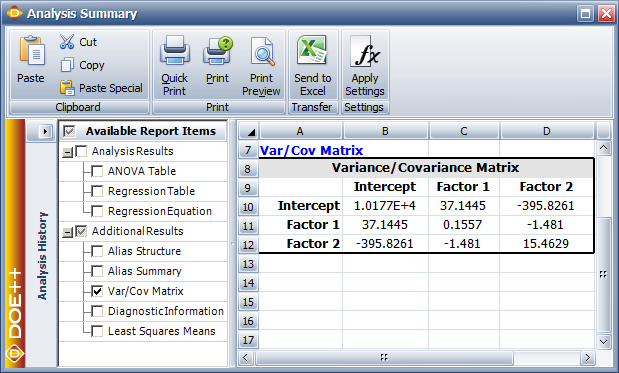

<math>C\,\!</math> is a symmetric matrix whose diagonal elements, <math>{{C}_{jj}}\,\!</math>, represent the variance of the estimated <math>j\,\!</math> th regression coefficient, <math>{{\hat{\beta }}_{j}}\,\!</math>. The off-diagonal elements, <math>{{C}_{ij}}\,\!</math>, represent the covariance between the <math>i\,\!</math> th and <math>j\,\!</math> th estimated regression coefficients, <math>{{\hat{\beta }}_{i}}\,\!</math> and <math>{{\hat{\beta }}_{j}}\,\!</math>. The value of <math>{{\hat{\sigma }}^{2}}\,\!</math> is obtained using the error mean square, <math>M{{S}_{E}}\,\!</math>. The variance-covariance matrix for the data in the table (see [[Multiple_Linear_Regression_Analysis#Estimating_Regression_Models_Using_Least_Squares| Estimating Regression Models Using Least Squares]]) can be viewed in the DOE folio, as shown next. | |||

[[Image:doe5_12.png|center|619px|The variance-covariance matrix for the data in the table.|link=]] | |||

Calculations to obtain the matrix are given in this [[Multiple_Linear_Regression_Analysis#Example| example]]. The positive square root of <math>{{C}_{jj}}\,\!</math> represents the estimated standard deviation of the <math>j\,\!</math> th regression coefficient, <math>{{\hat{\beta }}_{j}}\,\!</math>, and is called the estimated standard error of <math>{{\hat{\beta }}_{j}}\,\!</math> (abbreviated <math>se({{\hat{\beta }}_{j}})\,\!</math> ). | |||

::<math>se({{\hat{\beta }}_{j}})=\sqrt{{{C}_{jj}}}\,\!</math> | |||
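The relationship between the variance-covariance matrix and the standard errors can be sketched in a few lines of NumPy. The example assumes the usual least squares form <math>C={{\hat{\sigma }}^{2}}{{({{X}^{\prime }}X)}^{-1}}\,\!</math> with <math>{{\hat{\sigma }}^{2}}\,\!</math> estimated by the error mean square. The data are synthetic and generated only for illustration; they are not the yield data from the table.

```python
import numpy as np

# Synthetic data (an assumption for illustration, not the data from the table).
rng = np.random.default_rng(0)
n = 20
x1 = rng.uniform(0.0, 10.0, n)
x2 = rng.uniform(0.0, 10.0, n)
y = 10.0 + 2.0 * x1 + 3.0 * x2 + rng.normal(0.0, 1.0, n)

# Design matrix with a leading column of ones for the intercept.
X = np.column_stack([np.ones(n), x1, x2])

# Least squares estimates and residuals.
XtX_inv = np.linalg.inv(X.T @ X)
beta_hat = XtX_inv @ X.T @ y
residuals = y - X @ beta_hat

# Error mean square with n - (k + 1) degrees of freedom.
mse = residuals @ residuals / (n - X.shape[1])

# Variance-covariance matrix of the estimated coefficients.
C = mse * XtX_inv

# Standard errors are the positive square roots of the diagonal elements.
se = np.sqrt(np.diag(C))
print(se)
```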

==Hypothesis Tests in Multiple Linear Regression== | ==Hypothesis Tests in Multiple Linear Regression== | ||

| Line 312: | Line 322: | ||

Three types of hypothesis tests can be carried out for multiple linear regression models: | Three types of hypothesis tests can be carried out for multiple linear regression models: | ||

#Test for significance of regression: This test checks the significance of the whole regression model. | |||

#<math>t\,\!</math> test: This test checks the significance of individual regression coefficients. | |||

#<math>F\,\!</math> test: This test can be used to simultaneously check the significance of a number of regression coefficients. It can also be used to test individual coefficients. | |||

===Test for Significance of Regression=== | ===Test for Significance of Regression=== | ||

| Line 386: | Line 394: | ||

=====Example===== | =====Example===== | ||

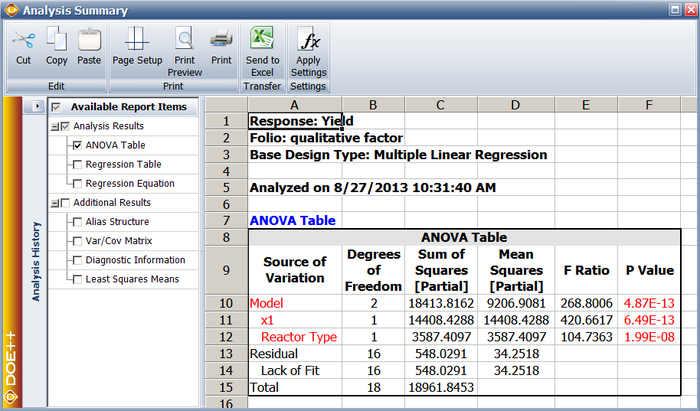

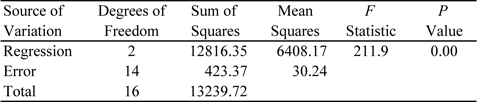

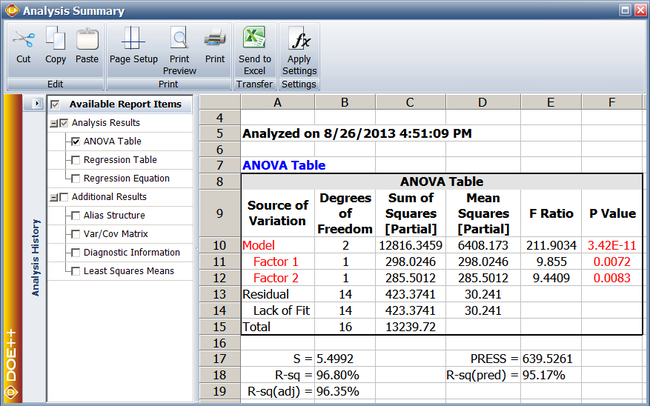

The test for the significance of regression, for the regression model obtained for the data in the table(see [[Multiple_Linear_Regression_Analysis#Estimating_Regression_Models_Using_Least_Squares| Estimating Regression Models Using Least Squares]]), is illustrated in this example. The null hypothesis for the model is: | The test for the significance of regression, for the regression model obtained for the data in the table (see [[Multiple_Linear_Regression_Analysis#Estimating_Regression_Models_Using_Least_Squares| Estimating Regression Models Using Least Squares]]), is illustrated in this example. The null hypothesis for the model is: | ||

| Line 405: | Line 413: | ||

The hat matrix, <math>H\,\!</math> is calculated as follows using the design matrix <math>X\,\!</math> from Example: | The hat matrix, <math>H\,\!</math> is calculated as follows using the design matrix <math>X\,\!</math> from the previous [[Multiple_Linear_Regression_Analysis#Example| example]]: | ||

| Line 429: | Line 437: | ||

The degrees of freedom associated with <math>S{{S}_{R}}\,\!</math> is <math>k\,\!</math>, which equals two since there are two predictor variables in the data in the table(see [[Multiple_Linear_Regression_Analysis#Estimating_Regression_Models_Using_Least_Squares| Multiple Linear Regression Analysis]]). Therefore, the regression mean square is: | The degrees of freedom associated with <math>S{{S}_{R}}\,\!</math> is <math>k\,\!</math>, which equals two since there are two predictor variables in the data in the table (see [[Multiple_Linear_Regression_Analysis#Estimating_Regression_Models_Using_Least_Squares| Multiple Linear Regression Analysis]]). Therefore, the regression mean square is: | ||

| Line 481: | Line 489: | ||

[[Image:doet5.2.png | [[Image:doet5.2.png|center|477px|ANOVA table for the significance of regression test.|link=]] | ||

===Test on Individual Regression Coefficients (''t'' Test)=== | ===Test on Individual Regression Coefficients (''t'' Test)=== | ||

| Line 505: | Line 513: | ||

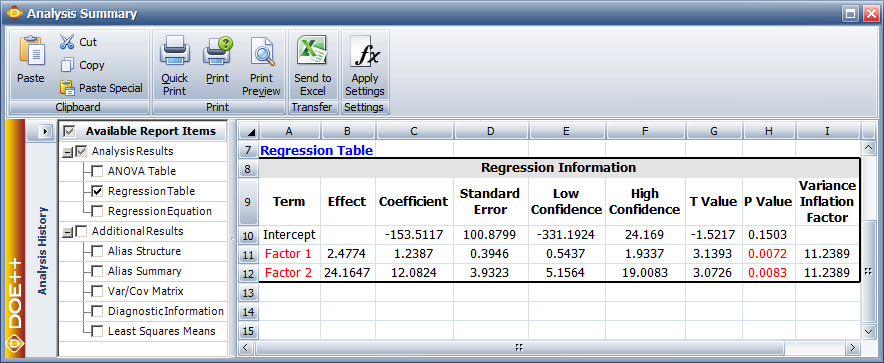

This test measures the contribution of a variable while the remaining variables are included in the model. For the model <math>\hat{y}={{\hat{\beta }}_{0}}+{{\hat{\beta }}_{1}}{{x}_{1}}+{{\hat{\beta }}_{2}}{{x}_{2}}+{{\hat{\beta }}_{3}}{{x}_{3}}\,\!</math>, if the test is carried out for <math>{{\beta }_{1}}\,\!</math>, then the test will check the significance of including the variable <math>{{x}_{1}}\,\!</math> in the model that contains <math>{{x}_{2}}\,\!</math> and <math>{{x}_{3}}\,\!</math> (i.e., the model <math>\hat{y}={{\hat{\beta }}_{0}}+{{\hat{\beta }}_{2}}{{x}_{2}}+{{\hat{\beta }}_{3}}{{x}_{3}}\,\!</math> ). Hence the test is also referred to as a partial or marginal test. In DOE | This test measures the contribution of a variable while the remaining variables are included in the model. For the model <math>\hat{y}={{\hat{\beta }}_{0}}+{{\hat{\beta }}_{1}}{{x}_{1}}+{{\hat{\beta }}_{2}}{{x}_{2}}+{{\hat{\beta }}_{3}}{{x}_{3}}\,\!</math>, if the test is carried out for <math>{{\beta }_{1}}\,\!</math>, then the test will check the significance of including the variable <math>{{x}_{1}}\,\!</math> in the model that contains <math>{{x}_{2}}\,\!</math> and <math>{{x}_{3}}\,\!</math> (i.e., the model <math>\hat{y}={{\hat{\beta }}_{0}}+{{\hat{\beta }}_{2}}{{x}_{2}}+{{\hat{\beta }}_{3}}{{x}_{3}}\,\!</math> ). Hence the test is also referred to as a partial or marginal test. In DOE folios, this test is displayed in the Regression Information table. | ||

====Example==== | ====Example==== | ||

| Line 584: | Line 592: | ||

Since the <math>p\,\!</math> value is less than the significance level, <math>\alpha =0.1\,\!</math>, it is concluded that <math>{{\beta }_{2}}\,\!</math> is significant. The hypothesis test on <math>{{\beta }_{1}}\,\!</math> can be carried out in a similar manner. | Since the <math>p\,\!</math> value is less than the significance level, <math>\alpha =0.1\,\!</math>, it is concluded that <math>{{\beta }_{2}}\,\!</math> is significant. The hypothesis test on <math>{{\beta }_{1}}\,\!</math> can be carried out in a similar manner. | ||

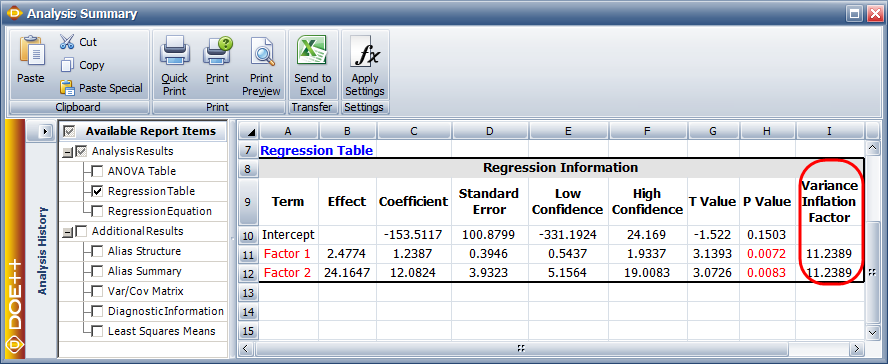

As explained in [[Simple_Linear_Regression_Analysis| Simple Linear Regression Analysis]], in DOE | As explained in [[Simple_Linear_Regression_Analysis| Simple Linear Regression Analysis]], in DOE folios, the information related to the <math>t\,\!</math> test is displayed in the Regression Information table as shown in the figure below. | ||

[[Image:doe5_13.png|center|884px|Regression results for the data.|link=]] | |||

[[ | |||

In this table, the <math>t\,\!</math> test for <math>{{\beta }_{2}}\,\!</math> is displayed in the row for the term Factor 2 because <math>{{\beta }_{2}}\,\!</math> is the coefficient that represents this factor in the regression model. Columns labeled Standard Error, T Value and P Value represent the standard error, the test statistic for the <math>t\,\!</math> test and the <math>p\,\!</math> value for the <math>t\,\!</math> test, respectively. These values have been calculated for <math>{{\beta }_{2}}\,\!</math> in this example. The Coefficient column represents the estimates of the regression coefficients. These values are calculated as shown in [[Multiple_Linear_Regression_Analysis#Example|this]] example. The Effect column represents values obtained by multiplying the coefficients by a factor of 2. This value is useful in the case of two-level factorial experiments and is explained in [[Two_Level_Factorial_Experiments| Two-Level Factorial Experiments]]. Columns labeled Low Confidence and High Confidence represent the limits of the confidence intervals for the regression coefficients and are explained in [[Multiple_Linear_Regression_Analysis#Confidence_Intervals_in_Multiple_Linear_Regression|Confidence Intervals in Multiple Linear Regression]]. The Variance Inflation Factor column displays values that give a measure of ''multicollinearity''. This is explained in [[Multiple_Linear_Regression_Analysis#Multicollinearity|Multicollinearity]]. | |||

===Test on Subsets of Regression Coefficients (Partial ''F'' Test)=== | ===Test on Subsets of Regression Coefficients (Partial ''F'' Test)=== | ||

This test can be considered to be the general form of the <math>t\,\!</math> test mentioned in the previous section. This is because the test simultaneously checks the significance of including many (or even one) regression coefficients in the multiple linear regression model. Adding a variable to a model increases the regression sum of squares, <math>S{{S}_{R}}\,\!</math>. The test is based on this increase in the regression sum of squares. The increase in the regression sum of squares is called the extra sum of squares. | This test can be considered to be the general form of the <math>t\,\!</math> test mentioned in the previous section. This is because the test simultaneously checks the significance of including many (or even one) regression coefficients in the multiple linear regression model. Adding a variable to a model increases the regression sum of squares, <math>S{{S}_{R}}\,\!</math>. The test is based on this increase in the regression sum of squares. The increase in the regression sum of squares is called the ''extra sum of squares''. | ||

Assume that the vector of the regression coefficients, <math>\beta\,\!</math>, for the multiple linear regression model, <math>y=X\beta +\epsilon\,\!</math>, is partitioned into two vectors with the second vector, <math>{{\ | Assume that the vector of the regression coefficients, <math>\beta\,\!</math>, for the multiple linear regression model, <math>y=X\beta +\epsilon\,\!</math>, is partitioned into two vectors with the second vector, <math>{{\theta}_{2}}\,\!</math>, containing the last <math>r\,\!</math> regression coefficients, and the first vector, <math>{{\theta}_{1}}\,\!</math>, containing the first ( <math>k+1-r\,\!</math> ) coefficients as follows: | ||

::<math>\beta =\left[ \begin{matrix} | ::<math>\beta =\left[ \begin{matrix} | ||

{{\ | {{\theta}_{1}} \\ | ||

{{\ | {{\theta}_{2}} \\ | ||

\end{matrix} \right]\,\!</math> | \end{matrix} \right]\,\!</math> | ||

| Line 604: | Line 615: | ||

::<math>{{\ | ::<math>{{\theta}_{1}}=[{{\beta }_{0}},{{\beta }_{1}}...{{\beta }_{k-r}}{]}'\text{ and }{{\theta}_{2}}=[{{\beta }_{k-r+1}},{{\beta }_{k-r+2}}...{{\beta }_{k}}{]}'\text{ }\,\!</math> | ||

The hypothesis statements to test the significance of adding the regression coefficients in <math>{{\ | The hypothesis statements to test the significance of adding the regression coefficients in <math>{{\theta}_{2}}\,\!</math> to a model containing the regression coefficients in <math>{{\theta}_{1}}\,\!</math> may be written as: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

& {{H}_{0}}: & {{\ | & {{H}_{0}}: & {{\theta}_{2}}=0 \\ | ||

& {{H}_{1}}: & {{\ | & {{H}_{1}}: & {{\theta}_{2}}\ne 0 | ||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||

| Line 619: | Line 630: | ||

::<math>{{F}_{0}}=\frac{S{{S}_{R}}({{\ | ::<math>{{F}_{0}}=\frac{S{{S}_{R}}({{\theta}_{2}}|{{\theta}_{1}})/r}{M{{S}_{E}}}\,\!</math> | ||

where <math>S{{S}_{R}}({{\theta}_{2}}|{{\theta}_{1}})\,\!</math> is the increase in the regression sum of squares when the variables corresponding to the coefficients in <math>{{\theta}_{2}}\,\!</math> are added to a model already containing <math>{{\theta}_{1}}\,\!</math>, and <math>M{{S}_{E}}\,\!</math> is obtained from the equation given in [[Simple_Linear_Regression_Analysis#Mean_Squares|Simple Linear Regression Analysis]]. The value of the extra sum of squares is obtained as explained in the next section. | |||

The null hypothesis, <math>{{H}_{0}}\,\!</math>, is rejected if <math>{{F}_{0}}>{{f}_{\alpha ,r,n-(k+1)}}\,\!</math>. Rejection of <math>{{H}_{0}}\,\!</math> leads to the conclusion that at least one of the variables in <math>{{x}_{k-r+1}}\,\!</math>, <math>{{x}_{k-r+2}}\,\!</math>... <math>{{x}_{k}}\,\!</math> contributes significantly to the regression model. In a DOE folio, the results from the partial <math>F\,\!</math> test are displayed in the ANOVA table. | |||

[[Image:doe5_14.png|center|650px|ANOVA Table for Extra Sum of Squares in Weibull++.]] | |||
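The partial <math>F\,\!</math> statistic described above can be sketched with NumPy as follows. This is only an illustrative implementation under stated assumptions: the helper names are hypothetical, the data are synthetic, the design matrix is assumed to contain the intercept column, and the coefficients in <math>{{\theta}_{2}}\,\!</math> are assumed to correspond to the last <math>r\,\!</math> columns.

```python
import numpy as np


def fit_sums_of_squares(X, y):
    """Return (SS_R, SS_E) for a least squares fit; X must include the intercept column."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    fitted = X @ beta
    ss_r = float(np.sum((fitted - y.mean()) ** 2))
    ss_e = float(np.sum((y - fitted) ** 2))
    return ss_r, ss_e


def partial_f_statistic(X, y, r):
    """Partial F statistic for the last r columns of X, given the preceding columns."""
    n, p = X.shape  # p = k + 1 coefficients including the intercept
    ss_r_full, ss_e_full = fit_sums_of_squares(X, y)
    ss_r_reduced, _ = fit_sums_of_squares(X[:, : p - r], y)
    extra_ss = ss_r_full - ss_r_reduced  # SS_R(theta_2 | theta_1)
    mse = ss_e_full / (n - p)            # error mean square of the full model
    return (extra_ss / r) / mse, extra_ss


# Synthetic data with three predictors (an assumption for illustration).
rng = np.random.default_rng(7)
n = 24
x = rng.uniform(-1.0, 1.0, size=(n, 3))
y = 2.0 + 1.0 * x[:, 0] + 3.0 * x[:, 1] - 2.0 * x[:, 2] + rng.normal(0.0, 0.5, n)
X = np.column_stack([np.ones(n), x])

# Test the last two coefficients given the intercept and the first predictor.
f0, extra_ss = partial_f_statistic(X, y, r=2)
print(f0, extra_ss)
```

The statistic returned here would then be compared with the critical value <math>{{f}_{\alpha ,r,n-(k+1)}}\,\!</math>; looking up that value is left out of the sketch.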

===Types of Extra Sum of Squares=== | ===Types of Extra Sum of Squares=== | ||

The extra sum of squares can be calculated using either the partial (or adjusted) sum of squares or the sequential sum of squares. The type of extra sum of squares used affects the calculation of the test statistic for the partial <math>F\,\!</math> test described above. In DOE | The extra sum of squares can be calculated using either the partial (or adjusted) sum of squares or the sequential sum of squares. The type of extra sum of squares used affects the calculation of the test statistic for the partial <math>F\,\!</math> test described above. In DOE folios, selection for the type of extra sum of squares is available as shown in the figure below. The partial sum of squares is used as the default setting. The reason for this is explained in the following section on the partial sum of squares. | ||

====Partial Sum of Squares==== | ====Partial Sum of Squares==== | ||

| Line 639: | Line 650: | ||

Assume that we need to know the partial sum of squares for <math>{{\beta }_{2}}\,\!</math>. The partial sum of squares for <math>{{\beta }_{2}}\,\!</math> is the increase in the regression sum of squares when <math>{{\beta }_{2}}\,\!</math> is added to the model. This increase is the difference in the regression sum of squares for the full model of the equation given above and the model that includes all terms except <math>{{\beta }_{2}}\,\!</math>. These terms are <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{12}}\,\!</math>. The model that contains these terms is: | The sum of squares of regression of this model is denoted by <math>S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}})\,\!</math>. Assume that we need to know the partial sum of squares for <math>{{\beta }_{2}}\,\!</math>. The partial sum of squares for <math>{{\beta }_{2}}\,\!</math> is the increase in the regression sum of squares when <math>{{\beta }_{2}}\,\!</math> is added to the model. This increase is the difference in the regression sum of squares for the full model of the equation given above and the model that includes all terms except <math>{{\beta }_{2}}\,\!</math>. These terms are <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math> and <math>{{\beta }_{12}}\,\!</math>. The model that contains these terms is: | ||

| Line 645: | Line 656: | ||

The partial sum of squares for <math>{{\beta }_{2}}\,\!</math> can be represented as <math>S{{S}_{R}}({{\beta }_{2}}|{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}})\,\!</math> and is calculated as follows: | The sum of squares of regression of this model is denoted by <math>S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}})\,\!</math>. The partial sum of squares for <math>{{\beta }_{2}}\,\!</math> can be represented as <math>S{{S}_{R}}({{\beta }_{2}}|{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}})\,\!</math> and is calculated as follows: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

S{{S}_{R}}({{\beta }_{2}}|{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}}) | S{{S}_{R}}({{\beta }_{2}}|{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}})& = & S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}})-S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}}) | ||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||

For the present case, <math>{{\ | For the present case, <math>{{\theta}_{2}}=[{{\beta }_{2}}{]}'\,\!</math> and <math>{{\theta}_{1}}=[{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{12}}{]}'\,\!</math>. It can be noted that for the partial sum of squares, <math>{{\theta}_{1}}\,\!</math> contains all coefficients other than the coefficient being tested. | ||

A Weibull++ DOE folio has the partial sum of squares as the default selection. This is because the <math>t\,\!</math> test is a partial test, i.e., the <math>t\,\!</math> test on an individual coefficient is carried out by assuming that all the remaining coefficients are included in the model (similar to the way the partial sum of squares is calculated). The results from the <math>t\,\!</math> test are displayed in the Regression Information table. The results from the partial <math>F\,\!</math> test are displayed in the ANOVA table. To keep the results in the two tables consistent with each other, the partial sum of squares is used as the default selection for the results displayed in the ANOVA table. | |||

The partial sum of squares for all terms of a model may not add up to the regression sum of squares for the full model when the regression coefficients are correlated. If it is preferred that the extra sum of squares for all terms in the model always add up to the regression sum of squares for the full model then the sequential sum of squares should be used. | The partial sum of squares for all terms of a model may not add up to the regression sum of squares for the full model when the regression coefficients are correlated. If it is preferred that the extra sum of squares for all terms in the model always add up to the regression sum of squares for the full model then the sequential sum of squares should be used. | ||

=====Example===== | =====Example===== | ||

This example illustrates the | This example illustrates the <math>F\,\!</math> test using the partial sum of squares. The test is conducted for the coefficient <math>{{\beta }_{1}}\,\!</math> corresponding to the predictor variable <math>{{x}_{1}}\,\!</math> for the data. The regression model used for this data set in the [[Multiple_Linear_Regression_Analysis#Example| example]] is: | ||

| Line 675: | Line 685: | ||

::<math>{{F}_{0}}=\frac{S{{S}_{R}}({{\beta }_{ | ::<math>{{F}_{0}}=\frac{S{{S}_{R}}({{\beta }_{1}}|{{\beta }_{2}})/r}{M{{S}_{E}}}\,\!</math> | ||

where <math>S{{S}_{R}}({{\beta }_{ | where <math>S{{S}_{R}}({{\beta }_{1}}|{{\beta }_{2}})\,\!</math> represents the partial sum of squares for <math>{{\beta }_{1}}\,\!</math>, <math>r\,\!</math> represents the number of degrees of freedom for <math>S{{S}_{R}}({{\beta }_{1}}|{{\beta }_{2}})\,\!</math> (which is one because there is just one coefficient, <math>{{\beta }_{1}}\,\!</math>, being tested) and <math>M{{S}_{E}}\,\!</math> is the error mean square and has been calculated in the second [[Multiple_Linear_Regression_Analysis#Example_2|example]] as 30.24. | ||

The partial sum of squares for <math>{{\beta }_{1}}\,\!</math> is the difference between the regression sum of squares for the full model, <math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+\epsilon\,\!</math>, and the regression sum of squares for the model excluding <math>{{\beta }_{1}}\,\!</math>, <math>Y={{\beta }_{0}}+{{\beta }_{2}}{{x}_{2}}+\epsilon\,\!</math>. The regression sum of squares for the full model has been calculated in the second [[Multiple_Linear_Regression_Analysis#Example_2|example]] as 12816.35. Therefore: | The partial sum of squares for <math>{{\beta }_{1}}\,\!</math> is the difference between the regression sum of squares for the full model, <math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+\epsilon\,\!</math>, and the regression sum of squares for the model excluding <math>{{\beta }_{1}}\,\!</math>, <math>Y={{\beta }_{0}}+{{\beta }_{2}}{{x}_{2}}+\epsilon\,\!</math>. The regression sum of squares for the full model has been calculated in the second [[Multiple_Linear_Regression_Analysis#Example_2|example]] as 12816.35. Therefore: | ||

| Line 711: | Line 721: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

S{{S}_{R}}({{\beta }_{ | S{{S}_{R}}({{\beta }_{1}}|{{\beta }_{2}})& = & S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}})-S{{S}_{R}}({{\beta }_{0}},{{\beta }_{2}}) \\ | ||

& = & 12816.35-12518.32 \\ | & = & 12816.35-12518.32 \\ | ||

& = & 298.03 | & = & 298.03 | ||

| Line 721: | Line 731: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{f}_{0}} &= & \frac{S{{S}_{R}}({{\beta }_{ | {{f}_{0}} &= & \frac{S{{S}_{R}}({{\beta }_{1}}|{{\beta }_{2}})/r}{M{{S}_{E}}} \\ | ||

& = & \frac{298.03/1}{30.24} \\ | & = & \frac{298.03/1}{30.24} \\ | ||

& = & 9.855 | & = & 9.855 | ||

| Line 736: | Line 746: | ||

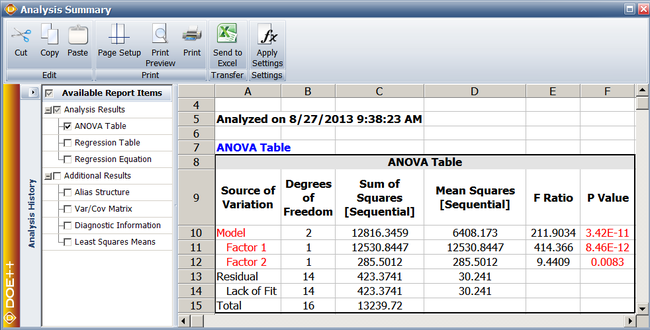

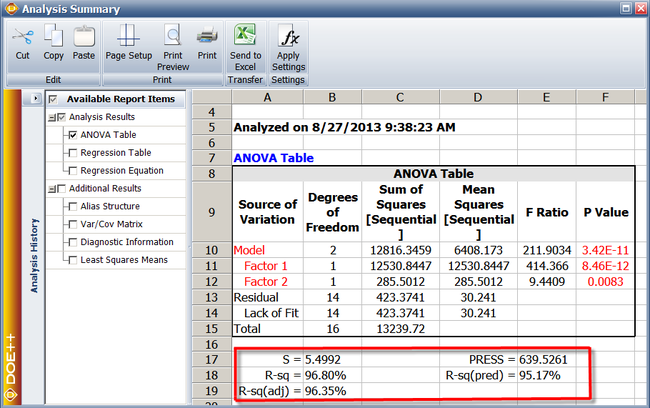

Assuming that the desired significance is 0.1, since <math>p\,\!</math> value < 0.1, <math>{{H}_{0}}:{{\beta }_{1}}=0\,\!</math> is rejected and it can be concluded that <math>{{\beta }_{1}}\,\!</math> is significant. The test for <math>{{\beta }_{2}}\,\!</math> can be carried out in a similar manner. In the results obtained from DOE | Assuming that the desired significance is 0.1, since <math>p\,\!</math> value < 0.1, <math>{{H}_{0}}:{{\beta }_{1}}=0\,\!</math> is rejected and it can be concluded that <math>{{\beta }_{1}}\,\!</math> is significant. The test for <math>{{\beta }_{2}}\,\!</math> can be carried out in a similar manner. In the results obtained from the DOE folio, the calculations for this test are displayed in the ANOVA table as shown in the following figure. Note that the conclusion obtained in this example can also be obtained using the <math>t\,\!</math> test as explained in the [[Multiple_Linear_Regression_Analysis#Example_3|example]] in [[Multiple_Linear_Regression_Analysis#Test_on_Individual_Regression_Coefficients_.28t__Test.29|Test on Individual Regression Coefficients (t Test)]]. The ANOVA and Regression Information tables in the DOE folio represent two different ways to test for the significance of the variables included in the multiple linear regression model. | ||
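The agreement between the two tests noted above can be checked numerically: for a single coefficient, the square of the <math>t\,\!</math> statistic equals the partial <math>F\,\!</math> statistic. The following sketch uses synthetic data generated only for illustration (not the data from the table), and the helper name is hypothetical.

```python
import numpy as np

# Synthetic data with two predictors (an assumption for illustration).
rng = np.random.default_rng(42)
n = 25
x1 = rng.uniform(0.0, 10.0, n)
x2 = rng.uniform(0.0, 10.0, n)
y = 5.0 + 1.5 * x1 + 4.0 * x2 + rng.normal(0.0, 2.0, n)

X_full = np.column_stack([np.ones(n), x1, x2])   # intercept, x1, x2
X_reduced = np.column_stack([np.ones(n), x2])    # model excluding x1


def fit(X, y):
    """Return the least squares estimates and the error sum of squares."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return beta, float(resid @ resid)


beta_full, sse_full = fit(X_full, y)
_, sse_reduced = fit(X_reduced, y)
mse = sse_full / (n - X_full.shape[1])

# Partial sum of squares for beta_1. Both models share the same total sum of
# squares, so the increase in SS_R equals the decrease in SS_E.
ss_partial = sse_reduced - sse_full
f0 = (ss_partial / 1) / mse

# t statistic for beta_1 from the variance-covariance matrix.
C = mse * np.linalg.inv(X_full.T @ X_full)
t0 = beta_full[1] / np.sqrt(C[1, 1])

print(t0 ** 2, f0)
```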

====Sequential Sum of Squares==== | ====Sequential Sum of Squares==== | ||

| Line 749: | Line 759: | ||

::<math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+{{\beta }_{12}}{{x}_{1}}{{x}_{2}}+{{\beta }_{3}}{{x}_{3}}+{{\beta }_{13}}{{x}_{1}}{{x}_{3}}+\epsilon\,\!</math> | ::<math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+{{\beta }_{12}}{{x}_{1}}{{x}_{2}}+{{\beta }_{3}}{{x}_{3}}+{{\beta }_{13}}{{x}_{1}}{{x}_{3}}+\epsilon\,\!</math> | ||

This is because to maintain the sequence all coefficients preceding <math>{{\beta }_{13}}\,\!</math> must be included in the model. These are the coefficients <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math>, <math>{{\beta }_{2}}\,\!</math>, <math>{{\beta }_{12}}\,\!</math> and <math>{{\beta }_{3}}\,\!</math>. | This is because to maintain the sequence all coefficients preceding <math>{{\beta }_{13}}\,\!</math> must be included in the model. These are the coefficients <math>{{\beta }_{0}}\,\!</math>, <math>{{\beta }_{1}}\,\!</math>, <math>{{\beta }_{2}}\,\!</math>, <math>{{\beta }_{12}}\,\!</math> and <math>{{\beta }_{3}}\,\!</math>. | ||

Similarly the model before <math>{{\beta }_{13}}\,\!</math> is added must contain all coefficients of the equation given above except <math>{{\beta }_{13}}\,\!</math>. This model can be obtained as follows: | Similarly the model before <math>{{\beta }_{13}}\,\!</math> is added must contain all coefficients of the equation given above except <math>{{\beta }_{13}}\,\!</math>. This model can be obtained as follows: | ||

::<math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+{{\beta }_{12}}{{x}_{1}}{{x}_{2}}+{{\beta }_{3}}{{x}_{3}}+\epsilon\,\!</math> | ::<math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+{{\beta }_{12}}{{x}_{1}}{{x}_{2}}+{{\beta }_{3}}{{x}_{3}}+\epsilon\,\!</math> | ||

| Line 764: | Line 772: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

S{{S}_{R}}({{\beta }_{13}}|{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}},{{\beta }_{3}}) | S{{S}_{R}}({{\beta }_{13}}|{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}},{{\beta }_{3}}) & = & S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}},{{\beta }_{3}},{{\beta }_{13}})- S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}},{{\beta }_{3}}) | ||

& = & S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}},{{\beta }_{3}},{{\beta }_{13}})- | |||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||

For the present case, <math>{{\ | For the present case, <math>{{\theta}_{2}}=[{{\beta }_{13}}{]}'\,\!</math> and <math>{{\theta}_{1}}=[{{\beta }_{0}},{{\beta }_{1}},{{\beta }_{2}},{{\beta }_{12}},{{\beta }_{3}}{]}'\,\!</math>. It can be noted that for the sequential sum of squares <math>{{\theta}_{1}}\,\!</math> contains all coefficients preceding the coefficient being tested. | ||

The sequential sums of squares for all terms add up to the regression sum of squares for the full model, but each sequential sum of squares depends on the order in which the terms are added. | The sequential sums of squares for all terms add up to the regression sum of squares for the full model, but each sequential sum of squares depends on the order in which the terms are added. | ||
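The two properties stated above (the sequential sums of squares add up to the full-model regression sum of squares, and they change with the order of entry) can be checked numerically. The following is a minimal sketch on hypothetical, randomly generated data, not the data of this chapter; the function names `regression_ss` and `sequential_ss` are illustrative and are not part of any DOE folio.

```python
import numpy as np

def regression_ss(X, y):
    """Regression sum of squares about the mean; X must contain the intercept column."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    fitted = X @ beta
    return float(np.sum((fitted - y.mean()) ** 2))

def sequential_ss(columns, y):
    """Sequential sum of squares of each column, entered in the given order."""
    X = np.ones((len(y), 1))   # start from the intercept-only model
    previous = 0.0             # its regression sum of squares is zero
    result = []
    for col in columns:
        X = np.column_stack([X, col])
        current = regression_ss(X, y)
        result.append(current - previous)   # increase caused by the new term
        previous = current
    return result

# Hypothetical data with two correlated predictors (assumption for illustration)
rng = np.random.default_rng(0)
x1 = rng.normal(size=20)
x2 = 0.8 * x1 + rng.normal(scale=0.5, size=20)
y = 3.0 + 2.0 * x1 - 1.0 * x2 + rng.normal(scale=0.3, size=20)

order_a = sequential_ss([x1, x2], y)   # x1 entered first
order_b = sequential_ss([x2, x1], y)   # x2 entered first
full = regression_ss(np.column_stack([np.ones(20), x1, x2]), y)
```

Both `sum(order_a)` and `sum(order_b)` equal `full`, while the value attributed to `x1` differs between the two orders because the predictors are correlated.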

| Line 790: | Line 796: | ||

::<math>{{F}_{0}}=\frac{S{{S}_{R}}({{\beta }_{ | ::<math>{{F}_{0}}=\frac{S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}})/r}{M{{S}_{E}}}\,\!</math> | ||

where <math>S{{S}_{R}}({{\beta }_{ | where <math>S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}})\,\!</math> represents the sequential sum of squares for <math>{{\beta }_{1}}\,\!</math>, <math>r\,\!</math> represents the number of degrees of freedom for <math>S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}})\,\!</math> (which is one because there is just one coefficient, <math>{{\beta }_{1}}\,\!</math>, being tested) and <math>M{{S}_{E}}\,\!</math> is the error mean square and has been calculated in the second [[Multiple_Linear_Regression_Analysis#Example_2|example]] as 30.24. | ||

The sequential sum of squares for <math>{{\beta }_{1}}\,\!</math> is the difference between the regression sum of squares for the model after adding <math>{{\beta }_{1}}\,\!</math>, <math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+\epsilon\,\!</math>, and the regression sum of squares for the model before adding <math>{{\beta }_{1}}\,\!</math>, <math>Y={{\beta }_{0}}+\epsilon\,\!</math>. | The sequential sum of squares for <math>{{\beta }_{1}}\,\!</math> is the difference between the regression sum of squares for the model after adding <math>{{\beta }_{1}}\,\!</math>, <math>Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+\epsilon\,\!</math>, and the regression sum of squares for the model before adding <math>{{\beta }_{1}}\,\!</math>, <math>Y={{\beta }_{0}}+\epsilon\,\!</math>. | ||

| Line 817: | Line 823: | ||

[[Image: | [[Image:doe5_16.png|center|650px|Sequential sum of squares for the data.]] | ||

| Line 830: | Line 836: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

S{{S}_{R}}({{\beta }_{ | S{{S}_{R}}({{\beta }_{1}}|{{\beta }_{0}}) &= & S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}})-S{{S}_{R}}({{\beta }_{0}}) \\ | ||

& = & 12530.85-0 \\ | & = & 12530.85-0 \\ | ||

& = & 12530.85 | & = & 12530.85 | ||

| Line 840: | Line 846: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{f}_{0}} &= & \frac{S{{S}_{R}}({{\beta }_{ | {{f}_{0}} &= & \frac{S{{S}_{R}}({{\beta }_{0}},{{\beta }_{1}})/r}{M{{S}_{E}}} \\ | ||

& = & \frac{12530.85/1}{30.24} \\ | & = & \frac{12530.85/1}{30.24} \\ | ||

& = & 414.366 | & = & 414.366 | ||

| Line 861: | Line 867: | ||

Calculation of confidence intervals for multiple linear regression models is similar to that for simple linear regression models explained in [[Simple_Linear_Regression_Analysis| Simple Linear Regression Analysis]]. | Calculation of confidence intervals for multiple linear regression models is similar to that for simple linear regression models explained in [[Simple_Linear_Regression_Analysis| Simple Linear Regression Analysis]]. | ||

===Confidence Interval on Regression Coefficients=== | ===Confidence Interval on Regression Coefficients=== | ||

A 100 (<math>1-\alpha\,\!</math>) percent confidence interval on the regression coefficient, <math>{{\beta }_{j}}\,\!</math>, is obtained as follows: | A 100 (<math>1-\alpha\,\!</math>) percent confidence interval on the regression coefficient, <math>{{\beta }_{j}}\,\!</math>, is obtained as follows: | ||

::<math>{{\hat{\beta }}_{j}}\pm {{t}_{\alpha /2,n-(k+1)}}\sqrt{{{C}_{jj}}}\,\!</math> | ::<math>{{\hat{\beta }}_{j}}\pm {{t}_{\alpha /2,n-(k+1)}}\sqrt{{{C}_{jj}}}\,\!</math> | ||

The confidence intervals on the regression coefficients are displayed in the Regression Information table under the Low | The confidence intervals on the regression coefficients are displayed in the Regression Information table under the Low Confidence and High Confidence columns as shown in the following figure. | ||

[[Image:doe5_17.png|center|710px|Confidence interval for the fitted value corresponding to the fifth observation.|link=]] | |||
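The interval on each regression coefficient can be sketched in a few lines. This example assumes that C is the estimated variance-covariance matrix of the coefficients, that is, the error mean square multiplied by the inverse of X'X, so that the square root of its jth diagonal element is the standard error of the jth coefficient. The data are hypothetical; only the critical value 1.761 (the t value at 0.05 with 14 degrees of freedom, for a 90% interval) is taken from this chapter.

```python
import numpy as np

def coefficient_intervals(X, y, t_crit):
    """Return (estimates, lower bounds, upper bounds) for all coefficients."""
    n, p = X.shape                          # p = k + 1 coefficients
    xtx_inv = np.linalg.inv(X.T @ X)
    beta_hat = xtx_inv @ X.T @ y            # least squares estimates
    residuals = y - X @ beta_hat
    mse = float(residuals @ residuals) / (n - p)
    C = mse * xtx_inv                       # assumed definition of C
    half_width = t_crit * np.sqrt(np.diag(C))
    return beta_hat, beta_hat - half_width, beta_hat + half_width

# Hypothetical data: n = 17 observations, k = 2 predictors, so n - (k + 1) = 14
rng = np.random.default_rng(1)
n = 17
x1 = rng.uniform(40, 50, size=n)
x2 = rng.uniform(25, 35, size=n)
X = np.column_stack([np.ones(n), x1, x2])
y = 10.0 + 4.0 * x1 + 2.0 * x2 + rng.normal(scale=5.0, size=n)

beta_hat, low, high = coefficient_intervals(X, y, t_crit=1.761)
```

The critical value is passed in rather than computed so that the sketch needs nothing beyond NumPy; in practice it would be looked up for the chosen confidence level and error degrees of freedom.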

Confidence Interval on Fitted Values, <math>{{\hat{y}}_{i}}\,\!</math> | Confidence Interval on Fitted Values, <math>{{\hat{y}}_{i}}\,\!</math> | ||

A 100 (<math>1-\alpha\,\!</math>) percent confidence interval on any fitted value, <math>{{\hat{y}}_{i}}\,\!</math>, is given by: | A 100 (<math>1-\alpha\,\!</math>) percent confidence interval on any fitted value, <math>{{\hat{y}}_{i}}\,\!</math>, is given by: | ||

| Line 878: | Line 890: | ||

where: | |||

| Line 891: | Line 903: | ||

In the above [[Multiple_Linear_Regression_Analysis#Example| example]], the fitted value corresponding to the fifth observation was calculated as <math>{{\hat{y}}_{5}}=266.3\,\!</math>. The 90% confidence interval on this value can be obtained as shown in the figure below. The values of 47.3 and 29.9 used in the figure are the values of the predictor variables corresponding to the fifth observation in the [[Multiple_Linear_Regression_Analysis#Example|table]]. | In the above [[Multiple_Linear_Regression_Analysis#Example| example]], the fitted value corresponding to the fifth observation was calculated as <math>{{\hat{y}}_{5}}=266.3\,\!</math>. The 90% confidence interval on this value can be obtained as shown in the figure below. The values of 47.3 and 29.9 used in the figure are the values of the predictor variables corresponding to the fifth observation in the [[Multiple_Linear_Regression_Analysis#Example|table]]. | ||

===Confidence Interval on New Observations=== | ===Confidence Interval on New Observations=== | ||

| Line 919: | Line 928: | ||

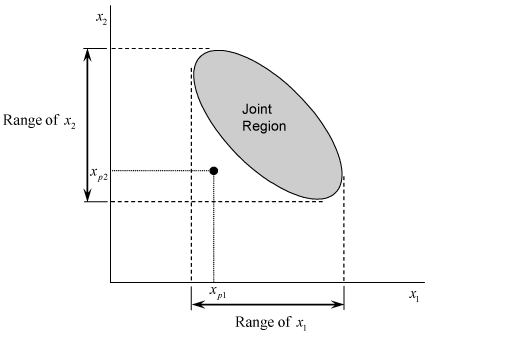

In multiple linear regression, prediction intervals should only be obtained at the levels of the predictor variables where the regression model applies, and this restriction is easy to overlook. Having values lying within the range of each predictor variable does not necessarily mean that the new observation lies in the region to which the model is applicable. For example, consider the next figure where the shaded area shows the region to which a two variable regression model is applicable. The point corresponding to the <math>p\,\!</math> th level of the first predictor variable, <math>{{x}_{1}}\,\!</math>, and the <math>p\,\!</math> th level of the second predictor variable, <math>{{x}_{2}}\,\!</math>, does not lie in the shaded area, although both of these levels are within the range of the first and second predictor variables respectively. In this case, the regression model is not applicable at this point. | |||

[[Image:doe5.18.png|center|519px|Predicted values and region of model application in multiple linear regression.|link=]] | |||

==Measures of Model Adequacy== | ==Measures of Model Adequacy== | ||

| Line 951: | Line 960: | ||

The adjusted <math>{{R}^{2}}\,\!</math> only increases when significant terms are added to the model. Addition of unimportant terms may lead to a decrease in the value of <math>R_{adj}^{2}\,\!</math>. | The adjusted <math>{{R}^{2}}\,\!</math> only increases when significant terms are added to the model. Addition of unimportant terms may lead to a decrease in the value of <math>R_{adj}^{2}\,\!</math>. | ||

In DOE | In a DOE folio, <math>{{R}^{2}}\,\!</math> and <math>R_{adj}^{2}\,\!</math> values are displayed as R-sq and R-sq(adj), respectively. Other values displayed along with these values are S, PRESS and R-sq(pred). As explained in [[Simple_Linear_Regression_Analysis| Simple Linear Regression Analysis]], the value of S is the square root of the error mean square, <math>M{{S}_{E}}\,\!</math>, and represents the "standard error of the model." | ||

PRESS is an abbreviation for prediction error sum of squares. It is the error sum of squares calculated using the PRESS residuals in place of the residuals, <math>{{e}_{i}}\,\!</math>, in the equation for the error sum of squares. The PRESS residual, <math>{{e}_{(i)}}\,\!</math>, for a particular observation, <math>{{y}_{i}}\,\!</math>, is obtained by fitting the regression model to the remaining observations. Then the value for a new observation, <math>{{\hat{y}}_{p}}\,\!</math>, corresponding to the observation in question, <math>{{y}_{i}}\,\!</math>, is obtained based on the new regression model. The difference between <math>{{y}_{i}}\,\!</math> and <math>{{\hat{y}}_{p}}\,\!</math> gives <math>{{e}_{(i)}}\,\!</math>. The PRESS residual, <math>{{e}_{(i)}}\,\!</math>, can also be obtained using <math>{{h}_{ii}}\,\!</math>, the diagonal element of the hat matrix, <math>H\,\!</math>, as follows: | PRESS is an abbreviation for prediction error sum of squares. It is the error sum of squares calculated using the PRESS residuals in place of the residuals, <math>{{e}_{i}}\,\!</math>, in the equation for the error sum of squares. The PRESS residual, <math>{{e}_{(i)}}\,\!</math>, for a particular observation, <math>{{y}_{i}}\,\!</math>, is obtained by fitting the regression model to the remaining observations. Then the value for a new observation, <math>{{\hat{y}}_{p}}\,\!</math>, corresponding to the observation in question, <math>{{y}_{i}}\,\!</math>, is obtained based on the new regression model. The difference between <math>{{y}_{i}}\,\!</math> and <math>{{\hat{y}}_{p}}\,\!</math> gives <math>{{e}_{(i)}}\,\!</math>. The PRESS residual, <math>{{e}_{(i)}}\,\!</math>, can also be obtained using <math>{{h}_{ii}}\,\!</math>, the diagonal element of the hat matrix, <math>H\,\!</math>, as follows: | ||

| Line 967: | Line 976: | ||

The values of R-sq, R-sq(adj) and S are indicators of how well the regression model fits the observed data. The values of PRESS and R-sq(pred) are indicators of how well the regression model predicts new observations. For example, higher values of PRESS or lower values of R-sq(pred) indicate a model that predicts poorly. The figure below shows these values for the data. The values indicate that the regression model fits the data well and also predicts well. | The values of R-sq, R-sq(adj) and S are indicators of how well the regression model fits the observed data. The values of PRESS and R-sq(pred) are indicators of how well the regression model predicts new observations. For example, higher values of PRESS or lower values of R-sq(pred) indicate a model that predicts poorly. The figure below shows these values for the data. The values indicate that the regression model fits the data well and also predicts well. | ||
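The PRESS calculation described above can be sketched in two equivalent ways: by refitting the model with each observation left out, and by the shortcut that divides each ordinary residual by one minus the corresponding diagonal element of the hat matrix. The sketch assumes the usual definition of R-sq(pred) as one minus PRESS divided by the total sum of squares, which is not spelled out in this section, and it uses hypothetical data.

```python
import numpy as np

def press_statistics(X, y):
    """PRESS and R-sq(pred) from the hat-matrix shortcut e_(i) = e_i / (1 - h_ii)."""
    H = X @ np.linalg.inv(X.T @ X) @ X.T
    e = y - H @ y                                # ordinary residuals
    press_residuals = e / (1.0 - np.diag(H))
    press = float(np.sum(press_residuals ** 2))
    ss_total = float(np.sum((y - y.mean()) ** 2))
    return press, 1.0 - press / ss_total         # assumed definition of R-sq(pred)

def press_by_refitting(X, y):
    """PRESS obtained by refitting the model n times, leaving one observation out each time."""
    total = 0.0
    for i in range(len(y)):
        keep = np.arange(len(y)) != i
        beta, *_ = np.linalg.lstsq(X[keep], y[keep], rcond=None)
        total += float(y[i] - X[i] @ beta) ** 2  # squared PRESS residual
    return total

# Hypothetical data (assumption for illustration)
rng = np.random.default_rng(2)
n = 17
X = np.column_stack([np.ones(n), rng.uniform(0, 10, n), rng.uniform(0, 10, n)])
y = X @ np.array([5.0, 2.0, 3.0]) + rng.normal(scale=1.0, size=n)

press, r_sq_pred = press_statistics(X, y)
press_refit = press_by_refitting(X, y)
```

The two PRESS values agree to rounding error, which is why the shortcut is normally preferred: it needs only one fit.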

[[Image:doe5_19.png|center|650px|Coefficient of multiple determination and related results for the data.]] | |||

===Residual Analysis=== | ===Residual Analysis=== | ||

| Line 978: | Line 989: | ||

Standardized residuals are scaled so that the standard deviation of the residuals is approximately equal to one. This helps to identify possible outliers or unusual observations. However, standardized residuals may understate the true residual magnitude, hence studentized residuals, <math>{{r}_{i}}\,\!</math>, are used in their place. Studentized residuals are calculated as follows: | |||

::<math> | |||

\begin{align} | |||

{{r}_{i}} & = & \frac{{{e}_{i}}}{\sqrt{{{{\hat{\sigma }}}^{2}}(1-{{h}_{ii}})}} \\ | |||

& = & \frac{{{e}_{i}}}{\sqrt{M{{S}_{E}}(1-{{h}_{ii}})}} | |||

\end{align} | |||

\,\! | |||

</math> | |||

| Line 996: | Line 1,012: | ||

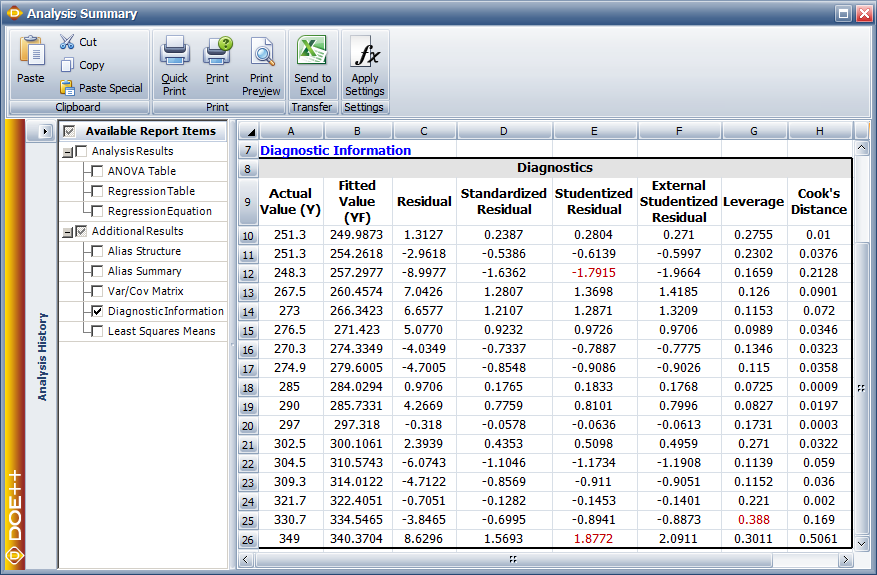

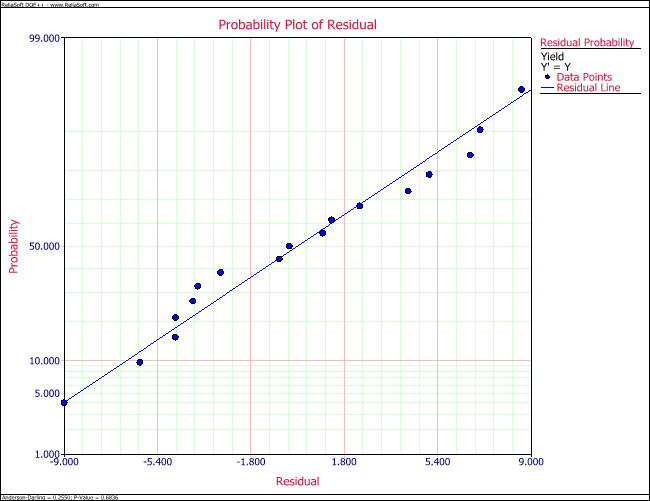

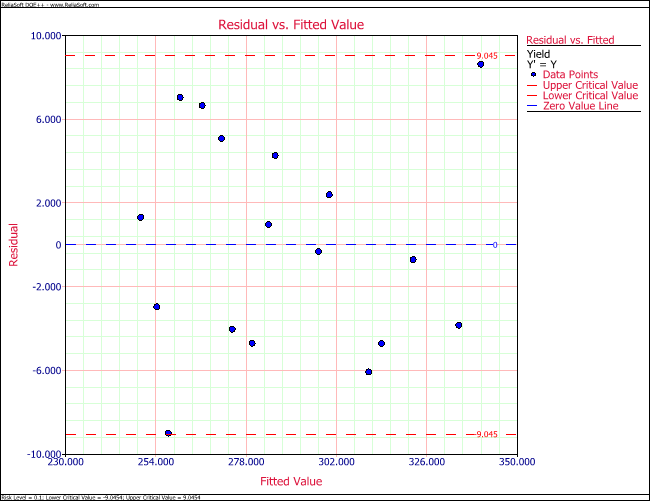

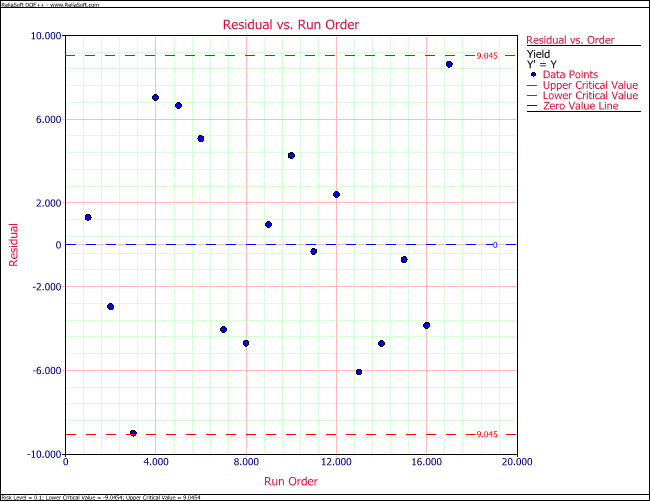

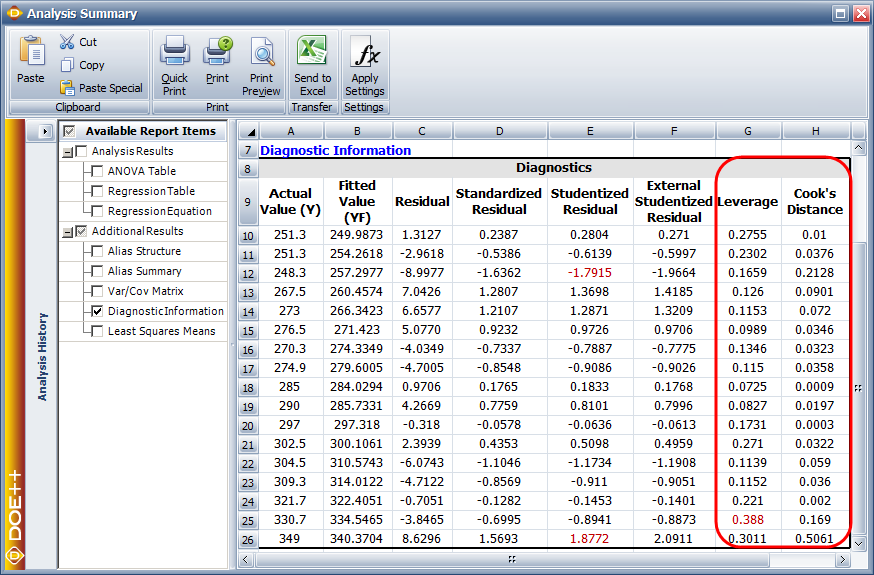

Residual values for the data are shown in the figure below. Standardized residual plots for the data are shown in | Residual values for the data are shown in the figure below. Standardized residual plots for the data are shown in the next two figures. The Weibull++ DOE folio compares the residual values to the critical values on the <math>t\,\!</math> distribution for studentized and external studentized residuals. | ||

[[Image:doe5_20.png|center|877px|Residual values for the data.|link=]] | |||

[[Image:doe5_21.png|center|650px|Residual probability plot for the data.|link=]] | |||

For other residuals the normal distribution is used. For example, for the data, the critical values on the <math>t\,\!</math> distribution at a significance of 0.1 are <math>{{t}_{0.05,14}}=1.761\,\!</math> and <math>-{{t}_{0.05,14}}=-1.761\,\!</math> (as calculated in the [[Multiple_Linear_Regression_Analysis#Example_3|example]], [[Multiple_Linear_Regression_Analysis#Test_on_Individual_Regression_Coefficients_.28t__Test.29|Test on Individual Regression Coefficients (''t'' Test)]]). The studentized residual values corresponding to the 3rd and 17th observations lie outside the critical values. Therefore, the 3rd and 17th observations are outliers. This can also be seen on the residual plots in the next two figures. | |||

[[Image:doe5_22.png|center|650px|Residual versus fitted values plot for the data.|link=]] | |||

[[Image:doe5_23.png|center|650px|Residual versus run order plot for the data.|link=]] | |||
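The outlier screening described above (compute the studentized residuals, then compare them with the critical values of the t distribution) can be sketched as follows. The data are hypothetical, with the third observation deliberately shifted so that it stands out; the function names are illustrative, and the critical value 1.761 is the one quoted in this chapter for 14 error degrees of freedom.

```python
import numpy as np

def studentized_residuals(X, y):
    """Return r_i = e_i / sqrt(MS_E * (1 - h_ii)) for every observation."""
    n, p = X.shape                            # p = k + 1 coefficients
    H = X @ np.linalg.inv(X.T @ X) @ X.T      # hat matrix
    h = np.diag(H)                            # leverage values h_ii
    e = y - H @ y                             # ordinary residuals
    mse = float(e @ e) / (n - p)              # error mean square
    return e / np.sqrt(mse * (1.0 - h))

def flag_outliers(r, t_crit):
    """1-based observation numbers whose residual lies outside +/- t_crit."""
    return [i + 1 for i, value in enumerate(r) if abs(value) > t_crit]

# Hypothetical data: 17 runs, 2 predictors (assumption for illustration)
rng = np.random.default_rng(3)
n = 17
x1 = rng.uniform(0, 10, n)
x2 = rng.uniform(0, 10, n)
X = np.column_stack([np.ones(n), x1, x2])
y = 5 + 2 * x1 + 3 * x2 + rng.normal(scale=1.0, size=n)
y[2] += 15.0                                  # make the 3rd observation unusual

r = studentized_residuals(X, y)
outliers = flag_outliers(r, t_crit=1.761)
```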

===Outlying ''x'' Observations=== | ===Outlying ''x'' Observations=== | ||

| Line 1,013: | Line 1,043: | ||

====Example==== | ====Example==== | ||

Cook's distance measure can be calculated as shown next. The distance measure is calculated for the first observation of the data. The remaining values along with the leverage values are shown in the figure below (displaying Leverage and Cook's distance measure for the data). | Cook's distance measure can be calculated as shown next. The distance measure is calculated for the first observation of the data. The remaining values along with the leverage values are shown in the figure below (displaying Leverage and Cook's distance measure for the data). | ||

[[Image: | [[Image:doe5_24.png|center|874px|Leverage and Cook's distance measure for the data.|link=]] | ||

The studentized residual corresponding to the first observation is: | |||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{r}_{1}} & = & \frac{{{e}_{1}}}{\sqrt{M{{S}_{E}}(1-{{h}_{11}})}} \\ | |||

& = & \frac{1.3127}{\sqrt{30.3(1-0.2755)}} \\ | & = & \frac{1.3127}{\sqrt{30.3(1-0.2755)}} \\ | ||

& = & 0.2804 | & = & 0.2804 | ||

| Line 1,039: | Line 1,062: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{D}_{1}} & = & \frac{r_{1}^{2}}{(k+1)}\left[ \frac{{{h}_{11}}}{(1-{{h}_{11}})} \right] \\ | |||

& = & \frac{{{0.2804}^{2}}}{(2+1)}\left[ \frac{0.2755}{(1-0.2755)} \right] \\ | & = & \frac{{{0.2804}^{2}}}{(2+1)}\left[ \frac{0.2755}{(1-0.2755)} \right] \\ | ||

& = & 0.01 | & = & 0.01 | ||

| Line 1,045: | Line 1,068: | ||

The 50th percentile value for <math>{{F}_{3,14}}\,\!</math> is 0.83. Since all <math>{{D}_{i}}\,\!</math> values are less than this value there are no influential observations. | The 50th percentile value for <math>{{F}_{3,14}}\,\!</math> is 0.83. Since all <math>{{D}_{i}}\,\!</math> values are less than this value there are no influential observations. | ||
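The arithmetic of this example can be reproduced directly. The sketch below uses only the numbers quoted above (residual 1.3127, error mean square 30.3, leverage 0.2755, two predictors, and the 50th percentile value 0.83); the function names are illustrative.

```python
from math import sqrt

def studentized_residual(e_i, mse, h_ii):
    """r_i = e_i / sqrt(MS_E * (1 - h_ii))."""
    return e_i / sqrt(mse * (1.0 - h_ii))

def cooks_distance(r_i, h_ii, k):
    """D_i = r_i^2 / (k + 1) * h_ii / (1 - h_ii), with k predictor variables."""
    return (r_i ** 2 / (k + 1)) * (h_ii / (1.0 - h_ii))

r1 = studentized_residual(e_i=1.3127, mse=30.3, h_ii=0.2755)
d1 = cooks_distance(r1, h_ii=0.2755, k=2)

# Compare with the 50th percentile of the F distribution quoted in the text
influential = d1 > 0.83
```

With these inputs `r1` is about 0.28 and `d1` is about 0.01, so the first observation is not flagged as influential.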

===Lack-of-Fit Test=== | ===Lack-of-Fit Test=== | ||

| Line 1,073: | Line 1,093: | ||

::<math>dof(S | ::<math> dof(S{S}_{PE}) = nm-n \,\! </math> | ||

| Line 1,085: | Line 1,105: | ||

<math>\begin{align} | ::<math> | ||

\begin{align} | |||

\end{align}\,\!</math> | |||

dof(S{{S}_{LOF}}) & = & dof(S{{S}_{E}})-dof(S{{S}_{PE}}) \\ | |||

& = & n-(k+1)-(nm-n) | |||

\end{align} | |||

\,\! | |||

</math> | |||

| Line 1,095: | Line 1,123: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{F}_{0}} & = & \frac{S{{S}_{LOF}}/dof(S{{S}_{LOF}})}{S{{S}_{PE}}/dof(S{{S}_{PE}})} \\ | |||

& = & \frac{M{{S}_{LOF}}}{M{{S}_{PE}}} | |||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||

| Line 1,131: | Line 1,159: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{x}_{1}} & = & 1,\text{ }{{x}_{2}} & = & 0\text{ Machine Type I} \\ | |||

{{x}_{1}} & = & 0,\text{ }{{x}_{2}} & = & 1\text{ Machine Type II} \\ | |||

{{x}_{1}} & = & 0,\text{ }{{x}_{2}} & = & 0\text{ Machine Type III} | |||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||

| Line 1,141: | Line 1,169: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

{{x}_{1}} & = & 1,\text{ }{{x}_{2}}& = &0\text{ Machine Type I} \\ | |||

{{x}_{1}}& = & 0,\text{ }{{x}_{2}}& = &1\text{ Machine Type II} \\ | |||

{{x}_{1}}& = & -1,\text{ }{{x}_{2}}& = &-1\text{ Machine Type III} | |||

\end{align}\,\!</math> | \end{align}\,\!</math> | ||
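The two indicator-variable codings shown above for the three machine types can be written as lookup tables. This is a small sketch; the names `REFERENCE_CODING` and `EFFECT_CODING` are labels chosen here for illustration (0/1 coding with Type III as the baseline, and -1/0/1 coding, respectively), and the list of runs is hypothetical.

```python
# (x1, x2) values for each machine type under the two coding schemes
REFERENCE_CODING = {"I": (1, 0), "II": (0, 1), "III": (0, 0)}
EFFECT_CODING = {"I": (1, 0), "II": (0, 1), "III": (-1, -1)}

def encode(machine_types, coding):
    """Translate a list of machine types into rows of indicator variables."""
    return [coding[m] for m in machine_types]

runs = ["I", "III", "II", "I"]          # hypothetical sequence of runs
dummy_rows = encode(runs, REFERENCE_CODING)
effect_rows = encode(runs, EFFECT_CODING)
```

In both schemes a factor with three levels needs only two indicator variables; the schemes differ only in how the third level is represented.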

| Line 1,161: | Line 1,189: | ||

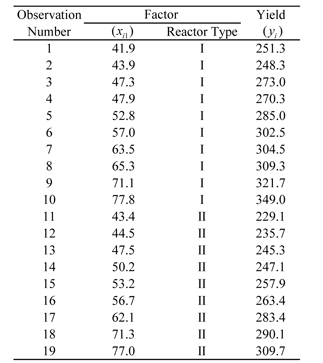

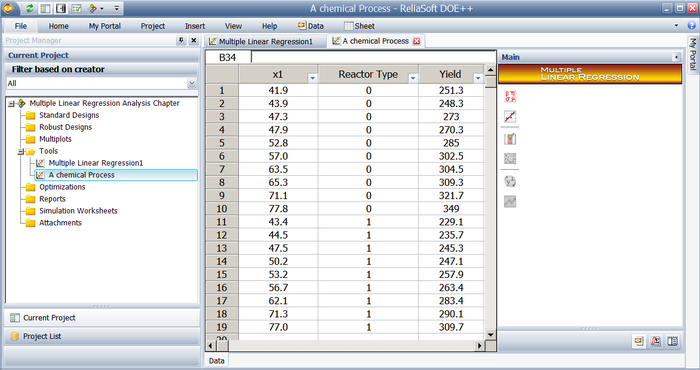

[[Image:doet5.3.png | [[Image:doet5.3.png|center|323px|Yield data from the two types of reactors for a chemical process.|link=]] | ||

Data entry in DOE | Data entry in the DOE folio for this example is shown in the figure after the table below. The regression model for this data is: | ||

| Line 1,174: | Line 1,202: | ||

[[Image: | [[Image:doe5_25.png|center|700px|Data from the table above as entered in Weibull++.]] | ||

| Line 1,181: | Line 1,209: | ||

::<math>\begin{align} | ::<math>\begin{align} | ||

\hat{\beta }& = & {{({{X}^{\prime }}X)}^{-1}}{{X}^{\prime }}y \\ | |||

& = & \left[ \begin{matrix} | & = & \left[ \begin{matrix} | ||

153.7 \\ | 153.7 \\ | ||

| Line 1,196: | Line 1,224: | ||

Note that since <math>{{x}_{2}}\,\!</math> represents a qualitative predictor variable, the fitted regression model cannot be plotted simultaneously against <math>{{x}_{1}}\,\!</math> and <math>{{x}_{2}}\,\!</math> in a two dimensional space (because the resulting surface plot will be meaningless for the dimension in <math>{{x}_{2}}\,\!</math> ). To illustrate this, a scatter plot of the data against <math>{{x}_{2}}\,\!</math> is shown in the following figure | Note that since <math>{{x}_{2}}\,\!</math> represents a qualitative predictor variable, the fitted regression model cannot be plotted simultaneously against <math>{{x}_{1}}\,\!</math> and <math>{{x}_{2}}\,\!</math> in a two-dimensional space (because the resulting surface plot will be meaningless for the dimension in <math>{{x}_{2}}\,\!</math> ). To illustrate this, a scatter plot of the data against <math>{{x}_{2}}\,\!</math> is shown in the following figure. | ||

[[Image:doe5_26.png|center|700px|Scatter plot of the observed yield values against <math>x_2\,\!</math> (reactor type)]] | |||

It can be noted that, in the case of qualitative factors, the nature of the relationship between the response (yield) and the qualitative factor (reactor type) cannot be categorized as linear, or quadratic, or cubic, etc. The only conclusion that can be arrived at for these factors is to see if these factors contribute significantly to the regression model. This can be done by employing the partial <math>F\,\!</math> test discussed in [[Multiple_Linear_Regression_Analysis#Test_on_Subsets_of_Regression_Coefficients_.28Partial_F_Test.29|Multiple Linear Regression Analysis]] (using the extra sum of squares of the indicator variables representing these factors). The results of the test for the present example are shown in the ANOVA table. The results show that <math>{{x}_{2}}\,\!</math> (reactor type) contributes significantly to the fitted regression model. | |||

[[Image: | |||

[[Image:doe5_27.png|center|700px|DOE results for the data.]] | |||

===Multicollinearity=== | |||

At times the predictor variables included in a multiple linear regression model may be found to be dependent on each other. Multicollinearity is said to exist in a multiple regression model with strong dependencies between the predictor variables. | |||

Multicollinearity affects the regression coefficients and the extra sum of squares of the predictor variables. In a model with multicollinearity the estimate of the regression coefficient of a predictor variable depends on what other predictor variables are included in the model. The dependence may even lead to a change in the sign of the regression coefficient. In such models, an estimated regression coefficient may not be found to be significant individually (when using the <math>t\,\!</math> test on the individual coefficient or looking at the <math>p\,\!</math> value) even though a statistical relation is found to exist between the response variable and the set of the predictor variables (when using the <math>F\,\!</math> test for the set of predictor variables). Therefore, you should be careful while looking at individual predictor variables in models that have multicollinearity. Care should also be taken while looking at the extra sum of squares for a predictor variable that is correlated with other variables. This is because in models with multicollinearity the extra sum of squares is not unique and depends on the other predictor variables included in the model. | |||

| Line 1,219: | Line 1,250: | ||

====Example==== | ====Example==== | ||

Variance inflation factors can be obtained for the data. To calculate the variance inflation factor for <math>{{x}_{1}}\,\!</math>, <math>R_{1}^{2}\,\!</math> has to be calculated.<math>R_{1}^{2}\,\!</math> is the coefficient of determination for the model when <math>{{x}_{1}}\,\!</math> is regressed on the remaining variables. In the case of this example there is just one remaining variable which is <math>{{x}_{2}}\,\!</math>. If a regression model is fit to the data, taking <math>{{x}_{1}}\,\!</math> as the response variable and <math>{{x}_{2}}\,\!</math> as the predictor variable, then the design matrix and the vector of observations are: | Variance inflation factors can be obtained for the data below. | ||

[[Image:doet5.1.png|center|351px|Observed yield data for various levels of two factors.|link=]] | |||

To calculate the variance inflation factor for <math>{{x}_{1}}\,\!</math>, <math>R_{1}^{2}\,\!</math> has to be calculated. <math>R_{1}^{2}\,\!</math> is the coefficient of determination for the model when <math>{{x}_{1}}\,\!</math> is regressed on the remaining variables. In the case of this example there is just one remaining variable which is <math>{{x}_{2}}\,\!</math>. If a regression model is fit to the data, taking <math>{{x}_{1}}\,\!</math> as the response variable and <math>{{x}_{2}}\,\!</math> as the predictor variable, then the design matrix and the vector of observations are: | |||

| Line 1,277: | Line 1,312: | ||

The variance inflation factor for <math>{{x}_{2}}\,\!</math>, <math>VI{{F}_{2}}\,\!</math>, can be obtained in a similar manner. In DOE | The variance inflation factor for <math>{{x}_{2}}\,\!</math>, <math>VI{{F}_{2}}\,\!</math>, can be obtained in a similar manner. In the DOE folios, the variance inflation factors are displayed in the VIF column of the Regression Information table as shown in the following figure. Since the values of the variance inflation factors obtained are considerably greater than 1, multicollinearity is an issue for the data. | ||

[[Image: | [[Image:doe5_28.png|center|888px|Variance inflation factors for the data in.|link=]] | ||
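The procedure described in this example (regress each predictor on the remaining predictors, then use the resulting coefficient of determination) can be sketched as follows. The sketch assumes the usual definition of the variance inflation factor as the reciprocal of one minus that coefficient of determination, and it runs on hypothetical correlated predictors rather than the data of this chapter.

```python
import numpy as np

def r_squared(X, y):
    """Coefficient of determination for a least squares fit of y on X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return 1.0 - float(resid @ resid) / float(np.sum((y - y.mean()) ** 2))

def variance_inflation_factors(predictors):
    """VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing x_j on the others."""
    n, k = predictors.shape
    vifs = []
    for j in range(k):
        others = np.delete(predictors, j, axis=1)
        X = np.column_stack([np.ones(n), others])   # intercept plus the other predictors
        vifs.append(1.0 / (1.0 - r_squared(X, predictors[:, j])))
    return vifs

# Hypothetical predictors with a strong linear dependence (assumption)
rng = np.random.default_rng(4)
x1 = rng.uniform(40, 50, size=30)
x2 = 0.6 * x1 + rng.normal(scale=0.5, size=30)
vifs = variance_inflation_factors(np.column_stack([x1, x2]))
```

With only two predictors the two factors are equal, because regressing the first on the second gives the same coefficient of determination as regressing the second on the first.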

Latest revision as of 22:22, 9 August 2018

This chapter expands on the analysis of simple linear regression models and discusses the analysis of multiple linear regression models. A major portion of the results displayed in Weibull++ DOE folios are explained in this chapter because these results are associated with multiple linear regression. One of the applications of multiple linear regression models is Response Surface Methodology (RSM). RSM is a method used to locate the optimum value of the response and is one of the final stages of experimentation. It is discussed in Response Surface Methods. Towards the end of this chapter, the concept of using indicator variables in regression models is explained. Indicator variables are used to represent qualitative factors in regression models. The concept of using indicator variables is important to gain an understanding of ANOVA models, which are the models used to analyze data obtained from experiments. These models can be thought of as first order multiple linear regression models where all the factors are treated as qualitative factors. ANOVA models are discussed in the One Factor Designs and General Full Factorial Designs chapters.

Multiple Linear Regression Model

A linear regression model that contains more than one predictor variable is called a multiple linear regression model. The following model is a multiple linear regression model with two predictor variables, [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math].

- [math]\displaystyle{ Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+\epsilon\,\! }[/math]

The model is linear because it is linear in the parameters [math]\displaystyle{ {{\beta }_{0}}\,\! }[/math], [math]\displaystyle{ {{\beta }_{1}}\,\! }[/math] and [math]\displaystyle{ {{\beta }_{2}}\,\! }[/math]. The model describes a plane in the three-dimensional space of [math]\displaystyle{ Y\,\! }[/math], [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math]. The parameter [math]\displaystyle{ {{\beta }_{0}}\,\! }[/math] is the intercept of this plane. Parameters [math]\displaystyle{ {{\beta }_{1}}\,\! }[/math] and [math]\displaystyle{ {{\beta }_{2}}\,\! }[/math] are referred to as partial regression coefficients. Parameter [math]\displaystyle{ {{\beta }_{1}}\,\! }[/math] represents the change in the mean response corresponding to a unit change in [math]\displaystyle{ {{x}_{1}}\,\! }[/math] when [math]\displaystyle{ {{x}_{2}}\,\! }[/math] is held constant. Parameter [math]\displaystyle{ {{\beta }_{2}}\,\! }[/math] represents the change in the mean response corresponding to a unit change in [math]\displaystyle{ {{x}_{2}}\,\! }[/math] when [math]\displaystyle{ {{x}_{1}}\,\! }[/math] is held constant.

Consider the following example of a multiple linear regression model with two predictor variables, [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math] :

- [math]\displaystyle{ Y=30+5{{x}_{1}}+7{{x}_{2}}+\epsilon \,\! }[/math]

This regression model is a first order multiple linear regression model. This is because the maximum power of the variables in the model is 1. (The regression plane corresponding to this model is shown in the figure below.) Also shown is an observed data point and the corresponding random error, [math]\displaystyle{ \epsilon\,\! }[/math]. The true regression model is usually never known (and therefore the values of the random error terms corresponding to observed data points remain unknown). However, the regression model can be estimated by calculating the parameters of the model for an observed data set. This is explained in Estimating Regression Models Using Least Squares.

One of the following figures shows the contour plot for the regression model given by the above equation. The contour plot shows lines of constant mean response values as a function of [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math]. The contour lines for the given regression model are straight lines as seen on the plot. Straight contour lines result for first order regression models with no interaction terms.

A linear regression model may also take the following form:

- [math]\displaystyle{ Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+{{\beta }_{12}}{{x}_{1}}{{x}_{2}}+\epsilon\,\! }[/math]

A cross-product term, [math]\displaystyle{ {{x}_{1}}{{x}_{2}}\,\! }[/math], is included in the model. This term represents an interaction effect between the two variables [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math]. Interaction means that the effect produced by a change in the predictor variable on the response depends on the level of the other predictor variable(s). As an example of a linear regression model with interaction, consider the model given by the equation [math]\displaystyle{ Y=30+5{{x}_{1}}+7{{x}_{2}}+3{{x}_{1}}{{x}_{2}}+\epsilon\,\! }[/math]. The regression plane and contour plot for this model are shown in the following two figures, respectively.
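The meaning of interaction can be illustrated with the example model above. In this short sketch (function names are illustrative), the change in the mean response for a unit change in the first predictor is computed at several levels of the second predictor; the random error term is left out because only the mean response is of interest.

```python
def mean_response(x1, x2):
    """Mean response of the example model Y = 30 + 5*x1 + 7*x2 + 3*x1*x2 + error."""
    return 30 + 5 * x1 + 7 * x2 + 3 * x1 * x2

def effect_of_unit_change_in_x1(x1, x2):
    """Change in the mean response when x1 increases by one and x2 is held constant."""
    return mean_response(x1 + 1, x2) - mean_response(x1, x2)

effect_at_x2_0 = effect_of_unit_change_in_x1(0, 0)   # 5
effect_at_x2_2 = effect_of_unit_change_in_x1(0, 2)   # 5 + 3*2 = 11
```

The effect equals 5 plus 3 times the level of the second predictor, so it depends on that level but not on the starting value of the first predictor. Without the cross-product term it would be 5 everywhere.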

Now consider the regression model shown next:

- [math]\displaystyle{ Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}x_{1}^{2}+{{\beta }_{3}}x_{1}^{3}+\epsilon\,\! }[/math]

This model is also a linear regression model and is referred to as a polynomial regression model. Polynomial regression models contain squared and higher order terms of the predictor variables making the response surface curvilinear. As an example of a polynomial regression model with an interaction term consider the following equation:

- [math]\displaystyle{ Y=500+5{{x}_{1}}+7{{x}_{2}}-3x_{1}^{2}-5x_{2}^{2}+3{{x}_{1}}{{x}_{2}}+\epsilon\,\! }[/math]

This model is a second order model because the maximum power of the terms in the model is two. The regression surface for this model is shown in the following figure. Such regression models are used in RSM to find the optimum value of the response, [math]\displaystyle{ Y\,\! }[/math] (for details see Response Surface Methods for Optimization). Notice that, although the shape of the regression surface is curvilinear, the regression model is still linear because the model is linear in the parameters. The contour plot for this model is shown in the second of the following two figures.

All multiple linear regression models can be expressed in the following general form:

- [math]\displaystyle{ Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+...+{{\beta }_{k}}{{x}_{k}}+\epsilon\,\! }[/math]

where [math]\displaystyle{ k\,\! }[/math] denotes the number of terms in the model. For example, the second order model given above can be written in the general form using [math]\displaystyle{ {{x}_{3}}=x_{1}^{2}\,\! }[/math], [math]\displaystyle{ {{x}_{4}}=x_{2}^{2}\,\! }[/math] and [math]\displaystyle{ {{x}_{5}}={{x}_{1}}{{x}_{2}}\,\! }[/math] as follows:

- [math]\displaystyle{ Y=500+5{{x}_{1}}+7{{x}_{2}}-3{{x}_{3}}-5{{x}_{4}}+3{{x}_{5}}+\epsilon\,\! }[/math]
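The substitution can be checked numerically. The sketch below evaluates the second order model directly and through the general form, taking the third, fourth and fifth terms to be the square of the first predictor, the square of the second predictor and their cross product, so that the terms match the second order model. Function and constant names are illustrative.

```python
def second_order(x1, x2):
    """Mean response of Y = 500 + 5*x1 + 7*x2 - 3*x1^2 - 5*x2^2 + 3*x1*x2 + error."""
    return 500 + 5 * x1 + 7 * x2 - 3 * x1 ** 2 - 5 * x2 ** 2 + 3 * x1 * x2

def expand_terms(x1, x2):
    """Terms x1..x5 of the general form: x3 = x1^2, x4 = x2^2, x5 = x1*x2."""
    return [x1, x2, x1 ** 2, x2 ** 2, x1 * x2]

INTERCEPT = 500
COEFFICIENTS = [5, 7, -3, -5, 3]   # beta_1 .. beta_5

def general_form(x1, x2):
    """Same mean response, written as a first order expression in x1..x5."""
    terms = expand_terms(x1, x2)
    return INTERCEPT + sum(b * x for b, x in zip(COEFFICIENTS, terms))
```

Because the model is linear in the coefficients, the same least squares machinery applies once the extra columns have been computed.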

Estimating Regression Models Using Least Squares

Consider a multiple linear regression model with [math]\displaystyle{ k\,\! }[/math] predictor variables:

- [math]\displaystyle{ Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+...+{{\beta }_{k}}{{x}_{k}}+\epsilon\,\! }[/math]

Let each of the [math]\displaystyle{ k\,\! }[/math] predictor variables, [math]\displaystyle{ {{x}_{1}}\,\! }[/math], [math]\displaystyle{ {{x}_{2}}\,\! }[/math]... [math]\displaystyle{ {{x}_{k}}\,\! }[/math], have [math]\displaystyle{ n\,\! }[/math] levels. Then [math]\displaystyle{ {{x}_{ij}}\,\! }[/math] represents the [math]\displaystyle{ i\,\! }[/math] th level of the [math]\displaystyle{ j\,\! }[/math] th predictor variable [math]\displaystyle{ {{x}_{j}}\,\! }[/math]. For example, [math]\displaystyle{ {{x}_{51}}\,\! }[/math] represents the fifth level of the first predictor variable [math]\displaystyle{ {{x}_{1}}\,\! }[/math], while [math]\displaystyle{ {{x}_{19}}\,\! }[/math] represents the first level of the ninth predictor variable, [math]\displaystyle{ {{x}_{9}}\,\! }[/math]. Observations, [math]\displaystyle{ {{y}_{1}}\,\! }[/math], [math]\displaystyle{ {{y}_{2}}\,\! }[/math]... [math]\displaystyle{ {{y}_{n}}\,\! }[/math], recorded for each of these [math]\displaystyle{ n\,\! }[/math] levels can be expressed in the following way:

- [math]\displaystyle{ \begin{align} {{y}_{1}}= & {{\beta }_{0}}+{{\beta }_{1}}{{x}_{11}}+{{\beta }_{2}}{{x}_{12}}+...+{{\beta }_{k}}{{x}_{1k}}+{{\epsilon }_{1}} \\ {{y}_{2}}= & {{\beta }_{0}}+{{\beta }_{1}}{{x}_{21}}+{{\beta }_{2}}{{x}_{22}}+...+{{\beta }_{k}}{{x}_{2k}}+{{\epsilon }_{2}} \\ & .. \\ {{y}_{i}}= & {{\beta }_{0}}+{{\beta }_{1}}{{x}_{i1}}+{{\beta }_{2}}{{x}_{i2}}+...+{{\beta }_{k}}{{x}_{ik}}+{{\epsilon }_{i}} \\ & .. \\ {{y}_{n}}= & {{\beta }_{0}}+{{\beta }_{1}}{{x}_{n1}}+{{\beta }_{2}}{{x}_{n2}}+...+{{\beta }_{k}}{{x}_{nk}}+{{\epsilon }_{n}} \end{align}\,\! }[/math]

The system of [math]\displaystyle{ n\,\! }[/math] equations shown previously can be represented in matrix notation as follows:

- [math]\displaystyle{ y=X\beta +\epsilon\,\! }[/math]

- where

- [math]\displaystyle{ y=\left[ \begin{matrix} {{y}_{1}} \\ {{y}_{2}} \\ . \\ . \\ . \\ {{y}_{n}} \\ \end{matrix} \right]\text{ }X=\left[ \begin{matrix} 1 & {{x}_{11}} & {{x}_{12}} & . & . & . & {{x}_{1k}} \\ 1 & {{x}_{21}} & {{x}_{22}} & . & . & . & {{x}_{2k}} \\ . & . & . & {} & {} & {} & . \\ . & . & . & {} & {} & {} & . \\ . & . & . & {} & {} & {} & . \\ 1 & {{x}_{n1}} & {{x}_{n2}} & . & . & . & {{x}_{nk}} \\ \end{matrix} \right]\,\! }[/math]

- [math]\displaystyle{ \beta =\left[ \begin{matrix} {{\beta }_{0}} \\ {{\beta }_{1}} \\ . \\ . \\ . \\ {{\beta }_{k}} \\ \end{matrix} \right]\text{ and }\epsilon =\left[ \begin{matrix} {{\epsilon }_{1}} \\ {{\epsilon }_{2}} \\ . \\ . \\ . \\ {{\epsilon }_{n}} \\ \end{matrix} \right]\,\! }[/math]

The matrix [math]\displaystyle{ X\,\! }[/math] is referred to as the design matrix. It contains information about the levels of the predictor variables at which the observations are obtained. The vector [math]\displaystyle{ \beta\,\! }[/math] contains all the regression coefficients. To obtain the regression model, [math]\displaystyle{ \beta\,\! }[/math] must be estimated. This is done by the method of least squares, using the following equation:

- [math]\displaystyle{ \hat{\beta }={{({{X}^{\prime }}X)}^{-1}}{{X}^{\prime }}y\,\! }[/math]

where [math]\displaystyle{ ^{\prime }\,\! }[/math] represents the transpose of the matrix while [math]\displaystyle{ ^{-1}\,\! }[/math] represents the matrix inverse. Knowing the estimates, [math]\displaystyle{ \hat{\beta }\,\! }[/math], the multiple linear regression model can now be estimated as:

- [math]\displaystyle{ \hat{y}=X\hat{\beta }\,\! }[/math]
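The two matrix equations above can be tried out numerically. The following is a minimal sketch, assuming NumPy and a small set of hypothetical toy data (five observations, two predictors) that is not taken from this chapter. It solves the normal equations [math]\displaystyle{ ({{X}^{\prime }}X)\hat{\beta }={{X}^{\prime }}y\,\! }[/math] directly, which gives the same estimates as the inverse formula when [math]\displaystyle{ {{X}^{\prime }}X\,\! }[/math] is nonsingular.

```python
import numpy as np

# Hypothetical toy data (not from the chapter): n = 5 observations, k = 2 predictors.
x = np.array([[1.0, 2.0],
              [2.0, 1.0],
              [3.0, 4.0],
              [4.0, 3.0],
              [5.0, 5.0]])
y = np.array([8.1, 6.9, 15.2, 13.8, 20.1])

# Design matrix: a leading column of ones for the intercept, then the predictors.
X = np.column_stack([np.ones(len(x)), x])

# Least squares estimates from the normal equations (X'X) beta = X'y.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)

# Fitted values from the estimated model.
y_hat = X @ beta_hat

print(beta_hat)
print(y_hat)
```

Solving the linear system instead of forming the inverse explicitly is a common numerical choice; for badly conditioned design matrices a routine such as `np.linalg.lstsq` may be preferable.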

The estimated regression model is also referred to as the fitted model. The observations, [math]\displaystyle{ {{y}_{i}}\,\! }[/math], may be different from the fitted values [math]\displaystyle{ {{\hat{y}}_{i}}\,\! }[/math] obtained from this model. The difference between these two values is the residual, [math]\displaystyle{ {{e}_{i}}\,\! }[/math]. The vector of residuals, [math]\displaystyle{ e\,\! }[/math], is obtained as:

- [math]\displaystyle{ e=y-\hat{y}\,\! }[/math]

The fitted model can also be written as follows, using [math]\displaystyle{ \hat{\beta }={{({{X}^{\prime }}X)}^{-1}}{{X}^{\prime }}y\,\! }[/math]:

- [math]\displaystyle{ \begin{align} \hat{y} &= & X\hat{\beta } \\ & = & X{{({{X}^{\prime }}X)}^{-1}}{{X}^{\prime }}y \\ & = & Hy \end{align}\,\! }[/math]

where [math]\displaystyle{ H=X{{({{X}^{\prime }}X)}^{-1}}{{X}^{\prime }}\,\! }[/math]. The matrix, [math]\displaystyle{ H\,\! }[/math], is referred to as the hat matrix. It transforms the vector of the observed response values, [math]\displaystyle{ y\,\! }[/math], to the vector of fitted values, [math]\displaystyle{ \hat{y}\,\! }[/math].
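As an illustration of the hat matrix, the sketch below (assuming NumPy and hypothetical toy data that is not from this chapter) forms [math]\displaystyle{ H\,\! }[/math] explicitly and checks three standard properties: it is symmetric, it is idempotent, and its trace equals the number of columns of [math]\displaystyle{ X\,\! }[/math].

```python
import numpy as np

# Hypothetical toy design matrix (intercept plus two predictors) and response.
X = np.column_stack([np.ones(5),
                     [1.0, 2.0, 3.0, 4.0, 5.0],
                     [2.0, 1.0, 4.0, 3.0, 5.0]])
y = np.array([8.1, 6.9, 15.2, 13.8, 20.1])

# Hat matrix H = X (X'X)^{-1} X'.
H = X @ np.linalg.inv(X.T @ X) @ X.T

# H maps the observed responses to the fitted values.
y_hat = H @ y
e = y - y_hat  # residual vector

print(np.allclose(H, H.T))    # symmetric
print(np.allclose(H @ H, H))  # idempotent
print(np.trace(H))            # approximately k + 1 = 3
```

Forming [math]\displaystyle{ H\,\! }[/math] explicitly is only practical for small data sets, since it is an [math]\displaystyle{ n\times n\,\! }[/math] matrix.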

Example

An analyst studying a chemical process expects the yield to be affected by the levels of two factors, [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math]. Observations recorded for various levels of the two factors are shown in the following table. The analyst wants to fit a first order regression model to the data. Interaction between [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math] is not expected based on knowledge of similar processes. Units of the factor levels and the yield are ignored for the analysis.

The data of the above table can be entered into the Weibull++ DOE folio using the multiple linear regression folio tool as shown in the following figure.

A scatter plot for the data is shown next.

The first order regression model applicable to this data set having two predictor variables is:

- [math]\displaystyle{ Y={{\beta }_{0}}+{{\beta }_{1}}{{x}_{1}}+{{\beta }_{2}}{{x}_{2}}+\epsilon\,\! }[/math]

where the dependent variable, [math]\displaystyle{ Y\,\! }[/math], represents the yield and the predictor variables, [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and [math]\displaystyle{ {{x}_{2}}\,\! }[/math], represent the two factors respectively. The [math]\displaystyle{ X\,\! }[/math] and [math]\displaystyle{ y\,\! }[/math] matrices for the data can be obtained as:

- [math]\displaystyle{ X=\left[ \begin{matrix} 1 & 41.9 & 29.1 \\ 1 & 43.4 & 29.3 \\ . & . & . \\ . & . & . \\ . & . & . \\ 1 & 77.8 & 32.9 \\ \end{matrix} \right]\text{ }y=\left[ \begin{matrix} 251.3 \\ 251.3 \\ . \\ . \\ . \\ 349.0 \\ \end{matrix} \right]\,\! }[/math]

The least square estimates, [math]\displaystyle{ \hat{\beta }\,\! }[/math], can now be obtained:

- [math]\displaystyle{ \begin{align} \hat{\beta } &= & {{({{X}^{\prime }}X)}^{-1}}{{X}^{\prime }}y \\ & = & {{\left[ \begin{matrix} 17 & 941 & 525.3 \\ 941 & 54270 & 29286 \\ 525.3 & 29286 & 16254 \\ \end{matrix} \right]}^{-1}}\left[ \begin{matrix} 4902.8 \\ 276610 \\ 152020 \\ \end{matrix} \right] \\ & = & \left[ \begin{matrix} -153.51 \\ 1.24 \\ 12.08 \\ \end{matrix} \right] \end{align}\,\! }[/math]

- Thus:

- [math]\displaystyle{ \hat{\beta }=\left[ \begin{matrix} {{{\hat{\beta }}}_{0}} \\ {{{\hat{\beta }}}_{1}} \\ {{{\hat{\beta }}}_{2}} \\ \end{matrix} \right]=\left[ \begin{matrix} -153.51 \\ 1.24 \\ 12.08 \\ \end{matrix} \right]\,\! }[/math]

and the estimated regression coefficients are [math]\displaystyle{ {{\hat{\beta }}_{0}}=-153.51\,\! }[/math], [math]\displaystyle{ {{\hat{\beta }}_{1}}=1.24\,\! }[/math] and [math]\displaystyle{ {{\hat{\beta }}_{2}}=12.08\,\! }[/math]. The fitted regression model is:

- [math]\displaystyle{ \begin{align} \hat{y} & = & {{{\hat{\beta }}}_{0}}+{{{\hat{\beta }}}_{1}}{{x}_{1}}+{{{\hat{\beta }}}_{2}}{{x}_{2}} \\ & = & -153.5+1.24{{x}_{1}}+12.08{{x}_{2}} \end{align}\,\! }[/math]

The fitted regression model can be viewed in the Weibull++ DOE folio, as shown next.

A plot of the fitted regression plane is shown in the following figure.

![Fitted regression plane [math]\displaystyle{ \hat{y}=-153.5+1.24 x_1+12.08 x_2\,\! }[/math] for the data from the table.](/images/6/68/Doe5.10.png)

The fitted regression model can be used to obtain fitted values, [math]\displaystyle{ {{\hat{y}}_{i}}\,\! }[/math], corresponding to an observed response value, [math]\displaystyle{ {{y}_{i}}\,\! }[/math]. For example, the fitted value corresponding to the fifth observation is:

- [math]\displaystyle{ \begin{align} {{{\hat{y}}}_{i}} &= & -153.5+1.24{{x}_{i1}}+12.08{{x}_{i2}} \\ {{{\hat{y}}}_{5}} & = & -153.5+1.24{{x}_{51}}+12.08{{x}_{52}} \\ & = & -153.5+1.24(47.3)+12.08(29.9) \\ & = & 266.3 \end{align}\,\! }[/math]

The observed fifth response value is [math]\displaystyle{ {{y}_{5}}=273.0\,\! }[/math]. The residual corresponding to this value is:

- [math]\displaystyle{ \begin{align} {{e}_{i}} & = & {{y}_{i}}-{{{\hat{y}}}_{i}} \\ {{e}_{5}}& = & {{y}_{5}}-{{{\hat{y}}}_{5}} \\ & = & 273.0-266.3 \\ & = & 6.7 \end{align}\,\! }[/math]
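The arithmetic for the fifth observation can be reproduced with a few lines of Python. This is a minimal sketch that uses the rounded coefficients from the fitted model above; the function name `fitted_value` is hypothetical.

```python
# Rounded coefficients of the fitted model: intercept, x1, x2.
beta_hat = (-153.5, 1.24, 12.08)

def fitted_value(x1, x2, b=beta_hat):
    """Fitted response for given levels of x1 and x2."""
    return b[0] + b[1] * x1 + b[2] * x2

# Fifth observation: x51 = 47.3, x52 = 29.9, observed y5 = 273.0.
y5_hat = fitted_value(47.3, 29.9)
e5 = 273.0 - y5_hat

print(round(y5_hat, 1), round(e5, 1))  # 266.3 6.7
```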

In Weibull++ DOE folios, fitted values and residuals are shown in the Diagnostic Information table of the detailed summary of results. The values are shown in the following figure.

The fitted regression model can also be used to predict response values. For example, to obtain the response value for a new observation corresponding to 47 units of [math]\displaystyle{ {{x}_{1}}\,\! }[/math] and 31 units of [math]\displaystyle{ {{x}_{2}}\,\! }[/math], the value is calculated using:

- [math]\displaystyle{ \begin{align} \hat{y}(47,31)& = & -153.5+1.24(47)+12.08(31) \\ & = & 279.26 \end{align}\,\! }[/math]
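The same prediction can be written in matrix form, which extends naturally to several new points at once. The sketch below assumes NumPy and uses the rounded coefficients from the fitted model; the array names are illustrative only.

```python
import numpy as np

# Rounded coefficients of the fitted model: intercept, x1, x2.
beta_hat = np.array([-153.5, 1.24, 12.08])

# New factor settings, one row per prediction (here only x1 = 47, x2 = 31).
new_points = np.array([[47.0, 31.0]])

# Prepend the column of ones so each row matches a row of the design matrix.
X_new = np.column_stack([np.ones(len(new_points)), new_points])

y_pred = X_new @ beta_hat
print(np.round(y_pred, 2))  # [279.26]
```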

Properties of the Least Square Estimators for Beta

The least square estimates, [math]\displaystyle{ {{\hat{\beta }}_{0}}\,\! }[/math], [math]\displaystyle{ {{\hat{\beta }}_{1}}\,\! }[/math], [math]\displaystyle{ {{\hat{\beta }}_{2}}\,\! }[/math]... [math]\displaystyle{ {{\hat{\beta }}_{k}}\,\! }[/math], are unbiased estimators of [math]\displaystyle{ {{\beta }_{0}}\,\! }[/math], [math]\displaystyle{ {{\beta }_{1}}\,\! }[/math], [math]\displaystyle{ {{\beta }_{2}}\,\! }[/math]... [math]\displaystyle{ {{\beta }_{k}}\,\! }[/math], provided that the random error terms, [math]\displaystyle{ {{\epsilon }_{i}}\,\! }[/math], are normally and independently distributed. The variances of the [math]\displaystyle{ \hat{\beta }\,\! }[/math] s are obtained using the [math]\displaystyle{ {{({{X}^{\prime }}X)}^{-1}}\,\! }[/math] matrix. The variance-covariance matrix of the estimated regression coefficients is obtained as follows:

- [math]\displaystyle{ C={{\hat{\sigma }}^{2}}{{({{X}^{\prime }}X)}^{-1}}\,\! }[/math]

[math]\displaystyle{ C\,\! }[/math] is a symmetric matrix whose diagonal elements, [math]\displaystyle{ {{C}_{jj}}\,\! }[/math], represent the variance of the estimated [math]\displaystyle{ j\,\! }[/math] th regression coefficient, [math]\displaystyle{ {{\hat{\beta }}_{j}}\,\! }[/math]. The off-diagonal elements, [math]\displaystyle{ {{C}_{ij}}\,\! }[/math], represent the covariance between the [math]\displaystyle{ i\,\! }[/math] th and [math]\displaystyle{ j\,\! }[/math] th estimated regression coefficients, [math]\displaystyle{ {{\hat{\beta }}_{i}}\,\! }[/math] and [math]\displaystyle{ {{\hat{\beta }}_{j}}\,\! }[/math]. The value of [math]\displaystyle{ {{\hat{\sigma }}^{2}}\,\! }[/math] is obtained using the error mean square, [math]\displaystyle{ M{{S}_{E}}\,\! }[/math]. The variance-covariance matrix for the data in the table (see Estimating Regression Models Using Least Squares) can be viewed in the DOE folio, as shown next.

Calculations to obtain the matrix are given in this example. The positive square root of [math]\displaystyle{ {{C}_{jj}}\,\! }[/math] represents the estimated standard deviation of the [math]\displaystyle{ j\,\! }[/math] th regression coefficient, [math]\displaystyle{ {{\hat{\beta }}_{j}}\,\! }[/math], and is called the estimated standard error of [math]\displaystyle{ {{\hat{\beta }}_{j}}\,\! }[/math] (abbreviated [math]\displaystyle{ se({{\hat{\beta }}_{j}})\,\! }[/math] ).

- [math]\displaystyle{ se({{\hat{\beta }}_{j}})=\sqrt{{{C}_{jj}}}\,\! }[/math]
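The steps above can be sketched as follows. This is an illustration under stated assumptions: it uses NumPy, hypothetical toy data that is not from this chapter, and it takes [math]\displaystyle{ {{\hat{\sigma }}^{2}}\,\! }[/math] to be the error sum of squares divided by [math]\displaystyle{ n-(k+1)\,\! }[/math].

```python
import numpy as np

# Hypothetical toy design matrix (intercept plus two predictors) and response.
X = np.column_stack([np.ones(5),
                     [1.0, 2.0, 3.0, 4.0, 5.0],
                     [2.0, 1.0, 4.0, 3.0, 5.0]])
y = np.array([8.1, 6.9, 15.2, 13.8, 20.1])

n, p = X.shape  # p = k + 1 coefficients, including the intercept

XtX_inv = np.linalg.inv(X.T @ X)
beta_hat = XtX_inv @ X.T @ y

# Error mean square: residual sum of squares over n - (k + 1).
e = y - X @ beta_hat
ms_e = (e @ e) / (n - p)

# Variance-covariance matrix of the estimated coefficients.
C = ms_e * XtX_inv

# Estimated standard errors: square roots of the diagonal elements of C.
se = np.sqrt(np.diag(C))

print(C)
print(se)
```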

Hypothesis Tests in Multiple Linear Regression

This section discusses hypothesis tests on the regression coefficients in multiple linear regression. As in the case of simple linear regression, these tests can only be carried out if it can be assumed that the random error terms, [math]\displaystyle{ {{\epsilon }_{i}}\,\! }[/math], are normally and independently distributed with a mean of zero and variance of [math]\displaystyle{ {{\sigma }^{2}}\,\! }[/math]. Three types of hypothesis tests can be carried out for multiple linear regression models:

- Test for significance of regression: This test checks the significance of the whole regression model.

- [math]\displaystyle{ t\,\! }[/math] test: This test checks the significance of individual regression coefficients.

- [math]\displaystyle{ F\,\! }[/math] test: This test can be used to simultaneously check the significance of a number of regression coefficients. It can also be used to test individual coefficients.

Test for Significance of Regression

The test for significance of regression in the case of multiple linear regression analysis is carried out using the analysis of variance. The test is used to check if a linear statistical relationship exists between the response variable and at least one of the predictor variables. The statements for the hypotheses are:

- [math]\displaystyle{ \begin{align} & {{H}_{0}}:& {{\beta }_{1}}={{\beta }_{2}}=...={{\beta }_{k}}=0 \\ & {{H}_{1}}:& {{\beta }_{j}}\ne 0\text{ for at least one }j \end{align}\,\! }[/math]

The test for [math]\displaystyle{ {{H}_{0}}\,\! }[/math] is carried out using the following statistic:

- [math]\displaystyle{ {{F}_{0}}=\frac{M{{S}_{R}}}{M{{S}_{E}}}\,\! }[/math]

where [math]\displaystyle{ M{{S}_{R}}\,\! }[/math] is the regression mean square and [math]\displaystyle{ M{{S}_{E}}\,\! }[/math] is the error mean square. If the null hypothesis, [math]\displaystyle{ {{H}_{0}}\,\! }[/math], is true, then the statistic [math]\displaystyle{ {{F}_{0}}\,\! }[/math] follows the [math]\displaystyle{ F\,\! }[/math] distribution with [math]\displaystyle{ k\,\! }[/math] degrees of freedom in the numerator and [math]\displaystyle{ n-(k+1)\,\! }[/math] degrees of freedom in the denominator. The null hypothesis, [math]\displaystyle{ {{H}_{0}}\,\! }[/math], is rejected if the calculated statistic, [math]\displaystyle{ {{F}_{0}}\,\! }[/math], is such that:

- [math]\displaystyle{ {{F}_{0}}\gt {{f}_{\alpha ,k,n-(k+1)}}\,\! }[/math]
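A minimal sketch of this decision rule is given below, assuming NumPy and hypothetical toy data that is not from this chapter. The regression and error sums of squares are computed from their usual definitions, [math]\displaystyle{ \sum{{{({{\hat{y}}_{i}}-\bar{y})}^{2}}}\,\! }[/math] and [math]\displaystyle{ \sum{{{({{y}_{i}}-{{\hat{y}}_{i}})}^{2}}}\,\! }[/math]. The critical value [math]\displaystyle{ {{f}_{0.05,2,2}}=19.0\,\! }[/math] is taken from a standard [math]\displaystyle{ F\,\! }[/math] table and applies only to this toy case with [math]\displaystyle{ k=2\,\! }[/math] and [math]\displaystyle{ n=5\,\! }[/math].

```python
import numpy as np

# Hypothetical toy design matrix (intercept plus two predictors) and response.
X = np.column_stack([np.ones(5),
                     [1.0, 2.0, 3.0, 4.0, 5.0],
                     [2.0, 1.0, 4.0, 3.0, 5.0]])
y = np.array([8.1, 6.9, 15.2, 13.8, 20.1])

n, p = X.shape
k = p - 1  # number of predictor variables

beta_hat = np.linalg.solve(X.T @ X, X.T @ y)
y_hat = X @ beta_hat

# Sums of squares for regression and error.
ss_r = np.sum((y_hat - y.mean()) ** 2)
ss_e = np.sum((y - y_hat) ** 2)

# Mean squares: each sum of squares divided by its degrees of freedom.
ms_r = ss_r / k
ms_e = ss_e / (n - p)

f0 = ms_r / ms_e

# Critical value f_{0.05, 2, 2} from a standard F table (valid for this toy case only).
f_crit = 19.0
reject_h0 = f0 > f_crit

print(f0, reject_h0)
```

For other values of [math]\displaystyle{ \alpha\,\! }[/math], [math]\displaystyle{ k\,\! }[/math] or [math]\displaystyle{ n\,\! }[/math], the critical value would have to be looked up or computed from the [math]\displaystyle{ F\,\! }[/math] distribution with the matching degrees of freedom.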

Calculation of the Statistic [math]\displaystyle{ {{F}_{0}}\,\! }[/math]

To calculate the statistic [math]\displaystyle{ {{F}_{0}}\,\! }[/math], the mean squares [math]\displaystyle{ M{{S}_{R}}\,\! }[/math] and [math]\displaystyle{ M{{S}_{E}}\,\! }[/math] must be known. As explained in Simple Linear Regression Analysis, each mean square is obtained by dividing the corresponding sum of squares by its degrees of freedom. For example, the total mean square, [math]\displaystyle{ M{{S}_{T}}\,\! }[/math], is obtained as follows:

- [math]\displaystyle{ M{{S}_{T}}=\frac{S{{S}_{T}}}{dof(S{{S}_{T}})}\,\! }[/math]